## Chart: LM Trailing Loss vs. Number of Hybrid Full Layers

### Overview

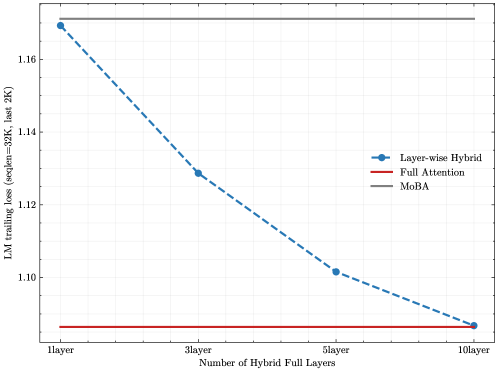

The image is a line chart comparing the language model (LM) trailing loss of three configurations: Layer-wise Hybrid, Full Attention, and MoBA. The x-axis gives the number of hybrid full layers (1, 3, 5, and 10), and the y-axis gives the LM trailing loss (seqlen=32K, last 2K). Only the Layer-wise Hybrid line changes along the x-axis; Full Attention and MoBA are drawn as flat lines.

### Components/Axes

* **X-axis:** Number of Hybrid Full Layers, with labels at 1layer, 3layer, 5layer, and 10layer.

* **Y-axis:** LM trailing loss (seqlen=32K, last 2K), ranging from approximately 1.08 to 1.18.

* **Legend:** Located on the right side of the chart, it identifies the three models:

    * Layer-wise Hybrid (blue dashed line with circular markers)

    * Full Attention (red solid line)

    * MoBA (gray solid line)

### Detailed Analysis

* **Layer-wise Hybrid (blue dashed line):** The LM trailing loss decreases as the number of hybrid full layers increases.

    * 1 layer: approximately 1.17

    * 3 layers: approximately 1.13

    * 5 layers: approximately 1.10

    * 10 layers: approximately 1.085

* **Full Attention (red solid line):** The LM trailing loss remains relatively constant as the number of hybrid full layers increases, staying at approximately 1.085.

* **MoBA (gray solid line):** The LM trailing loss remains constant at approximately 1.175, regardless of the number of hybrid full layers.
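One way to make these readings easier to compare is to express each Layer-wise Hybrid point as the fraction of the MoBA-to-Full-Attention gap it closes. The sketch below is illustrative only: the numbers are the approximate values read off the chart above, and the `gap_closed` helper is a hypothetical name introduced here, not something defined by the source.

```python
# Approximate values read off the chart; these are visual estimates,
# not exact measurements.
hybrid_loss = {1: 1.17, 3: 1.13, 5: 1.10, 10: 1.085}
full_attention_loss = 1.085
moba_loss = 1.175


def gap_closed(loss, worst=moba_loss, best=full_attention_loss):
    """Fraction of the MoBA-to-Full-Attention gap closed by `loss`.

    0.0 means the loss equals the MoBA level, 1.0 means it equals
    the Full Attention level.
    """
    return (worst - loss) / (worst - best)


for layers, loss in hybrid_loss.items():
    print(f"{layers:>2} full layers: loss ~{loss:.3f}, "
          f"gap closed ~{gap_closed(loss):.0%}")
```

With these estimates, the fraction of the gap closed comes out at roughly 6%, 50%, 83%, and 100% for 1, 3, 5, and 10 layers. Because the inputs are read from the chart by eye, the percentages are only as precise as those readings.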

### Key Observations

* The Layer-wise Hybrid model's LM trailing loss falls from approximately 1.17 at 1 layer to approximately 1.085 at 10 layers, with smaller gains per added layer as the count grows.

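* The Full Attention model has the lowest LM trailing loss (approximately 1.085) and remains constant across different numbers of hybrid full layers. The Layer-wise Hybrid model reaches approximately the same value at 10 layers.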

* The MoBA model has the highest LM trailing loss and remains constant across different numbers of hybrid full layers.

### Interpretation

The chart suggests that increasing the number of hybrid full layers in the Layer-wise Hybrid model improves its performance, as indicated by the decreasing LM trailing loss. With 1 layer, its loss (approximately 1.17) is close to MoBA's (approximately 1.175); with 10 layers, it is approximately 1.085, roughly matching Full Attention. Full Attention therefore has a clearly lower loss than the Layer-wise Hybrid model at 1, 3, and 5 layers, but not at 10. MoBA has the highest loss of the three at every point. The Full Attention and MoBA lines are flat, so they most plausibly serve as fixed reference levels rather than as models that were varied along the x-axis, although the chart itself does not state this. The hybrid layer count thus has a significant impact on the Layer-wise Hybrid model, moving it from near the MoBA level to near the Full Attention level.