TECHNICAL ASSET FINGERPRINT

a66a33ee535db3d753f9dba4

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

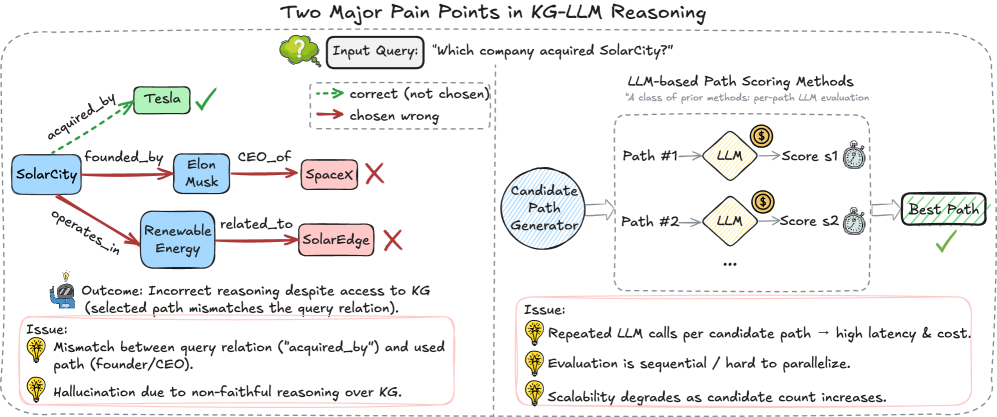

## Diagram: Two Major Pain Points in KG-LLM Reasoning

### Overview

The image is a diagram illustrating two major pain points in reasoning that combines a Knowledge Graph (KG) with a Large Language Model (LLM). The left side shows an LLM answering a question about a knowledge graph incorrectly. The right side outlines LLM-based path scoring and highlights its latency, cost, and scalability issues.

### Components/Axes

* **Title:** Two Major Pain Points in KG-LLM Reasoning

* **Input Query:** "Which company acquired SolarCity?"

* **Legend:**

    * Green dashed arrow: correct (not chosen)

    * Red solid arrow: chosen wrong

* **Knowledge Graph (Left Side):**

    * Nodes: SolarCity, Tesla, Elon Musk, SpaceX, Renewable Energy, SolarEdge

    * Edges: acquired\_by, founded\_by, CEO\_of, operates\_in, related\_to

* **LLM-based Path Scoring Methods (Right Side):**

    * Candidate Path Generator

    * LLM (appears twice)

    * Score s1, Score s2

    * Best Path

* **Outcome:** Incorrect reasoning despite access to KG (selected path mismatches the query relation).

* **Issues (Left Side):**

    * Mismatch between query relation ("acquired\_by") and used path (founder/CEO).

    * Hallucination due to non-faithful reasoning over KG.

* **Issues (Right Side):**

    * Repeated LLM calls per candidate path -> high latency & cost.

    * Evaluation is sequential / hard to parallelize.

    * Scalability degrades as candidate count increases.

### Detailed Analysis

**Knowledge Graph (Left Side):**

* **SolarCity** is connected to **Tesla** via a green dashed arrow labeled "acquired\_by" with a green checkmark, indicating the correct answer.

* **SolarCity** is connected to **Elon Musk** via a red solid arrow labeled "founded\_by".

* **Elon Musk** is connected to **SpaceX** via a red solid arrow labeled "CEO\_of" with a red X, indicating an incorrect path.

* **SolarCity** is connected to **Renewable Energy** via a red solid arrow labeled "operates\_in".

* **Renewable Energy** is connected to **SolarEdge** via a red solid arrow labeled "related\_to" with a red X, indicating an incorrect path.
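The edges listed above can be written down as plain triples, which makes it easy to see that the answer to the input query is a single lookup away. The following is a minimal sketch, assuming a `(head, relation, tail)` tuple layout; the `TRIPLES` list and the `tails` helper are illustrative names, not part of the diagram or of any particular KG library.

```python
# Edges as described for the diagram, stored as (head, relation, tail).
TRIPLES = [
    ("SolarCity", "acquired_by", "Tesla"),
    ("SolarCity", "founded_by", "Elon Musk"),
    ("Elon Musk", "CEO_of", "SpaceX"),
    ("SolarCity", "operates_in", "Renewable Energy"),
    ("Renewable Energy", "related_to", "SolarEdge"),
]


def tails(head, relation, triples=TRIPLES):
    """Return every tail entity linked to `head` by `relation`."""
    return [t for h, r, t in triples if h == head and r == relation]


# "Which company acquired SolarCity?" maps to the acquired_by relation.
print(tails("SolarCity", "acquired_by"))
```

A direct lookup like this only works once the query has been mapped to the right relation, which is exactly the step the diagram shows going wrong.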

**LLM-based Path Scoring Methods (Right Side):**

* The **Candidate Path Generator** outputs two paths: Path #1 and Path #2.

* Each path is processed by an **LLM**.

* The LLM assigns a score to each path: Score s1 for Path #1 and Score s2 for Path #2.

* The path with the highest score is selected as the **Best Path**, indicated by a green checkmark.
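The per-path scoring flow described above can be sketched as a simple loop. This is an illustration under stated assumptions, not the implementation of any specific prior method: `select_best_path` is a hypothetical helper, and `toy_score` is a stand-in for a real LLM call so that the example runs offline.

```python
from typing import Callable, List, Tuple

Path = Tuple[str, ...]


def select_best_path(
    paths: List[Path], score_fn: Callable[[Path], float]
) -> Tuple[Path, int]:
    """Score each candidate one at a time and return (best path, call count)."""
    if not paths:
        raise ValueError("at least one candidate path is required")
    calls = 0
    best_path, best_score = paths[0], float("-inf")
    for path in paths:
        score = score_fn(path)  # one scorer call per candidate path
        calls += 1
        if score > best_score:
            best_path, best_score = path, score
    return best_path, calls


def toy_score(path: Path) -> float:
    """Hypothetical stand-in for an LLM: favors paths using acquired_by."""
    return 1.0 if "acquired_by" in path else 0.0


candidates = [
    ("SolarCity", "founded_by", "Elon Musk", "CEO_of", "SpaceX"),
    ("SolarCity", "acquired_by", "Tesla"),
]
best, calls = select_best_path(candidates, toy_score)
print(best, calls)
```

The call counter makes the cost structure visible: the number of scorer invocations equals the number of candidate paths.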

**Issues (Left Side):**

* The diagram highlights that the LLM's reasoning is incorrect despite having access to the knowledge graph.

* The selected path (founder/CEO) mismatches the query relation (acquired\_by).

* The LLM exhibits hallucination due to non-faithful reasoning over the knowledge graph.

**Issues (Right Side):**

* Repeated LLM calls per candidate path lead to high latency and cost.

* Evaluation is sequential, making it hard to parallelize.

* Scalability degrades as the candidate count increases.

### Key Observations

* The diagram illustrates how an LLM can fail to correctly answer a question about a knowledge graph, even when the correct information is present.

* The LLM's incorrect reasoning is attributed to a mismatch between the query relation and the selected path, as well as hallucination.

* The LLM-based path scoring method suffers from issues related to latency, cost, and scalability.

### Interpretation

The diagram highlights the challenges of using LLMs for reasoning over knowledge graphs. While LLMs have the potential to answer complex questions based on structured knowledge, they can also make mistakes due to incorrect reasoning, hallucination, and scalability issues. The diagram suggests that further research is needed to improve the accuracy and efficiency of LLM-based knowledge graph reasoning methods. The issues on the right side of the diagram suggest that the process is computationally expensive and difficult to scale, which could limit its applicability in real-world scenarios.

EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED

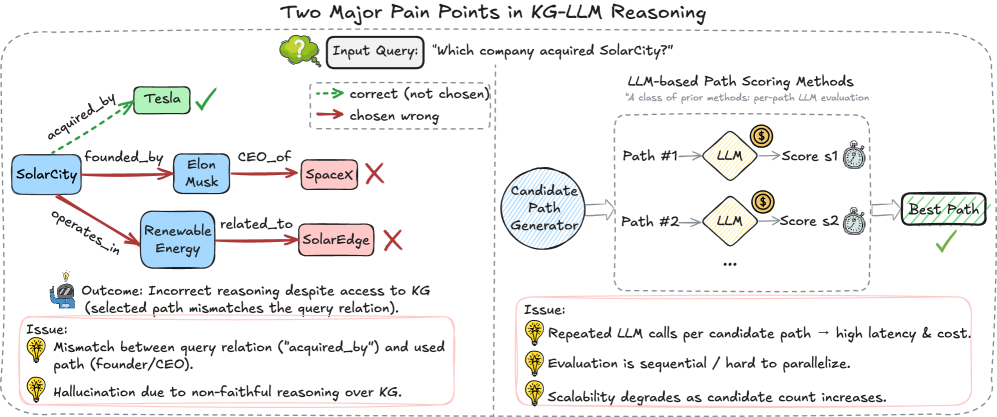

## Diagram: Two Major Pain Points in KG-LLM Reasoning

### Overview

This diagram illustrates two key challenges in Knowledge Graph (KG) and Large Language Model (LLM) reasoning. The diagram is split into two main sections: a left side demonstrating a reasoning failure with a KG, and a right side illustrating the process of LLM-based path scoring. The diagram uses nodes, edges, and visual cues (checkmarks, crosses) to represent relationships and outcomes.

### Components/Axes

The diagram consists of the following components:

* **Nodes:** Represent entities like "SolarCity", "Tesla", "Elon Musk", "SpaceX", "Renewable Energy", "SolarEdge", "LLM", "Candidate Path Generator", "Best Path".

* **Edges:** Represent relationships between entities, labeled with phrases like "acquired_by", "founded_by", "CEO_of", "operates_in", "related_to".

* **Input Query:** "Which company acquired SolarCity?"

* **Outcome:** "Incorrect reasoning despite access to KG (selected path mismatches the query relation)."

* **Issues (Left Side):**

    * "Mismatch between query relation ("acquired_by") and used path (Founder/CEO)."

    * "Hallucination due to non-faithful reasoning over KG."

* **LLM-based Path Scoring Methods:** A description stating "A class of prior methods: per-path LLM evaluation".

* **Issue (Right Side):**

    * "Repeated LLM calls per candidate path – high latency & cost."

    * "Evaluation is sequential / hard to parallelize."

    * "Scalability degrades as candidate count increases."

### Detailed Analysis or Content Details

**Left Side: Reasoning Failure**

* **SolarCity** is connected to **Tesla** via an edge labeled "acquired_by" with a checkmark, indicating the correct path.

* **SolarCity** is also connected to **Elon Musk** via "founded_by".

* **Elon Musk** is connected to **SpaceX** via "CEO_of".

* **SolarCity** is connected to **Renewable Energy** via "operates_in".

* **Renewable Energy** is connected to **SolarEdge** via "related_to" with a cross, indicating an incorrect path.

* The "Outcome" states "Incorrect reasoning despite access to KG (selected path mismatches the query relation)."

* The "Issues" listed are:

    * "Mismatch between query relation ("acquired_by") and used path (Founder/CEO)."

    * "Hallucination due to non-faithful reasoning over KG."

**Right Side: LLM-based Path Scoring**

* A "Candidate Path Generator" produces multiple paths.

* Each "Path" (Path #1, Path #2, etc.) is fed into an "LLM".

* The LLM assigns a "Score" (s1, s2, etc.) to each path.

* The path with the highest score is selected as the "Best Path".

* The issues listed under "Issue" are:

    * "Repeated LLM calls per candidate path – high latency & cost."

    * "Evaluation is sequential / hard to parallelize."

    * "Scalability degrades as candidate count increases."

### Key Observations

* The diagram highlights a discrepancy between the correct relationship ("acquired_by") and the paths the system explores (Founder/CEO, related_to).

* The LLM-based path scoring method, while attempting to evaluate multiple paths, suffers from scalability and efficiency issues due to repeated LLM calls.

* The use of checkmarks and crosses clearly indicates the correctness or incorrectness of different reasoning paths.

* The diagram visually represents the concept of "hallucination" in LLMs, where the model generates incorrect information despite having access to a knowledge graph.

### Interpretation

The diagram demonstrates the challenges of integrating Knowledge Graphs with Large Language Models for reasoning tasks. While KGs provide structured knowledge, LLMs can struggle to correctly utilize this knowledge, leading to inaccurate conclusions. The diagram suggests that the LLM may be prioritizing paths based on superficial similarities or biases, rather than the specific query relation. The right side of the diagram illustrates a common approach to mitigate this issue – scoring multiple paths – but also reveals its limitations in terms of computational cost and scalability. The diagram implies a need for more robust and efficient methods for aligning LLM reasoning with the underlying knowledge graph structure, and for addressing the issue of "hallucination" in LLM-based systems. The diagram is a visual explanation of a technical problem, not a presentation of data.

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Diagram: Two Major Pain Points in KG-LLM Reasoning

### Overview

The image is a technical diagram illustrating two primary failure modes or challenges in systems that combine Knowledge Graphs (KG) with Large Language Models (LLMs) for reasoning tasks. It uses a specific example query to demonstrate the first problem and a process flowchart to illustrate the second.

### Components/Axes

The diagram is divided into two main panels, separated by a vertical dashed line.

**Title:** "Two Major Pain Points in KG-LLM Reasoning" (centered at the top).

**Legend (Top Center):**

* `-->` (Green, dashed arrow): "correct (not chosen)"

* `-->` (Red, solid arrow): "chosen wrong"

**Left Panel - Knowledge Graph Reasoning Example:**

* **Input Query (in a thought bubble):** "Which company acquired SolarCity?"

* **Knowledge Graph Nodes (Blue rounded rectangles):** `SolarCity`, `Renewable Energy`

* **Knowledge Graph Entities (Other colored rounded rectangles):** `Tesla` (Green), `Elon Musk` (Blue), `SpaceX` (Pink), `SolarEdge` (Pink)

* **Relationship Edges (Arrows with labels):**

    * `acquired_by` (Green, dashed arrow from SolarCity to Tesla)

    * `founded_by` (Red, solid arrow from SolarCity to Elon Musk)

    * `CEO_of` (Red, solid arrow from Elon Musk to SpaceX)

    * `operates_in` (Red, solid arrow from SolarCity to Renewable Energy)

    * `related_to` (Red, solid arrow from Renewable Energy to SolarEdge)

* **Outcome Text (Below the graph):** "Outcome: Incorrect reasoning despite access to KG (selected path mismatches the query relation)."

* **Issue Box (Pink background, bottom left):**

    * **Header:** "Issue:"

    * **Bullet 1:** "Mismatch between query relation ("acquired_by") and used path (founder/CEO)."

    * **Bullet 2:** "Hallucination due to non-faithful reasoning over KG."

**Right Panel - Process Flowchart:**

* **Subtitle:** "LLM-based Path Scoring Methods"

* **Annotation:** "*A class of prior methods: per-path LLM evaluation*"

* **Process Flow:**

    1. `Candidate Path Generator` (Circle) --> produces multiple paths.

    2. Paths are shown as `Path #1`, `Path #2`, `...` (Text).

    3. Each path is evaluated by an `LLM` (Diamond shape with a dollar sign `$` icon).

    4. Each evaluation produces a `Score s1`, `Score s2` (Text with a stopwatch icon).

    5. Scores are used to select the `Best Path` (Green rounded rectangle with a checkmark).

* **Issue Box (Pink background, bottom right):**

    * **Header:** "Issue:"

    * **Bullet 1:** "Repeated LLM calls per candidate path → high latency & cost."

    * **Bullet 2:** "Evaluation is sequential / hard to parallelize."

    * **Bullet 3:** "Scalability degrades as candidate count increases."

### Detailed Analysis

**Left Panel Analysis (Trend Verification):**

The visual trend shows a reasoning path that diverges from the correct answer. The correct relationship (`acquired_by`) is present in the graph (green dashed line to `Tesla`) but is not selected. Instead, the system follows a red, solid "chosen wrong" path: `SolarCity` → `founded_by` → `Elon Musk` → `CEO_of` → `SpaceX`. A second, also incorrect, path is shown: `SolarCity` → `operates_in` → `Renewable Energy` → `related_to` → `SolarEdge`. This demonstrates a failure to align the query's intent with the correct graph relation.
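One way to make this distinction concrete is to separate two checks: whether a path's edges exist in the graph at all, and whether the path uses the relation the query asks about. The sketch below is a hedged illustration; `is_grounded` and `uses_query_relation` are hypothetical helper names, and treating "uses the query relation" as a simple membership test is an assumption made for this example.

```python
# Edges as described for the diagram, stored as (head, relation, tail).
KG = {
    ("SolarCity", "acquired_by", "Tesla"),
    ("SolarCity", "founded_by", "Elon Musk"),
    ("Elon Musk", "CEO_of", "SpaceX"),
    ("SolarCity", "operates_in", "Renewable Energy"),
    ("Renewable Energy", "related_to", "SolarEdge"),
}


def is_grounded(path, kg=KG):
    """True if every edge exists in the KG and consecutive edges chain."""
    in_kg = all(edge in kg for edge in path)
    chained = all(a[2] == b[0] for a, b in zip(path, path[1:]))
    return in_kg and chained


def uses_query_relation(path, relation):
    """True if any hop in the path uses the relation the query asks about."""
    return any(r == relation for _, r, _ in path)


chosen = [
    ("SolarCity", "founded_by", "Elon Musk"),
    ("Elon Musk", "CEO_of", "SpaceX"),
]
correct = [("SolarCity", "acquired_by", "Tesla")]

for name, path in [("chosen", chosen), ("correct", correct)]:
    print(name, is_grounded(path), uses_query_relation(path, "acquired_by"))
```

In this toy setup the chosen path passes the first check but fails the second: every edge is real, yet none of them answers the question.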

**Right Panel Analysis (Component Isolation):**

This section isolates the computational bottleneck. The flow is linear and sequential: generate paths, then evaluate each one individually with an LLM call. The dollar sign (`$`) and stopwatch icons explicitly symbolize the cost and latency associated with each evaluation step. The "..." indicates this process repeats for an arbitrary number of candidate paths.

### Key Observations

1. **Spatial Grounding:** The legend is positioned at the top center, applying to both panels. In the left panel, the correct path (green, dashed) is visually distinct but bypassed. The incorrect paths (red, solid) are prominently displayed.

2. **Dual Failure Modes:** The diagram highlights two distinct but related problems: a *semantic* failure (wrong reasoning path chosen) and a *systemic* failure (inefficient evaluation process).

3. **Symbolism:** Icons are used effectively: a lightbulb for "Issue," a dollar sign for cost, a stopwatch for latency, a checkmark for correct/best, and an 'X' for incorrect.

4. **Color Coding:** Consistent use of green for correct/optimal and red for incorrect/problematic reinforces the message.

### Interpretation

This diagram argues that current KG-LLM reasoning systems suffer from a fundamental trade-off between **faithfulness** and **efficiency**.

* **The Left Panel (Faithfulness Problem)** demonstrates that even with a structured knowledge graph, an LLM can hallucinate or follow a plausible but incorrect reasoning chain (`founder/CEO` instead of `acquired_by`). This suggests the model may not be grounding its reasoning sufficiently in the provided graph structure, leading to unfaithful answers.

* **The Right Panel (Efficiency Problem)** shows that the common method of scoring each candidate path with a separate LLM call is inherently unscalable. It creates a direct linear relationship between the number of potential answers (candidate paths) and both cost and response time. This sequential bottleneck makes the approach impractical for complex queries that generate many candidate paths.
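The linear relationship described for the right panel can be illustrated with a back-of-the-envelope model. This is a sketch only: the per-call latency and price below are made-up placeholder values, not measurements, and `sequential_cost` is a hypothetical helper.

```python
def sequential_cost(num_paths, latency_s, price_per_call):
    """Total (latency, price) when each path needs its own sequential call."""
    return num_paths * latency_s, num_paths * price_per_call


# Hypothetical figures: 0.5 s and 0.25 currency units per scorer call.
for n in (2, 10, 100):
    total_latency, total_price = sequential_cost(n, 0.5, 0.25)
    print(n, total_latency, total_price)
```

Doubling the number of candidate paths doubles both totals, which is the scalability issue the diagram calls out.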

**Underlying Message:** The two pain points are interconnected. Solving the faithfulness problem (left) might require generating and evaluating more candidate paths to find the correct one, which in turn exacerbates the efficiency problem (right). Therefore, advances in this field likely require new methods that can both accurately identify the correct reasoning path *and* do so without prohibitive computational cost, perhaps through more efficient scoring mechanisms or parallelizable evaluation.

EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Diagram: Two Major Pain Points in KG-LLM Reasoning

### Overview

The diagram illustrates two critical challenges in knowledge graph (KG)-based large language model (LLM) reasoning. It contrasts a KG structure with an LLM-based path evaluation system, highlighting mismatches in reasoning and scalability issues. The left side shows a KG with entities and relationships, while the right side demonstrates how an LLM evaluates candidate reasoning paths.

### Components/Axes

#### Left Side (Knowledge Graph)

- **Entities**:

    - SolarCity (blue box)

    - Tesla (green box)

    - Elon Musk (blue box)

    - SpaceX (pink box)

    - Renewable Energy (blue box)

    - SolarEdge (pink box)

- **Relationships**:

    - `acquired_by` (dashed green arrow: SolarCity → Tesla)

    - `founded_by` (solid red arrow: SolarCity → Elon Musk)

    - `CEO_of` (solid red arrow: Elon Musk → SpaceX)

    - `operates_in` (solid red arrow: SolarCity → Renewable Energy)

    - `related_to` (solid red arrow: Renewable Energy → SolarEdge)

- **Annotations**:

    - Green checkmark next to Tesla (correct answer)

    - Red X next to SpaceX (incorrectly chosen by LLM)

    - Text box: "Outcome: Incorrect reasoning despite access to KG (selected path mismatches the query relation)."

#### Right Side (LLM-Based Path Scoring)

- **Components**:

    - **Candidate Path Generator**: Circular node generating multiple paths.

    - **LLM Evaluation**: Diamond-shaped nodes labeled "LLM" with associated scores (s1, s2, ...).

    - **Best Path Selection**: Final node with green checkmark.

- **Annotations**:

    - Text box: "Issue: Repeated LLM calls per candidate path → high latency & cost."

    - Text box: "Issue: Evaluation is sequential / hard to parallelize."

    - Text box: "Issue: Scalability degrades as candidate count increases."

### Detailed Analysis

#### Left Side (KG Structure)

1. **Correct Path**:

    - SolarCity was acquired by Tesla (dashed green arrow).

    - Confirmed by green checkmark.

2. **Incorrect Path**:

    - LLM selected SpaceX via the `CEO_of` relationship (Elon Musk → SpaceX).

    - Red X indicates error.

3. **Mismatched Relationships**:

    - Query asks for "acquired_by," but LLM used "founded_by" and "CEO_of" paths.

    - Hallucination due to non-faithful reasoning over KG.

#### Right Side (Path Scoring)

1. **Path Evaluation**:

    - Multiple candidate paths (e.g., Path #1, Path #2) are scored by the LLM.

    - Scores (s1, s2) are assigned, but the process is sequential.

2. **Scalability Issues**:

    - Repeated LLM calls increase latency and cost.

    - Evaluation is sequential and hard to parallelize, limiting efficiency.

    - Scalability degrades as the number of candidate paths grows.

### Key Observations

1. **Incorrect Reasoning**: Despite access to the KG, the LLM failed to identify Tesla as the acquirer of SolarCity, instead selecting SpaceX via unrelated relationships.

2. **Path Evaluation Inefficiencies**: The sequential LLM evaluation process introduces high computational overhead and poor scalability.

3. **Hallucination**: The LLM generated a reasoning path (Elon Musk → SpaceX) that does not align with the query's required relationship ("acquired_by").

### Interpretation

The diagram highlights two systemic issues in KG-LLM integration:

1. **KG-LLM Mismatch**: The LLM's reasoning diverges from the KG's factual structure, leading to hallucinations. This suggests a need for tighter alignment between query intent and KG relationship semantics.

2. **Path Scoring Bottlenecks**: The sequential LLM evaluation creates scalability challenges, making the system impractical for large-scale applications. Parallelization or heuristic-based pruning of candidate paths could mitigate this.

3. **Trust in KG Data**: Because the "acquired_by" edge is present in the KG shown, the failure to use it points less to insufficient KG curation than to inadequate LLM prompting to constrain reasoning to valid KG paths.

The diagram underscores the need for hybrid systems that combine KG constraints with LLM flexibility while addressing computational inefficiencies in path evaluation.
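The two mitigations mentioned in item 2 of the list above, pruning and parallelization, can be sketched as follows. This is a minimal illustration under assumptions: paths are tuples that alternate entity and relation names, `toy_score` is a hypothetical stand-in for an LLM call, and whether real LLM calls can be issued concurrently depends on the serving setup, which the diagram itself flags as difficult.

```python
from concurrent.futures import ThreadPoolExecutor


def prune_by_relation(paths, relation):
    """Keep only paths that use `relation`; relations sit at odd indices."""
    return [p for p in paths if relation in p[1::2]]


def score_in_parallel(paths, score_fn, max_workers=4):
    """Score paths concurrently; results keep the input order."""
    if not paths:
        return []
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        return list(pool.map(score_fn, paths))


def toy_score(path):
    """Hypothetical stand-in for an LLM call: shorter paths score higher."""
    return 1.0 / len(path)


candidates = [
    ("SolarCity", "acquired_by", "Tesla"),
    ("SolarCity", "founded_by", "Elon Musk", "CEO_of", "SpaceX"),
    ("SolarCity", "operates_in", "Renewable Energy", "related_to", "SolarEdge"),
]

kept = prune_by_relation(candidates, "acquired_by")
print(kept)
print(score_in_parallel(kept, toy_score))
```

Pruning reduces how many scorer calls are needed at all, while the thread pool only overlaps the calls that remain, so the two ideas are complementary rather than interchangeable.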
