## Diagram: Conceptual Model of Language Processing

### Overview

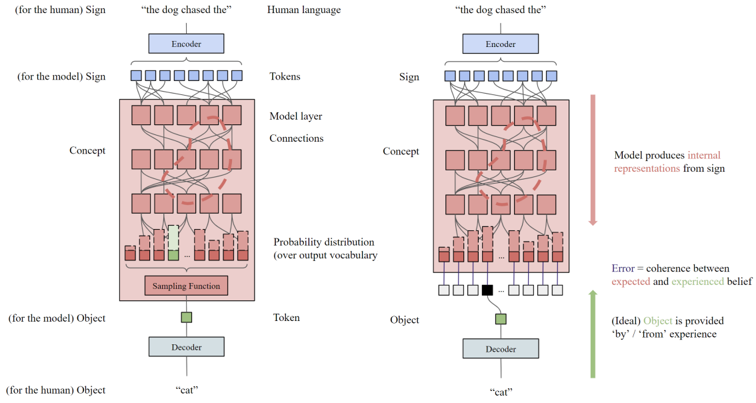

The image is a diagram of a conceptual model of language processing. It compares how a human and a model (likely a neural network) process language and arrive at an object representation. Two parallel pipelines, one for the human and one for the model, pass through stages labeled "Sign," "Concept," and "Object." The diagram highlights how the two differ in handling error and in where the object representation comes from.

### Components/Axes

The diagram consists of the following components:

* **Human Pipeline (Left):**

    * **Sign:** Input text "the dog chased the".

    * **Concept:** A series of interconnected boxes representing conceptual layers.

    * **Object:** Output "cat".

* **Model Pipeline (Right):**

    * **Sign:** Input text "the dog chased the".

    * **Concept:** A series of interconnected boxes representing conceptual layers.

    * **Object:** Output "cat".

    * **Encoder:** Converts human language into tokens.

    * **Decoder:** Converts tokens into an object.

    * **Sampling Function:** A red box within the model's concept layer.

    * **Probability Distribution (over output vocabulary):** A row of boxes below the sampling function, with one box highlighted in green.

* **Connections:** Lines connecting the layers, representing information flow.

* **Error:** Defined as the coherence between expected and experienced belief.

* **Labels:** "Human language", "Tokens", "Model layer Connections", "Probability distribution (over output vocabulary)", "Sampling Function", "Token", "Object".

* **Annotations:** "Model produces internal representations from sign", "Ideally Object is provided by / from experience".

### Detailed Analysis or Content Details

The diagram shows a parallel structure for human and model language processing.

**Human Pipeline:**

* The input "the dog chased the" is processed through conceptual layers, ultimately resulting in the object "cat". The process is depicted as a direct flow from sign to object.

**Model Pipeline:**

* The input "the dog chased the" is processed through conceptual layers.

* The "Sampling Function" (red box) selects a token from the "Probability distribution (over output vocabulary)". The highlighted green box in the probability distribution suggests a higher probability for a specific token.

* The selected token is then decoded into the object "cat".

* The diagram indicates that the model *produces* internal representations from the sign, while the human's object is ideally *provided by* experience.

* The error is defined as the coherence between expected and experienced belief. A black square in the probability distribution on the right side of the diagram indicates an error.
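
The model pipeline described above (encode, score, sample, decode) can be sketched in a few lines of Python. This is a minimal illustration rather than the diagram's actual model: the toy vocabulary, the fixed scores returned by `toy_scores`, and every function name are assumptions made for the example.

```python
import math
import random

# Hypothetical toy vocabulary; the diagram does not list the real one.
VOCAB = ["the", "dog", "chased", "cat", "ball"]


def encode(text):
    """Encoder: map human-language words to token ids."""
    return [VOCAB.index(word) for word in text.split()]


def decode(token_id):
    """Decoder: map a token id back to a word (the 'object')."""
    return VOCAB[token_id]


def softmax(scores):
    """Turn raw scores into a probability distribution over the vocabulary."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def toy_scores(token_ids):
    """Stand-in for the model layers.

    Assumption: returns fixed, made-up scores that favour "cat".
    A real model would compute them from token_ids.
    """
    return [0.1, 0.5, 0.2, 3.0, 1.0]


def sample(probs, rng):
    """Sampling function: draw one token id according to probs."""
    return rng.choices(range(len(probs)), weights=probs, k=1)[0]


tokens = encode("the dog chased the")
probs = softmax(toy_scores(tokens))
rng = random.Random(0)  # seeded so the draw is repeatable
print(decode(sample(probs, rng)))
```

Because the final step is a random draw, the printed word is usually but not always the highest-probability token.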

**Connections:**

* The connections between the "Sign" and "Concept" layers are represented by numerous lines, indicating a complex network of interactions.

* The connections between the "Concept" and "Sampling Function" layers are also numerous.

* The connection between the "Sampling Function" and the "Decoder" is a single line.

### Key Observations

* The model's process involves a probabilistic sampling step, which introduces uncertainty and potential for error.

* The human process is depicted as more direct and less probabilistic.

* The diagram emphasizes the difference in how the object representation is obtained – generated by the model versus experienced by the human.

* The error is explicitly defined and visually represented in the model pipeline.
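
To make the first observation concrete, the sketch below contrasts always picking the most probable token with drawing tokens at random from the distribution. The probabilities and function names are hypothetical and chosen only for illustration; they are not taken from the diagram.

```python
import random
from collections import Counter

# Hypothetical distribution over a toy output vocabulary.
probs = {"cat": 0.7, "ball": 0.2, "dog": 0.1}


def greedy(dist):
    """Deterministic choice: always the most probable token."""
    return max(dist, key=dist.get)


def sample(dist, rng):
    """Stochastic choice: draw one token in proportion to its probability."""
    words = list(dist)
    return rng.choices(words, weights=[dist[w] for w in words], k=1)[0]


rng = random.Random(42)  # seeded so the run is repeatable
draws = Counter(sample(probs, rng) for _ in range(1000))

print("greedy:", greedy(probs))
print("sampled counts:", dict(draws))
```

The greedy choice never changes, while the sampled counts spread across the vocabulary, which is the sense in which the sampling step can yield a token other than the most likely one.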

### Interpretation

The diagram illustrates a simplified model of how humans and machines process language to arrive at an understanding of the world. It highlights the key difference between the two: humans ground their understanding in experience, while models generate internal representations based on learned probabilities. The "Sampling Function" and "Probability Distribution" emphasize the stochastic nature of model-based language processing and its potential for error.

The diagram suggests that the model's understanding is not directly tied to real-world experience, which could lead to discrepancies between its internal representations and reality. The definition of "Error" as coherence between expected and experienced belief suggests that the model's performance is evaluated by how well its internal beliefs align with external observations.

The diagram is a conceptual illustration and provides no numerical data or quantitative measurements. It serves as a visual aid for understanding the theoretical differences between human and machine language processing.