## Diagram: Neural Model Processing Pipeline

### Overview

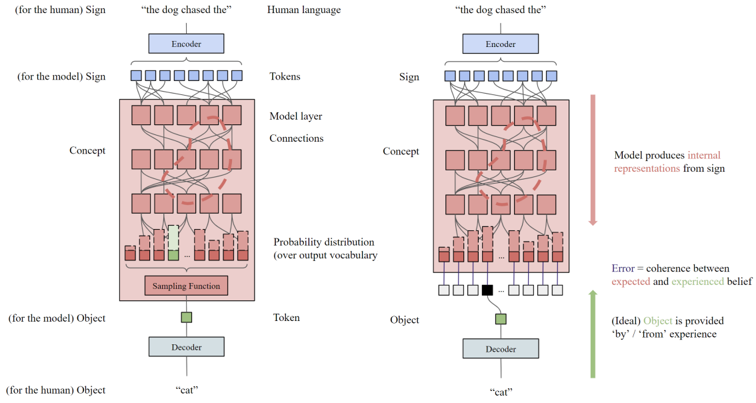

The diagram illustrates a neural model's processing pipeline for natural language understanding, comparing human cognition (left) with model behavior (right). It shows how an input sign ("the dog chased the") is encoded into tokens, processed through a model layer, and decoded into an object ("cat"). Key components include tokenization, concept mapping, a probability distribution over the vocabulary, and an error measure that compares expected and experienced beliefs.

### Components/Axes

- **Left Side (Human Cognition)**:

  - **Encoder**: Converts the human sign ("the dog chased the") into tokens.

  - **Model Layer**: Contains interconnected "Concept" nodes (red squares) joined by "Connections" (red lines).

  - **Probability Distribution**: A distribution over the vocabulary (red bars).

  - **Sampling Function**: Selects a token (green square).

  - **Decoder**: Maps the selected token to an object ("cat").

- **Right Side (Model Behavior)**:

  - Same structure as the left side, with two additions:

    - **Error Measurement**: A red arrow labeled "Error = coherence between expected and experienced belief."

    - **Internal Representations**: A red arrow showing the model producing internal representations from the sign.

- **Legend**:

  - **Red**: Human cognition elements.

  - **Blue**: Model tokens.

  - **Green**: Object ("cat").
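The Encoder and Decoder listed above amount to a lookup between words and integer token ids. The following is a minimal sketch, assuming a hypothetical six-word vocabulary and plain whitespace tokenization; the diagram specifies neither, and production models typically use learned subword tokenizers instead.

```python
# Hypothetical toy vocabulary; the diagram does not specify one.
VOCAB = ["<unk>", "the", "dog", "chased", "cat", "ball"]
TOKEN_TO_ID = {tok: i for i, tok in enumerate(VOCAB)}


def encode(sign: str) -> list[int]:
    """Encoder: split a sign on whitespace and map each word to a token id.

    Words missing from the vocabulary map to the <unk> id.
    """
    return [TOKEN_TO_ID.get(word, TOKEN_TO_ID["<unk>"]) for word in sign.lower().split()]


def decode(token_id: int) -> str:
    """Decoder: map a selected token id back to a word (the 'object')."""
    return VOCAB[token_id]


if __name__ == "__main__":
    print(encode("the dog chased the"))  # [1, 2, 3, 1]
    print(decode(4))                     # cat
```

Note that the repeated word "the" maps to the same id both times: the encoder itself carries no context, which is left to the Model Layer.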

### Detailed Analysis

1. **Input Flow**:

   - Both sides start with the same sign ("the dog chased the"), which is encoded into tokens (blue squares).

   - The tokens feed into the Model Layer, which maps them to abstract "Concepts" (red squares) through dense connections.

2. **Probability Distribution**:

   - The Model Layer outputs a probability distribution over the vocabulary (red bars) that represents the likely next tokens.

3. **Sampling & Decoding**:

   - A sampling function (green square) selects a token, which the Decoder maps to an object ("cat").

4. **Error Measurement**:

   - A red arrow highlights the discrepancy between the model's internal representations and the human-provided object ("cat"), quantified as "Error."
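Steps 2 and 3 above can be sketched as a softmax over the Model Layer's raw scores followed by a sampling draw. This is an illustration of the general technique, not the diagram's model: the vocabulary and the `logits` values are hypothetical placeholders for what a real Model Layer would compute from "the dog chased the".

```python
import math
import random

# Hypothetical toy vocabulary (not specified by the diagram).
VOCAB = ["<unk>", "the", "dog", "chased", "cat", "ball"]


def softmax(logits: list[float]) -> list[float]:
    """Turn raw Model Layer scores into a probability distribution over VOCAB."""
    m = max(logits)  # subtract the max so math.exp cannot overflow
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def greedy(probs: list[float]) -> int:
    """Deterministic alternative: pick the single most probable token id."""
    return max(range(len(probs)), key=lambda i: probs[i])


def sample(probs: list[float], rng: random.Random) -> int:
    """Sampling function: draw one token id with probability probs[id]."""
    return rng.choices(range(len(probs)), weights=probs, k=1)[0]


# Hypothetical scores standing in for the Model Layer's output after
# "the dog chased the"; a real model would compute these from the tokens.
logits = [-2.0, -1.0, 0.5, -1.5, 3.0, 1.0]
probs = softmax(logits)

print(VOCAB[greedy(probs)])                    # cat
print(VOCAB[sample(probs, random.Random(0))])  # a random draw over VOCAB
```

With these scores, "cat" receives about 80% of the probability mass, so greedy decoding always returns "cat" while sampling returns it most of the time but can pick another token. That gap is one way the model's output can diverge from the expected object.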

### Key Observations

- **Symmetry**: Both sides share the same architecture but differ in error measurement.

- **Conceptual Abstraction**: The Model Layer acts as a black box, transforming tokens into high-level concepts.

- **Error Source**: The error arises from mismatches between the model's internal state and the ground-truth object.
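The diagram labels the error as "coherence between expected and experienced belief" but does not say how it is computed. One common choice, assumed here rather than taken from the diagram, is cross-entropy: the negative log-probability the model assigned to the observed object. The probability vectors below are hypothetical.

```python
import math

VOCAB = ["<unk>", "the", "dog", "chased", "cat", "ball"]  # hypothetical vocabulary
CAT = VOCAB.index("cat")


def cross_entropy(probs: list[float], target_id: int) -> float:
    """Error for one prediction: -log of the probability given to the target.

    Against a one-hot "experienced" outcome this equals the KL divergence
    KL(observed || predicted), so it is zero only when probs[target_id] == 1.
    """
    return -math.log(probs[target_id])


# Hypothetical "expected" beliefs over VOCAB for the next token.
confident = [0.05, 0.05, 0.05, 0.05, 0.70, 0.10]  # mass concentrated on "cat"
uniform = [1 / len(VOCAB)] * len(VOCAB)           # no preference at all

print(round(cross_entropy(confident, CAT), 3))  # 0.357
print(round(cross_entropy(uniform, CAT), 3))    # 1.792
```

The more probability the expected belief places on the experienced object, the smaller the error. A prediction that gives the target zero probability would make the error infinite (here `math.log` raises `ValueError`), which is why implementations usually clip probabilities or work directly with log-probabilities.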

### Interpretation

This diagram emphasizes the gap between human language processing and model behavior. The error term suggests that a model's internal representations may not align with human expectations, even when the correct object is provided. The dense connections in the Model Layer imply complex feature interactions, and the opacity of how concepts form highlights the challenge of interpretability. Presenting "cat" as the target object (the ideal outcome) underscores the importance of grounding models in experiential data to reduce error.