## Diagram: Comparison of Retrieval-Augmented Generation (RAG) vs. Iterative RAG for LLMs

### Overview

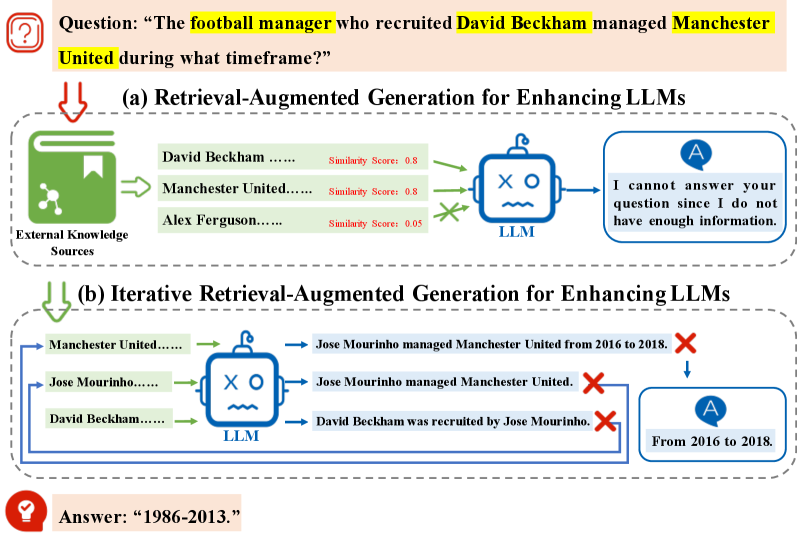

The image is a technical diagram illustrating two methods for enhancing Large Language Models (LLMs) with external knowledge to answer complex questions. It uses a specific example question about football to demonstrate the processes and outcomes of standard Retrieval-Augmented Generation (RAG) versus Iterative RAG. The diagram is divided into two main panels, labeled (a) and (b), with a question at the top and the final correct answer at the bottom.

### Components/Axes

The diagram is structured as a flowchart with the following key components:

1. **Question Box (Top):** Contains the query: "The football manager who recruited David Beckham managed Manchester United during what timeframe?"

2. **Panel (a): Retrieval-Augmented Generation for Enhancing LLMs**

* **External Knowledge Sources:** Represented by a green book icon. It lists three retrieved text snippets with associated similarity scores.

* **LLM Icon:** A blue robot face representing the language model.

* **Output Box:** The model's generated response.

3. **Panel (b): Iterative Retrieval-Augmented Generation for Enhancing LLMs**

* **Iterative Retrieval Loop:** Shows multiple retrieval steps feeding into the LLM.

* **LLM Icon:** Same as in panel (a).

* **Intermediate Outputs:** Three generated statements, two marked with red "X" symbols.

* **Final Output Box:** The model's final answer after iteration.

4. **Answer Box (Bottom):** Displays the correct, verified answer: "1986-2013."

### Detailed Analysis

**Panel (a) - Standard RAG Process:**

* **Input:** The question is processed to retrieve relevant information from external sources.

* **Retrieved Data (from left to right):**

* "David Beckham ......" (Similarity Score: 0.8)

* "Manchester United......" (Similarity Score: 0.8)

* "Alex Ferguson......" (Similarity Score: 0.05)

* **Process:** The LLM receives these three snippets. The low score for "Alex Ferguson" is visually indicated by a green "X" over the arrow, suggesting it was not effectively utilized or was deemed irrelevant by the system.

* **Output:** The LLM states: "I cannot answer your question since I do not have enough information."

**Panel (b) - Iterative RAG Process:**

* **Step 1 Retrieval:** The process starts by retrieving "Manchester United......".

* **LLM Generation 1:** Produces the statement: "Jose Mourinho managed Manchester United from 2016 to 2018." This is marked with a red "X", indicating it is an incorrect or incomplete path for answering the original question.

* **Step 2 Retrieval:** Based on the first output, the system retrieves "Jose Mourinho......".

* **LLM Generation 2:** Produces: "Jose Mourinho managed Manchester United." Also marked with a red "X".

* **Step 3 Retrieval:** The system then retrieves "David Beckham......".

* **LLM Generation 3:** Produces: "David Beckham was recruited by Jose Mourinho." Marked with a red "X".

* **Final Output:** After these iterations, the system outputs: "From 2016 to 2018." This answer is derived from the first generated statement but is incorrect for the original question.

**Final Answer:**

* The correct answer, displayed separately at the bottom, is: "1986-2013."

### Key Observations

1. **Process Contrast:** Panel (a) shows a single retrieval step leading to failure. Panel (b) shows a multi-step, iterative process where each retrieval is informed by the previous LLM output.

2. **Error Propagation in Iteration:** The iterative process in (b) follows a logical but incorrect chain (Manchester United -> Jose Mourinho -> David Beckham), leading to a confident but wrong answer ("2016 to 2018").

3. **Similarity Score Discrepancy:** In panel (a), "Alex Ferguson" has a very low similarity score (0.05) compared to the other two terms (0.8). This is the critical missing piece of information needed to answer the question correctly (Alex Ferguson was the manager who recruited Beckham and had the 1986-2013 tenure).

4. **Visual Cues:** Red "X" marks are used to denote incorrect reasoning paths or outputs within the iterative process. Green arrows and icons denote the flow of information.

### Interpretation

This diagram serves as a pedagogical tool to explain the limitations of basic RAG and the potential pitfalls of iterative RAG.

* **What it demonstrates:** It shows that standard RAG can fail if the initial retrieval misses a key, low-similarity piece of information (Alex Ferguson). Iterative RAG attempts to refine answers by chaining retrievals, but this can lead the model down a plausible but incorrect reasoning path if the initial steps are misguided.

* **Relationship between elements:** The question is the driver. The external knowledge is the raw material. The LLM is the reasoning engine. The similarity scores guide the initial retrieval. The iterative loop shows how the model's own outputs can become inputs for the next retrieval step, creating a feedback loop.

* **Notable anomaly:** The core insight is the **similarity score paradox**. The most relevant fact for answering the question ("Alex Ferguson") has the lowest retrieval score. This highlights a fundamental challenge in RAG systems: semantic similarity search does not always equate to factual relevance for a complex, multi-hop question. The correct answer requires connecting three facts (Beckham, Manchester United, Ferguson) and a timeframe, which neither RAG approach in the diagram successfully achieves automatically. The correct answer is provided externally, underscoring the system's failure.