TECHNICAL ASSET FINGERPRINT

a6acc620098965c7e49989cc

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

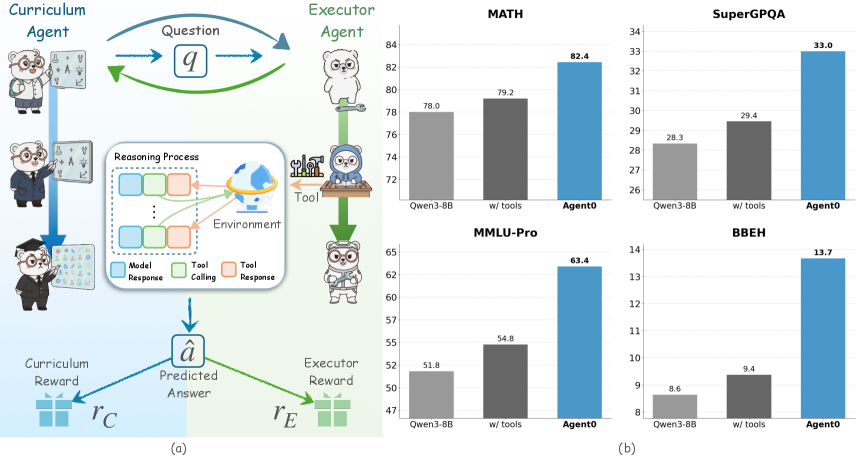

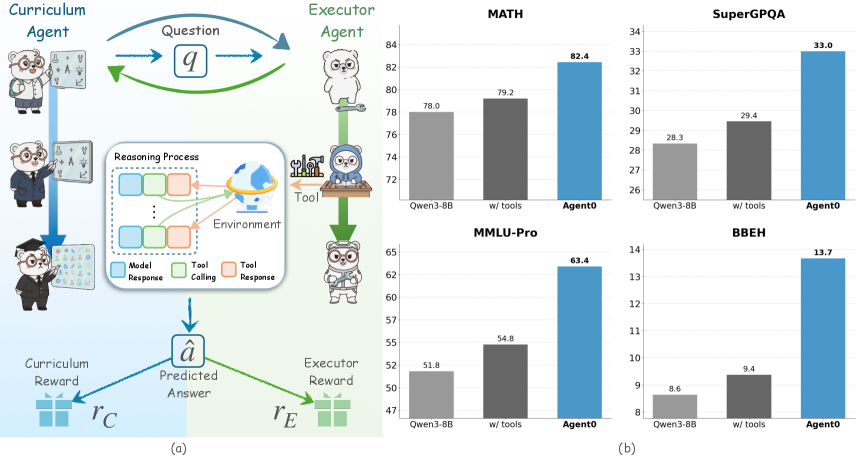

## Workflow Diagram and Bar Charts: Agent Performance

### Overview

The image presents a workflow diagram illustrating the interaction between a Curriculum Agent and an Executor Agent, alongside bar charts comparing the performance of different models (Qwen3-8B, Qwen3-8B with tools, and Agent0) on various benchmarks (MATH, SuperGPQA, MMLU-Pro, and BBEH).

### Components/Axes

**Part (a): Workflow Diagram**

* **Title:** Curriculum Agent vs. Executor Agent

* **Agents:**

* Curriculum Agent: Represented by a bear character in three stages: student, intern, and graduate.

* Executor Agent: Represented by a bear character in three stages: standing, using tools, and wearing a hard hat.

* **Flow:**

* The Curriculum Agent poses a "Question" (q) to the Executor Agent.

* The Executor Agent engages in a "Reasoning Process" within an "Environment," potentially using "Tool" (tool calling and tool response).

* The Executor Agent produces a "Predicted Answer" (â).

* Both agents receive rewards: Curriculum Reward (rC) and Executor Reward (rE).

* **Reasoning Process Legend:**

* Model Response (blue)

* Tool Calling (green)

* Tool Response (orange)

**Part (b): Bar Charts**

* **General Layout:** Four bar charts arranged in a 2x2 grid. Each chart compares the performance of three models: Qwen3-8B, Qwen3-8B with tools, and Agent0.

* **X-axis:** Model type (Qwen3-8B, w/ tools, Agent0)

* **Y-axis:** Performance score (varies by benchmark)

* **Bar Colors:**

* Qwen3-8B: Light gray

* w/ tools: Dark gray

* Agent0: Blue

### Detailed Analysis

**MATH Benchmark**

* **Y-axis:** Scale from 72 to 84

* **Qwen3-8B:** 78.0

* **w/ tools:** 79.2

* **Agent0:** 82.4

* **Trend:** Performance increases from Qwen3-8B to w/ tools to Agent0.

**SuperGPQA Benchmark**

* **Y-axis:** Scale from 26 to 34

* **Qwen3-8B:** 28.3

* **w/ tools:** 29.4

* **Agent0:** 33.0

* **Trend:** Performance increases from Qwen3-8B to w/ tools to Agent0.

**MMLU-Pro Benchmark**

* **Y-axis:** Scale from 47 to 65

* **Qwen3-8B:** 51.8

* **w/ tools:** 54.8

* **Agent0:** 63.4

* **Trend:** Performance increases from Qwen3-8B to w/ tools to Agent0.

**BBEH Benchmark**

* **Y-axis:** Scale from 8 to 14

* **Qwen3-8B:** 8.6

* **w/ tools:** 9.4

* **Agent0:** 13.7

* **Trend:** Performance increases from Qwen3-8B to w/ tools to Agent0.

### Key Observations

* In all four benchmarks, Agent0 consistently outperforms Qwen3-8B and Qwen3-8B with tools.

* Using tools improves the performance of Qwen3-8B on all four benchmarks (by 0.8 to 3.0 points), but the improvement is smaller than Agent0's gain over the base model (4.4 to 11.6 points).

* The workflow diagram illustrates a reinforcement learning setup where agents learn through interaction and feedback.
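The data flow in part (a) can be sketched in a few lines of Python. This is only an illustration of the loop the diagram shows (question, predicted answer, two rewards); the figure does not define how either reward is computed, so `propose`, `solve`, `reward_c`, and `reward_e` are hypothetical callables supplied by the caller, and the toy stand-ins below are assumptions made for the example.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Episode:
    question: str             # q, posed by the Curriculum Agent
    predicted_answer: str     # â, produced by the Executor Agent
    curriculum_reward: float  # r_C
    executor_reward: float    # r_E


def run_episode(
    propose: Callable[[], str],             # hypothetical Curriculum Agent
    solve: Callable[[str], str],            # hypothetical Executor Agent
    reward_c: Callable[[str, str], float],  # placeholder for r_C
    reward_e: Callable[[str, str], float],  # placeholder for r_E
) -> Episode:
    """One pass through the loop: q -> â -> (r_C, r_E)."""
    q = propose()
    a_hat = solve(q)
    return Episode(q, a_hat, reward_c(q, a_hat), reward_e(q, a_hat))


# Toy stand-ins, not the rewards used by the figure's authors.
episode = run_episode(
    propose=lambda: "2 + 3",
    solve=lambda q: str(sum(int(term) for term in q.split("+"))),
    reward_c=lambda q, a: 0.5,                       # arbitrary constant
    reward_e=lambda q, a: 1.0 if a == "5" else 0.0,  # exact-match check
)
print(episode)
```

In a training setup, each reward would presumably update its own agent; that update step is outside what the figure shows and is omitted here.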

### Interpretation

The data suggests that Agent0 is a more effective model for the given benchmarks compared to Qwen3-8B, even when Qwen3-8B is augmented with external tools. The workflow diagram provides context for how these models might be trained and evaluated within a reinforcement learning framework. The consistent outperformance of Agent0 across different benchmarks indicates its robustness and potential for generalization. The use of tools improves Qwen3-8B's performance, suggesting that tool integration is a valuable strategy for enhancing model capabilities.

EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED

## Bar Charts: Agent Performance on Benchmarks

### Overview

The image presents a diagram illustrating a curriculum learning framework with two agents (Curriculum Agent and Executor Agent) and four bar charts comparing the performance of different models (Qwen3-8B, w/ tools, and Agent0) on four benchmarks: MATH, SuperGPQA, MMLU-Pro, and BBEH. The diagram on the left (a) shows the interaction between the agents, the reasoning process, and the reward mechanisms. The bar charts on the right (b) display the performance scores.

### Components/Axes

The diagram (a) includes components labeled: "Curriculum Agent", "Executor Agent", "Question" (q), "Reasoning Process", "Environment", "Tool", "Model Response", "Tool Calling", "Tool Response", "Predicted Answer" (â), "Curriculum Reward" (rC), and "Executor Reward" (rE).

The bar charts (b) have the following components:

* **X-axis:** Model - Qwen3-8B, w/ tools, Agent0

* **Y-axis:** Score (the scale varies by benchmark, from roughly 8 on BBEH to roughly 84 on MATH)

* **Benchmarks (separate charts):** MATH, SuperGPQA, MMLU-Pro, BBEH

* **Color Coding:** Qwen3-8B (grey), w/ tools (dark grey), Agent0 (blue)

### Detailed Analysis or Content Details

**MATH:**

* Qwen3-8B: Approximately 78.0

* w/ tools: Approximately 79.2

* Agent0: Approximately 82.4

The trend is upward, with Agent0 performing best, followed by w/ tools, and then Qwen3-8B.

**SuperGPQA:**

* Qwen3-8B: Approximately 28.3

* w/ tools: Approximately 29.4

* Agent0: Approximately 33.0

The trend is upward, with Agent0 performing best, followed by w/ tools, and then Qwen3-8B.

**MMLU-Pro:**

* Qwen3-8B: Approximately 51.8

* w/ tools: Approximately 54.8

* Agent0: Approximately 63.4

The trend is upward, with Agent0 performing best, followed by w/ tools, and then Qwen3-8B.

**BBEH:**

* Qwen3-8B: Approximately 8.6

* w/ tools: Approximately 9.4

* Agent0: Approximately 13.7

The trend is upward, with Agent0 performing best, followed by w/ tools, and then Qwen3-8B.

### Key Observations

* Agent0 consistently outperforms both Qwen3-8B and the "w/ tools" model across all four benchmarks.

* Adding tools ("w/ tools") consistently improves performance compared to Qwen3-8B, but Agent0 still surpasses it.

* The largest absolute gain is on the MMLU-Pro benchmark, where Agent0 scores approximately 63.4, about 11.6 points above Qwen3-8B (51.8) and 8.6 points above w/ tools (54.8).

* BBEH shows the lowest overall scores (all below 14), suggesting it is the most challenging of the four benchmarks.

### Interpretation

The data suggests that the Agent0 model, leveraging a curriculum learning framework with an Executor Agent, demonstrates superior performance on a variety of reasoning benchmarks compared to the baseline Qwen3-8B model and even an enhanced version with tools. The consistent improvement across all benchmarks indicates that the curriculum learning approach and the Agent0 architecture are effective in enhancing reasoning capabilities. The degree of improvement varies across benchmarks: in absolute terms the gain over Qwen3-8B is largest on MMLU-Pro (+11.6 points) and smallest on MATH (+4.4 points), while in relative terms it is largest on BBEH (about 59%), where baseline scores are lowest. The diagram (a) illustrates the iterative process of question generation, reasoning, tool usage, and reward feedback, which likely contributes to the improved performance of Agent0. The Executor Agent's ability to utilize tools appears to provide a moderate performance boost, but the curriculum learning framework implemented in Agent0 provides a more substantial advantage. The consistent trend of Agent0 outperforming the other models suggests a robust and generalizable improvement in reasoning ability.

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Diagram and Bar Charts: Multi-Agent System Performance Comparison

### Overview

The image is a composite figure containing two main panels. Panel (a) on the left is a system architecture diagram illustrating a multi-agent framework involving a "Curriculum Agent" and an "Executor Agent." Panel (b) on the right consists of four bar charts comparing the performance of three models ("Qwen3-8B", "w/ tools", and "Agent0") across four different benchmarks: MATH, SuperGPQA, MMLU-Pro, and BBEH.

### Components/Axes

#### Panel (a): System Architecture Diagram

* **Top Section:**

* **Left Agent:** Labeled "Curriculum Agent". Depicted as a cartoon bear in a graduation cap and gown, holding a scroll.

* **Right Agent:** Labeled "Executor Agent". Depicted as a cartoon bear in a lab coat, holding a wrench.

* **Central Element:** A box labeled "Question" containing the symbol "q". Arrows flow from the Curriculum Agent to the Question box and from the Question box to the Executor Agent. A green arrow also flows back from the Executor Agent to the Curriculum Agent.

* **Middle Section:**

* **Left Flow:** A blue arrow descends from the Curriculum Agent to a second bear icon (in a suit), then to a third bear icon (in a graduation cap), pointing towards a box labeled "Predicted Answer" with the symbol "â".

* **Central Process Box:** Labeled "Reasoning Process". Contains a flowchart with three colored boxes: "Model Response" (blue), "Tool Calling" (green), and "Tool Response" (orange). Arrows connect these boxes in a cycle. An icon labeled "Environment" (depicting a globe with a satellite) is connected to this process.

* **Right Flow:** A green arrow descends from the Executor Agent to a bear icon at a desk with a computer, then to another bear icon holding a magnifying glass, pointing towards the "Predicted Answer" box.

* **Bottom Section:**

* **Central Output:** A box labeled "Predicted Answer" containing the symbol "â".

* **Reward Signals:** Two arrows originate from the "Predicted Answer" box.

* A blue arrow points left to a gift box icon labeled "Curriculum Reward" with the symbol "r_C".

* A green arrow points right to a gift box icon labeled "Executor Reward" with the symbol "r_E".

#### Panel (b): Performance Bar Charts

* **Common Elements:**

* **X-axis (All Charts):** Three categories: "Qwen3-8B" (light gray bar), "w/ tools" (dark gray bar), "Agent0" (blue bar).

* **Y-axis:** Numerical score for each benchmark. The scale varies per chart.

* **Chart 1 (Top-Left): MATH**

* **Title:** "MATH"

* **Y-axis Range:** Approximately 70 to 84.

* **Data Points:**

* Qwen3-8B: 78.0

* w/ tools: 79.2

* Agent0: 82.4

* **Chart 2 (Top-Right): SuperGPQA**

* **Title:** "SuperGPQA"

* **Y-axis Range:** Approximately 26 to 34.

* **Data Points:**

* Qwen3-8B: 28.3

* w/ tools: 29.4

* Agent0: 33.0

* **Chart 3 (Bottom-Left): MMLU-Pro**

* **Title:** "MMLU-Pro"

* **Y-axis Range:** Approximately 47 to 65.

* **Data Points:**

* Qwen3-8B: 51.8

* w/ tools: 54.8

* Agent0: 63.4

* **Chart 4 (Bottom-Right): BBEH**

* **Title:** "BBEH"

* **Y-axis Range:** Approximately 8 to 14.

* **Data Points:**

* Qwen3-8B: 8.6

* w/ tools: 9.4

* Agent0: 13.7

### Detailed Analysis

* **Diagram Flow (Panel a):** The diagram illustrates a closed-loop, multi-agent system. The Curriculum Agent generates or selects a question (`q`). This question is processed by the Executor Agent, which engages in a "Reasoning Process" involving model responses and tool interactions with an "Environment." The final output is a "Predicted Answer" (`â`). This answer is evaluated, generating two distinct reward signals: a "Curriculum Reward" (`r_C`) fed back to the Curriculum Agent, and an "Executor Reward" (`r_E`) fed back to the Executor Agent. This suggests a reinforcement learning setup where both agents are optimized based on the outcome.

* **Performance Trends (Panel b):** Across all four benchmarks, a consistent performance hierarchy is observed:

1. **Agent0 (Blue Bar):** Achieves the highest score in every chart.

2. **w/ tools (Dark Gray Bar):** Performs better than the base model but worse than Agent0.

3. **Qwen3-8B (Light Gray Bar):** Has the lowest score in every comparison.

* **Magnitude of Improvement:** The performance gap between "Agent0" and the other models varies by task.

* The smallest absolute gain is on the **MATH** benchmark (+4.4 points over Qwen3-8B).

* The largest relative gains appear on the **BBEH** benchmark, where Agent0's score (13.7) is approximately 59% higher than Qwen3-8B's (8.6).
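The gain figures quoted above can be reproduced from the scores read off panel (b). The snippet below is a small arithmetic check, not part of the original figure; the `SCORES` table simply restates the reported values, and the helper name `gains` is made up for this example.

```python
# Scores as reported in panel (b) of the figure.
SCORES = {
    "MATH":      {"Qwen3-8B": 78.0, "w/ tools": 79.2, "Agent0": 82.4},
    "SuperGPQA": {"Qwen3-8B": 28.3, "w/ tools": 29.4, "Agent0": 33.0},
    "MMLU-Pro":  {"Qwen3-8B": 51.8, "w/ tools": 54.8, "Agent0": 63.4},
    "BBEH":      {"Qwen3-8B": 8.6,  "w/ tools": 9.4,  "Agent0": 13.7},
}


def gains(scores, model="Agent0", baseline="Qwen3-8B"):
    """Return {benchmark: (absolute gain in points, relative gain in %)}."""
    result = {}
    for bench, row in scores.items():
        absolute = row[model] - row[baseline]
        relative = 100.0 * absolute / row[baseline]
        # Round to one decimal to hide floating-point noise.
        result[bench] = (round(absolute, 1), round(relative, 1))
    return result


agent0_gains = gains(SCORES)
for bench, (abs_gain, rel_gain) in agent0_gains.items():
    print(f"{bench:10s} +{abs_gain:.1f} points ({rel_gain:.1f}% relative)")

smallest_absolute = min(agent0_gains, key=lambda b: agent0_gains[b][0])
largest_relative = max(agent0_gains, key=lambda b: agent0_gains[b][1])
print(smallest_absolute, largest_relative)
```

Ranking by absolute and by relative gain gives different orderings, which is why the two bullets above single out different benchmarks.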

### Key Observations

1. **Consistent Superiority:** Agent0 demonstrates a clear and consistent performance advantage over both the base model (Qwen3-8B) and the model augmented with tools ("w/ tools") across diverse reasoning and knowledge benchmarks (MATH, SuperGPQA, MMLU-Pro, BBEH).

2. **Tool Use Benefit:** The "w/ tools" configuration consistently outperforms the base "Qwen3-8B" model, indicating that providing tool access improves performance on these tasks.

3. **Architectural Synergy:** The diagram in panel (a) provides a potential explanation for the results in panel (b). It depicts a sophisticated system where specialized agents (Curriculum and Executor) collaborate, utilize tools, and are trained via reward signals. This complex architecture likely underpins the "Agent0" model, explaining its superior performance compared to simpler baselines.

4. **Task Diversity:** The benchmarks cover a range of domains: quantitative reasoning (MATH), graduate-level question answering (SuperGPQA), broad multidisciplinary knowledge (MMLU-Pro), and challenging general reasoning (BBEH, BIG-Bench Extra Hard). Agent0's lead across all of them suggests robust generalization.

### Interpretation

The data presents a compelling case for the efficacy of the multi-agent, tool-augmented framework illustrated in panel (a). The "Agent0" model, which presumably implements this framework, doesn't just marginally improve upon baselines; it posts the highest score of the three compared configurations on every benchmark.

The two panels are best read as method and result. Panel (a) is the *method*: a system designed for collaborative reasoning and learning through environmental interaction and reward. Panel (b) is the *result*: empirical evidence that this method produces a more capable model. The figure alone does not establish causation, but the consistent ranking (Agent0 > w/ tools > Base) across varied tasks suggests the improvements are not coincidental or task-specific and instead stem from the framework itself.

The "Reasoning Process" box is particularly insightful. It shows that the system's strength likely comes from dynamically interleaving internal model reasoning ("Model Response") with external action ("Tool Calling") and observation ("Tool Response"). This closed-loop interaction with an "Environment" allows for verification, information retrieval, and complex problem-solving steps that a static model cannot perform. The separate reward signals for the Curriculum and Executor agents further suggest a sophisticated training regime where each component is optimized for its specific role in the overall problem-solving pipeline.
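The interleaving described above can be sketched as a short loop. This is a minimal illustration under stated assumptions, not the system's actual implementation: `run_reasoning`, the `Step` record, the `("call_tool" | "answer", text)` action format, and the toy model and tool are all hypothetical names invented for this example.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional, Tuple


@dataclass
class Step:
    kind: str     # "model_response", "tool_calling", or "tool_response"
    content: str


def run_reasoning(
    question: str,
    model: Callable[[str], Tuple[str, str]],  # returns (action, text)
    tool: Callable[[str], str],
    max_turns: int = 4,
) -> Tuple[Optional[str], List[Step]]:
    """Alternate model output and tool calls until the model answers."""
    trajectory: List[Step] = []
    context = question
    for _ in range(max_turns):
        action, text = model(context)
        if action == "answer":
            trajectory.append(Step("model_response", text))
            return text, trajectory
        # Otherwise the model asked for a tool call.
        trajectory.append(Step("tool_calling", text))
        observation = tool(text)
        trajectory.append(Step("tool_response", observation))
        context = f"{context}\n[tool] {text} -> {observation}"
    return None, trajectory  # no answer within the turn budget


def toy_model(context: str) -> Tuple[str, str]:
    # Calls the tool once, then answers with whatever the tool returned.
    if "[tool]" not in context:
        return "call_tool", "17 * 23"
    return "answer", context.rsplit("-> ", 1)[1]


def toy_tool(expression: str) -> str:
    # A tiny multiplier standing in for a real tool such as a code sandbox.
    left, right = expression.split("*")
    return str(int(left) * int(right))


answer, trajectory = run_reasoning("What is 17 * 23?", toy_model, toy_tool)
print(answer, [step.kind for step in trajectory])
```

The three `kind` values mirror the three colored boxes in the diagram's legend; a real executor would replace `toy_model` with a language model and `toy_tool` with an actual environment.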

In essence, the figure argues that moving beyond a single, monolithic model to a structured, multi-agent system with tool use and specialized training leads to significant and consistent gains in AI capability across challenging domains.

EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Diagram: Curriculum-Executor Agent System Architecture

### Overview

The diagram illustrates a two-agent system with feedback loops for curriculum learning. The **Curriculum Agent** (left) and **Executor Agent** (right) interact through a question (`q`) and reasoning process involving model responses, tool calls, and tool responses. Rewards (`r_C`, `r_E`) are generated based on predicted answers (`â`).

### Components/Axes

- **Left Side (Curriculum Agent)**:

- Labels: "Curriculum Agent" (three anthropomorphic figures: teacher, student, researcher).

- Arrows: Blue arrow labeled `q` (question) pointing to Executor Agent.

- Reward: Blue arrow labeled `r_C` (Curriculum Reward) pointing to `â` (Predicted Answer).

- **Center (Reasoning Process)**:

- Boxes:

- Blue: "Model Response"

- Green: "Tool Calling"

- Orange: "Tool Response"

- Globe icon labeled "Environment."

- **Right Side (Executor Agent)**:

- Labels: "Executor Agent" (three anthropomorphic figures: tool user, evaluator, analyst).

- Arrows: Green arrow labeled `â` (Predicted Answer) pointing to Tool.

- Reward: Green arrow labeled `r_E` (Executor Reward) pointing to `â`.

- **Legend**:

- Blue: Model Response

- Green: Tool Calling

- Orange: Tool Response

### Detailed Analysis

- **Flow**:

1. Curriculum Agent generates a question (`q`).

2. Executor Agent uses tools (e.g., calculator, database) to process `q`.

3. Feedback loops:

- Curriculum Reward (`r_C`) adjusts `â` based on correctness.

- Executor Reward (`r_E`) evaluates tool effectiveness.

### Key Observations

- The system emphasizes iterative learning via rewards and tool integration.

- Tool responses (`Tool Response`) are critical for refining predictions (`â`).

---

## Bar Charts: Task Performance Comparison

### Overview

Four bar charts compare performance metrics (percentage) across tasks: **MATH**, **SuperGPQA**, **MMLU-Pro**, and **BBEH**. Three methods are evaluated: **Qwen3-8B**, **w/ tools**, and **Agent0**.

### Components/Axes

- **X-Axis**: Methods (Qwen3-8B, w/ tools, Agent0).

- **Y-Axis**: Performance (%) with approximate values:

- **MATH**: 78.0% (Qwen3-8B), 79.2% (w/ tools), 82.4% (Agent0).

- **SuperGPQA**: 28.3% (Qwen3-8B), 29.4% (w/ tools), 33.0% (Agent0).

- **MMLU-Pro**: 51.8% (Qwen3-8B), 54.8% (w/ tools), 63.4% (Agent0).

- **BBEH**: 8.6% (Qwen3-8B), 9.4% (w/ tools), 13.7% (Agent0).

- **Legend**:

- Blue: Agent0

- Dark Gray: w/ tools

- Light Gray: Qwen3-8B

### Detailed Analysis

- **Trends**:

- **Agent0** consistently outperforms other methods across all tasks.

- **w/ tools** improves performance over Qwen3-8B but lags behind Agent0.

- **BBEH** shows the lowest scores for all three methods, suggesting it is the hardest of the four tasks.

### Key Observations

- Agent0's scores range from **82.4% in MATH** (its highest) to **13.7% in BBEH** (its lowest).

- Qwen3-8B underperforms in all tasks compared to w/ tools and Agent0.

---

## Interpretation

### System Architecture (Diagram)

The diagram highlights a symbiotic relationship between curriculum and execution agents. The Curriculum Agent focuses on knowledge structuring (`r_C`), while the Executor Agent leverages tools for real-world problem-solving (`r_E`). The feedback loops suggest adaptive learning, where rewards refine both prediction accuracy (`â`) and tool utility.

### Task Performance (Bar Charts)

- **Agent0’s Dominance**: Outperforms baseline methods (Qwen3-8B, w/ tools) in all tasks, suggesting superior integration of curriculum learning and tool usage.

- **Tool Impact**: Adding tools (`w/ tools`) improves performance by 0.8 to 3.0 points over Qwen3-8B, but Agent0’s holistic approach yields larger gains (e.g., +3.2 points over `w/ tools` in MATH, or +4.4 points over Qwen3-8B).

- **BBEH Anomaly**: Despite Agent0’s improvement, BBEH scores remain low (≤13.7%) for every method, which points to the difficulty of the benchmark itself more than to a limitation specific to Agent0’s design.
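One way to read the tool-impact numbers is to split Agent0's total gain into the part already obtained by adding tools and the part gained beyond that. The snippet below is a rough arithmetic sketch over the scores reported in the charts; the helper name `decompose` is invented for this example, and the split is purely descriptive, not a claim about what causes each portion.

```python
# Scores as reported in the bar charts: (Qwen3-8B, w/ tools, Agent0).
REPORTED = {
    "MATH":      (78.0, 79.2, 82.4),
    "SuperGPQA": (28.3, 29.4, 33.0),
    "MMLU-Pro":  (51.8, 54.8, 63.4),
    "BBEH":      (8.6, 9.4, 13.7),
}


def decompose(reported):
    """Return {task: (gain from tools, further gain of Agent0 over tools)}."""
    split = {}
    for task, (base, with_tools, agent0) in reported.items():
        tool_gain = round(with_tools - base, 1)
        further_gain = round(agent0 - with_tools, 1)
        split[task] = (tool_gain, further_gain)
    return split


split = decompose(REPORTED)
for task, (tool_gain, further_gain) in split.items():
    print(f"{task:10s} tools: +{tool_gain:.1f}  Agent0 beyond tools: +{further_gain:.1f}")
```

On every task the second number is larger than the first, which is the pattern the bullets above describe.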

### Implications

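- **Agent0’s Strengths**: Gains over Qwen3-8B are largest in absolute terms on MMLU-Pro (+11.6 points) and in relative terms on BBEH (about 59%), although absolute BBEH scores stay low for every method, hinting at domain-specific challenges.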

- **Tool Dependency**: While tools enhance performance, Agent0’s end-to-end learning likely reduces reliance on external tools compared to `w/ tools`.

- **Curriculum Reward (`r_C`)**: Critical for aligning predictions (`â`) with educational goals, as seen in Agent0’s consistent gains.

This analysis underscores the value of integrated curriculum-execution systems for adaptive reasoning, with Agent0 representing a significant advancement over incremental tool-based approaches.
