TECHNICAL ASSET FINGERPRINT

a6e97dc9b919ed995e317f2d

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

## Diagram: Reasoning Challenges and Solutions

### Overview

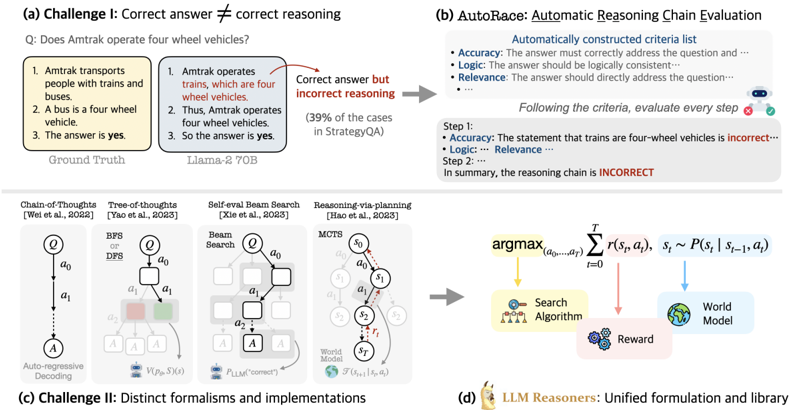

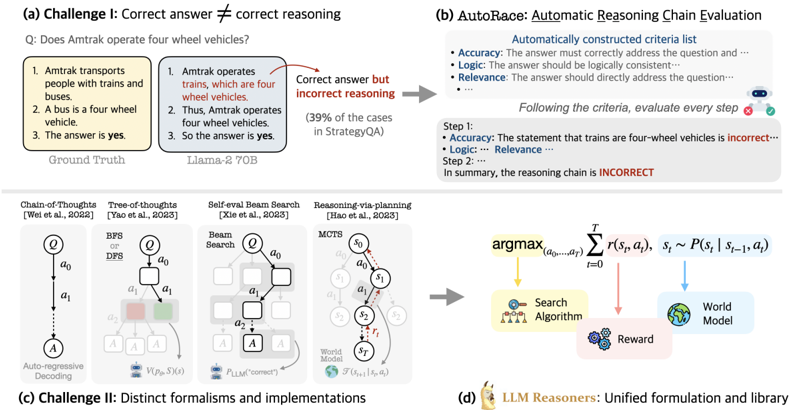

The image is a four-part diagram illustrating two challenges in language-model reasoning and a proposed response to each. It covers correct answers reached through incorrect reasoning, the distinct formalisms and implementations used by existing reasoning methods, AutoRace (a method for automatic reasoning chain evaluation), and LLM Reasoners (a unified formulation and library).

### Components/Axes

* **(a) Challenge I: Correct answer ≠ correct reasoning:** This section highlights a scenario where a language model (Llama-2 70B) arrives at the correct answer but through flawed reasoning, contrasted with the "Ground Truth."

* **Question:** "Does Amtrak operate four wheel vehicles?"

* **Ground Truth:**

1. "Amtrak transports people with trains and buses."

2. "A bus is a four wheel vehicle."

3. "The answer is yes."

* **Llama-2 70B:**

1. "Amtrak operates trains, which are four wheel vehicles."

2. "Thus, Amtrak operates four wheel vehicles."

3. "So the answer is yes."

* **Annotation:** "Correct answer but incorrect reasoning" points from the Llama-2 70B example.

* "(39% of the cases in StrategyQA)" is noted below the Llama-2 70B example.

* **(b) AutoRace: Automatic Reasoning Chain Evaluation:** This section describes a method for evaluating the reasoning chain of a language model.

* **Automatically constructed criteria list:**

* "Accuracy: The answer must correctly address the question and..."

* "Logic: The answer should be logically consistent..."

* "Relevance: The answer should directly address the question..."

* **Process:** "Following the criteria, evaluate every step."

* "Step 1: Accuracy: The statement that trains are four-wheel vehicles is incorrect..."

* "Logic: Relevance..."

* "Step 2: ..."

* **Conclusion:** "In summary, the reasoning chain is INCORRECT."

* **(c) Challenge II: Distinct formalisms and implementations:** This section illustrates different approaches to reasoning, including:

* **Chain-of-Thoughts [Wei et al., 2022]:** A diagram shows a sequential process: Q -> a0 -> a1 -> A, labeled "Auto-regressive Decoding."

* **Tree-of-thoughts [Yao et al., 2023]:** A tree-like structure with nodes labeled Q, a0, a1, a2, and A. "BFS or DFS" is noted at the top, and the expression V(P(s), S)(s) appears at the bottom.

* **Self-eval Beam Search [Xie et al., 2023]:** A beam search process with nodes labeled Q, a0, a1, a2, and A. "Beam Search" is noted at the top, and the expression P_LLM("correct") appears at the bottom.

* **Reasoning-via-planning [Hao et al., 2023]:** A process with nodes labeled s0, s1, s2, and sT. "MCTS" is noted at the top, and the expression F(s_t+1 | s_t, a_t) appears at the bottom.

* **(d) LLM Reasoners: Unified formulation and library:** This section presents a unified formulation for LLM reasoners.

* **Equation:** $\operatorname*{arg\,max}_{(a_0,\dots,a_T)} \sum_{t=0}^{T} r(s_t, a_t), \quad s_t \sim P(s_t \mid s_{t-1}, a_t)$

* **Components:**

* "Search Algorithm" (yellow)

* "Reward" (pink)

* "World Model" (blue)

### Detailed Analysis

* **Challenge I:** The example highlights that language models can arrive at the correct answer through incorrect reasoning. The Llama-2 70B model incorrectly states that trains are four-wheel vehicles, so its answer is correct but rests on a false premise. The ground truth instead relies on the fact that Amtrak also operates buses.

* **AutoRace:** This method provides a structured way to evaluate the reasoning chain of a language model by assessing accuracy, logic, and relevance at each step.

* **Challenge II:** The diagrams illustrate different approaches to reasoning, including chain-of-thoughts, tree-of-thoughts, self-eval beam search, and reasoning-via-planning. Each approach has its own structure and implementation.

* **LLM Reasoners:** The unified formulation presents a general framework for LLM reasoners, incorporating a search algorithm, reward function, and world model.

### Key Observations

* Language models can arrive at the correct answer through incorrect reasoning.

* AutoRace provides a structured way to evaluate the reasoning chain of a language model.

* There are different approaches to reasoning, each with its own structure and implementation.

* The unified formulation presents a general framework for LLM reasoners.

### Interpretation

The diagram pairs two challenges in language-model reasoning with a proposed response to each. Challenge I shows that a model can reach the correct answer through incorrect reasoning, so answer accuracy alone overstates reasoning quality. AutoRace addresses this by evaluating every step of a reasoning chain against an automatically constructed criteria list, which makes such errors detectable. Challenge II shows that existing methods (chain-of-thoughts, tree-of-thoughts, self-eval beam search, and reasoning-via-planning) rely on different formalisms and search procedures. The unified formulation in (d) expresses them through three shared components: a search algorithm, a reward, and a world model.

EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED

## Diagram: Reasoning Challenges & LLM Frameworks

### Overview

The image presents reasoning challenges in Large Language Models (LLMs), focusing first on cases where a correct answer is reached through incorrect reasoning. It also illustrates a framework for automatic reasoning chain evaluation (AutoRace), several reasoning formalisms and implementations, and a unified formulation. The image is divided into four sections: (a) Challenge I, (b) AutoRace, (c) Challenge II, and (d) LLM Reasoners.

### Components/Axes

The image does not contain traditional axes or charts. It consists of text blocks, diagrams, and flowcharts. Key components include:

* **Challenge I:** Presents a question, ground truth, and an LLM's response with highlighted incorrect reasoning.

* **AutoRace:** Outlines criteria for evaluating reasoning chains (Accuracy, Logic, Relevance) and a step-by-step evaluation process.

* **Challenge II:** Illustrates four different reasoning formalisms: Chain-of-Thoughts, Tree-of-Thoughts, Self-eval Beam Search, and Reasoning-via-planning.

* **LLM Reasoners:** Depicts a unified formulation and library using a search algorithm, world model, and reward system.

### Detailed Analysis or Content Details

**(a) Challenge I: Correct answer ≠ correct reasoning**

* **Question:** "Does Amtrak operate four wheel vehicles?"

* **Ground Truth:**

1. Amtrak transports people with trains and buses.

2. A bus is a four wheel vehicle.

3. The answer is yes.

* **Llama-2 70B Response:**

1. Amtrak operates trains, which are four wheel vehicles. (Highlighted in red)

2. Thus, Amtrak operates four wheel vehicles.

3. So the answer is yes.

* **Annotation:** "Correct answer but incorrect reasoning (39% of the cases in StrategyQA)"

**(b) AutoRace: Automatic Reasoning Chain Evaluation**

* **Automatically constructed criteria list:**

* Accuracy: The answer must correctly address the question and…

* Logic: The answer should be logically consistent…

* Relevance: The answer should directly address the question…

* **Evaluation Steps:**

* Step 1: Accuracy: The statement that trains are four-wheel vehicles is incorrect… Logic: … Relevance: …

* Step 2: …

* **Conclusion:** In summary, the reasoning chain is INCORRECT.
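The evaluation flow in panel (b) can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the prompt wording, the `judge` callable, and `stub_judge` are hypothetical stand-ins for an LLM call, and only the criteria names (not their elided full text) come from the figure.

```python
from typing import Callable, List

# Criteria names as shown in the figure; their full wording is elided there.
CRITERIA = ["Accuracy", "Logic", "Relevance"]


def build_prompt(question: str, steps: List[str], criteria: List[str]) -> str:
    """Assemble an evaluation prompt (hypothetical wording, not the paper's)."""
    numbered = "\n".join(f"{i}. {s}" for i, s in enumerate(steps, start=1))
    listed = "\n".join(f"- {c}" for c in criteria)
    return (
        f"Question: {question}\n"
        f"Reasoning chain:\n{numbered}\n"
        f"Criteria:\n{listed}\n"
        "Following the criteria, evaluate every step. "
        "End with: the reasoning chain is CORRECT or INCORRECT."
    )


def evaluate_chain(question: str, steps: List[str],
                   judge: Callable[[str], str]) -> bool:
    """Return True if the judge's final verdict is CORRECT."""
    verdict = judge(build_prompt(question, steps, CRITERIA)).strip().upper()
    verdict = verdict.rstrip(".")
    # Check INCORRECT first because it contains the substring CORRECT.
    if verdict.endswith("INCORRECT"):
        return False
    return verdict.endswith("CORRECT")


def stub_judge(prompt: str) -> str:
    """Stand-in for an LLM call: flags the false premise from the figure."""
    if "trains, which are four wheel vehicles" in prompt:
        return ("Step 1: Accuracy: incorrect. "
                "In summary, the reasoning chain is INCORRECT.")
    return "In summary, the reasoning chain is CORRECT."


QUESTION = "Does Amtrak operate four wheel vehicles?"
GROUND_TRUTH = [
    "Amtrak transports people with trains and buses.",
    "A bus is a four wheel vehicle.",
    "The answer is yes.",
]
LLAMA_CHAIN = [
    "Amtrak operates trains, which are four wheel vehicles.",
    "Thus, Amtrak operates four wheel vehicles.",
    "So the answer is yes.",
]
```

In practice `stub_judge` would be replaced by a call to whichever model acts as the evaluator; the verdict parsing is kept separate so it can be tested without a model.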

**(c) Challenge II: Distinct formalisms and implementations**

* **Chain-of-Thoughts [Wei et al., 2022]:** A diagram showing a sequence of states q0, a0, q1, a1, … leading to answer A. Labeled "Auto-regressive Decoding".

* **Tree-of-Thoughts [Yao et al., 2023]:** A branching tree diagram with states q0, a0, q1, a1, … and multiple paths leading to answer A. Labeled "BFS, DFS".

* **Self-eval Beam Search [Xie et al., 2023]:** A grid-like diagram representing a beam search with states q0, a0, q1, a1, … and a "World Model". Labeled `P_LLM("correct")`.

* **Reasoning-via-planning [Hao et al., 2023]:** A diagram showing states s0, a0, s1, a1, … with a "World Model" and labeled "MCTS".

**(d) LLM Reasoners: Unified formulation and library**

* **Formula:** $\operatorname*{arg\,max}_{(a_0,\dots,a_T)} \sum_{t=0}^{T} r(s_t, a_t), \quad s_t \sim P(s_t \mid s_{t-1}, a_t)$

* **Diagram:** A flowchart showing "Search Algorithm" feeding into a "World Model" which outputs a "Reward".

### Key Observations

* The image highlights the challenge of LLMs arriving at correct answers through flawed reasoning processes.

* AutoRace provides a structured approach to evaluating the correctness of reasoning chains.

* Different reasoning formalisms (Chain-of-Thoughts, Tree-of-Thoughts, etc.) offer varying approaches to problem-solving.

* The LLM Reasoners framework integrates a search algorithm, world model, and reward system for improved reasoning.

### Interpretation

The image demonstrates the complexities of evaluating reasoning in LLMs. While LLMs can generate seemingly correct answers, the underlying reasoning process may be flawed, as illustrated in Challenge I. AutoRace attempts to address this by providing a framework for assessing the accuracy, logical consistency, and relevance of each step in a reasoning chain. The reasoning formalisms presented in Challenge II represent various attempts to improve the reasoning capabilities of LLMs. The LLM Reasoners framework suggests a potential path towards more robust and reliable reasoning by integrating search algorithms, world models, and reward systems. The formula in section (d) frames reasoning as maximizing cumulative reward over a sequence of actions, which resembles a planning or reinforcement learning objective. The image underscores that these models should not only provide correct answers but also justify them with sound logic.

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Diagram: Composite Figure on Reasoning Challenges and Evaluation Methods

### Overview

The image is a composite figure containing four distinct sub-figures labeled (a) through (d). It illustrates challenges in evaluating the reasoning of Large Language Models (LLMs) and presents a proposed solution framework called "AutoRace." The figure combines textual examples, flowcharts, mathematical formulations, and conceptual diagrams to explain the concepts.

### Components/Axes

The figure is divided into four quadrants:

* **Top-Left (a):** Labeled "Challenge I: Correct answer ≠ correct reasoning." Contains a question, two reasoning chains (Ground Truth and Llama-2 70B), and an annotation.

* **Top-Right (b):** Labeled "AutoRace: Automatic Reasoning Chain Evaluation." Contains a criteria list and a step-by-step evaluation example.

* **Bottom-Left (c):** Labeled "Challenge II: Distinct formalisms and implementations." Contains four schematic diagrams representing different reasoning methods.

* **Bottom-Right (d):** Labeled "LLM Reasoners: Unified formulation and library." Contains a mathematical optimization formula and a conceptual diagram linking three components.

### Detailed Analysis

#### (a) Challenge I: Correct answer ≠ correct reasoning

* **Question (Q):** "Does Amtrak operate four wheel vehicles?"

* **Ground Truth Reasoning Chain:**

1. Amtrak transports people with trains and buses.

2. A bus is a four wheel vehicle.

3. The answer is **yes**.

* **Llama-2 70B Reasoning Chain:**

1. Amtrak operates trains, which are four wheel vehicles.

2. Thus, Amtrak operates four wheel vehicles.

3. So the answer is **yes**.

* **Annotation (Red Text):** "Correct answer but incorrect reasoning (39% of the cases in StrategyQA)". This points to the Llama-2 70B chain, indicating its premise (trains are four-wheel vehicles) is factually incorrect, even though the final answer matches the ground truth.
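A statistic like the one in the annotation can be computed once each chain carries two labels: whether the final answer is correct and whether the reasoning is correct. The sketch below assumes the denominator is the set of chains with a correct answer, which the figure does not spell out. The `Judged` record type and the sample data are hypothetical and do not reproduce StrategyQA results.

```python
from dataclasses import dataclass
from typing import Iterable


@dataclass
class Judged:
    """One reasoning chain with two independent labels (hypothetical type)."""
    answer_correct: bool
    reasoning_correct: bool


def flawed_reasoning_rate(records: Iterable[Judged]) -> float:
    """Among chains with a correct answer, the share whose reasoning is wrong."""
    correct_answer = [r for r in records if r.answer_correct]
    if not correct_answer:
        return 0.0
    flawed = sum(not r.reasoning_correct for r in correct_answer)
    return flawed / len(correct_answer)


# Made-up labels for illustration only.
SAMPLE = [
    Judged(answer_correct=True, reasoning_correct=True),
    Judged(answer_correct=True, reasoning_correct=False),
    Judged(answer_correct=True, reasoning_correct=True),
    Judged(answer_correct=True, reasoning_correct=False),
    Judged(answer_correct=True, reasoning_correct=True),
    Judged(answer_correct=False, reasoning_correct=False),
]
```

With the made-up sample, two of the five correct-answer chains have flawed reasoning, so the function returns 0.4.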

#### (b) AutoRace: Automatic Reasoning Chain Evaluation

* **Automatically constructed criteria list:**

* **Accuracy:** The answer must correctly address the question and ...

* **Logic:** The answer should be logically consistent ...

* **Relevance:** The answer should directly address the question ...

* **Evaluation Example:** "Following the criteria, evaluate each step"

* **Step 1:**

* **Accuracy:** The statement that trains are four-wheel vehicles is **incorrect**... (marked with a red 'x' icon).

* **Logic:** ... (ellipsis indicates continuation).

* **Relevance:** ... (ellipsis indicates continuation).

* **Step 2:** ... (ellipsis indicates continuation).

* **Conclusion (Red Text):** "In summary, the reasoning chain is **INCORRECT**."

#### (c) Challenge II: Distinct formalisms and implementations

This section shows four different schematic representations of reasoning processes:

1. **Chain-of-Thoughts (Wei et al., 2022):** A linear sequence: `Q -> a0 -> a1 -> ... -> A`. Labeled "Auto-Regressive Decoding."

2. **Tree-of-thoughts (Yao et al., 2023):** A tree structure with branching from a node `Q` to `a0`, then to multiple `a1` nodes. Uses "BFS or DFS" and includes a value function `V(pθ, S(x))`.

3. **Self-eval Beam Search (Xie et al., 2023):** A beam search structure expanding from `Q` to `a0`, then to multiple `a1` nodes, with a scoring function `P_LLM("correct")`.

4. **Reasoning-via-planning (Hao et al., 2023):** A Markov Decision Process (MDP) formulation with states `s0, s1, s2, sT` and actions `a0, a1, a2`. Includes a reward function `S(sT; q, a)`.
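To make the contrast between the linear chain and the beam-search panel concrete, here is a minimal beam search over reasoning steps. It is a sketch under stated assumptions: `expand` and `score` are hypothetical callables standing in for an LLM's step proposals and its self-evaluation score, and the toy score table is invented for illustration rather than taken from Xie et al.

```python
from typing import Callable, Dict, List, Tuple

Chain = Tuple[str, ...]


def beam_search(
    expand: Callable[[Chain], List[str]],
    score: Callable[[Chain], float],
    beam_width: int,
    max_steps: int,
) -> Chain:
    """Keep the `beam_width` highest-scoring partial chains at every step."""
    beam: List[Chain] = [()]
    for _ in range(max_steps):
        candidates = [chain + (step,) for chain in beam for step in expand(chain)]
        if not candidates:
            break
        candidates.sort(key=score, reverse=True)
        beam = candidates[:beam_width]
    return max(beam, key=score)


# Made-up scores: the locally best first step "a" leads to weaker chains.
TOY_SCORES: Dict[Chain, float] = {
    ("a",): 0.6, ("b",): 0.5,
    ("a", "a"): 0.3, ("a", "b"): 0.4,
    ("b", "a"): 0.9, ("b", "b"): 0.2,
}


def toy_expand(chain: Chain) -> List[str]:
    """Propose two candidate next steps until the chain has length 2."""
    return ["a", "b"] if len(chain) < 2 else []


def toy_score(chain: Chain) -> float:
    return TOY_SCORES.get(chain, 0.0)


greedy = beam_search(toy_expand, toy_score, beam_width=1, max_steps=2)
wider = beam_search(toy_expand, toy_score, beam_width=2, max_steps=2)
```

With `beam_width=1` the search commits to one step at a time, which is analogous to a single linear chain; widening the beam lets it recover the higher-scoring chain that starts with the weaker first step.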

#### (d) LLM Reasoners: Unified formulation and library

* **Mathematical Formula:**

$$\operatorname*{arg\,max}_{(a_0,\dots,a_T)} \sum_{t=0}^{T} r(s_t, a_t), \quad s_t \sim P(s_t \mid s_{t-1}, a_t)$$

This represents choosing a sequence of actions `(a_0, ..., a_T)` to maximize the sum of rewards `r` over time steps `t`, where the state `s_t` evolves according to a transition probability `P`.

* **Conceptual Diagram:** The formula is linked to three components:

* **Search Algorithm** (icon of a magnifying glass over a network).

* **Reward** (icon of a star).

* **World Model** (icon of a globe).

Arrows indicate the Search Algorithm uses the World Model and receives a Reward signal.
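The objective in (d) can be illustrated with a brute-force search on a toy problem. This is a sketch, not the library's implementation: the transition is made deterministic for simplicity (the formula samples states from `P`), the indexing follows the formula as transcribed (the state is updated with `a_t` first, then rewarded), and the toy world (integers, actions `inc` and `dbl`, target 9) is entirely hypothetical.

```python
from itertools import product
from typing import Callable, Sequence, Tuple


def total_reward(
    actions: Sequence[str],
    start: int,
    transition: Callable[[int, str], int],
    reward: Callable[[int, str], float],
) -> float:
    """Sum r(s_t, a_t), where s_t is the state reached by applying a_t."""
    total, state = 0.0, start
    for action in actions:
        state = transition(state, action)  # s_t from s_{t-1} and a_t
        total += reward(state, action)     # r(s_t, a_t)
    return total


def brute_force_argmax(
    action_set: Sequence[str],
    horizon: int,
    start: int,
    transition: Callable[[int, str], int],
    reward: Callable[[int, str], float],
) -> Tuple[str, ...]:
    """Enumerate every action sequence and return the highest-reward one."""
    return max(
        product(action_set, repeat=horizon),
        key=lambda seq: total_reward(seq, start, transition, reward),
    )


def toy_transition(state: int, action: str) -> int:
    """Toy world model: increment or double an integer state."""
    return state + 1 if action == "inc" else state * 2


def toy_reward(state: int, action: str) -> float:
    """Toy reward: closer to the target value 9 is better."""
    return -abs(9 - state)


best = brute_force_argmax(["inc", "dbl"], 3, 2, toy_transition, toy_reward)
best_value = total_reward(best, 2, toy_transition, toy_reward)
```

Exhaustive enumeration is only feasible for tiny horizons; the search algorithms in panel (c) can be read as different ways of approximating this maximization without visiting every sequence.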

### Key Observations

1. **Core Problem Identified:** The figure highlights that an LLM can produce a correct final answer based on flawed or incorrect intermediate reasoning steps (Challenge I).

2. **Evaluation Framework:** AutoRace is presented as a method to automatically evaluate each step of a reasoning chain against criteria like accuracy, logic, and relevance.

3. **Diversity of Methods:** Challenge II visually demonstrates the variety of existing formalisms (linear, tree, beam search, MDP) used to implement reasoning in LLMs, suggesting a lack of standardization.

4. **Proposed Unification:** Part (d) proposes a unified mathematical formulation (an optimization problem over a sequence of actions and states) and a library ("LLM Reasoners") to encompass these diverse methods, connecting search algorithms, reward signals, and world models.

### Interpretation

This composite figure argues that evaluating LLM reasoning is non-trivial because surface-level correctness (the final answer) can mask flawed logic. It positions **AutoRace** as a diagnostic tool to dissect reasoning chains. The figure then contextualizes this problem within the broader landscape of LLM reasoning research, which uses disparate technical approaches (shown in part c). The proposed solution in part (d) is a move towards **abstraction and unification**: by framing reasoning as a sequential decision-making problem (via the MDP-like formula), different implementation strategies (chain, tree, beam search) can be seen as specific instances of a more general "search" process guided by a "world model" and a "reward." This unification aims to simplify research, enable fairer comparisons, and systematically improve the reliability of LLM reasoning. The 39% statistic from StrategyQA underscores the practical significance of the issue.

EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Technical Document: AI Reasoning Challenges and Methodologies

### Overview

The image presents a structured analysis of challenges in AI reasoning, evaluation frameworks, and methodologies. It is divided into four sections:

1. **(a) Challenge I**: Correct answer ≠ Correct reasoning

2. **(b) AutoRace**: Automatic Reasoning Chain Evaluation

3. **(c) Challenge II**: Distinct formalisms and implementations

4. **(d) LLM Reasoners**: Unified formulation and library

---

### Components/Axes

#### Section (a): Challenge I

- **Textual Content**:

- Question: *"Does Amtrak operate four wheel vehicles?"*

- Ground Truth: *"Yes"* (Amtrak uses buses, which are four-wheel vehicles).

- Incorrect Reasoning:

1. Amtrak operates trains (four-wheel vehicles).

2. Thus, Amtrak operates four-wheel vehicles.

3. So the answer is yes.

- **Error Highlight**: The reasoning incorrectly treats trains as four-wheel vehicles; the ground truth instead relies on Amtrak's buses.

- **Diagram**:

- Flowchart with three reasoning steps (boxes labeled 1–3).

- Arrows connect steps to the conclusion.

- **Key Text**: *"Correct answer but incorrect reasoning"* (red arrow).

#### Section (b): AutoRace

- **Criteria List**:

- **Accuracy**: Answer must address the question.

- **Logic**: Logical consistency required.

- **Relevance**: Directly address the question.

- **Evaluation Example**:

- Step 1: The statement that trains are four-wheel vehicles is incorrect.

- Step 2: Conclusion: Reasoning chain is **INCORRECT** (red text).

#### Section (c): Challenge II

- **Methods and References**:

1. **Chain-of-Thoughts** (Wei et al., 2022): Auto-regressive decoding.

2. **Tree-of-Thoughts** (Yao et al., 2023): BFS/DFS search.

3. **Self-eval Beam Search** (Xie et al., 2023): Beam search with self-evaluation.

4. **Reasoning-via-planning** (Hao et al., 2023): MCTS (Monte Carlo Tree Search).
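For readers unfamiliar with MCTS, the selection step is commonly implemented with the UCT rule, which trades off a child's mean reward against how rarely it has been visited. The sketch below shows only that step, using the standard UCB1-style bonus; the `Node` type and the exploration constant `c` are illustrative choices, and nothing here is taken from the Reasoning-via-planning code.

```python
import math
from dataclasses import dataclass, field
from typing import Dict


@dataclass
class Node:
    """Search-tree node holding visit statistics (hypothetical type)."""
    visits: int = 0
    total_reward: float = 0.0
    children: Dict[str, "Node"] = field(default_factory=dict)


def uct_select(parent: Node, c: float = 1.0) -> str:
    """Return the action whose child maximizes mean reward plus exploration bonus."""

    def uct(child: Node) -> float:
        if child.visits == 0:
            return math.inf  # always try unvisited children first
        mean = child.total_reward / child.visits
        bonus = c * math.sqrt(math.log(parent.visits) / child.visits)
        return mean + bonus

    return max(parent.children, key=lambda action: uct(parent.children[action]))


# Made-up statistics: "a" has the higher mean, "b" has been visited less often.
root = Node(
    visits=10,
    children={
        "a": Node(visits=8, total_reward=6.0),
        "b": Node(visits=2, total_reward=1.0),
    },
)
```

With `c=1.0` the rarely visited child `"b"` is selected; with `c=0.0` the rule becomes purely greedy and picks `"a"`.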

#### Section (d): LLM Reasoners

- **Mathematical Formulation**:

- **Equation**:

$$
\operatorname*{arg\,max}_{(a_0,\dots,a_T)} \sum_{t=0}^{T} r(s_t, a_t), \quad s_t \sim P(s_t \mid s_{t-1}, a_t)
$$

- **Components**:

- **Search Algorithm**: Explores action sequences.

- **World Model**: Simulates environment dynamics.

- **Reward**: Scores each state-action pair; the search maximizes the cumulative reward.

---

### Detailed Analysis

#### Section (a)

- **Error Analysis**: The reasoning chain rests on the false claim that trains are four-wheel vehicles, even though the final answer "yes" is correct.

- **Diagram Flow**: Steps 1–3 form a linear chain, but Step 1’s premise is factually wrong.

#### Section (b)

- **Evaluation Framework**:

- Automatically checks for accuracy, logic, and relevance.

- Example shows failure due to an incorrect premise (trains described as four-wheel vehicles).

#### Section (c)

- **Method Comparison**:

- **Chain-of-Thoughts**: Linear reasoning (auto-regressive).

- **Tree-of-Thoughts**: Branching exploration (BFS/DFS).

- **Self-eval Beam Search**: Combines beam search with self-evaluation.

- **Reasoning-via-planning**: Uses MCTS for strategic planning.

#### Section (d)

- **Formalized Approach**:

- Maximizes cumulative reward over time steps.

- Integrates search algorithms and world models for dynamic reasoning.

---

### Key Observations

1. **Challenge I**: Highlights the disconnect between a correct final answer and correct reasoning.

2. **AutoRace**: Emphasizes structured evaluation criteria (accuracy, logic, relevance).

3. **Challenge II**: Shows diversity in reasoning methodologies (search, planning, self-evaluation).

4. **LLM Reasoners**: Proposes a unified framework for action-sequence optimization.

---

### Interpretation

- **Challenge I** underscores that answer accuracy alone can hide factual errors in the reasoning chain.

- **AutoRace** provides a systematic way to evaluate reasoning chains, critical for debugging AI systems.

- **Challenge II** reflects the complexity of AI reasoning, requiring diverse approaches (e.g., MCTS for strategic tasks).

- **LLM Reasoners** formalizes reasoning as an optimization problem, aligning with reinforcement learning principles.

- **Notable Insight**: The image stresses that correctness alone is insufficient; reasoning quality must be rigorously evaluated.
