## Diagram: LLM Math Problem-Solving Pipeline with Tool Integration

### Overview

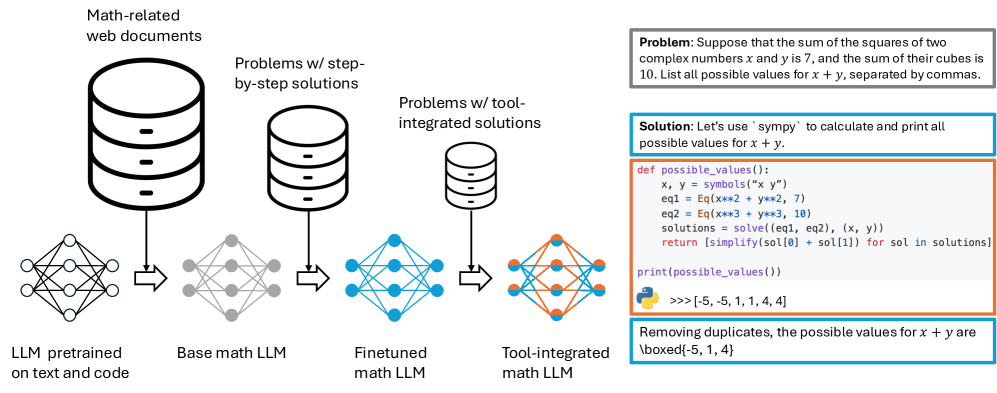

The image is a technical diagram illustrating a four-stage pipeline for developing a Large Language Model (LLM) capable of solving complex mathematical problems. The pipeline progresses from a general-purpose model to a specialized, tool-integrated system. A concrete example of a math problem and its solution using the final model is provided on the right side.

### Components/Axes

The diagram is divided into two main sections: a **Pipeline Flowchart** on the left and a **Problem-Solution Example** on the right.

**Pipeline Flowchart (Left Side):**

* **Data Sources (Top Row):** Three cylindrical database icons represent the training data sources.

    1. **Leftmost Database:** Labeled "Math-related web documents".

    2. **Middle Database:** Labeled "Problems w/ step-by-step solutions".

    3. **Rightmost Database:** Labeled "Problems w/ tool-integrated solutions".

* **Model Stages (Bottom Row):** Four neural network diagrams represent the evolving LLM.

    1. **Stage 1 (Far Left):** A gray neural network labeled "LLM pretrained on text and code". An arrow points from this model to the next.

    2. **Stage 2:** A gray neural network labeled "Base math LLM". An arrow points from the "Math-related web documents" database to this model. An arrow also points from this model to the next.

    3. **Stage 3:** A blue neural network labeled "Finetuned math LLM". An arrow points from the "Problems w/ step-by-step solutions" database to this model. An arrow also points from this model to the next.

    4. **Stage 4 (Far Right):** An orange neural network labeled "Tool-integrated math LLM". An arrow points from the "Problems w/ tool-integrated solutions" database to this model.

* **Flow Arrows:** Black arrows indicate the flow of data and model progression from left to right.

**Problem-Solution Example (Right Side):**

* **Problem Box (Top):** A gray-bordered box containing a mathematical problem statement.

* **Solution Box (Middle):** A blue-bordered box containing a Python code solution.

* **Output/Result Box (Bottom):** A green-bordered box containing the final answer.

### Detailed Analysis

**Pipeline Flow:**

1. The process begins with a general-purpose "LLM pretrained on text and code."

2. This model is trained on "Math-related web documents" to become a "Base math LLM."

3. The base math model is then finetuned on "Problems w/ step-by-step solutions" to create a "Finetuned math LLM."

4. Finally, this finetuned model is further trained on "Problems w/ tool-integrated solutions" to produce the final "Tool-integrated math LLM."
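The four steps above can be summarized as an ordered list of (model, data source) pairs. The following is only an illustrative sketch: the `Stage` class, the `PIPELINE` list, and the `describe` helper are hypothetical names introduced here, and the strings are the labels read off the diagram. No actual training is performed.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class Stage:
    """One model in the diagram (hypothetical structure, for illustration only)."""
    model: str            # label under the neural network icon
    data: Optional[str]   # label on the database feeding this stage, if any


# Labels copied from the diagram; the first stage has no database attached.
PIPELINE: List[Stage] = [
    Stage("LLM pretrained on text and code", None),
    Stage("Base math LLM", "Math-related web documents"),
    Stage("Finetuned math LLM", "Problems w/ step-by-step solutions"),
    Stage("Tool-integrated math LLM", "Problems w/ tool-integrated solutions"),
]


def describe(pipeline: List[Stage]) -> List[str]:
    """Return one line per transition: previous model + data -> next model."""
    return [
        f"{prev.model} + [{nxt.data}] -> {nxt.model}"
        for prev, nxt in zip(pipeline, pipeline[1:])
    ]


for line in describe(PIPELINE):
    print(line)
```

Four stages yield three transitions, one per database in the top row.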

**Problem-Solution Example Content:**

* **Problem Statement:** "Suppose that the sum of the squares of two complex numbers x and y is 7, and the sum of their cubes is 10. List all possible values for x + y, separated by commas."

* **Solution Code (Python using `sympy`):**

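```python
# sympy names used below
from sympy import symbols, Eq, solve, simplify

def possible_values():
    x, y = symbols("x y")
    eq1 = Eq(x**2 + y**2, 7)   # sum of squares is 7
    eq2 = Eq(x**3 + y**3, 10)  # sum of cubes is 10
    solutions = solve([eq1, eq2], (x, y))
    # Each solution is an (x, y) pair; report its sum.
    return [simplify(sol[0] + sol[1]) for sol in solutions]

print(possible_values())
```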

* **Code Output:** `>>>[-5, -5, 1, 4, 4]`

* **Final Answer:** "Removing duplicates, the possible values for x + y are \boxed{-5, 1, 4}"
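The boxed answer can be checked by hand. The derivation below is not part of the diagram; it introduces the auxiliary symbols $s = x + y$ and $p = xy$ (assumptions made here for the check) and uses the standard identities for sums of squares and cubes.

```latex
\begin{aligned}
x^2 + y^2 &= s^2 - 2p = 7
  \;\Rightarrow\; p = \tfrac{s^2 - 7}{2}, \\
x^3 + y^3 &= s^3 - 3sp = 10
  \;\Rightarrow\; s^3 - \tfrac{3s\,(s^2 - 7)}{2} = 10, \\
&\Rightarrow\; s^3 - 21s + 20 = 0
  \;\Rightarrow\; (s - 1)(s - 4)(s + 5) = 0 .
\end{aligned}
```

The roots $s \in \{-5, 1, 4\}$ agree with the boxed answer. Swapping $x$ and $y$ leaves the sum unchanged, which is one reason a solver that enumerates $(x, y)$ pairs reports repeated sums.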

### Key Observations

1. **Progressive Specialization:** The pipeline shows a clear progression from general language understanding to specialized mathematical reasoning, culminating in the ability to use external computational tools (like the `sympy` library).

2. **Data Dependency:** Every stage after the first is explicitly linked to its own data source, which suggests that the nature of the training data is what drives each step of specialization. The initial pretrained LLM has no database attached in the diagram.

3. **Tool Integration as Final Step:** The use of external tools (symbolic math solvers) is presented as the final, advanced capability, enabling the model to solve problems that require precise symbolic computation beyond pure textual reasoning.

4. **Example Illustration:** The problem-solution box gives a concrete illustration of what the "Tool-integrated math LLM" can do. It shows the model generating executable code to solve a non-trivial problem involving complex numbers and polynomial equations. A single example illustrates the capability rather than measuring it.
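Observations 3 and 4 describe a generate-then-execute loop. The diagram does not say how execution or answer formatting is implemented, so the following is only a minimal sketch under stated assumptions: `run_generated_code` and `format_final_answer` are hypothetical helpers, and the "generated" program is a stand-in that simply prints the list shown in the figure so the sketch runs without `sympy`.

```python
import ast
import contextlib
import io


def run_generated_code(source: str) -> str:
    """Execute model-generated Python and return whatever it printed.

    Hypothetical helper. exec() on untrusted code is unsafe; a real system
    would need a sandbox, timeouts, and resource limits.
    """
    buffer = io.StringIO()
    with contextlib.redirect_stdout(buffer):
        exec(source, {"__name__": "__generated__"})
    return buffer.getvalue().strip()


def format_final_answer(printed: str) -> str:
    """Parse a printed list of values, drop duplicates, and box the result."""
    values = ast.literal_eval(printed)   # safe parsing of a Python literal
    unique = sorted(set(values))         # "Removing duplicates"
    return "\\boxed{" + ", ".join(str(v) for v in unique) + "}"


# Stand-in for model output (assumption): prints the list from the figure.
generated = "print([-5, -5, 1, 4, 4])"

tool_output = run_generated_code(generated)
print(tool_output)                       # [-5, -5, 1, 4, 4]
print(format_final_answer(tool_output))  # \boxed{-5, 1, 4}
```

Separating execution from answer formatting mirrors the figure, where the raw code output and the deduplicated final answer appear as distinct steps.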

### Interpretation

This diagram outlines a methodology for creating a specialized AI system for advanced mathematics. It argues that solving complex math problems requires more than just training on mathematical text; it requires a staged approach that builds from general knowledge to specific problem-solving formats, and finally to the integration of external computational tools.

The pipeline can loosely be read through a **Peircean investigative** lens:

* **Abduction:** The initial pretrained LLM forms a broad hypothesis about language and code structure.

* **Deduction:** The finetuning stages apply logical rules (mathematical steps, tool usage) to narrow down the model's function.

* **Induction:** The final tool-integrated model tests its hypotheses by generating and executing code, using the concrete results (like the output `[-5, -5, 1, 4, 4]`) to confirm the solution.

The inclusion of the `sympy` example is critical. It shows the system moving from *representing* math problems in text to *actively solving* them through code generation and execution: a shift from a model that *knows about* math to one that *does* math. The final answer is wrapped in `\boxed{}`, the LaTeX command conventionally used to mark a final answer in mathematical write-ups, which signals that the system returns a single, clearly delimited result. Taken together, the diagram illustrates a pathway toward more reliable and capable AI assistants in STEM fields.