## Process Diagram: Four-Stage Vision-Language Model Pipeline

### Overview

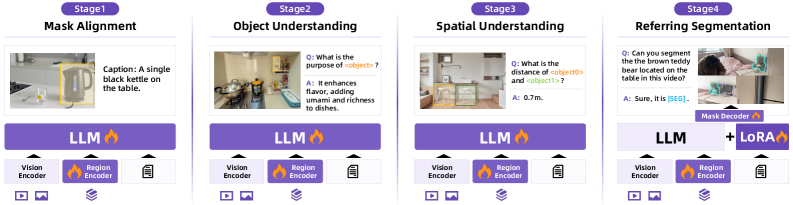

The image is a horizontal, four-stage diagram of a progressive training pipeline for vision-language understanding. Each stage is a labeled panel showing an input image, a caption or question, a model response (Stages 2 to 4 only), and the neural network components involved. The pipeline progresses from captioning through object-level and spatial question answering to segmentation.

### Components

The diagram is structured into four sequential stages, arranged left to right:

1. **Stage 1: Mask Alignment**

* **Input Image:** A kitchen scene with a kettle on a table.

* **Text (Caption):** "Caption: A single black kettle on the table."

* **Model Response:** Not explicitly shown for this stage.

* **Components:** A large purple block labeled "LLM" with a fire icon. Below it are icons for "Vision Encoder" and "Region Encoder," with a document icon to the right.

2. **Stage 2: Object Understanding**

* **Input Image:** A kitchen scene with a person and various objects.

* **Text (Question):** "Q: What is the purpose of <region100>?"

* **Model Response (Answer):** "A: It enhances flavor, adding freshness and richness to dishes."

* **Components:** Identical to Stage 1: "LLM" (with fire icon), "Vision Encoder," "Region Encoder," and a document icon.

3. **Stage 3: Spatial Understanding**

* **Input Image:** A living room scene with furniture.

* **Text (Question):** "Q: What is the distance of <region100> and <region101>?"

* **Model Response (Answer):** "A: 0.7m."

* **Components:** Identical to Stages 1 and 2: "LLM" (with fire icon), "Vision Encoder," "Region Encoder," and a document icon.

4. **Stage 4: Referring Segmentation**

* **Input Image:** A person interacting with a teddy bear on a table. The question refers to "this video," so the panel presumably shows a single video frame.

* **Text (Question):** "Q: Can you segment the brown teddy bear on the table in this video?"

* **Model Response (Answer):** "A: Sure, it is [SEG]."

* **Components:** The "LLM" block is now connected to a "Mask Decoder" block. Below the LLM are the "Vision Encoder" and "Region Encoder." A new "LoRA" block with a fire icon is added to the right of the Mask Decoder. The document icon remains.
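The four panels can be summarized as a small data structure, which makes the component change in Stage 4 easy to check. This is only a sketch of what the diagram shows: the `Stage` class and the `added_components` helper are hypothetical names introduced for illustration, and the prompts and responses are copied from the panels.

```python
from dataclasses import dataclass
from typing import Optional, Tuple


@dataclass(frozen=True)
class Stage:
    number: int
    name: str
    prompt: str
    response: Optional[str]  # None where the diagram shows no response
    components: Tuple[str, ...]


# Components shared by every panel in the diagram.
BASE = ("LLM", "Vision Encoder", "Region Encoder")

STAGES = (
    Stage(1, "Mask Alignment",
          "Caption: A single black kettle on the table.", None, BASE),
    Stage(2, "Object Understanding",
          "Q: What is the purpose of <region100>?",
          "A: It enhances flavor, adding freshness and richness to dishes.",
          BASE),
    Stage(3, "Spatial Understanding",
          "Q: What is the distance of <region100> and <region101>?",
          "A: 0.7m.", BASE),
    Stage(4, "Referring Segmentation",
          "Q: Can you segment the brown teddy bear on the table in this video?",
          "A: Sure, it is [SEG].",
          BASE + ("Mask Decoder", "LoRA")),
)


def added_components(prev: Stage, cur: Stage) -> Tuple[str, ...]:
    """Components present in `cur` but not in `prev`, in diagram order."""
    return tuple(c for c in cur.components if c not in prev.components)


for prev, cur in zip(STAGES, STAGES[1:]):
    print(cur.number, cur.name, added_components(prev, cur))
```

Only the transition from Stage 3 to Stage 4 reports new components, matching the panel descriptions above.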

### Detailed Analysis

The diagram presents a staged progression in which each stage builds on the capabilities of the previous one.

* **Stage 1 (Mask Alignment):** The task shown is captioning. The caption identifies a primary object ("black kettle") and its spatial context ("on the table"). The stage name suggests that such captions are used to align region (mask) features with the language model, although the diagram does not state this explicitly. The core components are a Large Language Model (LLM), a Vision Encoder for processing the image, and a Region Encoder for handling specific image regions.

* **Stage 2 (Object Understanding):** The task advances to visual question answering (VQA) about object function. The model references a specific region (`<region100>`) and provides a detailed, knowledge-based answer about its purpose ("enhances flavor..."). The same core component set (LLM, Vision Encoder, Region Encoder) is used.

* **Stage 3 (Spatial Understanding):** The task involves spatial reasoning between two objects. The model must understand the relationship between `<region100>` and `<region101>` and quantify their distance ("0.7m"). The component architecture remains consistent.

* **Stage 4 (Referring Segmentation):** The most complex task requires a pixel-level segmentation mask for an object described in text ("brown teddy bear"). The text answer contains a special `[SEG]` token, which stands in for the mask; the mask itself is presumably produced by the **Mask Decoder** that the LLM now connects to. A **LoRA** (Low-Rank Adaptation) module is introduced, suggesting parameter-efficient fine-tuning for this specific task.
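The diagram does not show how the `[SEG]` token becomes a mask. One common design in referring-segmentation models is to take the LLM's hidden state at the `[SEG]` position and pass it to the mask decoder as a query. The sketch below assumes that design; `seg_embeddings`, `toy_mask_decoder`, and all numeric values are hypothetical and stand in for real model components.

```python
from typing import List, Sequence

SEG_TOKEN = "[SEG]"


def seg_embeddings(tokens: Sequence[str],
                   hidden_states: Sequence[Sequence[float]]) -> List[Sequence[float]]:
    """Return the hidden state at every [SEG] position in the output."""
    if len(tokens) != len(hidden_states):
        raise ValueError("need exactly one hidden state per token")
    return [h for t, h in zip(tokens, hidden_states) if t == SEG_TOKEN]


def toy_mask_decoder(seg_vec: Sequence[float],
                     pixel_features: Sequence[Sequence[Sequence[float]]]) -> List[List[int]]:
    """Toy stand-in for a mask decoder (assumption, not the real module).

    A pixel is marked foreground (1) when the dot product between its
    feature vector and the [SEG] embedding is positive.
    """
    return [
        [1 if sum(a * b for a, b in zip(seg_vec, px)) > 0 else 0 for px in row]
        for row in pixel_features
    ]


# Tokenized answer from the Stage 4 panel, with made-up 2-d hidden states.
tokens = ["Sure", ",", "it", "is", "[SEG]", "."]
hidden = [[0.0, 0.0]] * 4 + [[1.0, -1.0]] + [[0.0, 0.0]]

# Made-up 2x2 grid of per-pixel features from a vision encoder.
pixels = [
    [[2.0, 0.5], [0.1, 0.9]],
    [[0.2, 0.6], [1.0, 0.0]],
]

(seg,) = seg_embeddings(tokens, hidden)
mask = toy_mask_decoder(seg, pixels)
print(mask)
```

The point of the design is that the text answer stays ordinary text, while the mask is derived from the internal representation of one reserved token.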

### Key Observations

1. **Progressive Complexity:** The pipeline demonstrates a clear escalation in task difficulty: from description (Stage 1), to functional reasoning (Stage 2), to spatial quantification (Stage 3), and finally to precise pixel-level localization and segmentation (Stage 4).

2. **Architectural Evolution:** The core model (LLM + Vision Encoder + Region Encoder) is unchanged across the first three stages. The architecture changes only for the segmentation task (Stage 4), which adds a Mask Decoder and a LoRA adaptation module.

3. **Unified Interface:** All stages use a consistent visual language: a purple "LLM" block and standardized icons for the encoders. The fire icon on the LLM most likely marks it as trainable, following the common convention in model diagrams. The input/output format is consistent for Stages 2 to 4 (image + text question → text answer), while Stage 1 shows only an image and its caption.

4. **Region-Based Reasoning:** Stages 2 and 3 explicitly use region tokens (`<region100>`, `<region101>`), indicating the model's ability to ground its reasoning in specific, localized parts of the image.
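How region tokens are bound to image regions is not specified in the diagram. A minimal sketch, assuming each `<regionN>` placeholder is replaced by a feature produced by the Region Encoder for region `N`, might look like the following. The `region_ids` and `bind_regions` helpers and the string "features" are hypothetical.

```python
import re
from typing import Dict, List, Tuple

# Matches placeholders such as <region100>, as seen in Stages 2 and 3.
REGION_TOKEN = re.compile(r"<region(\d+)>")


def region_ids(prompt: str) -> List[int]:
    """Return the region ids referenced in a prompt, in reading order."""
    return [int(m) for m in REGION_TOKEN.findall(prompt)]


def bind_regions(prompt: str,
                 region_features: Dict[int, object]) -> List[Tuple[str, object]]:
    """Split a prompt into ("text", str) and ("region", feature) parts.

    Raises KeyError if the prompt references a region with no feature.
    """
    parts: List[Tuple[str, object]] = []
    pos = 0
    for m in REGION_TOKEN.finditer(prompt):
        if m.start() > pos:
            parts.append(("text", prompt[pos:m.start()]))
        rid = int(m.group(1))
        if rid not in region_features:
            raise KeyError(f"no feature for region {rid}")
        parts.append(("region", region_features[rid]))
        pos = m.end()
    if pos < len(prompt):
        parts.append(("text", prompt[pos:]))
    return parts


question = "Q: What is the distance of <region100> and <region101>?"
features = {100: "feat_100", 101: "feat_101"}  # placeholders for real tensors
print(region_ids(question))
print(bind_regions(question, features))
```

In a real model the `"region"` parts would be embedding vectors interleaved with text token embeddings before being fed to the LLM.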

### Interpretation

This diagram illustrates a multi-stage framework for integrating vision and language. It suggests a research or engineering approach in which a general-purpose LLM is progressively given visual understanding capabilities.

* **The "Fire" Icon:** The fire icon on the LLM and LoRA blocks most likely marks components whose weights are updated during training, following the common convention in model diagrams (where a snowflake often marks frozen components). On that reading, the icon indicates what is trained at each stage and says nothing about computational cost.

* **From Understanding to Action:** The pipeline moves from passive understanding (captioning, QA) to active, generative output (segmentation). Stage 4 represents a shift from answering questions *about* the image to performing a precise, pixel-level *operation* on the image.

* **Modularity and Specialization:** The architecture implies a modular design. The core LLM-vision backbone handles general reasoning, while specialized modules (Mask Decoder, LoRA) are plugged in for specific, demanding tasks like segmentation. This is a common pattern in modern AI to balance capability with efficiency.

* **Underlying Message:** The diagram communicates that achieving human-like visual understanding requires a hierarchy of skills, from basic recognition to complex spatial and functional reasoning, culminating in the ability to precisely manipulate visual data. The consistent component set for the first three stages argues for the versatility of a well-designed vision-language foundation model.
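The LoRA module mentioned under Modularity and Specialization can be illustrated with a small numeric sketch. In LoRA, a frozen weight matrix `W` is left untouched and a trainable low-rank update `B @ A`, scaled by `alpha / r`, is added to its output. The code below uses plain Python lists and made-up values; which layers of the model actually receive LoRA adapters is not shown in the diagram.

```python
from typing import List

Matrix = List[List[float]]
Vector = List[float]


def matvec(m: Matrix, v: Vector) -> Vector:
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(a * b for a, b in zip(row, v)) for row in m]


def lora_forward(W: Matrix, A: Matrix, B: Matrix, x: Vector,
                 alpha: float = 1.0) -> Vector:
    """Compute W x + (alpha / r) * B (A x).

    W: frozen base weights, shape (d_out, d_in)
    A: trainable down-projection, shape (r, d_in)
    B: trainable up-projection, shape (d_out, r)
    """
    r = len(A)  # rank of the update
    base = matvec(W, x)
    delta = matvec(B, matvec(A, x))
    scale = alpha / r
    return [b + scale * d for b, d in zip(base, delta)]


W = [[1.0, 0.0], [0.0, 1.0]]   # frozen weights (identity, for readability)
A = [[1.0, 1.0]]               # rank r = 1
B_init = [[0.0], [0.0]]        # B starts at zero, so the update starts at zero
B_tuned = [[0.5], [-0.5]]      # hypothetical values after fine-tuning
x = [2.0, 3.0]

print(lora_forward(W, A, B_init, x))   # identical to the base model's output
print(lora_forward(W, A, B_tuned, x))  # output shifted by the low-rank update
```

Because only `A` and `B` are trained, the number of trainable parameters is `r * (d_in + d_out)` instead of `d_in * d_out`, which is what makes this form of fine-tuning parameter-efficient.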