## Diagram: Synchronization Modes in a Machine Learning Training Pipeline

### Overview

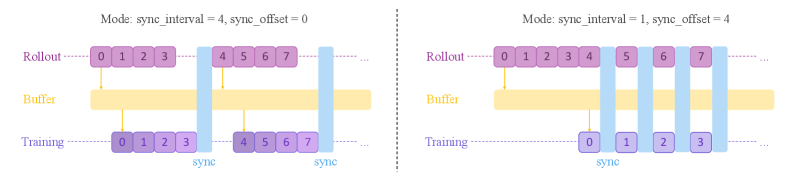

The image shows two side-by-side diagrams of how a "Rollout" process, a shared "Buffer," and a "Training" process are synchronized. Each diagram traces the flow of data batches under a different setting of `sync_interval` and `sync_offset`.

### Components/Axes

The diagrams are structured with three horizontal layers, from top to bottom:

1. **Rollout**: Represented by a sequence of purple rectangular boxes containing numbers (0, 1, 2, 3, etc.). This likely represents the generation of data batches or experiences.

2. **Buffer**: A continuous, horizontal yellow bar. This represents a shared memory or storage area where rollout data is held.

3. **Training**: Represented by another sequence of purple rectangular boxes, mirroring the Rollout layer. This represents the consumption of data batches for model training.

Vertical light blue bars labeled **"sync"** connect the layers, indicating synchronization points where data is transferred or consistency is enforced.

**Text Labels:**

* **Left Diagram Title:** `Mode: sync_interval = 4, sync_offset = 0`

* **Right Diagram Title:** `Mode: sync_interval = 1, sync_offset = 4`

* **Layer Labels (Left Side):** `Rollout`, `Buffer`, `Training` (written in a purple font).

* **Synchronization Label:** `sync` (written in blue at the base of each vertical blue bar).

### Detailed Analysis

The diagrams visualize the temporal relationship and data flow between the three components based on the synchronization parameters.

**Left Diagram (`sync_interval = 4, sync_offset = 0`):**

* **Rollout Sequence:** Generates batches in two visible groups: `0, 1, 2, 3` and then `4, 5, 6, 7`.

* **Training Sequence:** Consumes batches in identical groups: `0, 1, 2, 3` and then `4, 5, 6, 7`.

* **Synchronization Flow:**

    1. Rollout generates batch `0`. An orange arrow points down to the Buffer, indicating that the data is stored.

    2. After Rollout completes batch `3`, a vertical blue "sync" bar appears. A yellow arrow from the Buffer and a blue arrow from the sync bar both point to Training batch `0`, indicating that the first group of data (batches 0-3) is handed from the Buffer to the Training process at the sync point.

    3. The pattern repeats for the next group (batches 4-7).

* **Trend:** Synchronization occurs **once every 4 rollout batches** (`sync_interval=4`). Training starts immediately after the first rollout group is complete (`sync_offset=0`).

**Right Diagram (`sync_interval = 1, sync_offset = 4`):**

* **Rollout Sequence:** Generates batches sequentially: `0, 1, 2, 3, 4, 5, 6, 7`.

* **Training Sequence:** Consumes batches sequentially: `0, 1, 2, 3`.

* **Synchronization Flow:**

    1. Rollout generates batches `0, 1, 2, 3, 4`. Data from each is stored in the Buffer (orange arrows).

    2. **After Rollout completes batch `4`**, the first vertical blue "sync" bar appears. A yellow arrow from the Buffer and a blue arrow from the sync bar both point to Training batch `0`. Training therefore begins only after rollout has produced 5 batches (0 through 4), which establishes the offset.

    3. Subsequent syncs happen after **every single new rollout batch** (`5`, `6`, `7`). Each sync bar connects the latest rollout batch to the next sequential training batch.

* **Trend:** Synchronization occurs **after every single rollout batch** (`sync_interval=1`). Training is **delayed** until the rollout has produced 5 batches (`sync_offset=4`: Training batch `0` starts once Rollout batch `4` is complete).
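
The sync points in both diagrams are consistent with one simple rule, sketched below in Python. The rule is inferred from the figure as described here, not taken from any library's implementation, and the function name `sync_points` is hypothetical: a sync fires after rollout batch `i` whenever `i >= sync_offset` and `(i - sync_offset + 1)` is a multiple of `sync_interval`.

```python
def sync_points(sync_interval, sync_offset, num_rollout_batches):
    """Return the rollout batch indices after which a sync fires.

    Assumed rule (inferred from the figure description, not from a real API):
    a sync fires after batch i when i >= sync_offset and
    (i - sync_offset + 1) is a multiple of sync_interval.
    """
    return [
        i
        for i in range(num_rollout_batches)
        if i >= sync_offset and (i - sync_offset + 1) % sync_interval == 0
    ]


# Left diagram: one sync per group of four batches.
print(sync_points(sync_interval=4, sync_offset=0, num_rollout_batches=8))  # [3, 7]

# Right diagram: first sync after batch 4, then one sync per batch.
print(sync_points(sync_interval=1, sync_offset=4, num_rollout_batches=8))  # [4, 5, 6, 7]
```

Both results match the positions of the blue "sync" bars described above.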

### Key Observations

1. **Parameter Impact:** The `sync_interval` parameter controls how often synchronization occurs: once per group of 4 batches in the left diagram, after every batch in the right. The `sync_offset` parameter controls how far the rollout runs ahead of training before the first sync.

2. **Visual Metaphor:** The Buffer acts as an intermediary queue. The orange arrows show data being enqueued from Rollout. The yellow arrows show data being dequeued for Training, triggered by the blue sync events.

3. **Spatial Layout:** The Rollout and Training layers are aligned vertically to emphasize their sequential correspondence. The sync bars are placed at the temporal point where the handoff occurs.
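
The queue behavior in these observations can be sketched as a small simulation. This is a minimal illustration under assumptions, not the system's actual code: the function name `simulate_buffer` is hypothetical, the sync rule is inferred from the figure description, and each sync is assumed to release exactly `sync_interval` batches from the head of the buffer.

```python
from collections import deque


def simulate_buffer(sync_interval, sync_offset, num_rollout_batches):
    """Simulate Rollout -> Buffer -> Training handoffs (assumed model).

    Returns (events, leftover), where each event is
    (rollout_batch_that_triggered_sync, batches_released_to_training)
    and leftover lists the batches still waiting in the buffer.
    """
    buffer = deque()
    events = []
    for i in range(num_rollout_batches):
        buffer.append(i)  # orange arrow: rollout enqueues batch i
        # Assumed sync rule, inferred from the figure description.
        if i >= sync_offset and (i - sync_offset + 1) % sync_interval == 0:
            # Yellow arrow: training dequeues the oldest batches at the sync.
            released = [buffer.popleft() for _ in range(sync_interval)]
            events.append((i, released))
    return events, list(buffer)


for interval, offset in [(4, 0), (1, 4)]:
    events, leftover = simulate_buffer(interval, offset, 8)
    print(f"sync_interval={interval}, sync_offset={offset}")
    for trigger, released in events:
        print(f"  sync after rollout {trigger}: training gets {released}")
    print(f"  still buffered: {leftover}")
```

With eight rollout batches, the left mode drains the buffer completely at each sync, while the right mode trains on batches 0 through 3 and leaves batches 4 through 7 buffered, which matches the Training row of the right diagram.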

### Interpretation

These diagrams illustrate two fundamental strategies for coordinating data generation (rollout) and model update (training) in reinforcement learning or similar iterative learning systems.

* The **left mode (`interval=4, offset=0`)** represents a **batch-synchronous** approach. It waits until a full group of 4 batches has been collected before a sync hands them to training. This can be computationally efficient but may introduce latency, because training sits idle while the rollout group completes.

* The **right mode (`interval=1, offset=4`)** represents a **more asynchronous, pipelined** approach. Training starts after an initial warm-up period (the offset) and then processes batches continuously, one per sync, as each new rollout batch arrives. This can lead to more frequent model updates and potentially faster adaptation, but at the cost of higher synchronization overhead and the complexity of managing a constantly shifting data window.

The choice between these modes involves a trade-off between computational throughput, update frequency, and system complexity. The diagrams show how `sync_interval` and `sync_offset` govern this trade-off by defining when the rollout and training workers hand off data.
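
To make the trade-off concrete, the sketch below counts syncs and measures how many batches the rollout is ahead of training right after each sync. It is an illustration under assumptions only: `sync_stats` is a hypothetical helper, the sync rule is inferred from the figure description, and each sync is assumed to hand `sync_interval` batches to training.

```python
def sync_stats(sync_interval, sync_offset, num_rollout_batches):
    """Count syncs and the rollout lead over training (assumed model)."""
    syncs = 0
    handed_to_training = 0
    leads = []
    for i in range(num_rollout_batches):
        # Assumed sync rule, inferred from the figure description.
        if i >= sync_offset and (i - sync_offset + 1) % sync_interval == 0:
            syncs += 1
            handed_to_training += sync_interval
            # Batches produced so far minus batches handed to training.
            leads.append((i + 1) - handed_to_training)
    return {"syncs": syncs, "max_lead_after_sync": max(leads, default=0)}


print(sync_stats(sync_interval=4, sync_offset=0, num_rollout_batches=8))
# {'syncs': 2, 'max_lead_after_sync': 0}

print(sync_stats(sync_interval=1, sync_offset=4, num_rollout_batches=8))
# {'syncs': 4, 'max_lead_after_sync': 4}
```

Under this model, the right mode syncs twice as often over the same eight batches and keeps the rollout a constant four batches ahead, whereas the left mode syncs less often and leaves no backlog after each sync.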