## Bar Charts: Model Accuracy Comparison

### Overview

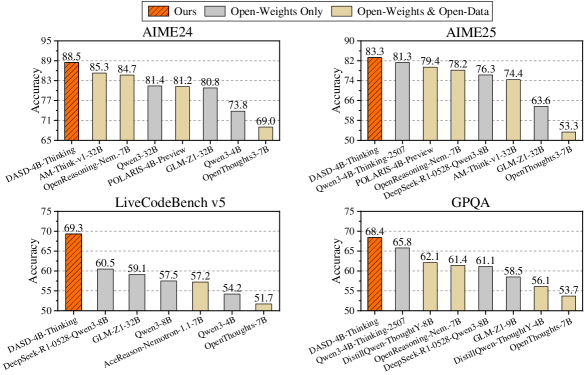

The image contains four bar charts comparing the accuracy of different language models on four different benchmarks: AIME24, AIME25, LiveCodeBench v5, and GPQA. The models are evaluated under three conditions: "Ours" (orange), "Open-Weights Only" (gray), and "Open-Weights & Open-Data" (beige).

### Components/Axes

* **Legend:** Located at the top of the image.

* Orange: "Ours"

* Gray: "Open-Weights Only"

* Beige: "Open-Weights & Open-Data"

* **Y-axis:** "Accuracy" ranging from 65 to 95 for AIME24, 50 to 90 for AIME25, 50 to 75 for LiveCodeBench v5, and 50 to 75 for GPQA.

* **X-axis:** Different language models, varying for each benchmark.

### Detailed Analysis

#### AIME24

* **Trend:** The "Ours" model (DASD-4B-Thinking) has the highest accuracy, followed by "Open-Weights & Open-Data" models, and then "Open-Weights Only" models.

* **Data Points:**

* DASD-4B-Thinking (Ours): 88.5

* AM-Think-v1-32B (Open-Weights & Open-Data): 85.3

* OpenReasoning-Nem.-7B (Open-Weights & Open-Data): 84.7

* POLARIS-4B-Preview (Open-Weights Only): 81.4

* GLM-Z1-32B (Open-Weights Only): 81.2

* Qwen3-32B (Open-Weights Only): 80.8

* Qwen3-4B (Open-Weights Only): 73.8

* OpenThoughts3-7B (Open-Weights & Open-Data): 69.0

#### AIME25

* **Trend:** Similar to AIME24, "Ours" performs best, followed by "Open-Weights & Open-Data", and then "Open-Weights Only".

* **Data Points:**

* DASD-4B-Thinking (Ours): 83.3

* Qwen3-4B-Thinking-2507 (Open-Weights Only): 81.3

* POLARIS-4B-Preview (Open-Weights & Open-Data): 79.4

* OpenReasoning-Nem.-7B (Open-Weights & Open-Data): 78.2

* AM-Think-v1-32B (Open-Weights Only): 76.3

* GLM-Z1-32B (Open-Weights & Open-Data): 74.4

* OpenThoughts3-7B (Open-Weights Only): 53.3

* Qwen3-8B (Open-Weights Only): 63.6

#### LiveCodeBench v5

* **Trend:** "Ours" significantly outperforms the other models. "Open-Weights & Open-Data" models generally perform better than "Open-Weights Only".

* **Data Points:**

* DASD-4B-Thinking (Ours): 69.3

* DeepSeek-R1-0528-Qwen3-8B (Open-Weights Only): 60.5

* GLM-Z1-32B (Open-Weights Only): 59.1

* Qwen3-8B (Open-Weights Only): 57.5

* AceReason-Nemotron-1.1-7B (Open-Weights & Open-Data): 57.2

* Qwen3-4B (Open-Weights Only): 54.2

* OpenThoughts-7B (Open-Weights Only): 51.7

#### GPQA

* **Trend:** "Ours" performs best. The performance of "Open-Weights Only" and "Open-Weights & Open-Data" models is relatively close, with some variation.

* **Data Points:**

* DASD-4B-Thinking (Ours): 68.4

* Qwen3-4B-Thinking-2507 (Open-Weights Only): 65.8

* OpenReasoning-Nem.-7B (Open-Weights & Open-Data): 62.1

* DistillQwen-ThoughtY-8B (Open-Weights & Open-Data): 61.4

* DeepSeek-R1-0528-Qwen3-8B (Open-Weights & Open-Data): 61.1

* GLM-Z1-9B (Open-Weights Only): 58.5

* DistillQwen-ThoughtY-4B (Open-Weights & Open-Data): 56.1

* OpenThoughts-7B (Open-Weights Only): 53.7

### Key Observations

* The "Ours" model (DASD-4B-Thinking) consistently achieves the highest accuracy across all four benchmarks.

* Using "Open-Weights & Open-Data" generally improves performance compared to using "Open-Weights Only".

* The performance difference between models varies across different benchmarks.

### Interpretation

The data suggests that the "Ours" model (DASD-4B-Thinking) is superior to the other models tested across these four benchmarks. The use of both open weights and open data generally leads to better performance than using open weights alone, indicating the importance of data in training these language models. The varying performance differences across benchmarks highlight the different strengths and weaknesses of each model on different types of tasks. The "Ours" model likely benefits from a combination of architectural choices, training data, and training methodology that makes it particularly effective on these benchmarks.