\n

## Bar Charts: Model Performance Comparison on Various Benchmarks

### Overview

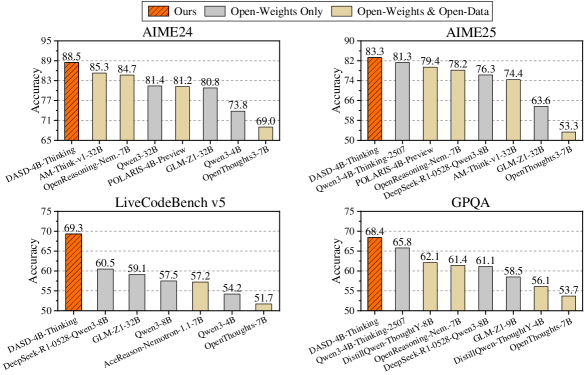

The image presents four bar charts comparing the performance of different language models across four benchmarks: AIME24, AIME25, LiveCodeBench v5, and GPQA. The performance metric is "Accuracy". Three model configurations are compared: "Ours" (orange bars), "Open-Weights Only" (dark grey bars), and "Open-Weights & Open-Data" (light grey bars).

### Components/Axes

* **Y-axis (all charts):** Accuracy, ranging from approximately 50 to 95. The axis is labeled "Accuracy".

* **X-axis (all charts):** Model names. The specific models vary per chart.

* **Legend (top-left of AIME24 chart):**

* "Ours" - Orange

* "Open-Weights Only" - Dark Grey

* "Open-Weights & Open-Data" - Light Grey

* **Chart Titles:** AIME24, AIME25, LiveCodeBench v5, GPQA. These are positioned above each respective chart.

* **Gridlines:** Horizontal gridlines are present in all charts to aid in reading values.

### Detailed Analysis or Content Details

**AIME24:**

* **Trend:** "Ours" consistently outperforms "Open-Weights Only" and "Open-Weights & Open-Data". "Open-Weights & Open-Data" generally performs better than "Open-Weights Only".

* **Data Points:**

* DASD-4B-Thinking: Ours = 89, Open-Weights Only = 83, Open-Weights & Open-Data = 78

* AM-Thak v32B: Ours = 85.5, Open-Weights Only = 82, Open-Weights & Open-Data = 76

* OpenReasoning-Nem-7B: Ours = 84.7, Open-Weights Only = 81, Open-Weights & Open-Data = 74

* POLARIS-4B-Preview: Ours = 81.4, Open-Weights Only = 78, Open-Weights & Open-Data = 72

* GLM-21-32B: Ours = 81.2, Open-Weights Only = 77, Open-Weights & Open-Data = 71

* OpenThoughts-7B: Ours = 73.8, Open-Weights Only = 69, Open-Weights & Open-Data = 65

**AIME25:**

* **Trend:** Similar to AIME24, "Ours" is the best performer, followed by "Open-Weights & Open-Data", and then "Open-Weights Only".

* **Data Points:**

* DASD-4B-Thinking-2507: Ours = 83.3, Open-Weights Only = 79.4, Open-Weights & Open-Data = 74

* Owen3-4B-Thinking-2507: Ours = 78.2, Open-Weights Only = 76.3, Open-Weights & Open-Data = 69

* DeepSeek-R1-0528-Thinking: Ours = 76.3, Open-Weights Only = 74.4, Open-Weights & Open-Data = 68

* POLARIS-4B-Preview: Ours = 76.3, Open-Weights Only = 63.6, Open-Weights & Open-Data = 53.3

* AM-Thak v32B: Ours = 74.4, Open-Weights Only = 63.6, Open-Weights & Open-Data = 53.3

* OpenThoughts-7B: Ours = 63.6, Open-Weights Only = 53.3, Open-Weights & Open-Data = 53.3

**LiveCodeBench v5:**

* **Trend:** "Ours" significantly outperforms the other two configurations. "Open-Weights & Open-Data" is slightly better than "Open-Weights Only".

* **Data Points:**

* DASD-4B-Thinking: Ours = 69.3, Open-Weights Only = 60.5, Open-Weights & Open-Data = 59.1

* DeepSeek-R1-0528-Thinking: Ours = 59.1, Open-Weights Only = 57.5, Open-Weights & Open-Data = 57.2

* GLM-21-32B: Ours = 57.5, Open-Weights Only = 57.2, Open-Weights & Open-Data = 54.2

* AceReason-Nemotron-1.1-7B: Ours = 57.2, Open-Weights Only = 54.2, Open-Weights & Open-Data = 51.7

* OpenThoughts-7B: Ours = 54.2, Open-Weights Only = 51.7, Open-Weights & Open-Data = 51.7

**GPQA:**

* **Trend:** "Ours" is the best performer, followed by "Open-Weights Only", and then "Open-Weights & Open-Data".

* **Data Points:**

* DASD-4B-Thinking-2507: Ours = 68.4, Open-Weights Only = 65.8, Open-Weights & Open-Data = 62.1

* Owen3-4B-Thinking-Y4B: Ours = 65.8, Open-Weights Only = 61.4, Open-Weights & Open-Data = 58.6

* DeepSeek-R1-0528-Thinking: Ours = 61.4, Open-Weights Only = 61.1, Open-Weights & Open-Data = 56.1

* Distill-Thinking-Y4B: Ours = 61.1, Open-Weights Only = 58.6, Open-Weights & Open-Data = 53.7

* OpenReasoning-Nem-7B: Ours = 61.1, Open-Weights Only = 58.6, Open-Weights & Open-Data = 53.7

* Distill-GLM-9B: Ours = 58.6, Open-Weights Only = 53.7, Open-Weights & Open-Data = 53.7

* OpenThoughts-7B: Ours = 56.1, Open-Weights Only = 53.7, Open-Weights & Open-Data = 53.7

### Key Observations

* "Ours" consistently achieves the highest accuracy across all benchmarks and models.

* Adding open data ("Open-Weights & Open-Data") generally improves performance compared to using only open weights ("Open-Weights Only"), but the improvement is not always substantial.

* The performance differences between models vary depending on the benchmark.

* The gap between "Ours" and the other configurations is most pronounced in the LiveCodeBench v5 benchmark.

### Interpretation

The data suggests that the "Ours" model configuration represents a significant improvement over using only open weights or open weights combined with open data. This could be due to a variety of factors, such as a more effective training methodology, a larger dataset, or a more sophisticated model architecture. The consistent performance gains across different benchmarks indicate that the benefits of "Ours" are not specific to any particular task. The addition of open data generally improves performance, suggesting that incorporating external knowledge can be beneficial, but the extent of the improvement varies. The large performance gap on LiveCodeBench v5 might indicate that this benchmark is particularly sensitive to the specific capabilities of the "Ours" model. The consistent performance of OpenThoughts-7B at the lower end of the spectrum across all benchmarks suggests it may be a less capable model compared to the others tested. The data provides a quantitative comparison of different model configurations, allowing for informed decisions about which models to use for specific tasks.