## Bar Chart Comparison: Model Accuracy Across Four Benchmarks

### Overview

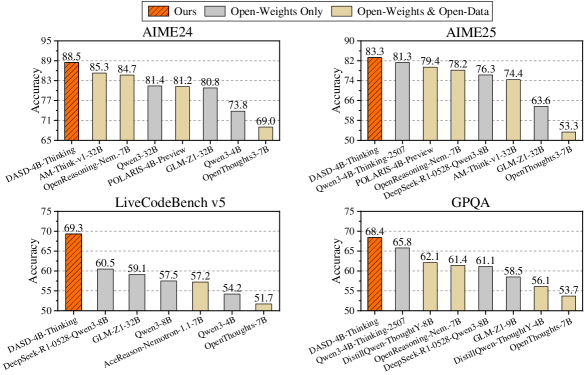

The image displays a 2x2 grid of four bar charts, each comparing the accuracy performance of various AI models on a specific benchmark. The charts share a common legend and visual style, using grouped bars to represent three distinct model categories. The overall purpose is to benchmark the performance of a model labeled "Ours" against other models, categorized by their training data openness.

### Components/Axes

* **Common Legend (Top Center):** Positioned above the charts, it defines three categories:

* **Ours:** Represented by orange bars.

* **Open-Weights Only:** Represented by gray bars.

* **Open-Weights & Open-Data:** Represented by beige/light tan bars.

* **Chart Structure:** Each of the four charts has:

* **Y-axis:** Labeled "Accuracy". The scale varies per chart (e.g., 65-95 for AIME24, 50-90 for AIME25).

* **X-axis:** Lists the names of specific AI models being compared.

* **Bars:** Grouped bars for each model, colored according to the legend. The exact accuracy value is printed atop each bar.

### Detailed Analysis

**1. Top-Left Chart: AIME24**

* **Y-axis Scale:** 65 to 95.

* **Models & Data Points (from left to right):**

* `DASD-4B-ThinkIng` (Orange - "Ours"): **88.5**

* `AIM-Thinking-v1-32B` (Gray - "Open-Weights Only"): **85.3**

* `OpenReasoning-Nem...-7B` (Beige - "Open-Weights & Open-Data"): **84.7**

* `Qwen3-32B` (Gray): **81.4**

* `POLARIS-4B-Preview` (Gray): **81.2**

* `GLM-Z1-32B` (Gray): **80.8**

* `Qwen3-4B` (Gray): **73.8**

* `OpenThoughts-3-7B` (Beige): **69.0**

* **Trend:** The "Ours" model (`DASD-4B-ThinkIng`) achieves the highest accuracy. Performance generally decreases moving right, with the "Open-Weights & Open-Data" models (`OpenReasoning-Nem...-7B`, `OpenThoughts-3-7B`) showing a wider spread in results.

**2. Top-Right Chart: AIME25**

* **Y-axis Scale:** 50 to 90.

* **Models & Data Points (from left to right):**

* `DASD-4B-ThinkIng` (Orange - "Ours"): **83.3**

* `Qwen3-4B-Thinking` (Gray): **81.3**

* `POLARIS-4B-Preview` (Gray): **79.4**

* `DeepSeek-R1-0528-Qwen3-8B` (Beige): **78.2**

* `AIM-Thinking-v1-32B` (Gray): **76.3**

* `GLM-Z1-32B` (Gray): **74.4**

* `Qwen3-32B` (Gray): **63.6**

* `OpenThoughts-3-7B` (Beige): **53.3**

* **Trend:** Similar to AIME24, the "Ours" model leads. There is a significant drop-off for the last two models (`Qwen3-32B`, `OpenThoughts-3-7B`), with the latter showing the lowest score across all charts.

**3. Bottom-Left Chart: LiveCodeBench v5**

* **Y-axis Scale:** 50 to 75.

* **Models & Data Points (from left to right):**

* `DASD-4B-ThinkIng` (Orange - "Ours"): **69.3**

* `DeepSeek-R1-0528-Qwen3-8B` (Beige): **60.5**

* `GLM-Z1-32B` (Gray): **59.1**

* `Qwen3-32B` (Gray): **57.5**

* `AceReason-Nemotron-1.1-7B` (Beige): **57.2**

* `Qwen3-4B` (Gray): **54.2**

* `OpenThoughts-3-7B` (Beige): **51.7**

* **Trend:** The "Ours" model has a substantial lead of nearly 9 percentage points over the next best model. The performance gradient is more gradual here compared to the AIME charts.

**4. Bottom-Right Chart: GPQA**

* **Y-axis Scale:** 50 to 75.

* **Models & Data Points (from left to right):**

* `DASD-4B-ThinkIng` (Orange - "Ours"): **68.4**

* `Qwen3-4B-Thinking` (Gray): **65.8**

* `Doubao-1.5-thinking-pro` (Gray): **62.1**

* `OpenReasoning-Nem...-7B` (Beige): **61.4**

* `DeepSeek-R1-0528-Qwen3-8B` (Beige): **61.1**

* `GLM-Z1-9B` (Gray): **58.5**

* `Doubao-1.5-thinking-lite` (Gray): **56.1**

* `OpenThoughts-3-7B` (Beige): **53.7**

* **Trend:** The "Ours" model again leads, but the margin is smaller (~2.6 points) compared to the second-place `Qwen3-4B-Thinking`. The chart shows a relatively steady decline in accuracy across the listed models.

### Key Observations

1. **Consistent Leader:** The model labeled "Ours" (`DASD-4B-ThinkIng`) is the top performer on all four benchmarks presented.

2. **Category Performance:** Models in the "Open-Weights Only" (gray) category are the most numerous and occupy a wide range of positions, from near the top to the bottom. The "Open-Weights & Open-Data" (beige) models are fewer and often appear in the lower half of the rankings, with `OpenThoughts-3-7B` consistently scoring the lowest in each chart it appears in.

3. **Benchmark Variability:** The absolute accuracy scores and the performance gaps between models vary significantly across benchmarks. For example, the lead of "Ours" is most pronounced in LiveCodeBench v5 and smallest in GPQA.

4. **Model Name Consistency:** Some models appear across multiple benchmarks (e.g., `DASD-4B-ThinkIng`, `GLM-Z1-32B`, `Qwen3-32B`, `OpenThoughts-3-7B`), allowing for cross-benchmark performance tracking.

### Interpretation

This set of charts serves as a comparative performance report, likely from the creators of the `DASD-4B-ThinkIng` model. The data suggests that their model achieves state-of-the-art or leading accuracy across diverse reasoning and knowledge benchmarks (AIME math, coding, graduate-level QA) when compared to a selection of other open-weight models.

The grouping by data openness ("Open-Weights Only" vs. "Open-Weights & Open-Data") implies an investigation into whether access to training data correlates with benchmark performance. The results here do not show a clear advantage for models with open data; in fact, the top-performing "Ours" model is categorized separately, and the open-data models often trail behind. This could indicate that model architecture, training methodology, or data quality (not just openness) are more critical factors for these specific tasks.

The consistent placement of `OpenThoughts-3-7B` at the bottom might suggest it is a smaller or less optimized model within this comparison group. The variability in the performance gap (e.g., large in LiveCodeBench, smaller in GPQA) highlights that model strengths are benchmark-dependent, and no single model dominates by an identical margin across all domains. The charts effectively argue for the competitive performance of the "Ours" model within the open-weights landscape as of the data's collection date.