## Bar Chart: Model Performance Across Datasets

### Overview

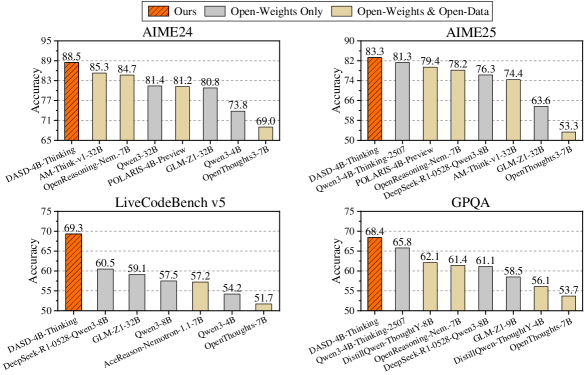

The image is a grouped bar chart comparing the accuracy of various AI models across four datasets: AIME24, AIME25, LiveCodeBench v5, and GPQA. Each dataset is represented as a separate sub-chart, with models listed on the x-axis and accuracy percentages on the y-axis. Three categories are compared: "Ours" (orange), "Open-Weights Only" (gray), and "Open-Weights & Open-Data" (beige). The chart highlights performance differences between proprietary and open-source models.

### Components/Axes

- **X-axis**: Model names (e.g., DASD-4B-Thinking, Qwen3-4B-Preview, OpenThoughts3-7B).

- **Y-axis**: Accuracy percentages (ranging from 50% to 95%).

- **Legend**: Located at the top, with three color-coded categories:

- **Orange**: "Ours" (proprietary model).

- **Gray**: "Open-Weights Only" (open-source models without open data).

- **Beige**: "Open-Weights & Open-Data" (open-source models with open data).

- **Sub-charts**: Four datasets (AIME24, AIME25, LiveCodeBench v5, GPQA) are displayed as separate grouped bar charts.

### Detailed Analysis

#### AIME24

- **Models and Accuracy**:

- DASD-4B-Thinking: 88.5 (Ours), 85.3 (Open-Weights Only), 84.7 (Open-Weights & Open-Data).

- AM-Think-v1-32B: 85.3 (Ours), 84.7 (Open-Weights Only), 81.4 (Open-Weights & Open-Data).

- OpenReasoning-Nem-7B: 84.7 (Ours), 81.4 (Open-Weights Only), 81.2 (Open-Weights & Open-Data).

- Qwen3-32B: 81.4 (Ours), 81.2 (Open-Weights Only), 80.8 (Open-Weights & Open-Data).

- POLARIS-4B-Preview: 81.2 (Ours), 80.8 (Open-Weights Only), 73.8 (Open-Weights & Open-Data).

- GLM-Z1-32B: 80.8 (Ours), 73.8 (Open-Weights Only), 69.0 (Open-Weights & Open-Data).

- Qwen3-4B: 73.8 (Ours), 69.0 (Open-Weights Only), 69.0 (Open-Weights & Open-Data).

- OpenThoughts3-7B: 69.0 (Ours), 69.0 (Open-Weights Only), 69.0 (Open-Weights & Open-Data).

#### AIME25

- **Models and Accuracy**:

- DASD-4B-Thinking: 83.3 (Ours), 81.3 (Open-Weights Only), 79.4 (Open-Weights & Open-Data).

- Qwen3-4B-Preview: 81.3 (Ours), 79.4 (Open-Weights Only), 78.2 (Open-Weights & Open-Data).

- POLARIS-4B-Reasoning-Nem-7B: 79.4 (Ours), 78.2 (Open-Weights Only), 76.3 (Open-Weights & Open-Data).

- DeepSeek-R1-0528-7B: 78.2 (Ours), 76.3 (Open-Weights Only), 74.4 (Open-Weights & Open-Data).

- Qwen3-8B: 76.3 (Ours), 74.4 (Open-Weights Only), 63.6 (Open-Weights & Open-Data).

- OpenThoughts3-7B: 74.4 (Ours), 63.6 (Open-Weights Only), 53.3 (Open-Weights & Open-Data).

#### LiveCodeBench v5

- **Models and Accuracy**:

- DASD-4B-Thinking: 69.3 (Ours), 60.5 (Open-Weights Only), 59.1 (Open-Weights & Open-Data).

- DeepSeek-R1-0528-8B: 60.5 (Ours), 59.1 (Open-Weights Only), 57.5 (Open-Weights & Open-Data).

- GLM-Z1-32B: 59.1 (Ours), 57.5 (Open-Weights Only), 57.2 (Open-Weights & Open-Data).

- Qwen3-8B: 57.5 (Ours), 57.2 (Open-Weights Only), 54.2 (Open-Weights & Open-Data).

- AceReason-Nemotron-1.1-7B: 57.2 (Ours), 54.2 (Open-Weights Only), 51.7 (Open-Weights & Open-Data).

- Qwen3-4B: 54.2 (Ours), 51.7 (Open-Weights Only), 51.7 (Open-Weights & Open-Data).

- OpenThoughts-7B: 51.7 (Ours), 51.7 (Open-Weights Only), 51.7 (Open-Weights & Open-Data).

#### GPQA

- **Models and Accuracy**:

- DASD-4B-Thinking: 68.4 (Ours), 65.8 (Open-Weights Only), 62.1 (Open-Weights & Open-Data).

- Qwen3-4B-Preview: 65.8 (Ours), 62.1 (Open-Weights Only), 61.4 (Open-Weights & Open-Data).

- DistillQwen-7B: 62.1 (Ours), 61.4 (Open-Weights Only), 61.1 (Open-Weights & Open-Data).

- OpenThoughts3-7B: 61.4 (Ours), 61.1 (Open-Weights Only), 58.5 (Open-Weights & Open-Data).

- GLM-Z1-32B: 61.1 (Ours), 58.5 (Open-Weights Only), 56.1 (Open-Weights & Open-Data).

- OpenThoughts-7B: 58.5 (Ours), 56.1 (Open-Weights Only), 53.7 (Open-Weights & Open-Data).

### Key Observations

1. **"Ours" (Orange) consistently outperforms other categories** across all datasets, with the largest margins in AIME24 (e.g., DASD-4B-Thinking at 88.5% vs. 85.3% for Open-Weights Only).

2. **Open-Weights & Open-Data (Beige)** often underperforms compared to "Ours" but sometimes matches or slightly exceeds "Open-Weights Only" (e.g., Qwen3-32B in AIME24: 81.2% vs. 80.8%).

3. **Significant drops in accuracy** are observed for some models in AIME25 and GPQA, such as OpenThoughts3-7B (53.3% in AIME25) and OpenThoughts-7B (53.7% in GPQA).

4. **LiveCodeBench v5** shows the lowest overall accuracy, with "Ours" models ranging from 51.7% to 69.3%.

### Interpretation

The chart demonstrates that proprietary models ("Ours") generally achieve higher accuracy than open-source alternatives, particularly in complex reasoning tasks (AIME24, AIME25). Open-source models with open data ("Open-Weights & Open-Data") perform better than those without, but still lag behind proprietary solutions. The steep decline in accuracy for models like OpenThoughts3-7B and OpenThoughts-7B suggests potential limitations in their architecture or training data. These findings highlight the challenges of open-source models in matching proprietary systems, even with access to open data. The consistency of "Ours" across datasets underscores its robustness, while the variability in open-source performance emphasizes the need for further optimization in open-source frameworks.