## Diagram: Question Prompt Annotation Example

### Overview

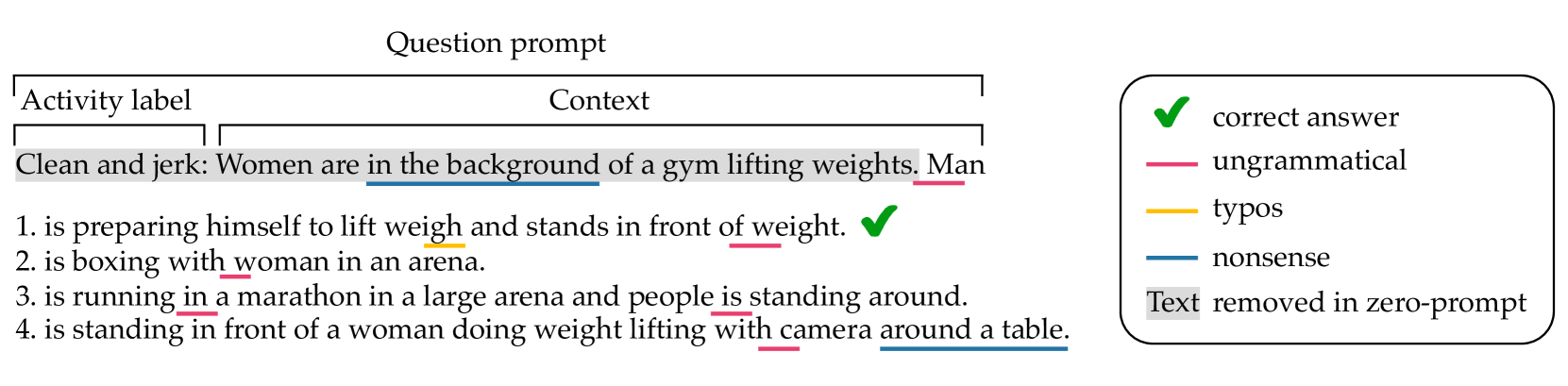

The image is a technical diagram illustrating a text annotation or evaluation scheme. It displays a "Question prompt" with an example sentence and multiple-choice answers, where specific words and phrases are underlined in different colors to indicate various types of errors or annotations. A legend on the right explains the color-coding system.

### Components/Axes

The diagram is divided into two primary regions:

1. **Left Region (Main Content):** Contains the annotated text example.

    * **Header:** The text "Question prompt" is centered at the top.

    * **Structure Labels:** Two labels, "Activity label" and "Context," are positioned above the example sentence, connected by a bracket line indicating they are components of the prompt.

    * **Example Sentence:** "Clean and jerk: Women are in the background of a gym lifting weights. Man"

    * **Numbered Options:** Four numbered answer choices follow the example sentence.

    * **Annotations:** Various words and phrases within the sentence and options are underlined with colored lines. A green checkmark (✓) appears next to option 1.

    * **Highlighting:** The phrase "Text removed in zero-prompt" is highlighted with a gray background.

2. **Right Region (Legend):** A rounded rectangle containing the annotation key.

    * **Symbols & Labels:**

        * A green checkmark (✓) labeled "correct answer"

        * A red line labeled "ungrammatical"

        * An orange line labeled "typos"

        * A blue line labeled "nonsense"

        * A gray highlight box labeled "Text removed in zero-prompt"
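The legend can be captured as a small lookup table. The following is a minimal sketch, not part of the original figure: the names `LEGEND` and `label_for` are hypothetical, and the color names are taken from the description above rather than measured from the image.

```python
# Hypothetical encoding of the legend: color -> (marker style, label).
# Labels are copied from the legend as described; the structure is an assumption.
LEGEND = {
    "green": ("checkmark", "correct answer"),
    "red": ("underline", "ungrammatical"),
    "orange": ("underline", "typos"),
    "blue": ("underline", "nonsense"),
    "gray": ("highlight", "Text removed in zero-prompt"),
}


def label_for(color: str) -> str:
    """Return the legend label for a marker color (case-insensitive)."""
    _style, label = LEGEND[color.lower()]
    return label
```

For example, `label_for("Red")` returns `"ungrammatical"`.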

### Detailed Analysis

**Annotated Text Transcription & Color-Coding:**

* **Example Sentence:** "Clean and jerk: Women are in the background of a gym lifting weights. Man"

    * "in the background" is underlined in **blue** (nonsense).

    * "Man" is underlined in **red** (ungrammatical).

* **Option 1:** "is preparing himself to lift weigh and stands in front of weight."

    * "weigh" is underlined in **orange** (typo).

    * "weight." is underlined in **red** (ungrammatical).

    * A **green checkmark (✓)** is placed at the end of the line, indicating this is the correct answer.

* **Option 2:** "is boxing with woman in an arena."

    * "woman" is underlined in **red** (ungrammatical).

* **Option 3:** "is running in a marathon in a large arena and people is standing around."

    * "in" is underlined in **red** (ungrammatical).

    * "is" is underlined in **red** (ungrammatical).

* **Option 4:** "is standing in front of a woman doing weight lifting with camera around a table."

    * "with camera" is underlined in **red** (ungrammatical).

    * "around a table." is underlined in **blue** (nonsense).

* **Zero-Prompt Note:** The phrase "Text removed in zero-prompt" is displayed with a **gray background highlight**. This label is positioned in the bottom-right of the left region, below the options.
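The transcription above can be expressed as labeled spans, which makes it easy to check that each underlined phrase really occurs in its line and to tally the labels. This is an illustrative sketch only: `Span`, `TEXTS`, `SPANS`, and `unmatched_spans` are hypothetical names, and the data is copied from the transcription rather than from any published dataset.

```python
from collections import Counter
from dataclasses import dataclass

# Text of each line as transcribed from the diagram.
TEXTS = {
    "example sentence": "Clean and jerk: Women are in the background of a gym lifting weights. Man",
    "option 1": "is preparing himself to lift weigh and stands in front of weight.",
    "option 2": "is boxing with woman in an arena.",
    "option 3": "is running in a marathon in a large arena and people is standing around.",
    "option 4": "is standing in front of a woman doing weight lifting with camera around a table.",
}


@dataclass(frozen=True)
class Span:
    line: str   # key into TEXTS
    text: str   # the underlined phrase
    label: str  # legend label for the underline color


SPANS = [
    Span("example sentence", "in the background", "nonsense"),
    Span("example sentence", "Man", "ungrammatical"),
    Span("option 1", "weigh", "typos"),
    Span("option 1", "weight.", "ungrammatical"),
    Span("option 2", "woman", "ungrammatical"),
    Span("option 3", "in", "ungrammatical"),
    Span("option 3", "is", "ungrammatical"),
    Span("option 4", "with camera", "ungrammatical"),
    Span("option 4", "around a table.", "nonsense"),
]


def unmatched_spans(spans, texts):
    """Return spans whose phrase does not occur in the line they are attached to."""
    return [s for s in spans if s.text not in texts[s.line]]


# Tally how often each label is used across the whole example.
label_counts = Counter(s.label for s in SPANS)
```

With this data, `unmatched_spans(SPANS, TEXTS)` is empty and `label_counts` holds 6 ungrammatical, 2 nonsense, and 1 typos span. The sketch stores phrases rather than character offsets, so it cannot say which occurrence of a repeated word such as "in" in option 3 is the underlined one.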

### Key Observations

1. **Multiple Annotations per Line:** A single line can carry several annotations of different types. Option 4, for example, contains both an ungrammatical span ("with camera") and a nonsense span ("around a table."). No span in this example carries two labels at once.

2. **Error Typology:** The legend defines three error categories (ungrammatical, typos, and nonsense, i.e. semantically implausible text), plus a marker for the correct answer and a highlight for text removed in the zero-prompt condition.

3. **Contextual Example:** The chosen example ("Clean and jerk") suggests the domain may be related to activity recognition, video captioning, or visual question answering, where descriptions of scenes are evaluated.

4. **Spatial Layout:** The legend is placed to the right of the main content for easy reference. The structural labels ("Activity label", "Context") are placed directly above the relevant text segments they describe.

### Interpretation

This diagram serves as a **specification or training guide for a text annotation task**, likely for evaluating machine-generated descriptions or answers. It defines a precise schema for human annotators to flag different types of errors in text.

* **Purpose:** The scheme moves beyond a simple correct/incorrect binary. It records *why* a text is flawed: a grammatical error, a typo, or content that does not make sense given the activity label ("Clean and jerk") and the context sentence.

* **"Zero-Prompt" Context:** The highlighted note "Text removed in zero-prompt" implies that there are at least two experimental conditions. In a "zero-prompt" condition, the model is likely evaluated without some or all of the question prompt, such as the "Activity label" and "Context"; the gray highlight marks the text that is removed. This allows model performance to be compared with and without explicit context.

* **Underlying Workflow:** The presence of a "correct answer" (option 1) among flawed options indicates this could be an example from a dataset used to train or test a model's ability to select the most appropriate description, with the annotations explaining why the other options are inferior. The meticulous error tagging suggests the data is used for fine-grained analysis or to train models that can understand and generate text with fewer specific error types.
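The two conditions suggested above can be sketched as a function that builds the candidate texts a model would score. This is a sketch under stated assumptions, not the method behind the figure: it assumes the zero-prompt condition drops both the activity label and the context, and the name `build_candidates` and the `"label: context option"` layout are hypothetical.

```python
def build_candidates(activity_label, context, options, zero_prompt=False):
    """Return one candidate string per answer option.

    Assumption: in the zero-prompt condition the activity label and the
    context are both removed, so each option is scored on its own.
    """
    prefix = "" if zero_prompt else f"{activity_label}: {context} "
    return [prefix + option for option in options]


# Example data transcribed from the diagram.
ACTIVITY_LABEL = "Clean and jerk"
CONTEXT = "Women are in the background of a gym lifting weights. Man"
OPTIONS = [
    "is preparing himself to lift weigh and stands in front of weight.",
    "is boxing with woman in an arena.",
    "is running in a marathon in a large arena and people is standing around.",
    "is standing in front of a woman doing weight lifting with camera around a table.",
]

full_prompt_candidates = build_candidates(ACTIVITY_LABEL, CONTEXT, OPTIONS)
zero_prompt_candidates = build_candidates(ACTIVITY_LABEL, CONTEXT, OPTIONS, zero_prompt=True)
```

Comparing how a model ranks `full_prompt_candidates` against `zero_prompt_candidates` would show how much the ranking depends on the prompt rather than on surface properties of the options.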