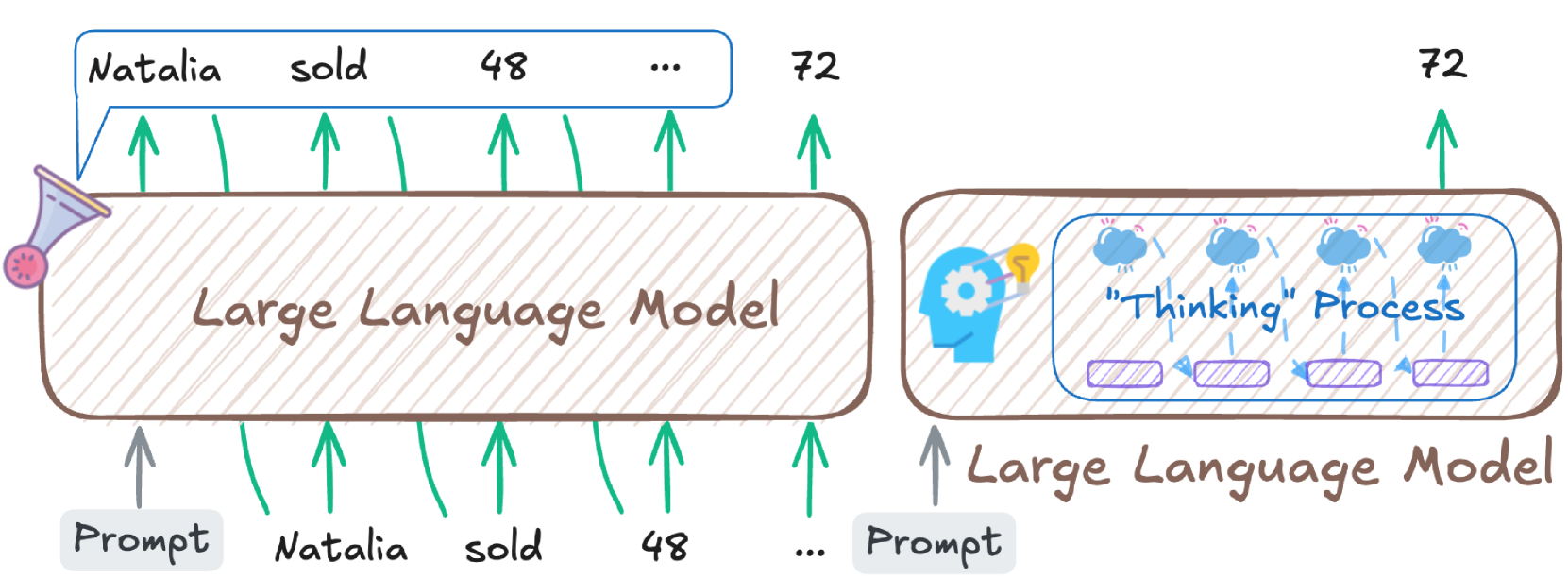

This image is a conceptual diagram illustrating two different modes of operation for a "Large Language Model" (LLM), presented side-by-side. Both diagrams depict an input "Prompt" leading to an output "72", but through distinct internal processes.

The image is composed of two main sections: a left diagram and a right diagram.

---

## Left Diagram: Direct Generation Model

This section illustrates a Large Language Model generating output directly from input.

* **Main Component:** A large, rounded rectangular box with a light brown outline and diagonal hatching inside. It is centrally labeled with the text "Large Language Model" in brown cursive font.

* **Input:**

    * Below the "Large Language Model" box, there is a grey rounded rectangular text box labeled "Prompt". A grey upward arrow originates from the top of this "Prompt" box and points to the bottom edge of the "Large Language Model".

    * To the right of the "Prompt" input, several green upward arrows point to the bottom edge of the "Large Language Model". These arrows are unlabeled at the bottom; they imply additional input tokens, plausibly previously generated tokens being fed back into the model.

* **Output:**

    * Above the "Large Language Model" box, a blue, speech-bubble-like box contains a sequence of words and numbers: "Natalia sold 48 ...". Green upward arrows originate from the top edge of the "Large Language Model" and point to each element in this sequence ("Natalia", "sold", "48", and the ellipsis "...").

    * Further to the right, outside the blue speech bubble, the number "72" is displayed. A green upward arrow originates from the top edge of the "Large Language Model" and points to this "72".

* **Iconography:** A stylized megaphone or funnel icon, colored purple and pink, is attached to the top-left corner of the "Large Language Model" box.

### Flow Description (Left Diagram)

A "Prompt" (and potentially other inputs) is fed into the "Large Language Model". The model then generates a sequence of tokens directly, exemplified by "Natalia sold 48 ...", and ultimately produces the final numerical output "72". This suggests a direct, token-by-token generation process in which every generated token, including those preceding the answer, appears in the visible output.
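The token-by-token flow described above can be sketched in a few lines of Python. This is only an illustrative toy, not how any real model works: `toy_next_token`, `generate`, and the scripted token list are hypothetical names introduced here, and the "model" simply replays the tokens visible in the diagram (the ellipsis is kept as a literal placeholder, since the diagram elides that part of the sequence).

```python
from typing import List

# Hypothetical script mirroring the tokens shown in the diagram.
# "..." is a literal placeholder for the part the diagram elides.
SCRIPT = ["Natalia", "sold", "48", "...", "72"]


def toy_next_token(context: List[str], prompt_len: int) -> str:
    """Stand-in for a model: pick the next token from how many were generated so far."""
    generated = len(context) - prompt_len
    return SCRIPT[generated]


def generate(prompt: List[str], max_new_tokens: int) -> List[str]:
    """Emit one token at a time, feeding each new token back in as input."""
    context = list(prompt)
    output: List[str] = []
    for _ in range(max_new_tokens):
        token = toy_next_token(context, len(prompt))
        output.append(token)    # every generated token is visible in the output
        context.append(token)   # and becomes part of the next step's input
    return output


tokens = generate(["Prompt"], max_new_tokens=len(SCRIPT))
final_answer = tokens[-1]
print(tokens, final_answer)
```

The point of the sketch is the loop structure: each output token is appended to the context before the next one is produced, which is one plausible reading of the green input arrows in the left diagram.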

## Right Diagram: Thinking Process Model

This section illustrates a Large Language Model engaging in an internal "Thinking" Process before generating output.

* **Main Component:** A large, rounded rectangular box with a light brown outline and diagonal hatching inside. It is labeled with the text "Large Language Model" in brown cursive font, positioned below an internal process.

* **Input:**

    * Below the "Large Language Model" box, there is a grey rounded rectangular text box labeled "Prompt". A grey upward arrow originates from the top of this "Prompt" box and points to the bottom edge of the "Large Language Model".

* **Internal Process Component:**

    * Inside the "Large Language Model" box, there is a nested, smaller, rounded rectangular box with a blue outline and diagonal hatching. This inner box is explicitly labeled "Thinking" Process in blue cursive font (the quotation marks around "Thinking" are part of the label).

* **Iconography within the "Thinking" Process box:**

    * On the left side, a blue human head silhouette with a white cogwheel inside and a yellow lightbulb illuminated above it, symbolizing thought or ideation.

    * Above the "Thinking" Process label, three blue cloud icons are arranged horizontally. Each cloud has small white upward arrows emanating from it, possibly representing intermediate thoughts or generated ideas.

    * Below the "Thinking" Process label, three purple, hatched, rounded rectangular boxes are arranged horizontally. Blue dashed arrows connect these boxes sequentially from left to right, indicating a flow or sequence of distinct internal processing steps.

* **Output:**

    * Above the "Large Language Model" box (and specifically originating from the "Thinking" Process component), the number "72" is displayed. A green upward arrow originates from the top edge of the "Large Language Model" and points to this "72".

### Flow Description (Right Diagram)

A "Prompt" is fed into the "Large Language Model". Instead of generating output directly, the model first runs its internal "Thinking" Process. This process involves conceptualization (head with cogwheel and lightbulb), generation of intermediate thoughts (clouds), and a sequence of internal steps (purple boxes). Only after completing this internal process does the model produce the final numerical output "72", with no intermediate tokens shown in the output. This suggests a more complex, multi-step reasoning or deliberation phase.
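As a rough illustration of this flow, the sketch below models the internal steps as updates to a private state that is never returned to the caller. Everything here is hypothetical: `ThinkingModel`, `_internal_step`, and the string-based state are invented for illustration, the three steps simply mirror the three purple boxes, and the final answer is hard-coded to the diagram's "72" rather than computed.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class ThinkingModel:
    """Toy model that runs internal steps before emitting a single answer."""

    num_steps: int = 3  # assumption: one step per purple box in the diagram
    trace: List[str] = field(default_factory=list)  # internal only, not part of the output

    def _internal_step(self, state: str, index: int) -> str:
        """Derive a new internal state from the previous one and record it."""
        new_state = f"{state}->step{index}"
        self.trace.append(new_state)
        return new_state

    def answer(self, prompt: str) -> str:
        """Chain the internal steps, then return only the final output token."""
        state = prompt
        for index in range(1, self.num_steps + 1):
            state = self._internal_step(state, index)
        # Hypothetical readout: scripted to match the output shown in the diagram.
        return "72"


model = ThinkingModel()
result = model.answer("Prompt")
print(result, len(model.trace))
```

Unlike the left diagram's flow, the caller here sees only `result`; the chained intermediate states live in `model.trace`, which stands in for the internal process and is not emitted as output tokens.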

## Overall Interpretation

The image contrasts two conceptual approaches by which an LLM arrives at a specific output ("72"). The left diagram depicts direct generation, in which the tokens leading up to the answer ("Natalia sold 48 ...") appear in the visible output. The right diagram depicts a model that performs an explicit, structured "thinking" or reasoning phase internally and shows only the final result. Both models reach the same numerical outcome, but through different conceptual mechanisms.