
## Diagram: Large Language Model Interaction

### Overview

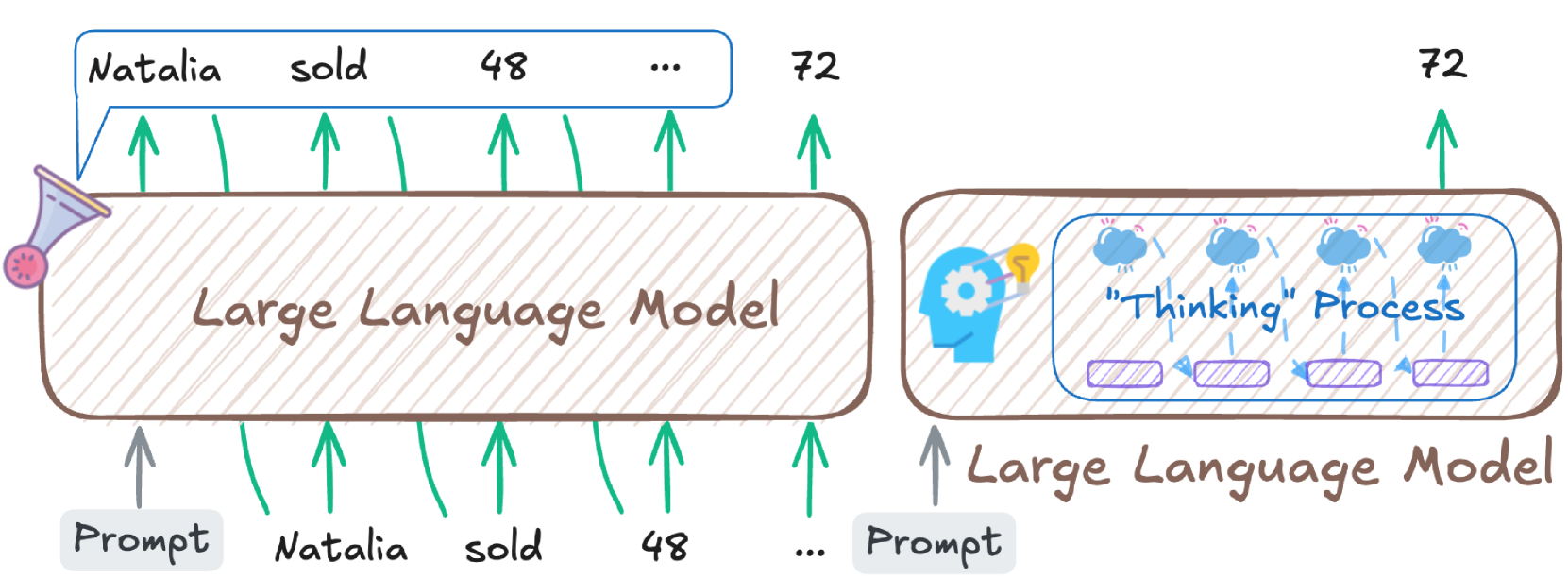

The diagram illustrates how a "Prompt" interacts with a "Large Language Model" (LLM) to produce output. It shows two instances of the LLM: one receives a prompt and emits a sequence of outputs, and the other receives a prompt and contains a "Thinking Process". Arrows indicate the flow of information.

### Components

The diagram consists of the following components:

* **Large Language Model (LLM):** Represented as a light blue rectangle with diagonal stripes. The text "Large Language Model" is written inside.

* **Prompt:** Text label indicating the input to the LLM.

* **Output:** Text labels representing the output from the LLM: "Natalia", "sold", "48", "...", and "72".

* **"Thinking" Process:** A visual representation within the second LLM, showing a head with clouds and gears, labeled with the text '"Thinking" Process'.

* **Arrows:** Green arrows indicating the direction of information flow.

* **Megaphone:** A small icon representing the prompt.

### Detailed Analysis

The diagram shows two parallel processes.

**Left Side:**

* A "Prompt" is directed towards the "Large Language Model".

* The LLM generates outputs: "Natalia", "sold", "48", "...", and "72". Each output has an arrow pointing upwards from the LLM.

* The outputs are listed horizontally above the LLM.

**Right Side:**

* A "Prompt" is directed towards the "Large Language Model".

* Inside the LLM, a "Thinking Process" is visualized with a head containing clouds and gears.

* The LLM generates a single output: "72".

* The output is shown above the LLM.

On the left side, the outputs "Natalia", "sold", "48", and "..." are also shown as inputs to the LLM, so each generated output is fed back into the model.
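The feedback of outputs into inputs described above can be sketched in a few lines of Python. This is only a minimal illustration, not the model's actual implementation: `make_toy_model` and `generate` are hypothetical names, and the "model" simply replays the labels shown in the diagram, with the ellipsis kept as a literal placeholder token.

```python
from typing import Callable, List

# Labels copied from the diagram; "..." is kept as a literal placeholder token.
DIAGRAM_TOKENS = ["Natalia", "sold", "48", "...", "72"]


def make_toy_model(prompt_len: int) -> Callable[[List[str]], str]:
    """Return a hypothetical next-token function that replays DIAGRAM_TOKENS."""
    def next_token(context: List[str]) -> str:
        # Tokens generated so far = context length minus prompt length.
        return DIAGRAM_TOKENS[len(context) - prompt_len]
    return next_token


def generate(prompt: List[str],
             next_token: Callable[[List[str]], str],
             steps: int) -> List[str]:
    """Produce `steps` tokens, appending each one to the model input."""
    context = list(prompt)      # the model input starts as the prompt
    outputs: List[str] = []
    for _ in range(steps):
        token = next_token(context)
        outputs.append(token)
        context.append(token)   # feedback: the output becomes part of the input
    return outputs


prompt = ["Prompt"]
result = generate(prompt, make_toy_model(len(prompt)), steps=len(DIAGRAM_TOKENS))
print(result)
```

The key line is `context.append(token)`: each output is appended to the input before the next step, which is the loop the arrows on the left side appear to depict.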

### Key Observations

* The diagram illustrates a feedback loop where the outputs of the LLM can be used as inputs.

* The "Thinking Process" visualization suggests internal processing within the LLM.

* The ellipsis ("...") indicates that there may be more outputs than those explicitly listed.

* The output "72" appears in both the left and right LLM processes.

### Interpretation

The diagram shows how a Large Language Model processes a prompt and generates outputs. The "Thinking Process" visualization suggests that the LLM performs some form of internal reasoning rather than simply reproducing information. The feedback loop, in which outputs become inputs, highlights the iterative nature of generation on the left side. Because "72" is the last output on the left and the only output on the right, it is likely the final result of both processes, reached through visible intermediate outputs in one case and through internal "thinking" in the other.

The diagram is a simplified, conceptual representation. It focuses on the input-output relationship and the internal "thinking" aspect of the LLM, and it provides no data beyond the listed outputs.
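One possible reading of the two panels can be made concrete with a small sketch. It rests on an assumption the diagram does not confirm: that the last label is the answer and everything before it is intermediate reasoning. The helper `split_thinking` is a hypothetical name introduced only for illustration.

```python
from typing import List, Tuple

# Labels copied from the diagram; "..." is kept as a literal placeholder.
FULL_SEQUENCE = ["Natalia", "sold", "48", "...", "72"]


def split_thinking(sequence: List[str]) -> Tuple[List[str], List[str]]:
    """Split a sequence into (hidden steps, visible answer).

    Assumption: the final token is the answer; all earlier tokens are
    intermediate steps that the right-hand panel keeps internal.
    """
    return sequence[:-1], sequence[-1:]


# Left panel: every step is emitted as visible output.
left_visible = list(FULL_SEQUENCE)

# Right panel: the steps stay inside the "Thinking" Process; only the answer is emitted.
hidden, right_visible = split_thinking(FULL_SEQUENCE)

print(left_visible)
print(right_visible)
```

Under this reading, both panels end with the same final token, and they differ only in whether the intermediate steps are visible.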