## Diagram: Large Language Model (LLM) Processing Approaches (Direct vs. with "Thinking" Process)

### Overview

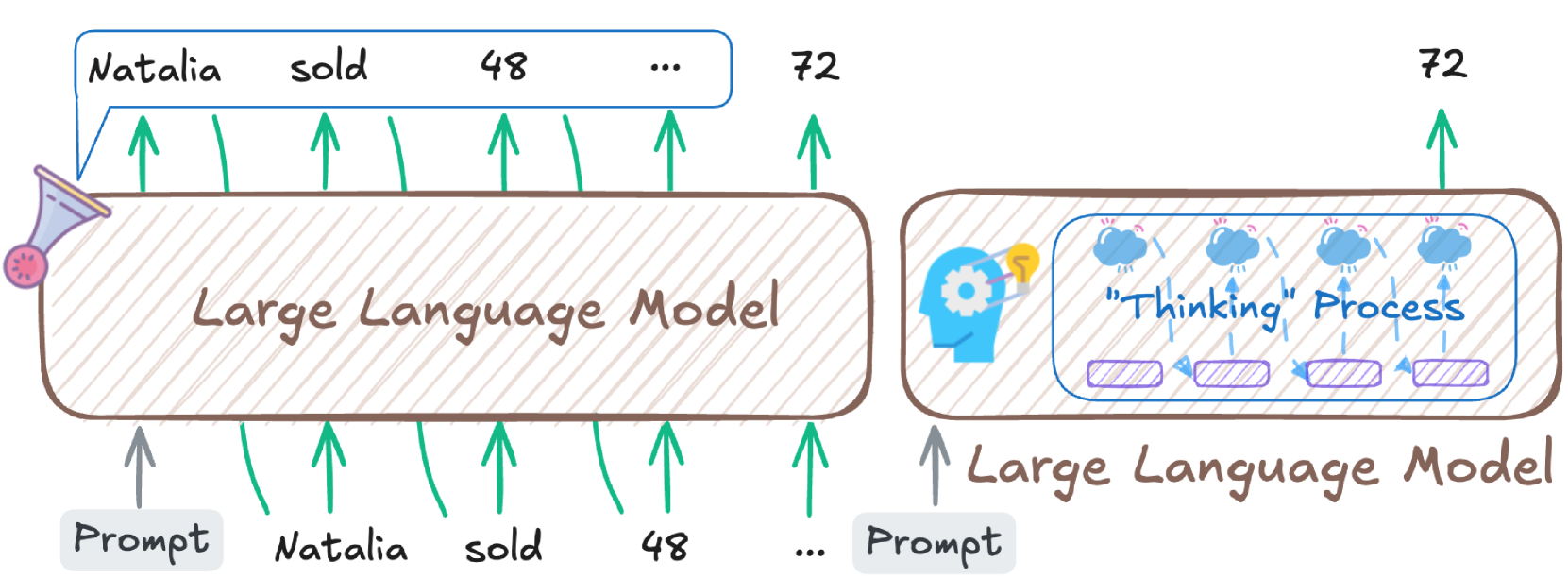

The diagram compares two ways a **Large Language Model (LLM)** can process the same prompt to produce the output "72". The left side shows direct processing. The right side shows an LLM that runs an explicit "Thinking" process (e.g., chain-of-thought reasoning) before producing its output. The prompt begins "Natalia sold 48 ...", and both methods produce "72" as the final output.

### Components (Diagram Elements)

#### Left Section: Direct LLM Processing

- **Input (Prompt):** A gray box labeled *"Prompt"* supplies the text tokens *"Natalia"*, *"sold"*, *"48"*, *"..."*. Each token is connected to the LLM by a green upward arrow.

- **LLM:** A brown rounded rectangle labeled *"Large Language Model"* (with a funnel icon on the left, symbolizing input ingestion).

- **Output:** Green upward arrows lead from the LLM to the top row of text: *"Natalia"*, *"sold"*, *"48"*, *"..."*, *"72"*. The last token, *"72"*, is the final output.
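The left-hand flow is ordinary autoregressive generation. The model repeatedly predicts the next token from the tokens seen so far and appends it, with no intermediate reasoning tokens. The sketch below makes a simplifying assumption: the "model" is a hypothetical lookup table (`TOY_NEXT_TOKEN`) that maps the last token to the next one. A real LLM scores every vocabulary token against the full context using a neural network.

```python
from typing import Dict, List

# Hypothetical toy "model" (illustration only): maps the most recent token
# to the next token. A real LLM conditions on the whole context.
TOY_NEXT_TOKEN: Dict[str, str] = {
    "Natalia": "sold",
    "sold": "48",
    "48": "...",
    "...": "72",
    "72": "<eos>",
}


def generate_direct(prompt: List[str], max_new_tokens: int = 10) -> List[str]:
    """Greedy autoregressive decoding that emits no intermediate reasoning."""
    tokens = list(prompt)
    for _ in range(max_new_tokens):
        # Predict the next token from the current context (here: last token only).
        next_token = TOY_NEXT_TOKEN.get(tokens[-1], "<eos>")
        if next_token == "<eos>":
            break
        tokens.append(next_token)
    # Return only the newly generated tokens.
    return tokens[len(prompt):]


print(generate_direct(["Natalia", "sold", "48", "..."]))  # → ['72']
```

With the full prompt as context, the answer token follows immediately. This matches the direct path in the diagram.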

#### Right Section: LLM with "Thinking" Process

- **Input (Prompt):** A gray box labeled *"Prompt"* is connected to the LLM by a gray upward arrow.

- **LLM:** A brown rounded rectangle labeled *"Large Language Model"* (with a brain icon with gears, symbolizing reasoning).

- **"Thinking" Process:** A blue box inside the LLM containing:
  - Four cloud icons (representing reasoning steps).
  - Purple rectangles (representing processing units).
  - The label *"Thinking" Process* (blue text).

- **Output:** A green upward arrow from the LLM to the text *"72"* (top text).
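The right-hand flow adds a stage in which the model produces intermediate reasoning before it commits to an answer. The diagram elides most of the prompt, so the sketch below does not claim to reproduce it. It assumes, purely for illustration, a problem in which a second quantity is half of the first (48) and the question asks for the total. This is one reading consistent with the two numbers shown (48 + 24 = 72). `think` and `generate_with_thinking` are hypothetical names. The hard-coded steps stand in for text that a real reasoning model would generate. Their count is not meant to match the four cloud icons.

```python
from typing import List, Tuple


def think(first_amount: int) -> Tuple[List[str], int]:
    """Hypothetical "thinking" phase: produce intermediate steps, then a result.

    Assumption (illustration only): the second amount is half of the first,
    and the question asks for the total of both.
    """
    steps: List[str] = []
    second_amount = first_amount // 2
    steps.append(f"Second amount is half of {first_amount}: {second_amount}")
    total = first_amount + second_amount
    steps.append(f"Total is {first_amount} + {second_amount} = {total}")
    return steps, total


def generate_with_thinking(first_amount: int) -> str:
    """Run the thinking phase first, then return only the final answer."""
    steps, answer = think(first_amount)
    for step in steps:  # intermediate "thoughts", printed here for inspection
        print("thought:", step)
    return str(answer)


print(generate_with_thinking(48))
```

Running this prints the two intermediate thoughts and then `72`. The final answer matches the direct path, but it is reached through explicit steps.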

### Detailed Analysis

- **Left (Direct LLM):**
  - Input tokens: *"Natalia"*, *"sold"*, *"48"*, *"..."* (each with a green arrow to the LLM).
  - The LLM processes these tokens directly and outputs *"Natalia"*, *"sold"*, *"48"*, *"..."*, and finally *"72"*. No intermediate reasoning is shown.

- **Right (LLM with "Thinking" Process):**
  - Input: *"Prompt"* → LLM with an internal *"Thinking" Process* (four cloud icons plus purple processing units).
  - Output: *"72"*, the same answer as on the left, reached via explicit reasoning steps.

### Key Observations

- Both approaches yield the same output (*"72"*), but the right method includes an explicit *"Thinking" Process* (reasoning steps) inside the LLM.

- The left LLM uses a funnel icon (input ingestion), while the right uses a brain-with-gears icon (reasoning).

- The *"Thinking" Process* has four cloud icons (possibly four reasoning steps) and purple rectangles (processing units).
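To check in practice that both approaches yield the same answer, the final answer is usually extracted from the model's text, because a reasoning trace contains many intermediate numbers. The sketch below assumes the convention that the last number in the output is the answer. This is a common heuristic; the diagram does not specify it. `extract_final_number` is a hypothetical helper, and the two output strings are invented examples, not outputs taken from the diagram.

```python
import re
from typing import Optional


def extract_final_number(text: str) -> Optional[str]:
    """Return the last integer or decimal in the text, or None if there is none.

    Thousands separators (commas) are removed before matching.
    """
    matches = re.findall(r"-?\d+(?:\.\d+)?", text.replace(",", ""))
    return matches[-1] if matches else None


# Hypothetical outputs: a bare answer vs. a reasoning trace ending in the answer.
direct_output = "72"
thinking_output = "48 / 2 = 24. 48 + 24 = 72. The answer is 72."

print(extract_final_number(direct_output) == extract_final_number(thinking_output))  # → True
```

Comparing only the extracted answers shows that the two paths agree, even though the raw outputs differ in length and content.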

### Interpretation

The diagram contrasts two LLM inference paradigms:

- **Direct Processing (Left):** The LLM maps input tokens to output tokens without exposing any reasoning steps (i.e., a "black-box" mapping).

- **Reasoning-Augmented Processing (Right):** The LLM uses an internal *"Thinking" Process* (e.g., chain-of-thought reasoning) to solve the problem and arrives at the same final output.

The diagram supports two readings. The direct model may still perform some reasoning implicitly, without making it visible. The right panel, by contrast, depicts reasoning made explicit, as in chain-of-thought prompting, where the model generates intermediate steps before committing to an answer. Either way, the diagram shows that different internal mechanisms (direct vs. reasoning-augmented) can produce the same output, and it highlights "thinking" as a distinct stage in LLM problem-solving.
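At the prompting level, the contrast can be reproduced by asking for the answer directly or by eliciting reasoning first. One well-known technique for the latter is zero-shot chain-of-thought prompting, which appends "Let's think step by step." to the question. The sketch below only builds the two prompt strings. `build_prompt` is a hypothetical helper, the "Q:/A:" layout is an assumed format, and no particular model API is implied. The question text stays elided, as in the diagram.

```python
def build_prompt(question: str, think_first: bool) -> str:
    """Build either a direct prompt or a zero-shot chain-of-thought prompt."""
    if think_first:
        # Zero-shot CoT trigger phrase: invites intermediate reasoning first.
        return f"Q: {question}\nA: Let's think step by step."
    # Direct prompt: asks for the answer with no reasoning stage.
    return f"Q: {question}\nA: The answer is"


question = "Natalia sold 48 ..."  # kept elided, as in the diagram
print(build_prompt(question, think_first=False))
print(build_prompt(question, think_first=True))
```

With the same model, the first prompt corresponds to the left panel and the second to the right. Whether the final answers agree depends on the model and the task.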