## Code Snippet Comparison: Neuro-Symbolic Framework Implementations

### Overview

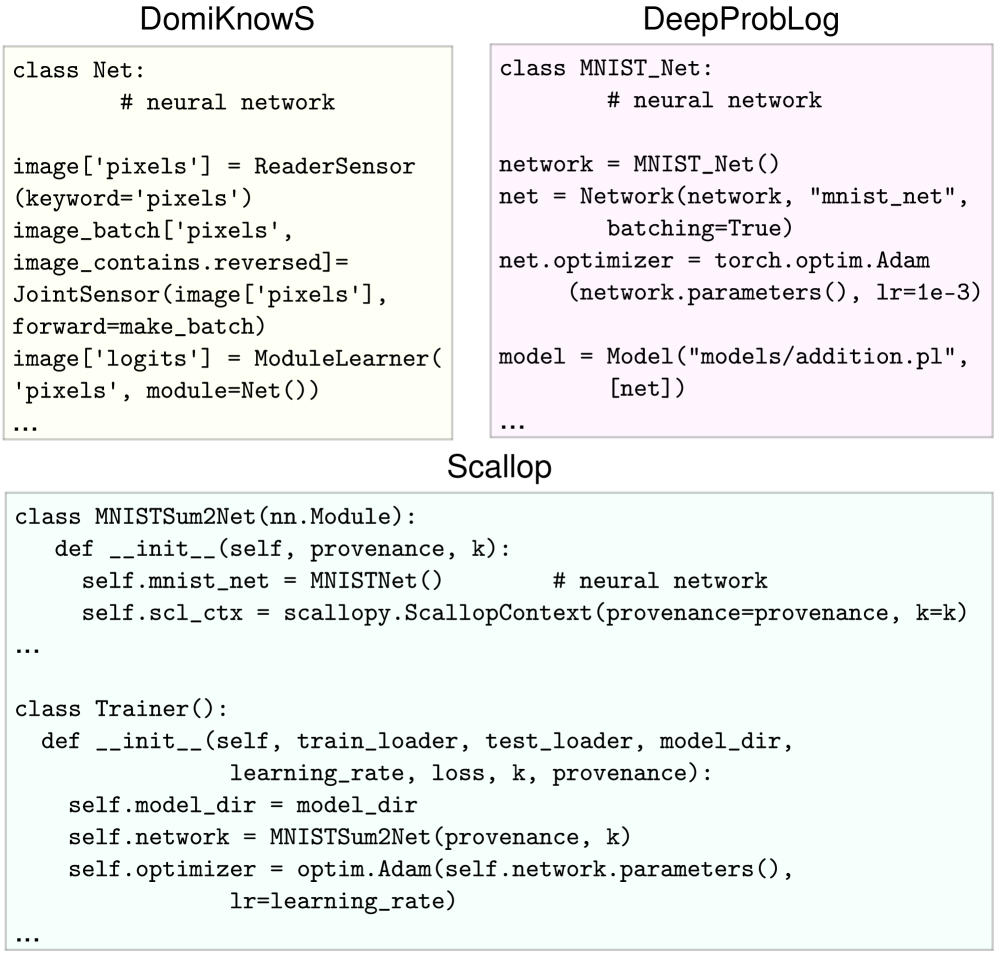

The image lays out code snippets from three neuro-symbolic AI frameworks side by side: **DomiKnowS**, **DeepProbLog**, and **Scallop**. Each snippet sits in its own color-coded box and appears to implement the same kind of task. Identifiers such as `MNIST_Net`, `MNISTSum2Net`, and `addition.pl` suggest MNIST digit recognition combined with a logical reasoning step, probably digit addition, although the image never states the task. The layout invites a comparison of the frameworks' syntax and architecture.

### Components/Axes

The image is structured into three distinct rectangular regions, each with a centered title above a code block.

1. **Top-Left Box (DomiKnowS):**

   * **Title:** `DomiKnowS`
   * **Background Color:** Light beige (#f5f5dc approx.)
   * **Content:** A Python-like class definition for a neural network and sensor-based data flow.

2. **Top-Right Box (DeepProbLog):**

   * **Title:** `DeepProbLog`
   * **Background Color:** Light pink (#ffe4e1 approx.)
   * **Content:** Python code instantiating a network, defining an optimizer, and linking to a ProbLog model file.

3. **Bottom Box (Scallop):**

   * **Title:** `Scallop`
   * **Background Color:** Light green (#e0f2e0 approx.)
   * **Content:** Python code defining a neural network module (`MNISTSum2Net`) and a `Trainer` class, using a custom `ScallopContext`.

### Detailed Analysis / Content Details

**1. DomiKnowS Snippet (Top-Left):**

```python
class Net:
    # neural network

image['pixels'] = ReaderSensor(keyword='pixels')
image_batch['pixels', image_contains.reversed] = JointSensor(image['pixels'], forward=make_batch)
image['logits'] = ModuleLearner('pixels', module=Net())
...
```

* **Key Elements:** Defines a `Net` class. Uses a declarative, sensor-based approach (`ReaderSensor`, `JointSensor`, `ModuleLearner`) to link data (`image['pixels']`) to the learning module. The `...` indicates omitted code.
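To make the declarative, sensor-based idea concrete, here is a minimal plain-Python sketch of the pattern the excerpt appears to follow: assigning to a property only registers how to compute it, and evaluation happens later on demand. The `Concept`, `reader`, and `learner` names are hypothetical and invented for this illustration; they are not the DomiKnowS API, and the "network" is a stand-in function.

```python
# Toy illustration only: every name here is hypothetical, NOT the DomiKnowS API.


class Concept:
    """A named node whose properties are computed lazily by attached callables."""

    def __init__(self, name):
        self.name = name
        self._sensors = {}  # property name -> callable(concept, data) -> value

    def __setitem__(self, prop, sensor):
        # Declaring a property only registers how to compute it; nothing runs yet.
        self._sensors[prop] = sensor

    def compute(self, prop, data):
        # Evaluation happens on demand, pulling upstream properties as needed.
        return self._sensors[prop](self, data)


def reader(keyword):
    """Sensor that reads a field straight from the input record."""
    return lambda concept, data: data[keyword]


def learner(source_prop, module):
    """Sensor that applies a callable 'module' to another property's value."""
    return lambda concept, data: module(concept.compute(source_prop, data))


image = Concept("image")
image["pixels"] = reader("pixels")
# Stand-in for a neural network: the mean pixel intensity.
image["logits"] = learner("pixels", module=lambda px: sum(px) / len(px))

# Only now does any computation run.
result = image.compute("logits", {"pixels": [0.0, 0.5, 1.0]})
print(result)
```

The point of the sketch is the separation between declaring the data flow and running it, which is what distinguishes this style from the imperative setup in the other two boxes.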

**2. DeepProbLog Snippet (Top-Right):**

```python
class MNIST_Net:
    # neural network

network = MNIST_Net()
net = Network(network, "mnist_net", batching=True)
net.optimizer = torch.optim.Adam(network.parameters(), lr=1e-3)
model = Model("models/addition.pl", [net])
...
```

* **Key Elements:** Defines a `MNIST_Net` class. Explicitly creates a `Network` object, configures a PyTorch Adam optimizer (`torch.optim.Adam`) with a learning rate of `1e-3`, and integrates it with a ProbLog model file (`"models/addition.pl"`). The `...` indicates omitted code.
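The contents of `addition.pl` are not visible in the image. Assuming the file name means the logic program adds two recognized digits, the quantity a probabilistic logic layer would compute can be sketched in plain Python: the probability of each sum is the total probability of all digit pairs that produce it. The function name and the example distributions below are hypothetical, and the sketch treats the two classifier outputs as independent; it does not use DeepProbLog itself.

```python
def sum_distribution(p_first, p_second):
    """Return P(a + b = z) for every reachable z, given two digit distributions.

    Assumes the two digit predictions are independent.
    """
    out = [0.0] * (len(p_first) + len(p_second) - 1)
    for a, pa in enumerate(p_first):
        for b, pb in enumerate(p_second):
            # Each (a, b) pair is one way ("proof") of deriving the sum a + b.
            out[a + b] += pa * pb
    return out


# Hypothetical classifier outputs over the digits 0..9.
p_first = [0.0] * 10
p_first[3], p_first[8] = 0.75, 0.25   # first image: probably a 3, maybe an 8
p_second = [0.0] * 10
p_second[5], p_second[6] = 0.5, 0.5   # second image: a 5 or a 6

dist = sum_distribution(p_first, p_second)
for z, p in enumerate(dist):
    if p > 0:
        print(z, p)
```

Because the result is a differentiable function of the classifier outputs, a loss on the sum can in principle be propagated back to the digit network, which is the general idea behind training with supervision on the sum only.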

**3. Scallop Snippet (Bottom):**

```python
class MNISTSum2Net(nn.Module):
    def __init__(self, provenance, k):
        self.mnist_net = MNISTNet()  # neural network
        self.scl_ctx = scallopy.ScallopContext(provenance=provenance, k=k)
        ...

class Trainer():
    def __init__(self, train_loader, test_loader, model_dir,
                 learning_rate, loss, k, provenance):
        self.model_dir = model_dir
        self.network = MNISTSum2Net(provenance, k)
        self.optimizer = optim.Adam(self.network.parameters(),
                                    lr=learning_rate)
        ...
```

* **Key Elements:**

  * `MNISTSum2Net`: A PyTorch `nn.Module` that contains an `MNISTNet` and initializes a `ScallopContext` with parameters `provenance` and `k`.
  * `Trainer`: A class that initializes the model (`MNISTSum2Net`) and an Adam optimizer (`optim.Adam`). Besides the data loaders and `model_dir`, it accepts the hyperparameters `learning_rate`, `loss`, `k`, and `provenance`. The `...` indicates omitted code in both classes.
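The excerpt passes `provenance` and `k` through without explaining them. Assuming `k` caps the number of proofs retained for each derived fact, as in a top-k proofs style of provenance, its effect can be sketched in plain Python: for every possible sum, only the `k` most probable digit pairs contribute. The function and the two-class example distributions are hypothetical simplifications and do not call Scallop.

```python
import heapq


def topk_sum_distribution(p_first, p_second, k):
    """Approximate P(a + b = z) using only the k most probable (a, b) pairs per z."""
    proofs = {}  # sum value -> probabilities of the pairs that derive it
    for a, pa in enumerate(p_first):
        for b, pb in enumerate(p_second):
            proofs.setdefault(a + b, []).append(pa * pb)
    # Distinct (a, b) pairs are mutually exclusive, so kept proofs can be summed.
    return {z: sum(heapq.nlargest(k, ps)) for z, ps in proofs.items()}


# Hypothetical two-class outputs, kept tiny so the numbers are easy to follow.
p_first = [0.5, 0.5]
p_second = [0.25, 0.75]

approx_k1 = topk_sum_distribution(p_first, p_second, k=1)
approx_k2 = topk_sum_distribution(p_first, p_second, k=2)
print(approx_k1)
print(approx_k2)
```

With `k=1` the sum 1 keeps only its most likely derivation, so its probability is underestimated; with `k=2` both derivations are kept and the value is exact. A smaller `k` trades accuracy for less reasoning work.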

### Key Observations

1. **Common Task Pattern:** All three snippets follow a similar workflow: define a neural network component (for MNIST), then integrate it with a symbolic or probabilistic logic framework. DeepProbLog and Scallop also set up an optimizer explicitly; the DomiKnowS excerpt shows none.

2. **Syntactic Diversity:** The frameworks exhibit distinct syntactic philosophies:

   * **DomiKnowS:** Uses a declarative, sensor-based API (`ReaderSensor`, `ModuleLearner`).
   * **DeepProbLog:** Follows a more imperative, object-oriented style, directly instantiating network and optimizer objects and linking to an external `.pl` (ProbLog) file.
   * **Scallop:** Employs a PyTorch-centric, modular class structure (`nn.Module`) with a dedicated context object (`ScallopContext`) for its neuro-symbolic reasoning.

3. **Optimizer Configuration:** DeepProbLog and Scallop explicitly show the use of the Adam optimizer. DeepProbLog hardcodes the learning rate (`lr=1e-3`), while Scallop's `Trainer` accepts it as a parameter (`lr=learning_rate`).

4. **Symbolic Integration Point:** The integration with symbolic logic is framework-specific: DomiKnowS uses sensors and learners, DeepProbLog references a ProbLog model file (`addition.pl`), and Scallop uses a `ScallopContext`.

### Interpretation

This image is a technical comparison aimed at developers or researchers evaluating neuro-symbolic AI frameworks. It demonstrates the **architectural and syntactic trade-offs** between three prominent systems.

* **What it suggests:** The choice of framework significantly impacts code structure and verbosity. DomiKnowS appears to offer a higher-level, declarative abstraction. DeepProbLog provides a clear, imperative bridge between PyTorch and ProbLog. Scallop offers a PyTorch-native, modular approach with explicit context management.

* **How elements relate:** Each code block is a self-contained example of the framework's "boilerplate" for a common task (MNIST + logic). The side-by-side presentation allows for direct comparison of how each system answers the same fundamental questions: "How do I define my network?", "How do I connect it to data?", and "How do I set up training?"

* **Notable Anomalies:** The `...` in each snippet marks these as simplified, illustrative excerpts rather than complete programs. The DomiKnowS excerpt is the most abstract and shows no optimizer, while the other two configure one explicitly; whether DomiKnowS sets it up elsewhere cannot be determined from the image. The task itself is never stated (the only hints are names such as `addition.pl` and `MNISTSum2Net`), which keeps the comparison focused on the framework integration pattern rather than the end application.

In short, the image works as a side-by-side reference for the initial setup code and coding paradigm of these three neuro-symbolic tools. It suggests that the choice between them turns partly on a developer's preference for declarative or imperative style and on the desired level of abstraction.