## Code Snippet Analysis: Neural Network Implementations

### Overview

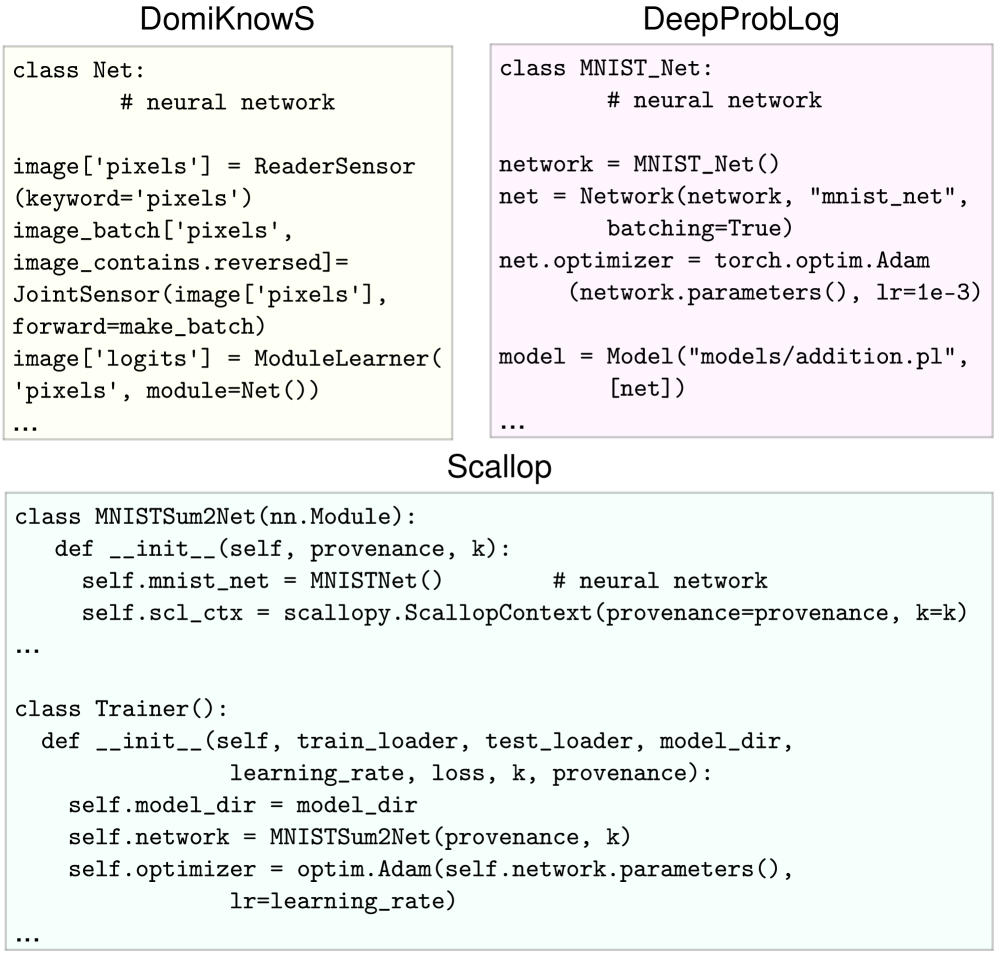

The image contains three code snippets demonstrating neural network implementations across different frameworks: DomiKnowS, DeepProbLog, and Scallop. Each snippet defines classes for neural network architectures, data processing, and training configurations.

### Components

1. **DomiKnowS Framework**
   - Class: `Net`
   - Key Components:
     - `image['pixels']`: uses `ReaderSensor` to read pixel data
     - `image_batch`: processes reversed pixel batches via `JointSensor`
     - `image['logits']`: uses `ModuleLearner` to produce logits
   - Parameters:
     - `forward=make_batch` (function that assembles a batch)
     - `module=Net()` (a new instance of the `Net` class supplied as the module)

2. **DeepProbLog Framework**
   - Class: `MNIST_Net`
   - Key Components:
     - Network architecture definition
     - Optimizer configuration: `torch.optim.Adam`
     - Learning rate: `lr=1e-3` (an optimizer argument)
   - Configuration:
     - Batching enabled (`batching=True`)
     - Logic program file: `"models/addition.pl"`

3. **Scallop Framework**
   - Class: `MNISTSum2Net`
   - Key Components:
     - Inherits from `nn.Module`
     - Uses `ScallopContext` for provenance tracking
   - Training Class: `Trainer`
     - Parameters: `learning_rate`, `loss`, `k`, `provenance`
     - Optimizer: `optim.Adam` with learning rate parameter
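The names `models/addition.pl` and `MNISTSum2Net` suggest that the DeepProbLog and Scallop snippets target the digit-addition task: two images are classified and the logic layer reasons about their sum. The following is a minimal plain-Python sketch of that reasoning step, under that assumption. It is not code from the image and uses neither framework's API; `sum_distribution` and the example probabilities are hypothetical.

```python
from itertools import product


def sum_distribution(p_first, p_second):
    """Return P(sum = s) for every reachable s, given two digit distributions."""
    dist = [0.0] * (len(p_first) + len(p_second) - 1)
    for (a, pa), (b, pb) in product(enumerate(p_first), enumerate(p_second)):
        # Each (a, b) pair is one way to derive the fact "sum = a + b".
        dist[a + b] += pa * pb
    return dist


# Hypothetical classifier outputs (assumed values, not taken from the image):
# the first image is probably a 3, the second is probably a 5.
p_first = [0.0] * 10
p_first[3], p_first[8] = 0.9, 0.1
p_second = [0.0] * 10
p_second[5], p_second[6] = 0.8, 0.2

dist = sum_distribution(p_first, p_second)
best_sum = max(range(len(dist)), key=dist.__getitem__)
print(best_sum, round(dist[best_sum], 2))
```

Because the result is a sum of products of the network outputs, a loss on the predicted sum can be propagated back to both digit classifiers, which is the general idea behind training a network through a logic program.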

### Detailed Analysis

1. **DomiKnowS Implementation**
   - Emphasizes sensor-based data processing (`ReaderSensor`, `JointSensor`)
   - Implements custom batch processing (`forward=make_batch`)
   - Uses module-based learning architecture

2. **DeepProbLog Implementation**
   - Standard PyTorch-style network definition
   - Explicit optimizer and learning rate configuration
   - Logic program kept in a separate Prolog file (`models/addition.pl`)

3. **Scallop Implementation**
   - Combines neural network architecture with provenance tracking
   - Uses a `ScallopContext` to run the symbolic reasoning step inside the network
   - Training parameters cover both optimization (`learning_rate`, `loss`) and reasoning (`k`, `provenance`)
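To make the `k` and `provenance` parameters more concrete, here is a rough plain-Python sketch of a top-k style approximation. It assumes `k` bounds how many derivations ("proofs") are kept per derived fact, which is how top-k proof provenances are usually described; the image does not confirm this. The function `top_k_sum_scores` is hypothetical and is a simplified stand-in, not Scallop's implementation.

```python
import heapq


def top_k_sum_scores(p_first, p_second, k):
    """Score each possible sum using only its k most probable (a, b) derivations."""
    proofs = {}  # maps a sum to the probabilities of its derivations
    for a, pa in enumerate(p_first):
        for b, pb in enumerate(p_second):
            proofs.setdefault(a + b, []).append(pa * pb)
    # Adding the kept probabilities is valid here only because distinct
    # (a, b) pairs are mutually exclusive events.
    return {s: sum(heapq.nlargest(k, ps)) for s, ps in proofs.items()}


# Assumed inputs: two maximally uncertain digit classifiers.
p_first = [0.1] * 10
p_second = [0.1] * 10

exact = top_k_sum_scores(p_first, p_second, k=10)   # keeps every derivation
approx = top_k_sum_scores(p_first, p_second, k=3)   # keeps at most three per sum
```

With a small `k`, sums that have many derivations (such as 9, reachable in ten ways) are underestimated, while sums with a single derivation (such as 0) are unaffected. That is the accuracy-versus-cost trade-off such a parameter controls.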

### Key Observations

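1. The DeepProbLog and Scallop snippets both use the Adam optimizer; no optimizer is listed for the DomiKnowS snippet

2. DomiKnowS and Scallop each wrap the network in a framework-specific layer: sensors and learners in DomiKnowS, a `ScallopContext` with a provenance setting in Scallop

3. DeepProbLog loads its logic program from an explicit Prolog file (`models/addition.pl`)

4. The learning rate is a literal in DeepProbLog (`lr=1e-3`) and a `learning_rate` parameter of the `Trainer` in Scallop

5. Batch handling differs: DomiKnowS assembles batches with a custom `make_batch` function, DeepProbLog sets a `batching=True` flag, and no batching step is listed for Scallop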
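For readers unfamiliar with what the learning rate controls in Adam, the sketch below writes out a single-parameter Adam update in plain Python. The defaults for `beta1`, `beta2`, and `eps` are the commonly used ones and are assumptions here; only `lr=1e-3` appears in the snippets. `adam_step` is an illustrative helper, not a function from any of the three frameworks.

```python
import math


def adam_step(param, grad, m, v, t, lr=1e-3, beta1=0.9, beta2=0.999, eps=1e-8):
    """Apply one Adam update to a scalar parameter and return the new state."""
    m = beta1 * m + (1 - beta1) * grad          # running mean of gradients
    v = beta2 * v + (1 - beta2) * grad * grad   # running mean of squared gradients
    m_hat = m / (1 - beta1 ** t)                # bias correction for early steps
    v_hat = v / (1 - beta2 ** t)
    param = param - lr * m_hat / (math.sqrt(v_hat) + eps)
    return param, m, v


# Minimize f(x) = x**2 starting from x = 1.0; the gradient is 2 * x.
x, m, v = 1.0, 0.0, 0.0
for t in range(1, 4):
    x, m, v = adam_step(x, 2 * x, m, v, t)
```

Because the update divides by the root of the squared-gradient average, each early step moves the parameter by roughly `lr` regardless of the gradient's scale, so three steps with `lr=1e-3` move `x` by about 0.003.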

### Interpretation

The code snippets demonstrate three distinct approaches to neural network implementation:

1. **DomiKnowS** focuses on sensor-driven data processing and module-based architecture

2. **DeepProbLog** follows conventional deep learning practices with PyTorch integration

3. **Scallop** introduces provenance-aware neural networks through context management

Notable differences include:

- DomiKnowS's unique sensor-based data pipeline

- Scallop's integration of provenance tracking with neural network training

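- DeepProbLog's standard deep learning configuration paired with a separate Prolog program file

Taken together, the snippets show three ways of attaching symbolic structure to a neural network: DomiKnowS binds sensors and learners to named properties such as `image['pixels']`, DeepProbLog pairs a standard PyTorch network with a Prolog program, and Scallop places a `ScallopContext` inside an `nn.Module`. Hyperparameter handling also differs: DeepProbLog writes the learning rate as a literal (`lr=1e-3`), Scallop exposes it as a `Trainer` parameter, and the DomiKnowS snippet shows no optimizer settings.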