
## Diagram: DeepSeek Model Development Flow

### Overview

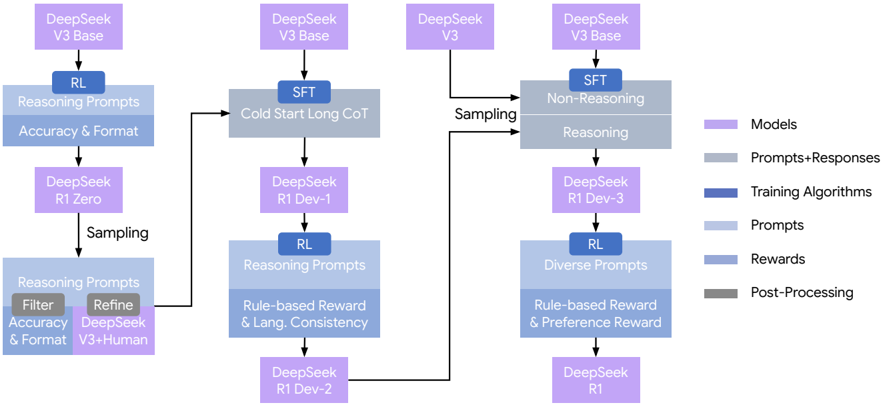

The image depicts a flowchart of the development process behind DeepSeek R1, starting from "DeepSeek V3 Base" and progressing through stages of Reinforcement Learning (RL), Supervised Fine-Tuning (SFT), and sampling. The diagram highlights how the model is refined step by step through different prompt strategies, reward signals, and post-processing steps.

### Components/Axes

The diagram consists of rectangular nodes representing model versions or stages, connected by arrows indicating the flow of development. A legend on the right side categorizes the nodes by color:

* **Purple:** Models

* **Pink:** Prompts+Responses

* **Blue:** Training Algorithms

* **Orange:** Prompts

* **Light Blue:** Rewards

* **Gray:** Post-Processing

The diagram's flow starts from the top and branches out, converging at certain points. Key model names include "DeepSeek V3 Base", "DeepSeek R1 Zero", "DeepSeek R1 Dev-1", "DeepSeek R1 Dev-2", "DeepSeek R1 Dev-3", and "DeepSeek R1".

### Detailed Analysis or Content Details

The diagram can be broken down into four main branches originating from "DeepSeek V3 Base":

**Branch 1 (Leftmost):**

1. "DeepSeek V3 Base" -> RL (Training Algorithm) with "Reasoning Prompts" (Prompts) focusing on "Accuracy & Format" (Rewards).

2. This leads to "DeepSeek R1 Zero".

3. "DeepSeek R1 Zero" -> Sampling on "Reasoning Prompts" (Prompts), with the sampled outputs passed through "Filter, Accuracy & Format" (Post-Processing) and "Refine DeepSeek V3+Human".
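
The rule-based "Accuracy & Format" reward and the filtering applied after sampling in Branch 1 can be sketched as follows. This is a minimal illustration under stated assumptions, not DeepSeek's implementation: the `<think>`/`<answer>` output template, the exact-match answer check, the equal weighting of the two signals, and all function names are hypothetical.

```python
import re

# Assumed output template: reasoning inside <think>...</think>, final answer
# inside <answer>...</answer>. The diagram only names "Accuracy & Format".
TEMPLATE = re.compile(r"^<think>(.+?)</think>\s*<answer>(.+?)</answer>$", re.DOTALL)


def format_reward(response: str) -> float:
    """1.0 if the response follows the assumed template, else 0.0."""
    return 1.0 if TEMPLATE.match(response.strip()) else 0.0


def accuracy_reward(response: str, reference: str) -> float:
    """1.0 if the extracted answer exactly matches the reference answer."""
    match = TEMPLATE.match(response.strip())
    if match is None:
        return 0.0
    return 1.0 if match.group(2).strip() == reference.strip() else 0.0


def rule_based_reward(response: str, reference: str) -> float:
    # Hypothetical equal-weight sum of the two rule-based signals.
    return accuracy_reward(response, reference) + format_reward(response)


def filter_samples(samples: list[str], reference: str) -> list[str]:
    """Keep only sampled responses that are both well formatted and correct."""
    return [s for s in samples if rule_based_reward(s, reference) == 2.0]


# Usage: three sampled responses to "What is 2 + 2?"
samples = [
    "<think>2 + 2 = 4</think> <answer>4</answer>",
    "<think>2 + 2 = 5</think> <answer>5</answer>",
    "The answer is 4",
]
print(filter_samples(samples, "4"))  # keeps only the first sample
```

Only the first sample survives: the second is well formatted but wrong, and the third is correct but ignores the template.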

**Branch 2 (Second from Left):**

1. "DeepSeek V3 Base" -> SFT (Training Algorithm) with "Cold Start Long CoT" (Prompts).

2. This leads to "DeepSeek R1 Dev-1".

3. "DeepSeek R1 Dev-1" -> RL (Training Algorithm) with "Reasoning Prompts" (Prompts) and "Rule-based Reward & Lang. Consistency" (Rewards).

4. This leads to "DeepSeek R1 Dev-2".
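
The diagram does not define the "Lang. Consistency" reward used in Branch 2. One plausible reading is a score for how much of the chain of thought stays in the target language. The sketch below assumes English is the target, treats any word containing non-ASCII letters as off-target (a crude stand-in for real language identification), and simply adds the score to a rule-based reward; the function names and the additive combination are hypothetical.

```python
import re

# A "word" is any run of Unicode letters (no digits or underscores).
WORD = re.compile(r"[^\W\d_]+")


def language_consistency_reward(chain_of_thought: str) -> float:
    """Fraction of words in the chain of thought that are in the target language.

    Assumption: the target language is English, approximated as "all-ASCII word".
    """
    words = WORD.findall(chain_of_thought)
    if not words:
        return 0.0
    on_target = sum(1 for w in words if w.isascii())
    return on_target / len(words)


def combined_reward(rule_based: float, chain_of_thought: str) -> float:
    # Hypothetical combination: add the consistency term to the rule-based reward.
    return rule_based + language_consistency_reward(chain_of_thought)


# Usage: a chain of thought that drifts into Chinese for one word.
print(language_consistency_reward("Let 我们 compute the sum"))  # 4 of 5 words on-target
print(combined_reward(1.0, "all english words"))
```

A mixed-language chain of thought scores below 1.0, so the combined reward favors responses that reason entirely in the prompt's language.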

**Branch 3 (Second from Right):**

1. "DeepSeek V3 Base" -> SFT (Training Algorithm) with "Non-Reasoning" (Prompts) and "Reasoning" (Prompts).

2. This leads to "DeepSeek R1 Dev-3".

3. "DeepSeek R1 Dev-3" -> RL (Training Algorithm) with "Diverse Prompts" (Prompts) and "Rule-based Reward & Preference Reward" (Rewards).

4. This leads to "DeepSeek R1".
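
Branch 3 pairs "Rule-based Reward" with "Preference Reward" over "Diverse Prompts". One simple way to picture this, assumed here rather than read from the diagram, is to route each prompt to a rule-based check when it has a verifiable reference answer and to a learned preference model otherwise. The `Prompt` class, the exact-match check, and the toy preference model are hypothetical stand-ins.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Prompt:
    text: str
    reference_answer: Optional[str] = None  # present only for verifiable prompts


# Hypothetical stand-in for a learned preference (reward) model:
# maps (prompt text, response) to a scalar score.
PreferenceModel = Callable[[str, str], float]


def rule_based(response: str, reference: str) -> float:
    # Minimal exact-match check on the final answer.
    return 1.0 if response.strip() == reference.strip() else 0.0


def route_reward(prompt: Prompt, response: str, preference_model: PreferenceModel) -> float:
    """Rule-based reward for verifiable prompts, preference score otherwise."""
    if prompt.reference_answer is not None:
        return rule_based(response, prompt.reference_answer)
    return preference_model(prompt.text, response)


# Usage with a toy preference model that simply prefers shorter responses.
def toy_preference(prompt_text: str, response: str) -> float:
    return 1.0 / (1 + len(response))


math_prompt = Prompt("What is 3 * 4?", reference_answer="12")
chat_prompt = Prompt("Write a haiku about rain.")
print(route_reward(math_prompt, "12", toy_preference))
print(route_reward(chat_prompt, "Soft rain on the roof", toy_preference))
```

Routing keeps cheap, exact checks wherever an answer can be verified and falls back to a learned judgment only for open-ended prompts.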

**Branch 4 (Rightmost):**

1. "DeepSeek V3 Base" -> Sampling. This branch does not lead to any further model development stages.

The "Sampling" steps are visually represented as arrows connecting different stages.
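
The branch structure described above can be encoded as a dependency graph and ordered with the standard-library `graphlib.TopologicalSorter` (Python 3.9+). The edges follow only the branch descriptions in this section; the labels "Sampling (R1 Zero)" and "Sampling (V3 Base)" are introduced here to keep the two sampling steps distinct, and any cross-branch edges the figure may contain are not modeled.

```python
from graphlib import TopologicalSorter

# Each key maps to the set of stages it depends on (its predecessors),
# following the four branches described in this section.
pipeline = {
    "DeepSeek R1 Zero": {"DeepSeek V3 Base"},          # Branch 1: RL
    "Sampling (R1 Zero)": {"DeepSeek R1 Zero"},        # Branch 1: sampling
    "DeepSeek R1 Dev-1": {"DeepSeek V3 Base"},         # Branch 2: SFT
    "DeepSeek R1 Dev-2": {"DeepSeek R1 Dev-1"},        # Branch 2: RL
    "DeepSeek R1 Dev-3": {"DeepSeek V3 Base"},         # Branch 3: SFT
    "DeepSeek R1": {"DeepSeek R1 Dev-3"},              # Branch 3: RL
    "Sampling (V3 Base)": {"DeepSeek V3 Base"},        # Branch 4
}

# static_order() yields every node after all of its predecessors.
order = list(TopologicalSorter(pipeline).static_order())
print(order)
```

Any valid order starts with "DeepSeek V3 Base", the only stage with no predecessors, and places each model after the stage that produces it.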

### Key Observations

* The development process is iterative: Branches 2 and 3 each apply SFT followed by RL, and Branch 1 samples the outputs of an earlier model ("DeepSeek R1 Zero") for further processing.

* Different prompt strategies ("Reasoning Prompts", "Cold Start Long CoT", "Non-Reasoning", "Diverse Prompts") are employed at various stages.

* Reward systems evolve from focusing on "Accuracy & Format" to incorporating "Lang. Consistency" and "Preference Reward".

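* The diagram shows several intermediate model versions ("Dev-1", "Dev-2", "Dev-3"). Within a branch they are produced one after another (e.g., "Dev-1" is trained further into "Dev-2"), while the branches themselves all start from the same base model.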

* The rightmost branch, starting with "DeepSeek V3 Base" and leading to "Sampling", appears to be a separate exploratory path.

### Interpretation

The diagram presents DeepSeek's development pipeline as a series of SFT and RL stages, each defined by its prompts and reward signals. The progression of rewards, from rule-based accuracy and format checks to language consistency and preference rewards, suggests the goal is to improve not only answer accuracy but also the overall quality of the model's responses. The multiple intermediate model versions suggest that different training configurations were explored before arriving at "DeepSeek R1". The "Sampling" steps likely represent generating data for later training stages or evaluating model outputs. Overall, the diagram emphasizes the interplay between training algorithms, prompt design, and reward design.

The diagram provides no quantitative data; it is a qualitative, high-level overview of the workflow. Understanding the specific parameters and configurations used at each stage would require additional details.