
## Bar Chart: EMD of Verbal and Internal Confidence Across Language Models

### Overview

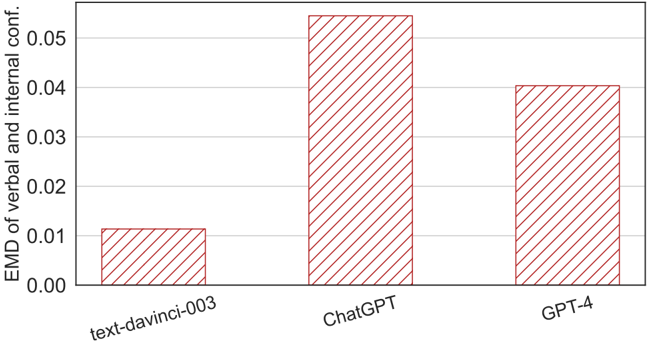

The image displays a vertical bar chart comparing three large language models on a metric labeled "EMD of verbal and internal conf." All bars share a single visual style, so the comparison rests entirely on bar height.

### Components/Axes

* **Chart Type:** Vertical Bar Chart.

* **Y-Axis:**

  * **Label:** "EMD of verbal and internal conf." (likely an abbreviation for "Earth Mover's Distance of verbal and internal confidence").

  * **Scale:** Linear, with labeled ticks from 0.00 to 0.05; the tallest bar extends slightly past the top tick.

  * **Major Ticks:** Marked at intervals of 0.01 (0.00, 0.01, 0.02, 0.03, 0.04, 0.05).

* **X-Axis:**

  * **Label:** Not explicitly labeled, but categories are model names.

  * **Categories (from left to right):** "text-davinci-003", "ChatGPT", "GPT-4".

* **Data Series & Legend:**

  * There is no separate legend box. All three bars share the same visual style: a white fill with a pattern of diagonal red lines (hatching) running from top-left to bottom-right.

* **Spatial Layout:** The chart is contained within a rectangular frame. The y-axis is on the left, and the x-axis category labels are centered below their respective bars. No title or caption is visible within the cropped image.

### Detailed Analysis

The chart presents the Earth Mover's Distance (EMD) value for each model. EMD is a measure of the distance between two probability distributions; in this context, it quantifies the discrepancy between a model's stated (verbal) confidence and its inferred (internal) confidence. A lower EMD indicates better alignment between these two confidence measures.
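To make the metric concrete, the following is a minimal sketch of a one-dimensional EMD between two confidence histograms. The source does not state how the plotted EMD values were computed, so the binning, the function name `emd_1d`, and the two example histograms are all assumptions chosen purely for illustration.

```python
from itertools import accumulate


def emd_1d(p, q, bin_width=1.0):
    """EMD between two histograms defined over the same equally spaced bins.

    For 1-D distributions on a shared grid, the EMD equals the summed
    absolute difference of the two cumulative distributions times the
    spacing between bins.
    """
    if len(p) != len(q):
        raise ValueError("histograms must have the same number of bins")
    total_p, total_q = sum(p), sum(q)
    # Normalise so that each histogram sums to 1.
    p = [x / total_p for x in p]
    q = [x / total_q for x in q]
    cdf_p = accumulate(p)
    cdf_q = accumulate(q)
    return bin_width * sum(abs(a - b) for a, b in zip(cdf_p, cdf_q))


# Hypothetical confidence histograms over five bins of width 0.2
# (illustrative numbers only, not data from the chart).
verbal = [0.0, 0.1, 0.2, 0.3, 0.4]
internal = [0.0, 0.1, 0.2, 0.4, 0.3]

print(emd_1d(verbal, internal, bin_width=0.2))  # about 0.02
print(emd_1d(verbal, verbal, bin_width=0.2))    # identical distributions give 0.0
```

Moving 0.1 of probability mass by one bin of width 0.2 costs about 0.02, which is the same order of magnitude as the values on the chart. For raw samples rather than binned histograms, `scipy.stats.wasserstein_distance` computes the same quantity.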

1. **text-davinci-003:**

   * **Visual Trend:** This is the shortest bar, indicating the lowest EMD value.

   * **Estimated Value:** The top of the bar aligns just above the 0.01 grid line. **Approximate Value: 0.011** (with an uncertainty of ±0.001).

2. **ChatGPT:**

   * **Visual Trend:** This is the tallest bar, indicating the highest EMD value.

   * **Estimated Value:** The top of the bar extends above the 0.05 grid line, the highest labeled tick. **Approximate Value: 0.054** (with an uncertainty of ±0.002).

3. **GPT-4:**

   * **Visual Trend:** This bar is of intermediate height, shorter than ChatGPT but taller than text-davinci-003.

   * **Estimated Value:** The top of the bar aligns just above the 0.04 grid line. **Approximate Value: 0.041** (with an uncertainty of ±0.001).
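As a rough aid for checking these readings against the image, the chart can be approximately re-created from the estimated values. This is a sketch only: it assumes matplotlib is installed, the heights are the visual estimates above rather than the original data, and the function name `plot_emd` and the output filename are hypothetical.

```python
# Visual estimates read off the chart (not the original data).
MODELS = ["text-davinci-003", "ChatGPT", "GPT-4"]
EMD_ESTIMATES = [0.011, 0.054, 0.041]


def plot_emd(path="emd_bar_chart.png"):
    """Draw an approximate re-creation of the chart and save it to `path`."""
    import matplotlib
    matplotlib.use("Agg")  # render off-screen, no display required
    import matplotlib.pyplot as plt

    fig, ax = plt.subplots(figsize=(4, 3))
    # White fill with red back-diagonal hatching (top-left to bottom-right).
    ax.bar(MODELS, EMD_ESTIMATES, color="white", edgecolor="red", hatch="\\\\")
    ax.set_ylabel("EMD of verbal and internal conf.")
    ax.set_yticks([0.00, 0.01, 0.02, 0.03, 0.04, 0.05])
    fig.tight_layout()
    fig.savefig(path)
    plt.close(fig)
    return path
```

Calling `plot_emd()` writes a PNG that can be compared side by side with the original image to judge whether the estimated heights are plausible.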

### Key Observations

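* **Clear Hierarchy:** The EMD values follow a distinct ordering: text-davinci-003 < GPT-4 < ChatGPT. The chart shows no error bars, so the statistical significance of the differences cannot be judged from the image alone.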

* **Magnitude of Difference:** The EMD for ChatGPT (~0.054) is roughly 4.9 times that of text-davinci-003 (~0.011), and the EMD for GPT-4 (~0.041) is roughly 3.7 times that of text-davinci-003.

* **Non-Monotonic Progression:** Moving from the older model (text-davinci-003) to the newer ones, this metric does not improve (decrease) monotonically. ChatGPT shows a substantial increase in EMD over text-davinci-003, while GPT-4 shows a reduction relative to ChatGPT but remains well above text-davinci-003.
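The comparisons above can be checked with a few lines of arithmetic. The sketch below uses the estimated bar heights, so the ratios inherit the reading uncertainty of roughly ±0.001 to ±0.002 per bar; the variable names are illustrative.

```python
# Estimated bar heights, listed in the chart's left-to-right order.
emd = {"text-davinci-003": 0.011, "ChatGPT": 0.054, "GPT-4": 0.041}

# Ratio of each model's EMD to the text-davinci-003 baseline.
baseline = emd["text-davinci-003"]
ratios = {model: value / baseline for model, value in emd.items()}

# Models ranked from lowest to highest EMD.
ranking = sorted(emd, key=emd.get)

# Does EMD move in a single direction across the chart's model order?
values = list(emd.values())
pairs = list(zip(values, values[1:]))
is_monotonic = all(a <= b for a, b in pairs) or all(a >= b for a, b in pairs)

for model, ratio in ratios.items():
    print(f"{model}: {ratio:.1f}x baseline")
print("ranking:", ranking)
print("monotonic:", is_monotonic)
```

With these estimates, ChatGPT comes out at about 4.9 times the baseline and GPT-4 at about 3.7 times, and the sequence is not monotonic because the value rises and then falls.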

### Interpretation

This chart provides a quantitative snapshot of one alignment property, the consistency between expressed and underlying confidence, across three OpenAI models.

* **What the Data Suggests:** The transition from text-davinci-003 to ChatGPT (a model tuned for dialogue) was associated with a major increase in the discrepancy between verbal and internal confidence. One possible explanation is that ChatGPT's tuning process, while improving other capabilities such as conversational instruction following, inadvertently decoupled the model's stated confidence from its internal certainty. The chart alone cannot confirm this cause.

* **Relationship Between Elements:** The lower EMD for GPT-4 compared to ChatGPT suggests that the gap between verbal and internal confidence narrowed in the newer model, though it did not return to the level seen in text-davinci-003. This could reflect a different or more sophisticated alignment strategy in GPT-4.

* **Notable Anomalies/Implications:** The most striking finding is ChatGPT's large verbal-internal gap (high EMD) relative to both text-davinci-003 and GPT-4. For applications requiring reliable confidence estimates (e.g., high-stakes decision support, medical diagnosis, or factual verification), this metric is critical. The chart implies that users should be cautious about interpreting ChatGPT's stated confidence as an accurate reflection of its internal certainty, whereas the stated confidence of text-davinci-003 and, to a lesser extent, GPT-4 may track internal confidence more closely. More broadly, properties such as confidence consistency do not necessarily improve monotonically with each new release.