## Diagram: Neural Logic Machine (NLM) Architecture

### Overview

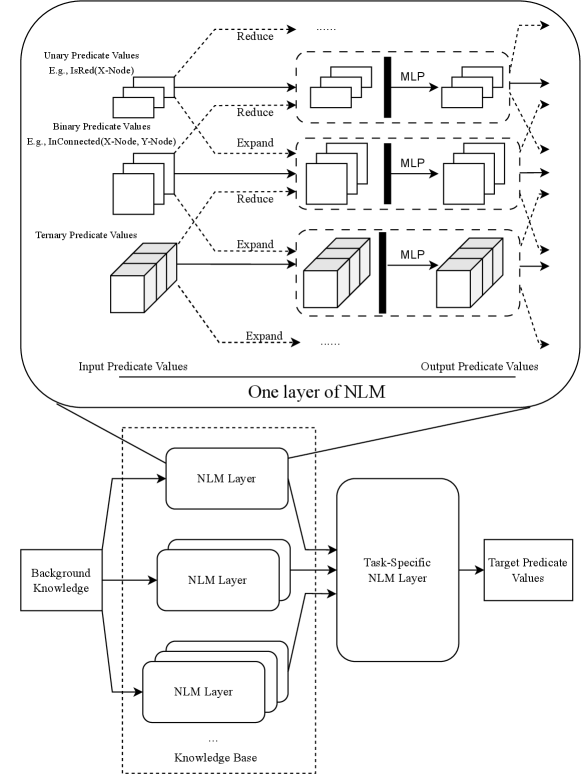

The image is a technical diagram illustrating the architecture of a Neural Logic Machine (NLM). It is divided into two main sections: a detailed view of a single NLM layer at the top, and a broader system view showing how multiple NLM layers are composed at the bottom. The diagram explains how logical predicates of different arities (unary, binary, ternary) are processed through a neural network structure involving reduction, expansion, and Multi-Layer Perceptron (MLP) operations.

### Components/Axes

The diagram is a flowchart with labeled boxes, arrows, and text annotations. There are no traditional chart axes. Key components and labels are:

**Top Section: "One layer of NLM"**

* **Input Side (Left):** Labeled "Input Predicate Values". Contains three distinct input blocks:

    1. **Unary Predicate Values:** Example given: `E.g., IsRed(X-Node)`. Represented by a stack of 2D squares.

    2. **Binary Predicate Values:** Example given: `E.g., IsConnected(X-Node, Y-Node)`. Represented by a stack of 3D cubes.

    3. **Ternary Predicate Values:** Represented by a stack of larger 3D cubes.

* **Processing Operations:** Arrows labeled with operations connect inputs to processing blocks:

    * `Reduce` (dashed arrows from Unary and Binary inputs).

    * `Expand` (dashed arrows from Binary and Ternary inputs).

* **Processing Blocks:** Three dashed-line boxes, each containing:

    * Input representation (squares/cubes).

    * A vertical black bar labeled `MLP` (Multi-Layer Perceptron).

    * Output representation (squares/cubes).

* **Output Side (Right):** Labeled "Output Predicate Values". Arrows point from the processing blocks to this side.
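The three input blocks can be pictured as tensors with one axis per predicate argument plus a trailing channel axis. The following is a minimal sketch under that assumption; the node count, the path-shaped graph, and the helper `arity` are hypothetical and do not come from the diagram.

```python
import numpy as np

n = 4  # hypothetical number of nodes in the graph

# Unary predicates: one value per node. Channel 0 plays the role of IsRed(x).
is_red = np.array([1.0, 0.0, 0.0, 1.0])
unary = is_red[:, None]                      # shape (n, 1)

# Binary predicates: one value per ordered node pair.
# Channel 0 plays the role of IsConnected(x, y) on the path 0-1-2-3.
is_connected = np.zeros((n, n))
for x, y in [(0, 1), (1, 2), (2, 3)]:
    is_connected[x, y] = is_connected[y, x] = 1.0
binary = is_connected[:, :, None]            # shape (n, n, 1)

# Ternary predicates: one value per ordered node triple (all false here).
ternary = np.zeros((n, n, n, 1))             # shape (n, n, n, 1)


def arity(values):
    """Number of object axes, i.e. every axis except the trailing channel axis."""
    return values.ndim - 1


for name, values in [("unary", unary), ("binary", binary), ("ternary", ternary)]:
    print(name, "arity", arity(values), "shape", values.shape)
```

Under this layout, the growing size of the shapes in the diagram corresponds to one extra object axis per additional argument.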

**Bottom Section: System Architecture**

* **Input (Far Left):** A box labeled `Background Knowledge`.

* **Core Processing (Center-Left):** A large dashed box labeled `Knowledge Base`. Inside are multiple stacked boxes labeled `NLM Layer`. Arrows show `Background Knowledge` feeding into each `NLM Layer`.

* **Task Processing (Center-Right):** A large rounded rectangle labeled `Task-Specific NLM Layer`. Arrows from all `NLM Layer` boxes in the Knowledge Base feed into this layer.

* **Output (Far Right):** A box labeled `Target Predicate Values`. An arrow points from the `Task-Specific NLM Layer` to this box.

### Detailed Analysis

The diagram details a hierarchical, modular neural network designed for logical reasoning.

**1. Single NLM Layer (Top Section):**

* **Data Flow:** The process is parallel for different predicate arities.

    * **Unary Path:** Unary predicate values (e.g., properties of a single node) undergo a `Reduce` operation, are processed by an MLP, and contribute to the output.

    * **Binary Path:** Binary predicate values (e.g., relationships between two nodes) can undergo both `Reduce` and `Expand` operations before MLP processing.

    * **Ternary Path:** Ternary predicate values (e.g., relationships among three nodes) undergo an `Expand` operation before MLP processing.

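* **Key Insight:** `Reduce` and `Expand` change the arity of the predicate tensors so that information can move between arity groups before each MLP applies the learned transformation. In the standard NLM formulation, `Expand` lifts arity-r predicates to arity r+1 by adding a variable they do not depend on, and `Reduce` lowers arity-(r+1) predicates to arity r by quantifying over one variable. Read that way, the `Reduce` arrow at the unary block most likely carries reduced binary predicates, the `Expand` arrow at the ternary block carries expanded binary predicates, and the binary block receives both. The output is a new set of "Output Predicate Values."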
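To make the single-layer data flow concrete, here is a minimal NumPy sketch restricted to the unary and binary groups. It assumes the usual NLM reading in which `Expand` raises arity and `Reduce` lowers it through max/min pooling (soft "exists" and "for all"). The function names, the one-layer sigmoid `mlp`, and the channel sizes are illustrative assumptions, and details of the full method (such as argument permutation) are left out.

```python
import numpy as np


def expand_arity(x):
    """Lift arity r to r+1: add a new object axis that the values ignore."""
    n = x.shape[0]
    return np.repeat(np.expand_dims(x, axis=-2), n, axis=-2)


def reduce_arity(x):
    """Lower arity r+1 to r by pooling over the last object axis.

    max approximates "exists", min approximates "for all".
    """
    return np.concatenate([x.max(axis=-2), x.min(axis=-2)], axis=-1)


def mlp(x, w, b):
    """One sigmoid layer applied to the channel axis, shared across object tuples."""
    return 1.0 / (1.0 + np.exp(-(x @ w + b)))


def nlm_layer(unary, binary, params):
    """Sketch of one layer for the unary and binary groups only."""
    # Unary block: own inputs plus binary predicates reduced to arity 1.
    unary_in = np.concatenate([unary, reduce_arity(binary)], axis=-1)
    # Binary block: own inputs plus unary predicates expanded to arity 2.
    binary_in = np.concatenate([binary, expand_arity(unary)], axis=-1)
    return mlp(unary_in, *params["unary"]), mlp(binary_in, *params["binary"])


rng = np.random.default_rng(0)
n = 3
unary = rng.integers(0, 2, size=(n, 2)).astype(float)   # 2 unary predicates
binary = np.zeros((n, n, 1))                            # 1 binary predicate
binary[0, 1, 0] = 1.0

params = {
    "unary": (rng.normal(size=(2 + 2 * 1, 5)), np.zeros(5)),   # 4 input channels
    "binary": (rng.normal(size=(1 + 2, 6)), np.zeros(6)),      # 3 input channels
}
unary_out, binary_out = nlm_layer(unary, binary, params)
print(unary_out.shape, binary_out.shape)
```

The same pattern would extend to the ternary group: its block would receive expanded binary predicates, and the binary block would additionally receive reduced ternary predicates.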

**2. Composed System (Bottom Section):**

* **Knowledge Base:** This is a stack of multiple `NLM Layer` modules. The diagram shows three explicitly, with an ellipsis (`...`) indicating more. The stacking suggests a deep architecture, although the arrows described below feed each layer directly from `Background Knowledge` rather than from the preceding layer.

* **Information Flow:** `Background Knowledge` (the initial set of facts or predicates) is fed as input to every layer in the Knowledge Base in parallel. The outputs of all these layers are then aggregated.

* **Task Specialization:** The aggregated output from the Knowledge Base is fed into a final, dedicated `Task-Specific NLM Layer`. This layer's role is to transform the general knowledge representations into the specific `Target Predicate Values` required for a given task.
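The composition described above can be sketched as follows. This is an illustration only: `toy_layer` is a hypothetical stand-in for a full NLM layer, and concatenation along the channel axis is an assumed aggregation rule, since the diagram description does not say how the layer outputs are combined.

```python
import numpy as np


def toy_layer(x, w):
    """Stand-in for an NLM layer: a sigmoid-activated linear map over channels."""
    return 1.0 / (1.0 + np.exp(-(x @ w)))


def run_system(background, kb_weights, task_w):
    # Every Knowledge Base layer reads the same background knowledge.
    kb_outputs = [toy_layer(background, w) for w in kb_weights]
    # Assumed aggregation: concatenate layer outputs along the channel axis.
    aggregated = np.concatenate(kb_outputs, axis=-1)
    # The task-specific layer maps the aggregate to the target predicates.
    return toy_layer(aggregated, task_w)


rng = np.random.default_rng(0)
background = rng.integers(0, 2, size=(4, 3)).astype(float)  # 4 objects, 3 predicates
kb_weights = [rng.normal(size=(3, 5)) for _ in range(3)]    # three KB layers
task_w = rng.normal(size=(3 * 5, 2))                        # 2 target predicates

target = run_system(background, kb_weights, task_w)
print(target.shape)
```

Because each layer in this sketch depends only on the background knowledge, the Knowledge Base layers could be evaluated independently before the task-specific layer runs.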

### Key Observations

* **Arity-Specific Processing:** The architecture explicitly handles logical predicates of different arities (unary, binary, ternary), each in its own processing block, with `Reduce` and `Expand` connecting the blocks.

* **Modularity and Depth:** The system is built from reusable `NLM Layer` blocks, allowing for scalable depth in the Knowledge Base.

* **Two-Stage Reasoning:** The design separates general knowledge processing (the stacked NLM Layers) from task-specific inference (the final Task-Specific NLM Layer).

* **Visual Encoding:** The diagram uses the dimensionality and size of shapes (2D squares for unary, 3D cubes for binary, larger 3D cubes for ternary) to visually represent the arity of the predicates.

### Interpretation

This diagram illustrates a neuro-symbolic architecture that bridges connectionist neural networks with symbolic logic. The NLM is designed to learn and reason over structured, relational data represented as logical predicates.

* **What it demonstrates:** The core innovation is the structured processing within a single layer. Instead of a monolithic neural network, it uses arity-specific pathways with dimensionality manipulation (`Reduce`/`Expand`) followed by an MLP. This likely allows the model to learn rules and inferences that respect the logical structure of the data (e.g., properties of objects vs. relationships between objects).

* **Relationship between elements:** The bottom diagram shows how complex reasoning is achieved by composing simple, uniform NLM layers. The Knowledge Base acts as a general-purpose reasoning engine, processing the background knowledge into increasingly abstract or refined representations. The Task-Specific layer then acts as a "conclusion drawer," mapping these rich representations to the desired output for a particular problem (e.g., answering a query, classifying a scene).

* **Notable design choice:** The parallel input of `Background Knowledge` to all Knowledge Base layers is significant. It suggests that each layer can access the original facts, potentially allowing different layers to specialize in different types of inference or levels of abstraction without losing access to the base data. This resembles the residual or dense (skip) connection patterns used in deep networks, applied here to logical reasoning.

**Language Note:** All text in the diagram is in English.