## Histogram: Model Confidence vs. Proportion of Agreement/Disagreement

### Overview

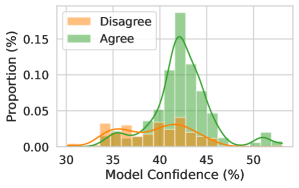

The image is a histogram showing the distribution of model confidence levels, separated by whether the model's prediction agreed or disagreed with a human annotator. The x-axis represents the model's confidence (in percentage), and the y-axis represents the proportion of instances (in percentage). Two distributions are plotted: one for instances where the model disagreed (orange) and one for instances where the model agreed (green).
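The two distributions described above could be computed along these lines. This is a minimal sketch, not the method used to produce the figure: the function name `proportion_histogram`, its inputs, and the choice to normalize each group by its own total are all assumptions, since the figure does not state how the proportions were normalized.

```python
from bisect import bisect_right


def proportion_histogram(confidence, agreed, bin_edges):
    """Return per-group bin proportions for confidence values.

    confidence: iterable of confidence values in percent (hypothetical input).
    agreed:     iterable of booleans, True where the model agreed with the annotator.
    bin_edges:  sorted list of bin boundaries, e.g. [30, 35, 40, 45, 50].

    Bins are half-open [lo, hi), except the last bin, which also includes
    its upper edge. Values outside the edges are ignored.
    Assumption: each group is normalized by its own in-range count.
    """
    n_bins = len(bin_edges) - 1
    counts = {"agree": [0] * n_bins, "disagree": [0] * n_bins}
    for value, is_agree in zip(confidence, agreed):
        if value < bin_edges[0] or value > bin_edges[-1]:
            continue
        index = min(bisect_right(bin_edges, value) - 1, n_bins - 1)
        counts["agree" if is_agree else "disagree"][index] += 1

    proportions = {}
    for label, group_counts in counts.items():
        total = sum(group_counts)
        proportions[label] = [c / total if total else 0.0 for c in group_counts]
    return proportions
```

Normalizing per group makes the two shapes comparable even when one group has far more instances than the other; normalizing by the combined total would be an equally plausible reading of a "Proportion" axis.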

### Components/Axes

* **X-axis:** Model Confidence (%), ranging from 30% to 50%. Increments are not explicitly marked, but the axis spans 20 percentage points.

* **Y-axis:** Proportion (%), ranging from 0.00% to 0.15%. Increments are not explicitly marked.

* **Legend:** Located in the top-left corner.

  * Orange: Disagree

  * Green: Agree

### Detailed Analysis

* **Disagree (Orange):**

  * The distribution is relatively flat, with a small peak around 35% confidence.

  * There is a small bump around 50% confidence.

* **Agree (Green):**

  * The distribution is concentrated around 42-45% confidence, with a clear peak around 43%.

  * There is a small bump around 50% confidence, at the upper end of the plotted range.

### Key Observations

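* The model tends to be more confident when it agrees with human annotators: the "Agree" distribution peaks around 43% confidence, while the "Disagree" distribution peaks around 35%.

* The distribution of confidence levels is much narrower for instances where the model agrees than for instances where it disagrees.

* Disagreements are spread across the confidence range rather than concentrated at the high end, apart from a small bump around 50%.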
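The first two observations can be checked numerically by comparing the center and spread of each group. The sketch below is illustrative only: `group_summary` is a hypothetical helper, and it assumes the raw per-instance confidence values and agreement labels are available, which the figure alone does not provide.

```python
from statistics import mean, pstdev


def group_summary(confidence, agreed):
    """Return (mean, population standard deviation) of confidence per group.

    confidence: iterable of confidence values in percent (hypothetical input).
    agreed:     iterable of booleans, True where the model agreed with the annotator.
    A group with no instances maps to None.
    """
    groups = {"agree": [], "disagree": []}
    for value, is_agree in zip(confidence, agreed):
        groups["agree" if is_agree else "disagree"].append(value)

    summary = {}
    for label, values in groups.items():
        summary[label] = (mean(values), pstdev(values)) if values else None
    return summary
```

A higher mean for `"agree"` would support the first observation, and a smaller standard deviation for `"agree"` would support the second.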

### Interpretation

The histogram suggests that the model's confidence is a useful indicator of whether it agrees with human annotators, which serves here as a proxy for accuracy. Within the plotted 30-50% range, higher confidence is associated with agreement and lower confidence with disagreement. The concentration of "Agree" instances around 42-45% confidence suggests that this is the model's typical confidence level when it agrees with the annotator. The flatter distribution of "Disagree" instances indicates that confidence is less informative when the model disagrees: disagreements occur across the whole range rather than at one characteristic level. The small bump around 50% confidence in both distributions might indicate a specific type of input that receives confidence near 50% whether or not the model agrees with the annotator.
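One way to test this interpretation directly is to compute the agreement rate within each confidence bin: if confidence tracks agreement, the rate should rise from the low bins to the high bins. The following is a rough sketch under stated assumptions; `agreement_rate_by_bin` and its inputs are hypothetical and do not come from the figure.

```python
from bisect import bisect_right


def agreement_rate_by_bin(confidence, agreed, bin_edges):
    """Return the fraction of agreeing instances in each confidence bin.

    confidence: iterable of confidence values in percent (hypothetical input).
    agreed:     iterable of booleans, True where the model agreed with the annotator.
    bin_edges:  sorted list of bin boundaries, e.g. [30, 40, 50].

    Bins are half-open [lo, hi), except the last bin, which also includes
    its upper edge. Empty bins yield None instead of a rate.
    """
    n_bins = len(bin_edges) - 1
    totals = [0] * n_bins
    agrees = [0] * n_bins
    for value, is_agree in zip(confidence, agreed):
        if value < bin_edges[0] or value > bin_edges[-1]:
            continue
        index = min(bisect_right(bin_edges, value) - 1, n_bins - 1)
        totals[index] += 1
        if is_agree:
            agrees[index] += 1
    return [a / t if t else None for a, t in zip(agrees, totals)]
```

Unlike the per-group histogram, this view accounts for how many instances fall in each group, so it is not misled when one group is much larger than the other.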