# Technical Data Extraction: Model Performance Analysis

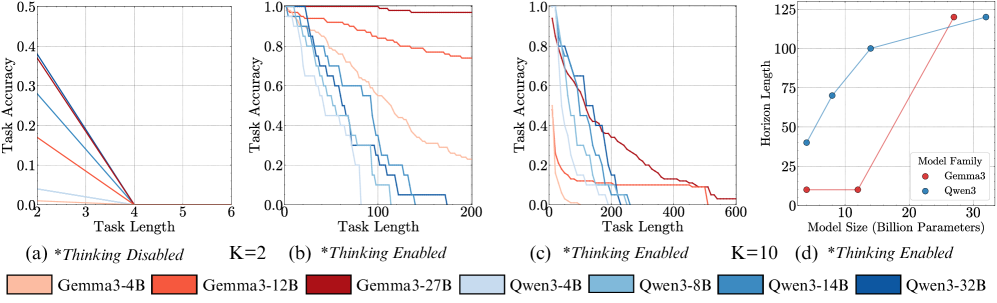

This document provides a comprehensive extraction of data and trends from the provided image, which consists of four technical charts (labeled a, b, c, and d) comparing the performance of two model families, **Gemma3** and **Qwen3**, across various task lengths and model sizes.

---

## 1. Global Legend and Metadata

The following models are represented across the charts, distinguished by color:

| Model Family | Color Code | Model Name |
| :--- | :--- | :--- |
| **Gemma3** | Light Orange | Gemma3-4B |
| | Medium Orange | Gemma3-12B |
| | Dark Red | Gemma3-27B |
| **Qwen3** | Very Light Blue | Qwen3-4B |
| | Light Blue | Qwen3-8B |
| | Medium Blue | Qwen3-14B |
| | Dark Blue | Qwen3-32B |
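If you want to re-plot these charts, the legend above can be captured as a small lookup table. The sketch below is a hypothetical helper and is not part of the source. `LEGEND`, `model_size_billions`, and `models_by_family` are illustrative names. The colors are the extracted descriptions, not exact values. The size is parsed from the nominal `-<N>B` suffix in each model name, which is the quantity plotted on Chart (d)'s x-axis.

```python
import re

# Legend from Section 1: model name -> (family, extracted color description).
# Color strings are descriptive, not exact hex values (assumption).
LEGEND = {
    "Gemma3-4B": ("Gemma3", "Light Orange"),
    "Gemma3-12B": ("Gemma3", "Medium Orange"),
    "Gemma3-27B": ("Gemma3", "Dark Red"),
    "Qwen3-4B": ("Qwen3", "Very Light Blue"),
    "Qwen3-8B": ("Qwen3", "Light Blue"),
    "Qwen3-14B": ("Qwen3", "Medium Blue"),
    "Qwen3-32B": ("Qwen3", "Dark Blue"),
}

# Matches a trailing "-<number>B", e.g. "-14B" in "Qwen3-14B".
_SIZE_RE = re.compile(r"-(\d+(?:\.\d+)?)B$")


def model_size_billions(name: str) -> float:
    """Parse the nominal parameter count (in billions) from a model name."""
    match = _SIZE_RE.search(name)
    if match is None:
        raise ValueError(f"no size suffix in model name: {name!r}")
    return float(match.group(1))


def models_by_family(family: str) -> list[str]:
    """Return a family's models sorted by nominal size, smallest first."""
    names = [name for name, (fam, _) in LEGEND.items() if fam == family]
    return sorted(names, key=model_size_billions)


print(models_by_family("Qwen3"))
# ['Qwen3-4B', 'Qwen3-8B', 'Qwen3-14B', 'Qwen3-32B']
```

Sorting by the parsed size, rather than by the name string, keeps `Qwen3-14B` after `Qwen3-8B`. A plain string sort would put it first.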

---

## 2. Chart (a): *Thinking Disabled*, K=2

* **Y-Axis:** Task Accuracy (Scale: 0.0 to 0.5)

* **X-Axis:** Task Length (Scale: 2 to 6)

* **Trend Analysis:** All models exhibit a sharp, linear decline in accuracy as task length increases.

* **Data Points:**

    * At **Task Length 2**, accuracies range from ~0.01 (Qwen3-4B) to ~0.38 (Qwen3-32B and Gemma3-27B).

    * By **Task Length 4**, the accuracy for **all models** drops to 0.0.

* **Conclusion:** Without "Thinking" enabled, every model's accuracy is 0.0 from task length 4 onward, so no model handles tasks longer than length 3.

---

## 3. Chart (b): *Thinking Enabled*, K=2

* **Y-Axis:** Task Accuracy (Scale: 0.0 to 1.0)

* **X-Axis:** Task Length (Scale: 0 to 200)

* **Trend Analysis:** Enabling "Thinking" significantly extends the horizon. Performance degrades as task length increases, but larger models maintain high accuracy for much longer.

* **Key Observations:**

    * **Gemma3-27B (Dark Red):** Maintains near 1.0 accuracy across the entire range (0-200).

    * **Gemma3-12B (Medium Orange):** Slopes downward gradually, ending at ~0.75 accuracy at length 200.

    * **Qwen3-32B (Dark Blue):** Drops sharply after length 50, reaching 0.0 accuracy around length 175.

    * **Small Models (4B variants):** Accuracy drops to 0.0 before task length 100.

---

## 4. Chart (c): *Thinking Enabled*, K=10

* **Y-Axis:** Task Accuracy (Scale: 0.0 to 1.0)

* **X-Axis:** Task Length (Scale: 0 to 600)

* **Trend Analysis:** Increasing K to 10 further extends the operational range. All models eventually decay to zero accuracy, but the decay is more gradual than in Chart (b).

* **Key Observations:**

    * **Gemma3-27B (Dark Red):** The most resilient; accuracy stays above 0.5 until length ~200, then decays to near 0.0 by length 600.

    * **Qwen3-32B (Dark Blue):** Accuracy drops below 0.5 at length ~150 and reaches 0.0 by length ~250.

    * **Gemma3-4B (Light Orange):** Shows a very rapid decline, hitting near 0.0 before length 100.
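The document does not state how the Horizon Length in Chart (d) is derived from curves like these. One common convention is the task length at which accuracy first falls below a fixed threshold. That convention is an assumption here, not something the source confirms. The sketch below applies it to an accuracy-vs-length curve, with linear interpolation between sampled points. The function name, the 0.5 default threshold, and the sample curve are hypothetical.

```python
from typing import Optional, Sequence


def horizon_length(
    lengths: Sequence[float],
    accuracies: Sequence[float],
    threshold: float = 0.5,  # hypothetical default, not taken from the source
) -> Optional[float]:
    """Task length at which accuracy first drops below `threshold`.

    Assumes `lengths` is sorted ascending. Linearly interpolates between the
    last point at or above the threshold and the first point below it.
    Returns lengths[0] if the curve starts below the threshold, and None if
    it never drops below the threshold within the measured range.
    """
    if not lengths or len(lengths) != len(accuracies):
        raise ValueError("lengths and accuracies must be non-empty and equal length")
    if accuracies[0] < threshold:
        return lengths[0]
    for i in range(1, len(lengths)):
        if accuracies[i] < threshold:
            x0, x1 = lengths[i - 1], lengths[i]
            a0, a1 = accuracies[i - 1], accuracies[i]
            # Here a0 >= threshold > a1, so a0 - a1 > 0 (no division by zero).
            frac = (a0 - threshold) / (a0 - a1)
            return x0 + frac * (x1 - x0)
    return None


# Hypothetical curve for illustration only (not read from the charts).
lengths = [0, 100, 200, 300]
accuracies = [1.0, 0.75, 0.75, 0.25]
print(horizon_length(lengths, accuracies))  # 250.0
```

The threshold is a free parameter, so horizon values computed this way are only comparable across models when the same threshold and the same K are used.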

---

## 5. Chart (d): Horizon Length vs. Model Size

* **Y-Axis:** Horizon Length (Scale: 0 to 125)

* **X-Axis:** Model Size (Billion Parameters) (Scale: 0 to 30+)

* **Legend:** Located at the bottom right of the chart.

* **Trend Analysis:**

    * **Qwen3 (Blue Line):** Shows concave, roughly logarithmic growth. Horizon length rises steeply from 4B to 14B parameters (~40 to ~100), then levels off toward 32B (~120).

    * **Gemma3 (Red Line):** Shows a step-like pattern. Horizon length stays flat at ~10 for the 4B and 12B models, then jumps to ~120 for the 27B model.

* **Extracted Data Points (Approximate):**

| Model Family | Model Size (billion parameters) | Horizon Length |
| :--- | :--- | :--- |
| **Qwen3** | ~4 | ~40 |
| **Qwen3** | ~8 | ~70 |
| **Qwen3** | ~14 | ~100 |
| **Qwen3** | ~32 | ~120 |
| **Gemma3** | ~4 | ~10 |
| **Gemma3** | ~12 | ~10 |
| **Gemma3** | ~27 | ~120 |
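The sketch below puts numbers on the trend descriptions above, using the approximate Chart (d) values from the table. It computes how much horizon is gained per additional billion parameters between consecutive model sizes. It also fits horizon against ln(model size) by least squares. The `HORIZON` dict and both helper functions are hypothetical names introduced for illustration. With only three or four eyeballed points per family, the fitted coefficients are illustrative rather than meaningful estimates.

```python
import math

# Approximate (size in billions, horizon length) pairs from the Chart (d) table.
HORIZON = {
    "Qwen3": [(4, 40), (8, 70), (14, 100), (32, 120)],
    "Gemma3": [(4, 10), (12, 10), (27, 120)],
}


def gain_per_billion(points):
    """Horizon gained per extra billion parameters between consecutive sizes."""
    return [
        (s1, s2, (h2 - h1) / (s2 - s1))
        for (s1, h1), (s2, h2) in zip(points, points[1:])
    ]


def fit_log_linear(points):
    """Ordinary least-squares fit of horizon = a + b * ln(size); returns (a, b)."""
    xs = [math.log(s) for s, _ in points]
    ys = [h for _, h in points]
    n = len(points)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    b = sxy / sxx
    return mean_y - b * mean_x, b


for family, points in HORIZON.items():
    for s1, s2, g in gain_per_billion(points):
        print(f"{family} {s1}B -> {s2}B: {g:+.1f} horizon per B")
# Qwen3 4B -> 8B: +7.5 horizon per B
# Qwen3 8B -> 14B: +5.0 horizon per B
# Qwen3 14B -> 32B: +1.1 horizon per B
# Gemma3 4B -> 12B: +0.0 horizon per B
# Gemma3 12B -> 27B: +7.3 horizon per B

a, b = fit_log_linear(HORIZON["Qwen3"])
print(f"Qwen3 fit: horizon ~ {a:.1f} + {b:.1f} * ln(size)")
```

For Qwen3, the per-billion gains fall from 7.5 to 5.0 to about 1.1, which matches the "levels off" description. For Gemma3, a gain of 0.0 followed by about 7.3 is the step-like jump.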

---

## Summary of Findings

1. **Thinking Capability:** Enabling "Thinking" is the primary driver for handling longer task lengths. Without it, all models fail by task length 4.

2. **Scaling:** Larger models generally achieve longer horizon lengths (the ability to maintain accuracy over longer tasks). The trend is not strict: Gemma3-4B and Gemma3-12B both sit at ~10.

3. **Family Comparison:** Qwen3 models reach longer horizons at smaller parameter counts (4B-14B). Gemma3 needs 27B parameters to reach its maximum horizon, at which point it matches or slightly exceeds the largest Qwen3 model (both ~120 in Chart (d)).