## Bar Chart: Qualitative Assessments of Model Baseline Results

### Overview

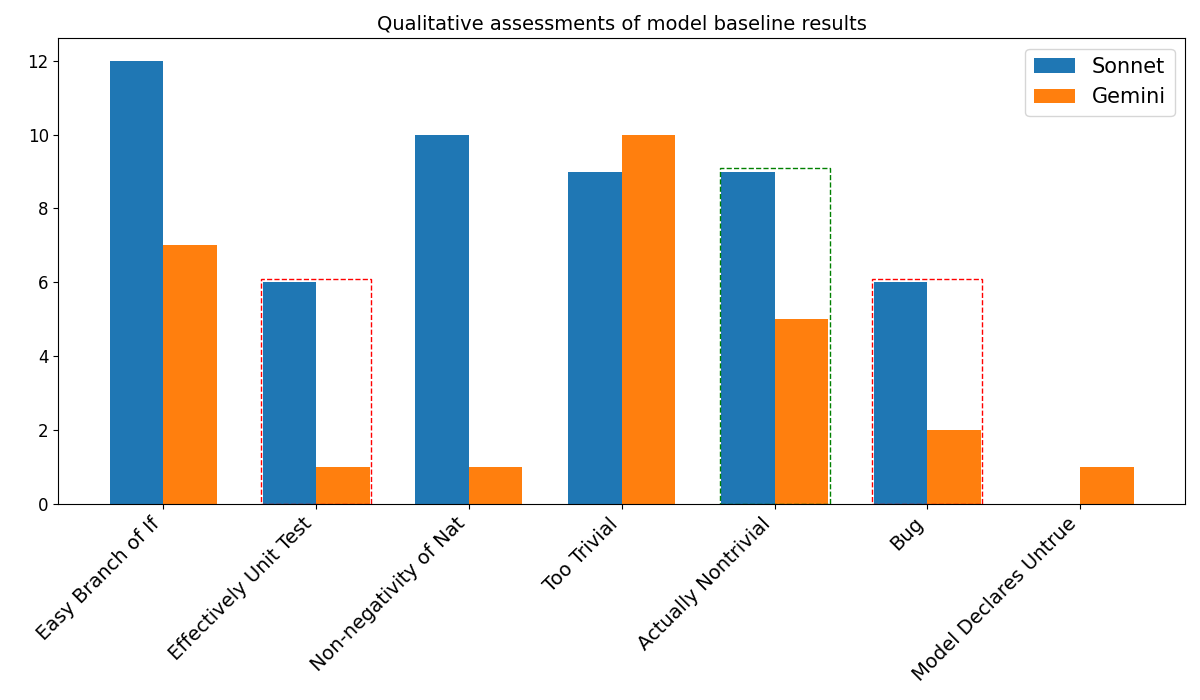

This is a grouped bar chart comparing the performance of two models, "Sonnet" and "Gemini," across seven qualitative assessment categories. The chart is titled "Qualitative assessments of model baseline results." The y-axis represents a numerical score or count, while the x-axis lists the assessment categories. The chart includes visual annotations (dashed boxes) highlighting specific data points.

### Components/Axes

* **Title:** "Qualitative assessments of model baseline results" (centered at the top).

* **Y-Axis:** Vertical axis on the left. It is labeled with numerical values from 0 to 12, with major tick marks at intervals of 2 (0, 2, 4, 6, 8, 10, 12). The axis line is solid black.

* **X-Axis:** Horizontal axis at the bottom. It lists seven categorical labels, rotated approximately 45 degrees for readability. The categories are:
    1. Easy Branch of If
    2. Effectively Unit Test
    3. Non-negativity of Nat
    4. Too Trivial
    5. Actually Nontrivial
    6. Bug
    7. Model Declares Untrue

* **Legend:** Located in the top-right corner of the chart area. It contains two entries:
    * A blue square labeled "Sonnet"
    * An orange square labeled "Gemini"

* **Data Series:** Two series of vertical bars, grouped by category.
    * **Sonnet (Blue Bars):** Represented by solid blue bars.
    * **Gemini (Orange Bars):** Represented by solid orange bars.

* **Annotations:** Three dashed red rectangular boxes and one dashed green rectangular box are drawn around specific bars, likely for emphasis or to indicate a point of interest.
    * A red dashed box surrounds the Gemini (orange) bar in the "Effectively Unit Test" category.
    * A red dashed box surrounds the Gemini (orange) bar in the "Non-negativity of Nat" category.
    * A green dashed box surrounds the Sonnet (blue) bar in the "Actually Nontrivial" category.
    * A red dashed box surrounds the Gemini (orange) bar in the "Bug" category.

### Detailed Analysis

**Data Point Extraction (Approximate Values):**

| Category | Sonnet (Blue) Value | Gemini (Orange) Value | Annotation |
| :--- | :--- | :--- | :--- |
| Easy Branch of If | 12 | 7 | None |
| Effectively Unit Test | 6 | 1 | Red dashed box around Gemini bar |
| Non-negativity of Nat | 10 | 1 | Red dashed box around Gemini bar |
| Too Trivial | 9 | 10 | None |
| Actually Nontrivial | 9 | 5 | Green dashed box around Sonnet bar |
| Bug | 6 | 2 | Red dashed box around Gemini bar |
| Model Declares Untrue | 0 | 1 | None |
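
The extracted values are enough to redraw an approximation of the chart. The following is a minimal sketch, assuming matplotlib is available; the function names `bar_offsets` and `plot_chart`, the output filename, the bar width, and the exact colors are illustrative choices rather than details taken from the original figure, and the values are the approximate readings from the table above.

```python
# Approximate values read off the chart; they are not exact source data.
CATEGORIES = [
    "Easy Branch of If",
    "Effectively Unit Test",
    "Non-negativity of Nat",
    "Too Trivial",
    "Actually Nontrivial",
    "Bug",
    "Model Declares Untrue",
]
SCORES = {
    "Sonnet": [12, 6, 10, 9, 9, 6, 0],
    "Gemini": [7, 1, 1, 10, 5, 2, 1],
}
# Bars that carry a dashed box in the original figure, with the box color.
ANNOTATIONS = {
    ("Effectively Unit Test", "Gemini"): "red",
    ("Non-negativity of Nat", "Gemini"): "red",
    ("Actually Nontrivial", "Sonnet"): "green",
    ("Bug", "Gemini"): "red",
}


def bar_offsets(n_series, width):
    """Horizontal offset of each series' bar from the center of its group."""
    return [(s - (n_series - 1) / 2) * width for s in range(n_series)]


def plot_chart(path="baseline_assessments.png", width=0.4):
    """Draw the grouped bar chart and save it to `path` (hypothetical filename)."""
    import matplotlib
    matplotlib.use("Agg")  # render off-screen so no display is needed
    import matplotlib.pyplot as plt
    from matplotlib.patches import Rectangle

    colors = {"Sonnet": "tab:blue", "Gemini": "tab:orange"}
    offsets = bar_offsets(len(SCORES), width)
    fig, ax = plt.subplots(figsize=(8, 4.5))

    for offset, (model, values) in zip(offsets, SCORES.items()):
        xs = [i + offset for i in range(len(CATEGORIES))]
        ax.bar(xs, values, width=width, label=model, color=colors[model])
        # Outline the annotated bars with a dashed, unfilled rectangle.
        for x, category, value in zip(xs, CATEGORIES, values):
            box_color = ANNOTATIONS.get((category, model))
            if box_color:
                ax.add_patch(Rectangle(
                    (x - width / 2, 0), width, value,
                    fill=False, linestyle="--",
                    edgecolor=box_color, linewidth=1.5,
                ))

    ax.set_xticks(list(range(len(CATEGORIES))))
    ax.set_xticklabels(CATEGORIES, rotation=45, ha="right")
    ax.set_yticks(list(range(0, 13, 2)))
    ax.set_title("Qualitative assessments of model baseline results")
    ax.legend(loc="upper right")
    fig.tight_layout()
    fig.savefig(path)
    plt.close(fig)
```

Calling `plot_chart()` writes a PNG to the working directory. The dashed rectangles here hug each bar exactly, which may differ from the padding used in the original image.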

**Trend Verification:**

* **Sonnet (Blue):** The trend is variable. It starts very high (12), drops significantly (6), rises again (10), dips slightly (9), holds steady (9), drops again (6), and finally falls to zero (0). The highest score is in "Easy Branch of If," and the lowest is in "Model Declares Untrue."

* **Gemini (Orange):** The trend is also variable but generally lower than Sonnet. It starts moderately high (7), drops very low (1, 1), spikes to its highest point (10), falls to a mid-level (5), drops again (2), and ends very low (1). Its peak is in "Too Trivial."

### Key Observations

1. **Performance Disparity:** Sonnet outperforms Gemini in 5 out of 7 categories ("Easy Branch of If," "Effectively Unit Test," "Non-negativity of Nat," "Actually Nontrivial," "Bug").

2. **Gemini's Strength:** Gemini scores higher than Sonnet in only two categories, each by a single point: "Too Trivial" (10 vs. 9) and "Model Declares Untrue" (1 vs. 0).

3. **Low Scores:** Very low scores (1 or 0) are concentrated in Gemini's series. Gemini scores 1 in "Effectively Unit Test," "Non-negativity of Nat," and "Model Declares Untrue." Sonnet's only such score is 0 in "Model Declares Untrue."

4. **Annotations:** The dashed boxes highlight specific low scores for Gemini (in "Effectively Unit Test," "Non-negativity of Nat," and "Bug") and a relatively high score for Sonnet (in "Actually Nontrivial"). This suggests these data points are considered particularly noteworthy, possibly indicating failures or successes worth investigating.

5. **Category "Model Declares Untrue":** This is the only category where one model (Sonnet) has a score of zero, while the other (Gemini) has a minimal score of 1.
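
The per-category comparisons can be checked mechanically. The sketch below tallies which model has the taller bar in each category, using the approximate values from the data table; the helper name `tally_leads` is illustrative, not something defined by the source.

```python
# (Sonnet, Gemini) values per category, as approximate readings from the chart.
SCORES = {
    "Easy Branch of If": (12, 7),
    "Effectively Unit Test": (6, 1),
    "Non-negativity of Nat": (10, 1),
    "Too Trivial": (9, 10),
    "Actually Nontrivial": (9, 5),
    "Bug": (6, 2),
    "Model Declares Untrue": (0, 1),
}


def tally_leads(scores):
    """Group categories by which model has the higher value."""
    leads = {"Sonnet": [], "Gemini": [], "tie": []}
    for category, (sonnet, gemini) in scores.items():
        if sonnet > gemini:
            leads["Sonnet"].append(category)
        elif gemini > sonnet:
            leads["Gemini"].append(category)
        else:
            leads["tie"].append(category)
    return leads


leads = tally_leads(SCORES)
for model, categories in leads.items():
    print(f"{model}: {len(categories)} -> {categories}")
```

With these values the tally gives Sonnet five categories and Gemini two ("Too Trivial" and "Model Declares Untrue"), with no ties. Because the inputs are visual estimates, the one-point margins are the least certain part of the comparison.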

### Interpretation

This chart provides a comparative snapshot of how two AI models (Sonnet and Gemini) perform on a set of qualitative benchmarks, likely related to code generation, reasoning, or logical tasks given the category names (e.g., "Unit Test," "Bug," "Branch of If").

* **What the data suggests:** If higher values are read as better, Sonnet appears to be the stronger or more reliable model across a broader range of these specific qualitative assessments. Its high score in "Easy Branch of If" would suggest strength in basic logical control flow, while its high score in "Non-negativity of Nat" might indicate robustness in handling mathematical or logical constraints. Gemini's peak in "Too Trivial" could imply it is adept at solving problems deemed simple, but it struggles significantly with others, as indicated by the very low scores highlighted by the red boxes. Because the y-axis may show counts rather than scores, and labels such as "Too Trivial" and "Bug" are not obviously favorable outcomes, this reading is tentative.

* **Relationship between elements:** The side-by-side bars allow for direct, category-by-category comparison. The annotations draw the viewer's eye to particular values: Gemini's low bars (1, 1, and 2) and a point of relative strength for Sonnet (9 in a category where Gemini scored 5). This framing suggests the chart's purpose is not just to show overall performance but to pinpoint specific areas of concern or advantage.

* **Notable anomalies:** The "Model Declares Untrue" category is an outlier. A score of zero for Sonnet is striking and could mean the model never produced this specific type of error in the assessment, or the test was not applicable. The near-zero scores for Gemini in multiple categories are also anomalous and indicate potential critical weaknesses in those areas.

* **Underlying message:** The chart likely aims to argue that model evaluation must be granular. While one model may be better overall, the other can have specific strengths. The annotations emphasize that failures in certain categories (like producing bugs or failing unit tests) are particularly important to note, even if the model performs well elsewhere. The data underscores the importance of diverse testing suites to capture the full performance profile of an AI model.