
## Charts: Validation Loss vs. FLOPS for Different Model Configurations

### Overview

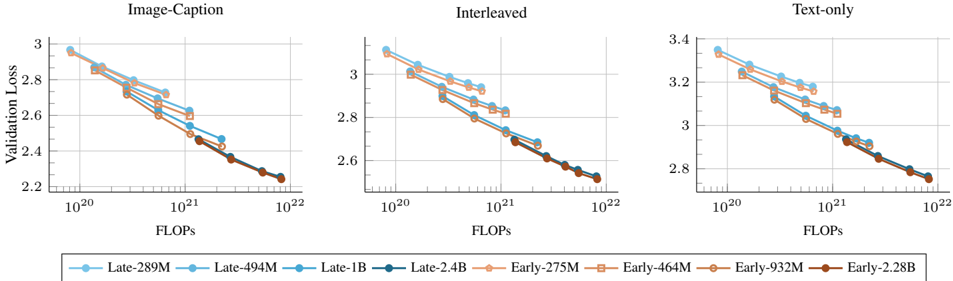

The image presents three charts, each plotting validation loss as a function of FLOPS (Floating Point Operations). The charts compare eight model configurations, four "Late" (Late-289M, Late-494M, Late-1B, Late-2.4B) and four "Early" (Early-275M, Early-464M, Early-932M, Early-2.28B), across three training scenarios: Image-Caption, Interleaved, and Text-only. All three charts use the same x-axis (FLOPS) and y-axis (Validation Loss); only the plotted curves differ.

### Components/Axes

* **X-axis:** FLOPS, ranging from 10<sup>20</sup> to 10<sup>22</sup> (logarithmic scale).

* **Y-axis:** Validation Loss, ranging from approximately 2.2 to 3.4.

* **Legend:** Located at the bottom of the image, containing the following model configurations and their corresponding colors:

    * Late-289M (Light Blue)

    * Late-494M (Turquoise)

    * Late-1B (Blue)

    * Late-2.4B (Dark Blue)

    * Early-275M (Orange)

    * Early-464M (Brown)

    * Early-932M (Dark Brown)

    * Early-2.28B (Dark Orange)

* **Chart Titles:**

    * Image-Caption (Top-Left)

    * Interleaved (Top-Center)

    * Text-only (Top-Right)

### Detailed Analysis

**Image-Caption Chart (Left)**

* **Late-289M (Light Blue):** Starts at approximately 3.05, decreases to approximately 2.35.

* **Late-494M (Turquoise):** Starts at approximately 3.0, decreases to approximately 2.3.

* **Late-1B (Blue):** Starts at approximately 3.0, decreases to approximately 2.25.

* **Late-2.4B (Dark Blue):** Starts at approximately 3.0, decreases to approximately 2.2.

* **Early-275M (Orange):** Starts at approximately 3.1, decreases to approximately 2.4.

* **Early-464M (Brown):** Starts at approximately 3.05, decreases to approximately 2.3.

* **Early-932M (Dark Brown):** Starts at approximately 3.0, decreases to approximately 2.25.

* **Early-2.28B (Dark Orange):** Starts at approximately 3.0, decreases to approximately 2.2.

**Interleaved Chart (Center)**

* **Late-289M (Light Blue):** Starts at approximately 3.05, decreases to approximately 2.35.

* **Late-494M (Turquoise):** Starts at approximately 3.0, decreases to approximately 2.3.

* **Late-1B (Blue):** Starts at approximately 3.0, decreases to approximately 2.25.

* **Late-2.4B (Dark Blue):** Starts at approximately 3.0, decreases to approximately 2.2.

* **Early-275M (Orange):** Starts at approximately 3.1, decreases to approximately 2.4.

* **Early-464M (Brown):** Starts at approximately 3.05, decreases to approximately 2.3.

* **Early-932M (Dark Brown):** Starts at approximately 3.0, decreases to approximately 2.25.

* **Early-2.28B (Dark Orange):** Starts at approximately 3.0, decreases to approximately 2.2.

**Text-only Chart (Right)**

* **Late-289M (Light Blue):** Starts at approximately 3.3, decreases to approximately 2.6.

* **Late-494M (Turquoise):** Starts at approximately 3.3, decreases to approximately 2.6.

* **Late-1B (Blue):** Starts at approximately 3.25, decreases to approximately 2.55.

* **Late-2.4B (Dark Blue):** Starts at approximately 3.25, decreases to approximately 2.5.

* **Early-275M (Orange):** Starts at approximately 3.35, decreases to approximately 2.7.

* **Early-464M (Brown):** Starts at approximately 3.3, decreases to approximately 2.6.

* **Early-932M (Dark Brown):** Starts at approximately 3.25, decreases to approximately 2.55.

* **Early-2.28B (Dark Orange):** Starts at approximately 3.25, decreases to approximately 2.5.

In all three charts, the lines generally slope downwards, indicating that validation loss decreases as FLOPS increase. Larger models (higher parameter counts) generally achieve lower validation loss for a given FLOPS value.
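The approximate readings listed above can be collected into a small table to compare how far each curve drops. The following is a minimal sketch: the names `READINGS` and `loss_drops` are hypothetical, and the numbers are only the eyeballed start and end values from the lists, not the underlying data.

```python
# Approximate (start, end) validation-loss readings transcribed from the lists above.
IMAGE_CAPTION = {
    "Late-289M": (3.05, 2.35), "Late-494M": (3.0, 2.3),
    "Late-1B": (3.0, 2.25), "Late-2.4B": (3.0, 2.2),
    "Early-275M": (3.1, 2.4), "Early-464M": (3.05, 2.3),
    "Early-932M": (3.0, 2.25), "Early-2.28B": (3.0, 2.2),
}

READINGS = {
    "Image-Caption": IMAGE_CAPTION,
    # The lists above give identical readings for the Interleaved panel.
    "Interleaved": dict(IMAGE_CAPTION),
    "Text-only": {
        "Late-289M": (3.3, 2.6), "Late-494M": (3.3, 2.6),
        "Late-1B": (3.25, 2.55), "Late-2.4B": (3.25, 2.5),
        "Early-275M": (3.35, 2.7), "Early-464M": (3.3, 2.6),
        "Early-932M": (3.25, 2.55), "Early-2.28B": (3.25, 2.5),
    },
}


def loss_drops(readings):
    """Return start-minus-end loss per model, rounded to the reading precision."""
    return {
        panel: {model: round(start - end, 2) for model, (start, end) in models.items()}
        for panel, models in readings.items()
    }


if __name__ == "__main__":
    for panel, drops in loss_drops(READINGS).items():
        print(panel)
        for model, drop in drops.items():
            print(f"  {model:12s} drop = {drop:.2f}")
```

Because the readings are only accurate to roughly 0.05, differences of that size between models should be treated as within reading error.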

### Key Observations

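* The "Late" models match or slightly outperform the size-comparable "Early" models in all three training scenarios. In the readings above, the final losses differ only for the smallest pair (Late-289M vs. Early-275M), by about 0.05 in Image-Caption and Interleaved and about 0.1 in Text-only.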

* The performance gap between the models tends to narrow as FLOPS increase.

* The "Text-only" scenario generally exhibits higher validation loss compared to the "Image-Caption" and "Interleaved" scenarios.

* The largest models (Late-2.4B and Early-2.28B) achieve the lowest validation loss, but the improvement diminishes with increasing FLOPS.
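One way to check the Late-versus-Early observation against the approximate readings is to pair each Late model with the Early model closest in size and compare final losses. This is a rough sketch: the pairing by size and the names `PAIRS` and `late_minus_early` are assumptions made for illustration, and the values are the eyeballed end points from the lists above.

```python
# Final validation-loss readings (approximate), paired by comparable model size.
# Each entry: (late_model, early_model, late_final_loss, early_final_loss).
# The pairing itself is an assumption; the source does not define pairs.
_VISION_PAIRS = [
    ("Late-289M", "Early-275M", 2.35, 2.40),
    ("Late-494M", "Early-464M", 2.30, 2.30),
    ("Late-1B", "Early-932M", 2.25, 2.25),
    ("Late-2.4B", "Early-2.28B", 2.20, 2.20),
]

PAIRS = {
    "Image-Caption": _VISION_PAIRS,
    "Interleaved": list(_VISION_PAIRS),  # identical readings in the lists above
    "Text-only": [
        ("Late-289M", "Early-275M", 2.60, 2.70),
        ("Late-494M", "Early-464M", 2.60, 2.60),
        ("Late-1B", "Early-932M", 2.55, 2.55),
        ("Late-2.4B", "Early-2.28B", 2.50, 2.50),
    ],
}


def late_minus_early(pairs):
    """Return the final-loss gap per pair; a negative gap means Late is lower."""
    return {
        panel: [(late, early, round(late_loss - early_loss, 2))
                for late, early, late_loss, early_loss in rows]
        for panel, rows in pairs.items()
    }


if __name__ == "__main__":
    for panel, rows in late_minus_early(PAIRS).items():
        print(panel)
        for late, early, gap in rows:
            print(f"  {late} vs {early}: gap = {gap:+.2f}")
```

With these readings the gap is nonzero only for the smallest pair in each panel, which is within or near the precision of reading values off the chart.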

### Interpretation

The charts relate model size (the parameter count in each model name), computational cost (FLOPS), and model performance (validation loss) across the three training scenarios. The "Late" models' edge suggests that the Late configuration may be more effective, although in the readings above the gap is small and appears mainly at the smallest model size. Validation loss falls as FLOPS increase, but with diminishing returns: because the x-axis is logarithmic, each further reduction in loss requires a multiplicative increase in compute, so there may be a point beyond which additional compute yields little improvement.

The higher validation loss in the "Text-only" scenario may indicate that the models need more computational resources or a different architecture to reach comparable loss on text. However, losses measured on different validation data are not directly comparable, so this reading is tentative. Overall, the charts are useful for choosing a model size and compute budget that balance performance against cost.
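The diminishing returns can be made concrete with a back-of-the-envelope calculation. The sketch below assumes a power-law form, loss = a · C^(−b), which is a common modeling choice and not something the charts state. It also assumes a curve spans the full x-axis range (10<sup>20</sup> to 10<sup>22</sup> FLOPS) and uses the approximate Late-2.4B Image-Caption readings (3.0 to 2.2). The function names are hypothetical.

```python
import math


def scaling_exponent(c_start, loss_start, c_end, loss_end):
    """Exponent b in loss = a * C**(-b), estimated from two (compute, loss) points."""
    return math.log(loss_start / loss_end) / math.log(c_end / c_start)


def predicted_loss(c, c_ref, loss_ref, b):
    """Loss at compute c under the assumed power law, anchored at (c_ref, loss_ref)."""
    return loss_ref * (c / c_ref) ** (-b)


# Assumed endpoints: the axis range and approximate Late-2.4B Image-Caption readings.
C_START, L_START = 1e20, 3.0
C_END, L_END = 1e22, 2.2

b = scaling_exponent(C_START, L_START, C_END, L_END)
loss_mid = predicted_loss(1e21, C_START, L_START, b)

# Loss reduction bought by each successive 10x increase in compute.
gain_first_decade = L_START - loss_mid
gain_second_decade = loss_mid - L_END

if __name__ == "__main__":
    print(f"estimated exponent b = {b:.4f}")
    print(f"gain from 1e20 to 1e21 FLOPS: {gain_first_decade:.3f}")
    print(f"gain from 1e21 to 1e22 FLOPS: {gain_second_decade:.3f}")
```

Under these assumptions the second tenfold increase in compute buys a smaller loss reduction than the first, even though it costs ten times as many FLOPS. A two-point estimate like this is only illustrative; fitting an exponent properly would need the full curves.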