## System Architecture Diagram: Symbolic Reasoning Pipeline

### Overview

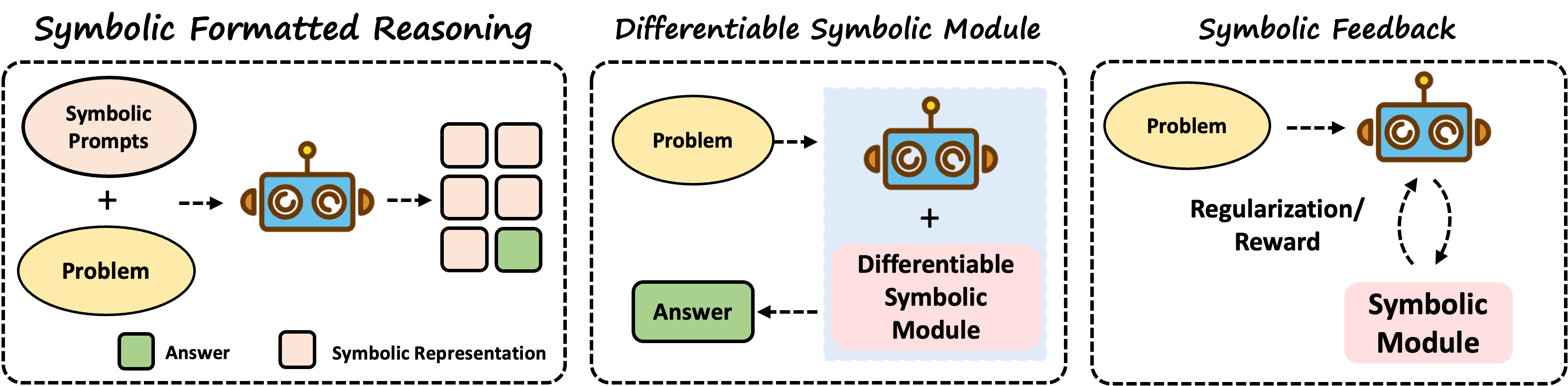

The image displays a three-part technical diagram illustrating a symbolic reasoning system architecture. The diagram is divided into three horizontally arranged, dashed-border rectangular modules, each representing a distinct phase of a process. The overall flow moves from left to right, depicting a pipeline that transforms symbolic inputs into structured outputs, incorporates a differentiable module, and includes a feedback mechanism. The visual style uses simple icons (a robot), colored shapes (ovals, rectangles), and directional arrows to indicate data flow and component relationships.

### Components/Flow

The diagram is segmented into three primary modules, each with a title at the top:

**1. Left Module: Symbolic Formatted Reasoning**

* **Title:** "Symbolic Formatted Reasoning" (top center of the module).

* **Inputs (Left Side):**

    * A beige oval labeled **"Symbolic Prompts"**.

    * A yellow oval labeled **"Problem"**.

    * A plus sign (`+`) between them, indicating they are combined.

* **Processing Unit (Center):** A blue and brown robot icon, representing an AI or processing agent.

* **Output (Right Side):** A 3x3 grid of squares.

    * Eight squares are beige.

    * One square (bottom-right) is green.

* **Legend (Bottom of Module):**

    * A green square labeled **"Answer"**.

    * A beige square labeled **"Symbolic Representation"**.

* **Flow:** Dashed arrows show the flow: `Symbolic Prompts + Problem` --> `Robot` --> `Grid of Symbolic Representations and Answer`.
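
The left module's flow can be sketched in code. This is a minimal illustration under stated assumptions, not the system's actual implementation: `ReasoningTrace`, `build_input`, `parse_trace`, `toy_model`, and the `ANSWER:` line convention are hypothetical names and formats chosen for the example, and `toy_model` is a hard-coded stand-in for whatever model the robot icon represents.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class ReasoningTrace:
    symbolic_steps: List[str]  # beige squares: intermediate symbolic representations
    answer: str                # green square: the final answer


def build_input(symbolic_prompt: str, problem: str) -> str:
    """Combine the two inputs, mirroring the '+' in the diagram."""
    return f"{symbolic_prompt}\n\nProblem: {problem}"


def parse_trace(model_output: str) -> ReasoningTrace:
    """Split model output into symbolic steps and a final answer.

    Assumes (hypothetically) that the answer line starts with 'ANSWER:'.
    """
    steps: List[str] = []
    answer = ""
    for line in model_output.splitlines():
        line = line.strip()
        if not line:
            continue
        if line.startswith("ANSWER:"):
            answer = line[len("ANSWER:"):].strip()
        else:
            steps.append(line)
    return ReasoningTrace(steps, answer)


def toy_model(prompt: str) -> str:
    """Hard-coded stand-in for the robot icon; a real system would call a model."""
    return "x = 3\ny = 4\nz = x + y\nANSWER: 7"


def symbolic_formatted_reasoning(
    symbolic_prompt: str,
    problem: str,
    model: Callable[[str], str] = toy_model,
) -> ReasoningTrace:
    """Symbolic Prompts + Problem -> model -> symbolic representations and answer."""
    return parse_trace(model(build_input(symbolic_prompt, problem)))


trace = symbolic_formatted_reasoning(
    "Write each step as an assignment, then a line 'ANSWER: <value>'.",
    "What is 3 + 4?",
)
```

Here `trace.symbolic_steps` holds the three intermediate lines and `trace.answer` is `"7"`, loosely matching the grid's beige squares and its single green square.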

**2. Center Module: Differentiable Symbolic Module**

* **Title:** "Differentiable Symbolic Module" (top center).

* **Input (Left):** A yellow oval labeled **"Problem"**.

* **Processing Unit (Center):** A composite element within a light blue background:

    * The same blue/brown robot icon.

    * A plus sign (`+`) below it.

    * A pink rectangle labeled **"Differentiable Symbolic Module"**.

* **Output (Bottom):** A green rectangle labeled **"Answer"**.

* **Flow:** A dashed arrow shows: `Problem` --> `[Robot + Differentiable Symbolic Module]` --> `Answer`.
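
One plausible reading of a "differentiable symbolic module" is a symbolic operation relaxed into a smooth function, so that gradients can pass through it to the model. The diagram does not specify the mechanism, so the sketch below is only an assumption-laden example: it uses the product t-norm as a soft logical AND, two scalar parameters `a` and `b` as a stand-in for the model, and hand-derived gradients. All function names are hypothetical.

```python
import math
from typing import List, Tuple


def sigmoid(x: float) -> float:
    return 1.0 / (1.0 + math.exp(-x))


def soft_and(p: float, q: float) -> float:
    """Product t-norm: a differentiable relaxation of logical AND."""
    return p * q


def loss_and_grads(a: float, b: float, target: float) -> Tuple[float, float, float]:
    """Squared error of soft_and(sigmoid(a), sigmoid(b)) and its gradients w.r.t. a and b."""
    p, q = sigmoid(a), sigmoid(b)
    out = soft_and(p, q)
    loss = (out - target) ** 2
    dloss_dout = 2.0 * (out - target)
    # Chain rule: d(out)/dp = q, d(out)/dq = p, d(sigmoid)/dx = s * (1 - s).
    grad_a = dloss_dout * q * p * (1.0 - p)
    grad_b = dloss_dout * p * q * (1.0 - q)
    return loss, grad_a, grad_b


def train(
    a: float = 0.0,
    b: float = 0.0,
    target: float = 1.0,
    lr: float = 1.0,
    steps: int = 200,
) -> Tuple[float, float, List[float]]:
    """Plain gradient descent on the stand-in model parameters a and b."""
    history: List[float] = []
    for _ in range(steps):
        loss, grad_a, grad_b = loss_and_grads(a, b, target)
        history.append(loss)
        a -= lr * grad_a
        b -= lr * grad_b
    return a, b, history


a, b, history = train()
final_answer = soft_and(sigmoid(a), sigmoid(b))
```

Because `soft_and` is smooth, the error on the final answer reaches the upstream parameters directly; a hard Boolean AND would give zero gradient almost everywhere.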

**3. Right Module: Symbolic Feedback**

* **Title:** "Symbolic Feedback" (top center).

* **Input (Left):** A yellow oval labeled **"Problem"**.

* **Processing Unit (Top Right):** The blue/brown robot icon.

* **Feedback Components (Bottom Right):**

    * Text: **"Regularization/Reward"**.

    * A pink rectangle labeled **"Symbolic Module"**.

* **Flow:** A complex, cyclical flow is indicated by dashed arrows:

    1. `Problem` --> `Robot`.

    2. A curved, double-headed dashed arrow connects the `Robot` and the `Symbolic Module`, passing through the "Regularization/Reward" text. This indicates a bidirectional feedback or training loop between the robot (agent) and the symbolic module, mediated by a regularization or reward signal.
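
The reward half of this loop can be illustrated with a toy example. The sketch below assumes the symbolic module acts as an exact checker that scores the agent's candidate answers, and that the agent is a softmax policy over a fixed candidate list; neither detail comes from the diagram, and every name here is hypothetical. It covers only the direction from the symbolic module to the agent, not the reverse direction of the double-headed arrow.

```python
import math
from typing import List, Tuple


def symbolic_reward(problem: Tuple[int, int], candidate: int) -> float:
    """Symbolic module: checks a candidate answer exactly and returns a reward."""
    x, y = problem
    return 1.0 if candidate == x + y else 0.0


def softmax(logits: List[float]) -> List[float]:
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(v - m) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]


def feedback_step(
    logits: List[float],
    problem: Tuple[int, int],
    candidates: List[int],
    lr: float = 1.0,
) -> Tuple[List[float], float]:
    """One exact (expected) policy-gradient step driven by symbolic rewards."""
    probs = softmax(logits)
    rewards = [symbolic_reward(problem, c) for c in candidates]
    expected = sum(p * r for p, r in zip(probs, rewards))
    # For a softmax policy, d E[reward] / d logit_i = pi_i * (r_i - E[reward]).
    grads = [p * (r - expected) for p, r in zip(probs, rewards)]
    new_logits = [v + lr * g for v, g in zip(logits, grads)]
    return new_logits, expected


problem = (3, 4)
candidates = [5, 6, 7, 8]
logits = [0.0, 0.0, 0.0, 0.0]
expected_rewards: List[float] = []
for _ in range(50):
    logits, expected = feedback_step(logits, problem, candidates)
    expected_rewards.append(expected)

best = candidates[max(range(len(candidates)), key=lambda i: logits[i])]
```

The expected reward starts at 0.25 (a uniform policy over four candidates) and rises as the policy shifts toward the candidate the checker accepts. The update uses the exact expected gradient rather than sampled answers, which keeps the example deterministic.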

### Detailed Analysis

* **Visual Consistency:** The same robot icon is used across all three modules, signifying a core agent or model. The color coding is consistent: yellow for "Problem," green for "Answer," beige for "Symbolic Representation," and pink for symbolic modules.

* **Spatial Layout:** The modules are arranged linearly from left to right, suggesting a sequential or comparative process. Within each module, inputs are generally on the left, processing in the center, and outputs or feedback on the right or bottom.

* **Arrow Types:** All connections use dashed arrows, which may imply logical, data, or gradient flow rather than a strict physical connection.

* **Text Transcription:** All text in the diagram is in English.

### Key Observations

1. **Progression of Complexity:** The left module shows a basic input-to-output transformation. The center module introduces a specialized "Differentiable Symbolic Module" as a core component. The right module adds a dynamic feedback loop, indicating an adaptive or learning system.

2. **Role of the "Problem":** The "Problem" oval is a constant input across all three modules, serving as the primary query or task for the system.

3. **Output Specificity:** Only the first module explicitly shows the structure of its output (a grid where most elements are symbolic representations and one is the final answer). The center module abstracts its output to a single "Answer" box, and the right module shows no explicit output element.

4. **Feedback Mechanism:** The third module is the only one depicting a closed loop: the Robot and the Symbolic Module are linked in both directions through the "Regularization/Reward" signal, so each can influence the other.

### Interpretation

This diagram illustrates a conceptual framework for integrating symbolic reasoning with differentiable (likely neural) components in an AI system.

* **Module 1 (Symbolic Formatted Reasoning)** represents a foundational stage where a problem, guided by symbolic prompts, is processed to produce both intermediate symbolic representations and a final answer. This suggests a structured, interpretable reasoning process.

* **Module 2 (Differentiable Symbolic Module)** proposes a hybrid architecture. Here, the core processing unit is not just a generic agent but a combination of the agent and a dedicated, differentiable symbolic module. This implies the system can learn or optimize symbolic operations through gradient-based methods, bridging connectionist and symbolic AI paradigms.

* **Module 3 (Symbolic Feedback)** introduces a feedback-driven learning or optimization process. The bidirectional arrow between the Robot and the Symbolic Module, mediated by "Regularization/Reward," suggests that the symbolic module supplies a training signal to the agent and may itself be refined in return. This could involve reinforcement learning (reward) or constraints to prevent overfitting (regularization).
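
The two readings of "Regularization/Reward" correspond to two common objective forms. As a sketch only, with every symbol introduced here for illustration (model parameters $\theta$, a task loss $\mathcal{L}_{\text{task}}$, a symbolic penalty $R_{\text{sym}}$ with weight $\lambda \ge 0$, a policy $\pi_\theta$, and a symbolic reward $r_{\text{sym}}$ for answer $y$ to problem $x$):

$$
\text{regularization:}\quad \min_{\theta}\; \mathcal{L}_{\text{task}}(\theta) + \lambda\, R_{\text{sym}}(\theta)
$$

$$
\text{reward:}\quad \max_{\theta}\; \mathbb{E}_{y \sim \pi_\theta(\cdot \mid x)}\big[\, r_{\text{sym}}(x, y) \,\big]
$$

The diagram does not indicate which form, if either, the system uses.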

**Overall Purpose:** The diagram presents a progression from static symbolic reasoning, to a hybrid differentiable system, to a system in which the agent and the symbolic module shape each other through feedback. It highlights the interplay between fixed symbolic structures ("Prompts," "Module") and adaptive learning processes ("Differentiable," "Feedback"). The consistent use of the "Problem" as an input grounds all three approaches in task-oriented problem-solving.