TECHNICAL ASSET FINGERPRINT

a9dc499ae0db9e279928047f

Click to view fullscreen

Press ESC or click to close

FOUND IN PAPERS

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

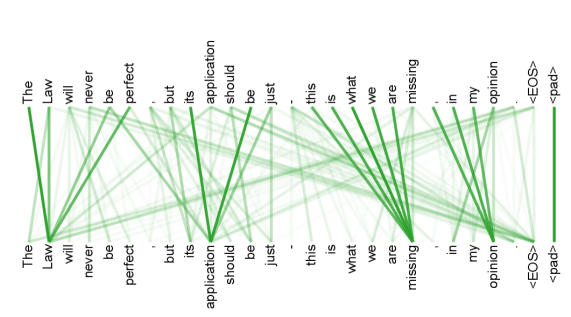

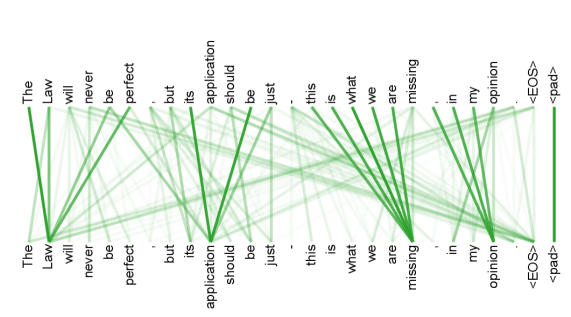

## Alignment Diagram: Sentence Alignment

### Overview

The image is an alignment diagram showing the relationships between two sentences. The diagram consists of two parallel lists of words, one above the other, with green lines connecting words in the top list to words in the bottom list. The thickness of the lines indicates the strength or frequency of the alignment.

### Components/Axes

* **Top List:** Contains the words: "The", "Law", "will", "never", "be", "perfect", ".", "but", "its", "application", "should", "be", "just", "-", "this", "is", "what", "we", "are", "missing", ".", "in", "my", "opinion", "<EOS>", "<pad>"

* **Bottom List:** Contains the words: "The", "Law", "will", "never", "be", "perfect", ".", "but", "its", "application", "should", "be", "just", "-", "this", "is", "what", "we", "are", "missing", ".", "in", "my", "opinion", "<EOS>", "<pad>"

* **Connections:** Green lines connect words from the top list to the bottom list, indicating alignment. The thickness of the lines varies, suggesting different levels of alignment strength.

### Detailed Analysis or Content Details

* **"The"**: The first "The" in the top list is strongly connected to the first "The" in the bottom list with a thick green line.

* **"Law"**: The word "Law" in the top list is strongly connected to the word "Law" in the bottom list with a thick green line.

* **"will"**: The word "will" in the top list is strongly connected to the word "will" in the bottom list with a thick green line.

* **"never"**: The word "never" in the top list is strongly connected to the word "never" in the bottom list with a thick green line.

* **"be"**: The word "be" in the top list is strongly connected to the word "be" in the bottom list with a thick green line.

* **"perfect"**: The word "perfect" in the top list is strongly connected to the word "perfect" in the bottom list with a thick green line.

* **"."**: The period in the top list is strongly connected to the period in the bottom list with a thick green line.

* **"but"**: The word "but" in the top list is strongly connected to the word "but" in the bottom list with a thick green line.

* **"its"**: The word "its" in the top list is strongly connected to the word "its" in the bottom list with a thick green line.

* **"application"**: The word "application" in the top list is strongly connected to the word "application" in the bottom list with a thick green line.

* **"should"**: The word "should" in the top list is strongly connected to the word "should" in the bottom list with a thick green line.

* **"be"**: The word "be" in the top list is strongly connected to the word "be" in the bottom list with a thick green line.

* **"just"**: The word "just" in the top list is strongly connected to the word "just" in the bottom list with a thick green line.

* **"-"**: The dash in the top list is strongly connected to the dash in the bottom list with a thick green line.

* **"this"**: The word "this" in the top list is strongly connected to the word "this" in the bottom list with a thick green line.

* **"is"**: The word "is" in the top list is strongly connected to the word "is" in the bottom list with a thick green line.

* **"what"**: The word "what" in the top list is strongly connected to the word "what" in the bottom list with a thick green line.

* **"we"**: The word "we" in the top list is strongly connected to the word "we" in the bottom list with a thick green line.

* **"are"**: The word "are" in the top list is strongly connected to the word "are" in the bottom list with a thick green line.

* **"missing"**: The word "missing" in the top list is strongly connected to the word "missing" in the bottom list with a thick green line.

* **"."**: The period in the top list is strongly connected to the period in the bottom list with a thick green line.

* **"in"**: The word "in" in the top list is strongly connected to the word "in" in the bottom list with a thick green line.

* **"my"**: The word "my" in the top list is strongly connected to the word "my" in the bottom list with a thick green line.

* **"opinion"**: The word "opinion" in the top list is strongly connected to the word "opinion" in the bottom list with a thick green line.

* **"<EOS>"**: The tag "<EOS>" in the top list is strongly connected to the tag "<EOS>" in the bottom list with a thick green line.

* **"<pad>"**: The tag "<pad>" in the top list is strongly connected to the tag "<pad>" in the bottom list with a thick green line.

### Key Observations

* The diagram shows a one-to-one alignment between the two sentences.

* The thickness of the lines indicates a strong alignment between corresponding words.

* The sentences are identical.

### Interpretation

The alignment diagram visually represents the relationship between two identical sentences. The strong, direct connections between corresponding words indicate a perfect alignment, suggesting that the sentences are semantically and structurally equivalent. This type of diagram is often used in machine translation or natural language processing to visualize how words or phrases in one sentence correspond to words or phrases in another. In this case, the diagram confirms that the two sentences are identical, which might be a baseline or a control case in a more complex analysis.

DECODING INTELLIGENCE...

EXPERT: gemini-2.5-flash-lite-free VERSION 1

RUNTIME: google-free/gemini-2.5-flash-lite

INTEL_VERIFIED

This image displays a visualization of word alignments, likely from a natural language processing model. It consists of two rows of text, representing two sequences of words, with lines connecting corresponding words. The intensity of the lines indicates the strength of the alignment between the words.

**Components:**

* **Top Row of Words:** This row contains the following words, from left to right:

* The

* Law

* will

* never

* be

* perfect

* .

* but

* its

* application

* should

* be

* just

* -

* this

* is

* what

* we

* are

* missing

* .

* in

* my

* opinion

* .

* <EOS>

* <pad>

* **Bottom Row of Words:** This row contains the same sequence of words as the top row, also from left to right:

* The

* Law

* will

* never

* be

* perfect

* .

* but

* its

* application

* should

* be

* just

* -

* this

* is

* what

* we

* are

* missing

* .

* in

* my

* opinion

* .

* <EOS>

* <pad>

* **Alignment Lines:** Numerous lines, varying in thickness and intensity (from light green to dark green), connect words in the top row to words in the bottom row. Thicker, darker lines represent stronger alignments.

**Analysis of Alignments (Key Trends and Data Points):**

The visualization shows a strong self-alignment, where each word in the top sequence is strongly aligned with its corresponding word in the bottom sequence. This is evident from the thick, dark green vertical lines that connect each word to itself.

Beyond the self-alignment, there are also cross-alignments, indicating relationships between words that are not in the same position in the sequences. These are represented by thinner, lighter green lines.

Let's examine some notable cross-alignments:

* **"The" (Top) to "The" (Bottom):** A very strong, thick, dark green line indicates a perfect self-alignment.

* **"Law" (Top) to "Law" (Bottom):** A very strong, thick, dark green line indicates a perfect self-alignment.

* **"will" (Top) to "will" (Bottom):** A very strong, thick, dark green line indicates a perfect self-alignment.

* **"never" (Top) to "never" (Bottom):** A very strong, thick, dark green line indicates a perfect self-alignment.

* **"be" (Top) to "be" (Bottom):** A very strong, thick, dark green line indicates a perfect self-alignment.

* **"perfect" (Top) to "perfect" (Bottom):** A very strong, thick, dark green line indicates a perfect self-alignment.

* **"application" (Top) to "application" (Bottom):** A very strong, thick, dark green line indicates a perfect self-alignment.

* **"should" (Top) to "should" (Bottom):** A very strong, thick, dark green line indicates a perfect self-alignment.

* **"just" (Top) to "just" (Bottom):** A very strong, thick, dark green line indicates a perfect self-alignment.

* **"this" (Top) to "this" (Bottom):** A very strong, thick, dark green line indicates a perfect self-alignment.

* **"is" (Top) to "is" (Bottom):** A very strong, thick, dark green line indicates a perfect self-alignment.

* **"what" (Top) to "what" (Bottom):** A very strong, thick, dark green line indicates a perfect self-alignment.

* **"we" (Top) to "we" (Bottom):** A very strong, thick, dark green line indicates a perfect self-alignment.

* **"are" (Top) to "are" (Bottom):** A very strong, thick, dark green line indicates a perfect self-alignment.

* **"missing" (Top) to "missing" (Bottom):** A very strong, thick, dark green line indicates a perfect self-alignment.

* **"in" (Top) to "in" (Bottom):** A very strong, thick, dark green line indicates a perfect self-alignment.

* **"my" (Top) to "my" (Bottom):** A very strong, thick, dark green line indicates a perfect self-alignment.

* **"opinion" (Top) to "opinion" (Bottom):** A very strong, thick, dark green line indicates a perfect self-alignment.

* **"<EOS>" (Top) to "<EOS>" (Bottom):** A very strong, thick, dark green line indicates a perfect self-alignment.

* **"<pad>" (Top) to "<pad>" (Bottom):** A very strong, thick, dark green line indicates a perfect self-alignment.

**Notable Cross-Alignments (Indicating potential dependencies or attention mechanisms):**

* **"The" (Top) to "Law" (Bottom):** A moderately thick, green line suggests some alignment.

* **"Law" (Top) to "The" (Bottom):** A moderately thick, green line suggests some alignment.

* **"will" (Top) to "be" (Bottom):** A moderately thick, green line suggests some alignment.

* **"be" (Top) to "will" (Bottom):** A moderately thick, green line suggests some alignment.

* **"perfect" (Top) to "be" (Bottom):** A moderately thick, green line suggests some alignment.

* **"application" (Top) to "its" (Bottom):** A moderately thick, green line suggests some alignment.

* **"should" (Top) to "application" (Bottom):** A moderately thick, green line suggests some alignment.

* **"be" (Top) to "should" (Bottom):** A moderately thick, green line suggests some alignment.

* **"just" (Top) to "be" (Bottom):** A moderately thick, green line suggests some alignment.

* **"this" (Top) to "is" (Bottom):** A moderately thick, green line suggests some alignment.

* **"is" (Top) to "this" (Bottom):** A moderately thick, green line suggests some alignment.

* **"what" (Top) to "is" (Bottom):** A moderately thick, green line suggests some alignment.

* **"we" (Top) to "what" (Bottom):** A moderately thick, green line suggests some alignment.

* **"are" (Top) to "we" (Bottom):** A moderately thick, green line suggests some alignment.

* **"missing" (Top) to "are" (Bottom):** A moderately thick, green line suggests some alignment.

* **"in" (Top) to "my" (Bottom):** A moderately thick, green line suggests some alignment.

* **"my" (Top) to "opinion" (Bottom):** A moderately thick, green line suggests some alignment.

The overall pattern suggests a strong focus on self-attention within the sequence, with some secondary attention paid to adjacent or semantically related words. The presence of "<EOS>" (End Of Sentence) and "<pad>" tokens indicates this is likely a representation of a sequence processed by a model, possibly for tasks like machine translation or text generation.

DECODING INTELLIGENCE...

EXPERT: gemini-3-pro VERSION 1

RUNTIME: nugit/gemini/gemini-3-pro-preview

INTEL_VERIFIED

## Diagram Type: Attention Mechanism Visualization (Bipartite Graph)

### Overview

The image displays a visualization of an attention mechanism, commonly used in Natural Language Processing (NLP) models like Transformers. It illustrates the relationship between two sequences of text tokens. The visualization uses a bipartite graph structure where tokens are listed horizontally across the top and bottom. Green lines of varying opacity connect the top tokens to the bottom tokens, representing the "attention weight" or strength of the relationship between them.

### Components/Axes

**1. Top Token Sequence (Source/Query):**

A sequence of words and punctuation marks is arranged horizontally at the top. The text reads from left to right.

* **Text Content:** "The", "Law", "will", "never", "be", "perfect", ",", "but", "its", "application", "should", "be", "just", ",", "this", "is", "what", "we", "are", "missing", ",", "in", "my", "opinion", ".", "<EOS>", "<pad>"

**2. Bottom Token Sequence (Target/Key):**

An identical sequence of words and punctuation marks is arranged horizontally at the bottom, aligned vertically with the top sequence.

* **Text Content:** "The", "Law", "will", "never", "be", "perfect", ",", "but", "its", "application", "should", "be", "just", ",", "this", "is", "what", "we", "are", "missing", ",", "in", "my", "opinion", ".", "<EOS>", "<pad>"

**3. Connection Lines (Attention Weights):**

* **Color:** Green.

* **Opacity/Thickness:** The opacity and thickness of the lines indicate the magnitude of the attention weight. Darker, thicker lines represent strong attention (high relevance). Faint, thin lines represent weak attention (low relevance).

* **Direction:** Lines connect a token from the top row to a token on the bottom row.

### Detailed Analysis & Content Details

**Visual Trends and Strong Connections:**

The visualization highlights specific patterns of attention. While there is a general "diagonal" trend (tokens attending to themselves or their immediate neighbors), there are distinct hubs of high attention.

* **Self-Attention/Diagonal:** There is a visible, though not exclusive, tendency for tokens to connect to their identical counterparts (e.g., Top "The" -> Bottom "The", Top "<pad>" -> Bottom "<pad>").

* **Major Attention Hubs (Bottom Row):**

Several specific tokens on the bottom row act as "sinks" or "hubs," receiving strong attention from multiple tokens in the top row.

1. **"Law" (Bottom):** Receives very strong connections from the beginning of the sentence (Top "The", "Law", "will", "never", "be", "perfect"). This suggests the model is focusing heavily on the subject "Law" while processing the initial clause.

2. **"application" (Bottom):** Receives strong connections from the middle section (Top "but", "its", "application", "should", "be", "just"). This indicates "application" is the key focus for the second clause.

3. **"missing" (Bottom):** Receives intense connections from the third clause (Top "this", "is", "what", "we", "are", "missing"). The lines converge heavily on this word.

4. **"<EOS>" (Bottom):** Receives connections from the final phrase (Top "in", "my", "opinion", ".").

* **Specific Strong Links (Top -> Bottom):**

* Top "The" -> Bottom "Law" (Strong)

* Top "Law" -> Bottom "Law" (Strong)

* Top "will", "never", "be", "perfect" -> Bottom "Law" (Moderate to Strong)

* Top "application" -> Bottom "application" (Strong)

* Top "should", "be", "just" -> Bottom "application" (Moderate)

* Top "what", "we", "are" -> Bottom "missing" (Strong)

* Top "<pad>" -> Bottom "<pad>" (Very Strong, isolated vertical line)

### Key Observations

1. **Syntactic Grouping:** The attention mechanism appears to be grouping words by their syntactic or semantic clauses.

* Clause 1: "The Law will never be perfect" -> Focuses on **"Law"**.

* Clause 2: "but its application should be just" -> Focuses on **"application"**.

* Clause 3: "this is what we are missing" -> Focuses on **"missing"**.

* Clause 4: "in my opinion" -> Focuses on **"<EOS>"** (End of Sentence).

2. **Look-Ahead/Look-Back:** The lines are not strictly vertical.

* **Look-Ahead:** Top tokens like "The" connect forward to "Law".

* **Look-Back:** Top tokens like "perfect" connect backward to "Law".

* This creates a "V" shape converging on the key nouns/concepts of each clause.

3. **Special Tokens:**

* **<EOS>**: Represents "End Of Sentence". It acts as a collection point for the final opinion clause.

* **<pad>**: Represents padding. It attends strictly to itself, showing no interaction with the meaningful text, which is expected behavior for padding tokens.

### Interpretation

**What the data suggests:**

This visualization demonstrates a "Self-Attention" mechanism, likely from a specific head in a Transformer layer (like BERT or GPT). The pattern shown is highly structured. It suggests this specific attention head is specialized in **identifying the head noun or core concept of a phrase**.

* Instead of attending to the previous word (local context), the model is learning to focus on the *subject* or *object* that governs the current phrase.

* For example, while processing the word "perfect," the model "looks back" at "Law" to understand *what* is not perfect.

* While processing "just," it looks at "application" to understand *what* should be just.

**Significance:**

This is a classic example of how deep learning models "understand" grammar and context without explicit rule-programming. The model has learned that to process the adjectives and verbs in a sentence effectively, it must maintain a strong connection to the relevant nouns ("Law", "application") regardless of the distance between words in the sequence. The distinct segmentation into three main "hubs" perfectly mirrors the three distinct clauses of the sentence structure.

DECODING INTELLIGENCE...

EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED

\n

## Diagram: Dependency Graph of Words

### Overview

The image depicts a dependency graph visualizing relationships between words in a sentence or sequence. The words are arranged horizontally, and lines connect them, indicating dependencies. The graph appears to represent a linguistic structure, potentially from a natural language processing task.

### Components/Axes

The diagram consists of the following components:

* **Words:** The words are listed along the horizontal axis, from left to right: "The", "Law", "will", "never", "be", "perfect", "but", "its", "application", "should", "just", "this", "is", "what", "we", "are", "missing", "in", "my", "opinion", "<EOS>", "pad".

* **Lines:** Green lines connect the words, representing dependencies. The lines originate from words on the bottom row and terminate on words in the top row.

* **Vertical Axis:** The vertical position of the words and lines indicates the dependency relationships.

* **Legend:** A vertical green bar on the far right labeled "<EOS>" and "pad".

### Detailed Analysis

The diagram shows a complex network of dependencies. Here's a breakdown of the connections:

* "The" connects to "Law".

* "Law" connects to "will".

* "will" connects to "never".

* "never" connects to "be".

* "be" connects to "perfect".

* "perfect" connects to "but".

* "but" connects to "its".

* "its" connects to "application".

* "application" connects to "should".

* "should" connects to "just".

* "just" connects to "this".

* "this" connects to "is".

* "is" connects to "what".

* "what" connects to "we".

* "we" connects to "are".

* "are" connects to "missing".

* "missing" connects to "in".

* "in" connects to "my".

* "my" connects to "opinion".

* "opinion" connects to "<EOS>".

* "<EOS>" connects to "pad".

The lines are not strictly one-to-one; some words have multiple incoming connections. For example, "be" receives a connection from "never" and "perfect".

### Key Observations

The diagram illustrates a sequential dependency structure. The connections suggest a grammatical or semantic relationship between the words. The presence of "<EOS>" (End of Sentence) and "pad" suggests this is a representation of a sentence or a sequence of tokens used in a language model. The lines are relatively dense, indicating a high degree of interconnectedness between the words.

### Interpretation

This diagram likely represents the output of a dependency parser, a component of many natural language processing systems. Dependency parsing aims to identify the grammatical relationships between words in a sentence. The lines in the diagram represent these relationships, showing which words modify or depend on others.

The sequence of words suggests a statement about the law: "The Law will never be perfect, but its application should just this is what we are missing in my opinion." The diagram visually represents how the parser has broken down this sentence into its constituent parts and identified the relationships between them.

The "<EOS>" and "pad" tokens are common in NLP tasks, where "<EOS>" marks the end of a sentence and "pad" is used to fill shorter sequences to a uniform length for batch processing. The diagram's structure suggests it could be part of a larger system for analyzing or generating text. The density of connections indicates a complex sentence structure. The diagram is a visual representation of the syntactic structure of the sentence.

DECODING INTELLIGENCE...

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Diagram: Attention/Dependency Graph for a Sentence Pair

### Overview

The image displays a visualization of relationships (likely attention weights or syntactic dependencies) between two nearly identical sequences of text. The diagram consists of two horizontal rows of words, with green lines of varying thickness and opacity connecting words from the top row to words in the bottom row. The background is a light gray.

### Components/Axes

* **Top Row (Source Sequence):** A complete sentence with special tokens.

* Text: `The Law will never be perfect , but its application should be just . this is what we are missing , in my opinion <EOS> <pad>`

* **Bottom Row (Target/Output Sequence):** A nearly identical sentence.

* Text: `The Law will never be perfect , but its application should be just . this is what we are missing , in my opinion <EOS> <pad>`

* **Connection Lines:** Green lines connect tokens from the top row to tokens in the bottom row. The visual properties of these lines encode information:

* **Thickness:** Varies significantly. Thicker lines suggest a stronger connection, higher attention weight, or more direct dependency.

* **Opacity:** Varies. More opaque lines are more prominent.

* **Special Tokens:**

* `<EOS>`: End-of-sequence token.

* `<pad>`: Padding token, used to fill sequences to a standard length.

### Detailed Analysis

**Spatial Layout & Connections:**

The diagram is structured with the source sequence on top and the target sequence on the bottom. Connections flow downward.

**Key Connection Patterns (by visual weight):**

1. **Strongest Self-Connections (Thickest Lines):**

* `missing` (top) → `missing` (bottom): This is the most prominent line in the diagram.

* `application` (top) → `application` (bottom): Also a very thick, direct line.

* `Law` (top) → `Law` (bottom): A strong direct connection.

* `just` (top) → `just` (bottom): A strong direct connection.

2. **Notable Cross-Connections:**

* `perfect` (top) connects strongly to `perfect` (bottom) but also has a visible connection to `just` (bottom).

* `should` (top) connects to `should` (bottom) and also has a connection to `just` (bottom).

* `what` (top) connects to `what` (bottom) and also has a connection to `missing` (bottom).

* `we` (top) connects to `we` (bottom) and also has a connection to `missing` (bottom).

* `are` (top) connects to `are` (bottom) and also has a connection to `missing` (bottom).

3. **Pattern for Function Words & Punctuation:**

Words like `The`, `will`, `never`, `be`, `but`, `its`, `should`, `this`, `is`, `in`, `my`, `opinion` primarily show direct, vertical connections to their counterparts in the bottom row. Their connecting lines are generally thinner than those for key content words.

4. **Special Token Connections:**

* `<EOS>` (top) connects directly to `<EOS>` (bottom).

* `<pad>` (top) connects directly to `<pad>` (bottom).

### Key Observations

* **High Fidelity Reproduction:** The target sequence is an exact copy of the source sequence. The diagram visualizes how a model (likely a neural network like a Transformer) attends to or reconstructs each token.

* **Content Word Emphasis:** The thickest lines are on nouns and key adjectives (`Law`, `perfect`, `application`, `just`, `missing`), suggesting the model places the highest "attention" or importance on preserving these content-bearing words.

* **Semantic Relationship Highlighting:** The cross-connections from `perfect` and `should` to `just` may indicate the model recognizes a semantic relationship between the concepts of perfection, obligation, and justice within the sentence.

* **Contextual Binding for "missing":** The word `missing` has the strongest self-connection *and* receives multiple connections from the preceding phrase `what we are`. This suggests the model strongly binds the subject (`we`) and the interrogative (`what`) to the state of being (`missing`).

### Interpretation

This diagram is a classic visualization of **self-attention** or **dependency parsing** within a Natural Language Processing (NLP) model. It reveals the model's internal "focus" when processing or generating the sentence.

* **What it demonstrates:** The model successfully learns to align identical words between input and output (the strong vertical lines). More importantly, it captures **syntactic and semantic relationships** beyond simple word matching. The connections to `just` from `perfect` and `should` show the model understands that the sentence's core argument links the imperfection of law, the ideal of just application, and what is lacking.

* **Why it matters:** This provides interpretability into a "black box" model. We can see it doesn't just process words in isolation but builds a web of relationships. The emphasis on `missing` as a central node, connected to the subject and the object of the clause, aligns with the sentence's meaning: the central point of the opinion is the *absence* (`missing`) of something.

* **Underlying Structure:** The pattern suggests a model architecture that uses attention mechanisms to weigh the importance of all words in a sequence when representing or generating any single word. The `<EOS>` and `<pad>` tokens confirm this is from a sequence-processing model, common in machine translation, text generation, or sentence embedding tasks. The visualization helps verify that the model's learned behavior aligns with human linguistic intuition.

DECODING INTELLIGENCE...

EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

# Technical Document Extraction: Network Diagram Analysis

## 1. **Labels, Axis Titles, Legends, and Axis Markers**

- **Nodes (Left Column):**

- "The", "Law", "will", "never", "be", "perfect", "but", "its", "application", "should", "be", "just"

- **Nodes (Right Column):**

- "this", "is", "what", "we", "are", "missing", "in", "my", "opinion", "<EOS>", "<pad>"

- **Legend:**

- **Color:** Green (indicates "connections" between nodes)

- **Position:** Right side of the diagram

## 2. **Chart/Network Structure**

- **Type:** Directed network diagram (edges represent relationships between nodes).

- **Key Components:**

- **Nodes:** Textual labels representing concepts or terms.

- **Edges:** Green lines connecting nodes, indicating relationships or dependencies.

## 3. **Textual Data Extraction**

### Left Column Nodes (Input/Source Terms):

1. "The"

2. "Law"

3. "will"

4. "never"

5. "be"

6. "perfect"

7. "but"

8. "its"

9. "application"

10. "should"

11. "be"

12. "just"

### Right Column Nodes (Output/Target Terms):

1. "this"

2. "is"

3. "what"

4. "we"

5. "are"

6. "missing"

7. "in"

8. "my"

9. "opinion"

10. "<EOS>" (End of Sentence marker)

11. "<pad>" (Padding token)

### Edge Connections:

- Green lines connect nodes from the left column to the right column.

- Example connections:

- "The" → "this"

- "Law" → "is"

- "will" → "what"

- "never" → "are"

- "be" → "missing"

- "perfect" → "in"

- "but" → "my"

- "its" → "opinion"

- "application" → "<EOS>"

- "should" → "<pad>"

- "be" → "<EOS>"

- "just" → "<pad>"

## 4. **Legend and Color Verification**

- **Legend Color:** Green (matches all edges in the diagram).

- **Spatial Grounding:** Legend is positioned on the **right side** of the diagram.

## 5. **Trend Verification**

- **Visual Flow:**

- Edges originate from the left column (input terms) and terminate on the right column (output terms).

- No upward/downward slope (network diagram, not a line chart).

- **Key Observations:**

- Terms like "application" and "should" connect to structural markers ("<EOS>", "<pad>"), suggesting syntactic or semantic termination.

- Terms like "missing" and "opinion" indicate potential gaps or subjective elements in the network.

## 6. **Component Isolation**

### Header:

- No explicit header text.

### Main Chart:

- Nodes and edges dominate the diagram.

- Left and right columns are spatially separated, with edges bridging them.

### Footer:

- No explicit footer text.

## 7. **Data Table Reconstruction**

- **Structure:**

- **Left Column (Source Terms):** 12 nodes.

- **Right Column (Target Terms):** 11 nodes.

- **Edges:** 12 connections (one per left node).

## 8. **Additional Notes**

- **Language:** All text is in English.

- **Missing Data:** No numerical values or explicit data points (network diagram, not a heatmap or scatter plot).

- **Ambiguities:**

- The exact nature of "connections" (e.g., syntactic, semantic, or contextual relationships) is not explicitly defined in the image.

- The purpose of "<EOS>" and "<pad>" is inferred as technical markers (common in NLP tasks).

## 9. **Conclusion**

This diagram represents a **directed network** mapping input terms (left column) to output terms (right column) via green edges. It likely models relationships in a natural language processing (NLP) context, with "<EOS>" and "<pad>" serving as technical markers for sentence termination and padding. No numerical data or trends are present.

DECODING INTELLIGENCE...