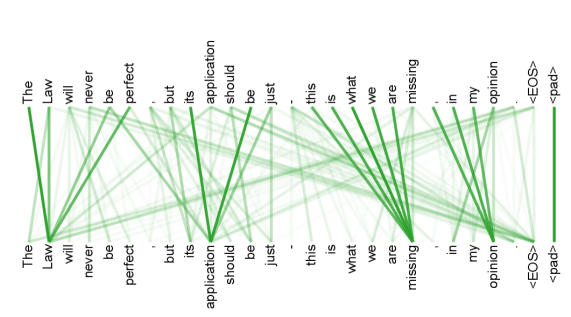

## Diagram: Attention/Dependency Graph for a Sentence Pair

### Overview

The image displays a visualization of relationships (likely attention weights or syntactic dependencies) between two nearly identical sequences of text. The diagram consists of two horizontal rows of words, with green lines of varying thickness and opacity connecting words from the top row to words in the bottom row. The background is a light gray.

### Components/Axes

* **Top Row (Source Sequence):** A complete sentence with special tokens.

* Text: `The Law will never be perfect , but its application should be just . this is what we are missing , in my opinion <EOS> <pad>`

* **Bottom Row (Target/Output Sequence):** A nearly identical sentence.

* Text: `The Law will never be perfect , but its application should be just . this is what we are missing , in my opinion <EOS> <pad>`

* **Connection Lines:** Green lines connect tokens from the top row to tokens in the bottom row. The visual properties of these lines encode information:

* **Thickness:** Varies significantly. Thicker lines suggest a stronger connection, higher attention weight, or more direct dependency.

* **Opacity:** Varies. More opaque lines are more prominent.

* **Special Tokens:**

* `<EOS>`: End-of-sequence token.

* `<pad>`: Padding token, used to fill sequences to a standard length.

### Detailed Analysis

**Spatial Layout & Connections:**

The diagram is structured with the source sequence on top and the target sequence on the bottom. Connections flow downward.

**Key Connection Patterns (by visual weight):**

1. **Strongest Self-Connections (Thickest Lines):**

* `missing` (top) → `missing` (bottom): This is the most prominent line in the diagram.

* `application` (top) → `application` (bottom): Also a very thick, direct line.

* `Law` (top) → `Law` (bottom): A strong direct connection.

* `just` (top) → `just` (bottom): A strong direct connection.

2. **Notable Cross-Connections:**

* `perfect` (top) connects strongly to `perfect` (bottom) but also has a visible connection to `just` (bottom).

* `should` (top) connects to `should` (bottom) and also has a connection to `just` (bottom).

* `what` (top) connects to `what` (bottom) and also has a connection to `missing` (bottom).

* `we` (top) connects to `we` (bottom) and also has a connection to `missing` (bottom).

* `are` (top) connects to `are` (bottom) and also has a connection to `missing` (bottom).

3. **Pattern for Function Words & Punctuation:**

Words like `The`, `will`, `never`, `be`, `but`, `its`, `should`, `this`, `is`, `in`, `my`, `opinion` primarily show direct, vertical connections to their counterparts in the bottom row. Their connecting lines are generally thinner than those for key content words.

4. **Special Token Connections:**

* `<EOS>` (top) connects directly to `<EOS>` (bottom).

* `<pad>` (top) connects directly to `<pad>` (bottom).

### Key Observations

* **High Fidelity Reproduction:** The target sequence is an exact copy of the source sequence. The diagram visualizes how a model (likely a neural network like a Transformer) attends to or reconstructs each token.

* **Content Word Emphasis:** The thickest lines are on nouns and key adjectives (`Law`, `perfect`, `application`, `just`, `missing`), suggesting the model places the highest "attention" or importance on preserving these content-bearing words.

* **Semantic Relationship Highlighting:** The cross-connections from `perfect` and `should` to `just` may indicate the model recognizes a semantic relationship between the concepts of perfection, obligation, and justice within the sentence.

* **Contextual Binding for "missing":** The word `missing` has the strongest self-connection *and* receives multiple connections from the preceding phrase `what we are`. This suggests the model strongly binds the subject (`we`) and the interrogative (`what`) to the state of being (`missing`).

### Interpretation

This diagram is a classic visualization of **self-attention** or **dependency parsing** within a Natural Language Processing (NLP) model. It reveals the model's internal "focus" when processing or generating the sentence.

* **What it demonstrates:** The model successfully learns to align identical words between input and output (the strong vertical lines). More importantly, it captures **syntactic and semantic relationships** beyond simple word matching. The connections to `just` from `perfect` and `should` show the model understands that the sentence's core argument links the imperfection of law, the ideal of just application, and what is lacking.

* **Why it matters:** This provides interpretability into a "black box" model. We can see it doesn't just process words in isolation but builds a web of relationships. The emphasis on `missing` as a central node, connected to the subject and the object of the clause, aligns with the sentence's meaning: the central point of the opinion is the *absence* (`missing`) of something.

* **Underlying Structure:** The pattern suggests a model architecture that uses attention mechanisms to weigh the importance of all words in a sequence when representing or generating any single word. The `<EOS>` and `<pad>` tokens confirm this is from a sequence-processing model, common in machine translation, text generation, or sentence embedding tasks. The visualization helps verify that the model's learned behavior aligns with human linguistic intuition.