## Flowchart: Interdisciplinary Application Evaluation Framework

### Overview

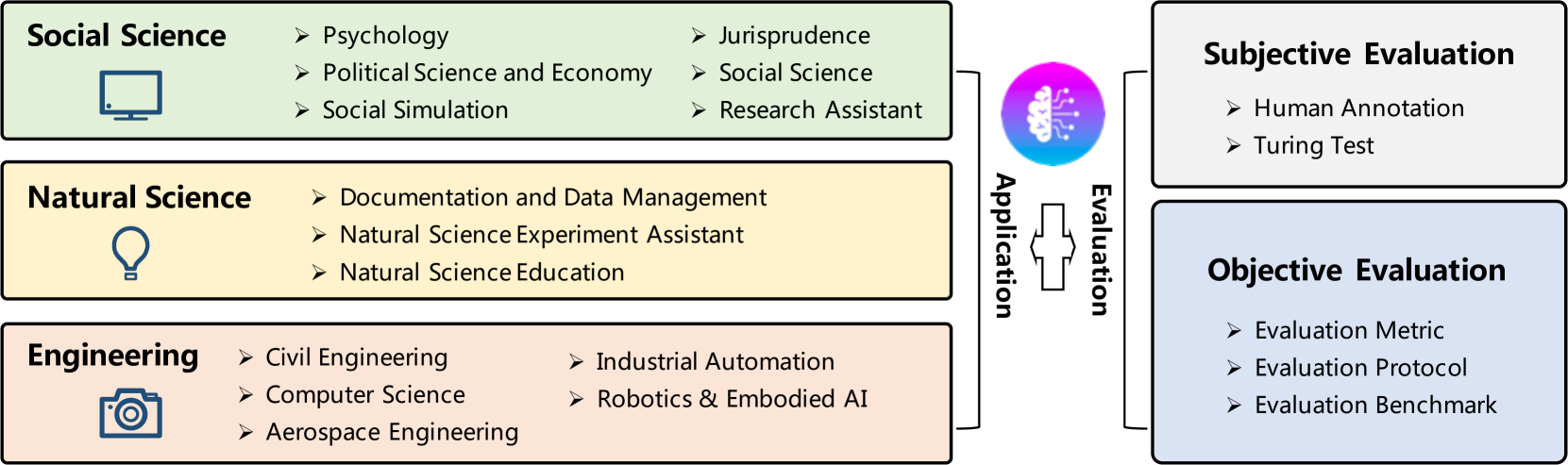

The diagram illustrates a hierarchical framework in which three primary domains (Social Science, Natural Science, Engineering) feed into an "Application" node. That node connects through an "Evaluation" node to two evaluation types: Subjective Evaluation and Objective Evaluation. Arrows indicate the direction of each relationship.

### Components

1. **Main Categories** (Left Column):
   - **Social Science** (Green box with computer monitor icon)
     - Psychology
     - Political Science and Economy
     - Social Simulation
     - Jurisprudence
     - Social Science (repeated)
     - Research Assistant
   - **Natural Science** (Yellow box with light bulb icon)
     - Documentation and Data Management
     - Natural Science Experiment Assistant
     - Natural Science Education
   - **Engineering** (Peach box with camera icon)
     - Civil Engineering
     - Computer Science
     - Aerospace Engineering
     - Industrial Automation
     - Robotics & Embodied AI
2. **Central Nodes**:
   - **Application** (Purple gradient brain icon)
   - **Evaluation** (Gray box connecting Application to Evaluation types)
3. **Evaluation Subcategories** (Right Column):
   - **Subjective Evaluation** (Light gray box)
     - Human Annotation
     - Turing Test
   - **Objective Evaluation** (Blue box)
     - Evaluation Metric
     - Evaluation Protocol
     - Evaluation Benchmark

### Detailed Analysis

- **Color Coding**:
  - Social Science: Green (#98df8a)
  - Natural Science: Yellow (#fdebd0)
  - Engineering: Peach (#f4a582)
  - Evaluation: Gray for the central Evaluation box, light gray for Subjective Evaluation, and blue for Objective Evaluation
- **Flow Direction**:
  - Top-to-bottom hierarchy for main categories
  - Left-to-right flow from Application to Evaluation
  - Subjective Evaluation (top-right) vs. Objective Evaluation (bottom-right)
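
The flow described here can be captured as a small directed graph. The following is a minimal sketch and not part of the original figure: the names `EDGES` and `reachable` are hypothetical, only the node labels come from the diagram, and the subcategories of the three domains are omitted for brevity.

```python
from collections import deque

# Directed edges as described for the diagram: each domain feeds Application,
# Application flows to Evaluation, which splits into two evaluation types.
# EDGES and reachable are illustrative names, not taken from the figure.
EDGES = {
    "Social Science": ["Application"],
    "Natural Science": ["Application"],
    "Engineering": ["Application"],
    "Application": ["Evaluation"],
    "Evaluation": ["Subjective Evaluation", "Objective Evaluation"],
    "Subjective Evaluation": ["Human Annotation", "Turing Test"],
    "Objective Evaluation": [
        "Evaluation Metric",
        "Evaluation Protocol",
        "Evaluation Benchmark",
    ],
}


def reachable(start):
    """Return every node reachable from start via breadth-first search."""
    seen = set()
    queue = deque([start])
    while queue:
        node = queue.popleft()
        for nxt in EDGES.get(node, []):
            if nxt not in seen:
                seen.add(nxt)
                queue.append(nxt)
    return seen
```

Under this encoding, `reachable("Engineering")` contains both "Subjective Evaluation" and "Objective Evaluation", which mirrors the left-to-right flow from each domain through Application to both evaluation types.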

### Key Observations

1. **Interdisciplinary Connections**:
   - All three main categories feed into the central "Application" node, emphasizing cross-disciplinary integration.
   - Engineering subcategories (e.g., Robotics) directly connect to both evaluation types, suggesting technical applications require dual assessment.
2. **Evaluation Balance**:
   - Subjective Evaluation focuses on human-centric methods (Human Annotation, Turing Test).
   - Objective Evaluation emphasizes quantifiable measures (Evaluation Metric, Evaluation Protocol, Evaluation Benchmark).
3. **Repetition in Social Science**:
   - "Social Science" appears twice: once as the category title and again as an item in its own list. This may be a redundancy in the diagram or a deliberate emphasis.

### Interpretation

This framework demonstrates how diverse research domains contribute to practical applications, which are then rigorously evaluated through complementary lenses:

- **Subjective Evaluation** captures qualitative, human-judgment aspects (e.g., Human Annotation, Turing Test).

- **Objective Evaluation** ensures technical validity through measurable standards (e.g., Evaluation Metric, Evaluation Benchmark).
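
To make the contrast between the two lenses concrete, here is a minimal sketch of how each might be computed for a single application. Everything in it is an assumption for illustration: the function names, the 1-5 rating scale, the choice of accuracy as the metric, and the sample data are hypothetical and do not come from the diagram.

```python
from statistics import mean


def subjective_score(ratings):
    """Average human annotator ratings (assumed 1-5 scale)."""
    return mean(ratings)


def objective_score(predictions, gold):
    """Accuracy: fraction of predictions that match benchmark labels."""
    if len(predictions) != len(gold):
        raise ValueError("predictions and gold must have equal length")
    correct = sum(p == g for p, g in zip(predictions, gold))
    return correct / len(gold)


# Hypothetical data: three annotators, four benchmark items.
human = subjective_score([4, 5, 3])
auto = objective_score(["a", "b", "c", "d"], ["a", "b", "x", "d"])
```

With this sample data, `human` is 4 and `auto` is 0.75. The two numbers measure different things and are reported side by side rather than merged, which is the point of the dual structure.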

The central "Application" node acts as a convergence point, highlighting that effective solutions require synthesis of social, natural, and engineering principles. The dual evaluation structure underscores the necessity of balancing human insight with empirical validation in interdisciplinary research.