## Line Graphs: Model Size vs. Accuracy on 2x2Grid and 3x3Grid

### Overview

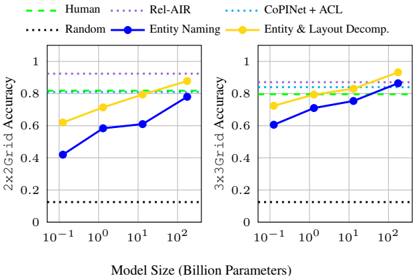

The image contains two line graphs that plot accuracy against model size (in billions of parameters), one for the 2x2Grid setting and one for the 3x3Grid setting. Each graph shows two method series (Entity Naming and Entity & Layout Decomp.) as solid colored lines, alongside flat reference lines for Human, Rel-AIR, and Random.

### Components/Axes

- **X-axis**: "Model Size (Billion Parameters)" with logarithmic scale markers at 10⁻¹, 10⁰, 10¹, and 10².

- **Y-axis**: "2x2Grid Accuracy" (0–1) on the left graph; "3x3Grid Accuracy" (0–1) on the right graph.

- **Legend**:

  - **Human**: Dashed green line (~0.8 accuracy).

  - **Rel-AIR**: Dotted purple line (~0.9 accuracy).

  - **Random**: Dotted black line (~0.2 accuracy).

  - **Entity Naming**: Solid blue line (2x2Grid: 0.4→0.8; 3x3Grid: 0.6→0.9).

  - **Entity & Layout Decomp.**: Solid orange line (2x2Grid: 0.6→0.9; 3x3Grid: 0.7→0.95).

### Detailed Analysis

#### 2x2Grid Accuracy vs. Model Size

- **Human**: Flat dashed green line at ~0.8.

- **Rel-AIR**: Flat dotted purple line at ~0.9.

- **Random**: Flat dotted black line at ~0.2.

- **Entity Naming**: Solid blue line starts at ~0.4 (10⁻¹) and rises to ~0.8 (10²).

- **Entity & Layout Decomp.**: Solid orange line starts at ~0.6 (10⁻¹) and rises to ~0.9 (10²).

#### 3x3Grid Accuracy vs. Model Size

- **Human**: Flat dashed green line at ~0.8.

- **Rel-AIR**: Flat dotted purple line at ~0.9.

- **Random**: Flat dotted black line at ~0.2.

- **Entity Naming**: Solid blue line starts at ~0.6 (10⁻¹) and rises to ~0.9 (10²).

- **Entity & Layout Decomp.**: Solid orange line starts at ~0.7 (10⁻¹) and rises to ~0.95 (10²).

### Key Observations

1. **Model Size Impact**: Both Entity Naming and Entity & Layout Decomp. show significant accuracy improvements as model size increases (e.g., Entity & Layout Decomp. jumps from ~0.6 to ~0.9 in 2x2Grid).

2. **Benchmark Comparison**:

   - Rel-AIR consistently outperforms Human (0.9 vs. 0.8).

   - Random guessing remains far below all other methods (~0.2).

3. **Grid and Method Differences**:

   - Both methods score higher on 3x3Grid than on 2x2Grid (e.g., Entity & Layout Decomp. reaches ~0.95 on 3x3Grid vs. ~0.9 on 2x2Grid at 10²).

   - Entity & Layout Decomp. outperforms Entity Naming in both grid sizes.
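The comparisons above reduce to differences between the approximate endpoint values read off the graphs. A minimal sketch of that arithmetic, assuming those read-off values; the `ENDPOINTS` table and the helper names are illustrative and do not come from the figure:

```python
# Approximate (start, end) accuracies at 10^-1 and 10^2 billion parameters,
# as read off the graphs. These are estimates, not exact data.
ENDPOINTS = {
    ("2x2Grid", "Entity Naming"): (0.4, 0.8),
    ("2x2Grid", "Entity & Layout Decomp."): (0.6, 0.9),
    ("3x3Grid", "Entity Naming"): (0.6, 0.9),
    ("3x3Grid", "Entity & Layout Decomp."): (0.7, 0.95),
}


def method_gap(grid):
    """Entity & Layout Decomp. minus Entity Naming at the largest model size."""
    decomp_end = ENDPOINTS[(grid, "Entity & Layout Decomp.")][1]
    naming_end = ENDPOINTS[(grid, "Entity Naming")][1]
    return round(decomp_end - naming_end, 2)


def grid_gap(method):
    """3x3Grid minus 2x2Grid accuracy at the largest model size."""
    end_3x3 = ENDPOINTS[("3x3Grid", method)][1]
    end_2x2 = ENDPOINTS[("2x2Grid", method)][1]
    return round(end_3x3 - end_2x2, 2)


for grid in ("2x2Grid", "3x3Grid"):
    print(grid, "method gap at 10^2:", method_gap(grid))
for method in ("Entity Naming", "Entity & Layout Decomp."):
    print(method, "grid gap at 10^2:", grid_gap(method))
```

Because the inputs are visual estimates, the resulting gaps are only as reliable as the read-off values.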

### Interpretation

The data suggest that:

- **Larger models** (higher parameter counts) score higher with both methods, which suggests that these approaches benefit from scale.

- **Decomposition** (Entity & Layout Decomp.) outperforms Entity Naming alone in both graphs, suggesting that the added layout decomposition contributes to accuracy.

- **Human and Rel-AIR benchmarks** sit at ~0.8 and ~0.9. At 10², Entity Naming reaches the Human line on 2x2Grid (~0.8), and Entity & Layout Decomp. reaches ~0.9 on 2x2Grid and ~0.95 on 3x3Grid, which is at or above both reference lines.

- The **logarithmic x-axis** means each major tick marks a tenfold increase in model size, so the gains shown are spread over three orders of magnitude (10⁻¹ to 10²) and do not come from small size increases.
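Because the x-axis is logarithmic, one way to quantify the scaling trend is the average accuracy gain per tenfold increase in model size. The sketch below uses the approximate endpoint values from the graphs; `gain_per_decade` is a hypothetical helper name, and averaging over the whole range is a simplification that ignores any curvature in the lines:

```python
import math


def gain_per_decade(size_start, acc_start, size_end, acc_end):
    """Average accuracy gain per tenfold increase in model size.

    Sizes are in billions of parameters; accuracies are fractions in [0, 1].
    """
    decades = math.log10(size_end) - math.log10(size_start)
    return (acc_end - acc_start) / decades


# Approximate endpoints read off the 2x2Grid graph (estimates, not exact data).
naming = gain_per_decade(0.1, 0.4, 100, 0.8)   # Entity Naming
decomp = gain_per_decade(0.1, 0.6, 100, 0.9)   # Entity & Layout Decomp.

print(f"Entity Naming: {naming:.3f} accuracy per decade")
print(f"Entity & Layout Decomp.: {decomp:.3f} accuracy per decade")
```

With these estimates, the range from 10⁻¹ to 10² spans three decades, so a rise of about 0.4 in accuracy works out to roughly 0.13 per tenfold increase in parameters.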

### Spatial Grounding & Trend Verification

- **Legend Position**: Top-left corner, aligned with graph titles.

- **Line Colors**: Confirmed matches (e.g., Entity & Layout Decomp. is orange, Entity Naming is blue).

- **Trend Logic**: Solid lines (Entity methods) show upward slopes, while dashed/dotted lines (benchmarks) remain flat, validating the visual interpretation.
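The trend check described above can be expressed as two small predicates: one for rising series and one for flat reference lines. This is a sketch over the approximate 2x2Grid values; the function names, the tolerance, and the assumption that each flat line has the same value at every tick are illustrative:

```python
def is_rising(values):
    """True if the series never decreases and ends higher than it starts."""
    never_drops = all(b >= a for a, b in zip(values, values[1:]))
    return never_drops and values[-1] > values[0]


def is_flat(values, tol=0.01):
    """True if the series stays within `tol` of a constant value."""
    return max(values) - min(values) <= tol


# Approximate 2x2Grid values, ordered by increasing model size (estimates).
methods = {
    "Entity Naming": [0.4, 0.6, 0.8],
    "Entity & Layout Decomp.": [0.6, 0.8, 0.9],
}
benchmarks = {
    "Human": [0.8, 0.8, 0.8],
    "Rel-AIR": [0.9, 0.9, 0.9],
    "Random": [0.2, 0.2, 0.2],
}

for name, values in methods.items():
    print(name, "rising:", is_rising(values))
for name, values in benchmarks.items():
    print(name, "flat:", is_flat(values))
```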

### Content Details

- **2x2Grid Data Points**:

  - Entity Naming: 0.4 (10⁻¹), 0.6 (10⁰), 0.8 (10²).

  - Entity & Layout Decomp.: 0.6 (10⁻¹), 0.8 (10¹), 0.9 (10²).

- **3x3Grid Data Points**:

  - Entity Naming: 0.6 (10⁻¹), 0.8 (10¹), 0.9 (10²).

  - Entity & Layout Decomp.: 0.7 (10⁻¹), 0.85 (10¹), 0.95 (10²).
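These data points can be used to estimate the model size at which a method first reaches a reference line such as Human (~0.8). The sketch below interpolates linearly in log₁₀(model size) between the listed points. That interpolation is an assumption, since the true curves between the points are not recoverable from this description, and `crossing_size` is a hypothetical helper:

```python
import math


def crossing_size(points, threshold):
    """Estimate the model size (billions of parameters) at which accuracy
    first reaches `threshold`, interpolating linearly in log10(size).

    `points` is a list of (size, accuracy) pairs. Returns None if the
    threshold is never reached within the listed points.
    """
    pts = sorted(points)
    if pts[0][1] >= threshold:
        return pts[0][0]
    for (x0, y0), (x1, y1) in zip(pts, pts[1:]):
        if y0 < threshold <= y1:
            frac = (threshold - y0) / (y1 - y0)
            log_x = math.log10(x0) + frac * (math.log10(x1) - math.log10(x0))
            return 10 ** log_x
    return None


# Approximate values read off the graphs (estimates, not exact data).
decomp_2x2 = [(0.1, 0.6), (10, 0.8), (100, 0.9)]
decomp_3x3 = [(0.1, 0.7), (10, 0.85), (100, 0.95)]
HUMAN = 0.8  # approximate height of the Human reference line

print("2x2Grid crossing:", crossing_size(decomp_2x2, HUMAN))
print("3x3Grid crossing:", crossing_size(decomp_3x3, HUMAN))
```

The results are rough estimates only: they inherit the uncertainty of the read-off values and of the straight-line assumption between points.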

### Notable Anomalies

- **Random Guessing**: Consistently flat at ~0.2, serving as a baseline for comparison.

- **Human Performance**: Slightly lower than Rel-AIR (~0.8 vs. ~0.9) in both graphs; the figure itself does not indicate why.

### Final Notes

The graphs indicate that both the method and the model size affect accuracy. Entity & Layout Decomp. scores highest in both graphs, and its largest-model results (~0.9 on 2x2Grid, ~0.95 on 3x3Grid) match or exceed the Rel-AIR line, while both methods stay well above the Random baseline (~0.2) at every model size.