
## Diagram: LLM-Based Complex Question Answering Process

### Overview

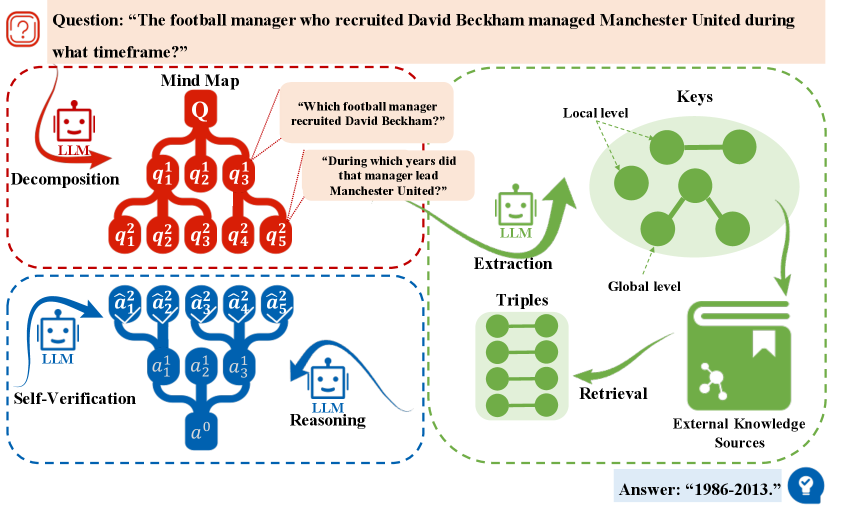

The image is a technical diagram illustrating a multi-step process for answering a complex, multi-hop question using a Large Language Model (LLM). The process involves decomposing the question, extracting relevant information from external knowledge sources, and performing self-verification of the answer. The example question used is: "The football manager who recruited David Beckham managed Manchester United during what timeframe?"

### Components

The diagram is organized into three main color-coded sections connected by flow arrows, plus a box holding the final output:

1. **Decomposition (Red Dashed Box, Left Side):**
   * **Input:** A complex question labeled "Q" at the top of a mind map.
   * **Process:** An LLM (represented by a robot icon) decomposes the main question into a hierarchy of sub-questions.
   * **Output:** A tree of sub-questions labeled `q¹₁`, `q¹₂`, `q¹₃` (first level) and `q²₁`, `q²₂`, `q²₃`, `q²₄`, `q²₅` (second level).
   * **Example Sub-questions (in speech bubbles):**
     * "Which football manager recruited David Beckham?"
     * "During which years did that manager lead Manchester United?"

2. **Extraction & Retrieval (Green Dashed Box, Right Side):**
   * **Process:** An LLM performs "Extraction" on the sub-questions.
   * **Components:**
     * **Keys:** A cluster of green nodes labeled "Local level" and "Global level," representing extracted key concepts or entities.
     * **Triples:** A vertical stack of green nodes representing structured knowledge triples (subject-predicate-object).
     * **External Knowledge Sources:** Represented by a green book icon with a molecular symbol.
   * **Flow:** Arrows show information flowing from the LLM to the Keys, then to the Triples, and finally interacting with the External Knowledge Sources via a "Retrieval" process.

3. **Self-Verification (Blue Dashed Box, Bottom Left):**
   * **Process:** An LLM performs "Reasoning" and "Self-Verification."
   * **Structure:** A tree of answers:
     * Root node: `a⁰` (final answer).
     * Intermediate nodes: `a¹₁`, `a¹₂`, `a¹₃`.
     * Leaf nodes: `ã²₁`, `ã²₂`, `ã²₃`, `ã²₄`, `ã²₅` (verified sub-answers).
   * **Flow:** Arrows indicate the LLM reasoning upward from the verified sub-answers to the final answer, together with a verification loop.

4. **Final Output (Bottom Right Corner):**
   * A blue box contains the final answer to the example question: **"Answer: '1986-2013.'"**
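The "Triples" and "Retrieval" components can be illustrated with a toy triple store. This is a minimal sketch, not the pipeline's actual retriever: `Triple`, `TripleStore`, and `retrieve` are hypothetical names, the facts are illustrative stand-ins for the external knowledge source, and keys are matched by exact, case-insensitive entity name. The diagram's distinction between "Local level" and "Global level" keys is not modeled here.

```python
from collections import defaultdict
from typing import Dict, Iterable, List, NamedTuple


class Triple(NamedTuple):
    """One subject-predicate-object fact (the diagram's "Triples" nodes)."""
    subject: str
    predicate: str
    obj: str


class TripleStore:
    """Toy, hypothetical stand-in for the "External Knowledge Sources"."""

    def __init__(self, triples: Iterable[Triple]) -> None:
        self._by_entity: Dict[str, List[Triple]] = defaultdict(list)
        for t in triples:
            # Index each fact under both entities so a key can match either end.
            self._by_entity[t.subject.lower()].append(t)
            self._by_entity[t.obj.lower()].append(t)

    def retrieve(self, keys: Iterable[str]) -> List[Triple]:
        """Return facts mentioning any extracted key, in first-seen order, without duplicates."""
        seen, hits = set(), []
        for key in keys:
            # .get() avoids inserting empty entries into the defaultdict.
            for t in self._by_entity.get(key.lower(), []):
                if t not in seen:
                    seen.add(t)
                    hits.append(t)
        return hits


# Illustrative facts only; a real knowledge source would be far larger.
store = TripleStore([
    Triple("Alex Ferguson", "recruited", "David Beckham"),
    Triple("Alex Ferguson", "managed Manchester United during", "1986-2013"),
    Triple("David Beckham", "played for", "Manchester United"),
])
for fact in store.retrieve(["David Beckham"]):
    print(fact)
```

Indexing each triple under both its subject and its object lets one extracted entity pull in facts where it appears in either position. A production system would more likely rely on entity linking or embedding similarity than on exact string matching.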

### Detailed Analysis

The diagram visually maps the flow of information and processing steps:

1. A complex natural language question (**Q**) enters the system.

2. The **Decomposition** module breaks it into a structured set of simpler, answerable sub-questions (`q` nodes).

3. The **Extraction** module processes these sub-questions to identify key entities and relationships (**Keys**, **Triples**).

4. These keys and triples are used to **Retrieve** relevant facts from **External Knowledge Sources**.

5. The retrieved information feeds into the **Self-Verification** module, where an LLM verifies the leaf sub-answers (`ã²` nodes), reasons upward to intermediate answers (`a¹` nodes), and from these builds and confirms the final answer (`a⁰`).

6. The process culminates in a specific, factual answer: **"1986-2013."**
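The steps above can be traced end to end on the example question with a small sketch. It assumes that decomposition and extraction have already produced, for each sub-question, a triple pattern with one unknown slot (`?`) and optional references to earlier sub-answers (`#0`, `#1`, ...). In the diagram these are LLM steps, so `decompose` here is a hard-coded, hypothetical stand-in; retrieval is exact pattern matching over a toy fact list, and self-verification is reduced to re-checking that every filled-in sub-question is a known fact.

```python
from dataclasses import dataclass

Triple = tuple[str, str, str]

# Toy "External Knowledge Source" (illustrative facts, not the system's data).
KB: list[Triple] = [
    ("Alex Ferguson", "recruited", "David Beckham"),
    ("Alex Ferguson", "managed Manchester United during", "1986-2013"),
]


@dataclass
class SubQuestion:
    text: str
    # (subject, predicate, object): "?" marks the unknown, "#i" the answer of hop i.
    pattern: Triple


def decompose(question: str) -> list[SubQuestion]:
    """Hypothetical stand-in for LLM decomposition + extraction (ignores its input)."""
    return [
        SubQuestion("Which football manager recruited David Beckham?",
                    ("?", "recruited", "David Beckham")),
        SubQuestion("During which years did that manager lead Manchester United?",
                    ("#0", "managed Manchester United during", "?")),
    ]


def resolve(pattern: Triple, answers: list[str]) -> Triple:
    """Substitute earlier sub-answers into '#i' placeholders."""
    return tuple(answers[int(p[1:])] if p.startswith("#") else p for p in pattern)


def retrieve(pattern: Triple, kb: list[Triple]) -> list[Triple]:
    """Return the triples that match every position except the '?' slot."""
    return [t for t in kb if all(p in ("?", v) for p, v in zip(pattern, t))]


def verify(plan: list[SubQuestion], answers: list[str], kb: list[Triple]) -> bool:
    """Simplified self-verification: each fully filled sub-question must be a known fact."""
    for i, sq in enumerate(plan):
        filled = tuple(answers[i] if p == "?" else p
                       for p in resolve(sq.pattern, answers[:i]))
        if filled not in kb:
            return False
    return True


def answer(question: str, kb: list[Triple]) -> str:
    plan = decompose(question)
    answers: list[str] = []
    for sq in plan:
        pattern = resolve(sq.pattern, answers)
        hits = retrieve(pattern, kb)
        if len(hits) != 1:
            raise ValueError(f"could not ground sub-question: {sq.text!r}")
        answers.append(hits[0][pattern.index("?")])
    if not verify(plan, answers, kb):
        raise ValueError("self-verification failed")
    return answers[-1]


q = ("The football manager who recruited David Beckham managed "
     "Manchester United during what timeframe?")
print(answer(q, KB))  # 1986-2013
```

The second hop cannot be retrieved until the first has been answered, because its subject is the placeholder `#0`; this is what makes the question multi-hop.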

### Key Observations

* **Hierarchical Structure:** Both the question decomposition and answer construction are represented as tree structures, emphasizing a hierarchical, step-by-step reasoning process.

* **Modular Design:** The process is clearly segmented into distinct functional modules (Decomposition, Extraction, Verification), each with a specific role.

* **LLM Integration:** An LLM icon is present in all three main modules, indicating it is the core engine driving decomposition, extraction/retrieval, and reasoning/verification.

* **Example-Driven:** The diagram uses a concrete, multi-hop factual question about football history to ground the abstract process in a tangible example.

* **Color Coding:** Red is used for question processing, green for knowledge interaction, and blue for answer synthesis and verification, creating a clear visual distinction between phases.
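The hierarchical answer construction noted in these observations amounts to a post-order traversal: leaves (`ã²`) are checked first, each intermediate node (`a¹`) is combined from its verified children, and the root (`a⁰`) is built last. The sketch assumes per-node combine and check functions supplied by the caller, whereas in the diagram the LLM plays both roles. `AnswerNode`, `synthesize`, and `years_ordered` are hypothetical names, and the tree is a simplified example rather than the diagram's exact three-by-five layout.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Optional


@dataclass
class AnswerNode:
    label: str                                   # e.g. "a⁰", "a¹₁", "ã²₁"
    value: Optional[str] = None                  # leaves start with a retrieved sub-answer
    children: List["AnswerNode"] = field(default_factory=list)


Combine = Callable[[List[str]], str]
Check = Callable[[str], bool]


def synthesize(node: AnswerNode,
               combine: Dict[str, Combine],
               checks: Dict[str, Check]) -> str:
    """Resolve the tree bottom-up; each node is checked before its parent uses it.

    Hypothetical stand-in: the diagram uses an LLM for combining and checking.
    Internal nodes have their `value` overwritten with the combined result.
    """
    if node.children:
        child_values = [synthesize(c, combine, checks) for c in node.children]
        node.value = combine[node.label](child_values)
    if node.value is None:
        raise ValueError(f"{node.label}: no answer")
    check = checks.get(node.label, lambda v: bool(v.strip()))  # default: non-empty
    if not check(node.value):
        raise ValueError(f"{node.label}: verification failed for {node.value!r}")
    return node.value


def years_ordered(span: str) -> bool:
    """Sanity check for a 'YYYY-YYYY' span: both parts numeric and start before end."""
    parts = span.split("-")
    return (len(parts) == 2 and all(p.isdigit() for p in parts)
            and int(parts[0]) < int(parts[1]))


tree = AnswerNode("a⁰", children=[
    AnswerNode("a¹₁", children=[AnswerNode("ã²₁", "Alex Ferguson")]),
    AnswerNode("a¹₂", children=[AnswerNode("ã²₂", "1986"), AnswerNode("ã²₃", "2013")]),
])
combine = {
    "a¹₁": lambda vs: vs[0],
    "a¹₂": lambda vs: "-".join(vs),
    "a⁰": lambda vs: f"{vs[0]} managed Manchester United during {vs[1]}",
}
print(synthesize(tree, combine, {"a¹₂": years_ordered}))
# Alex Ferguson managed Manchester United during 1986-2013
```

Because a parent is only combined after all of its children pass their checks, a failed verification surfaces at the lowest node where it occurs instead of silently propagating into `a⁰`.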

### Interpretation

This diagram illustrates a **neuro-symbolic or retrieval-augmented generation (RAG) pipeline** designed to overcome the limitations of answering a complex query with a single LLM inference. Its three stages can be read as a **Peircean abduction-deduction-induction cycle**:

1. **Abduction (Decomposition):** The system hypothesizes a structure of sub-problems (`q` nodes) that, if solved, would answer the main question.

2. **Deduction & Retrieval (Extraction):** It deduces what specific information (keys, triples) is needed to solve those sub-problems and retrieves it from an external knowledge base.

3. **Induction & Verification (Self-Verification):** It induces a final answer (`a⁰`) from the sub-answers and verifies its consistency and correctness.

The process highlights the importance of **structured reasoning** and **grounding** in external facts for accurate complex question answering. The final answer, "1986-2013," corresponds to the tenure of Sir Alex Ferguson, who is famously associated with signing David Beckham and managing Manchester United during that period. The diagram effectively argues that answering such questions reliably requires more than just pattern matching; it requires explicit decomposition, targeted knowledge retrieval, and systematic verification.