TECHNICAL ASSET FINGERPRINT

abb4f03139115920f057f5c2

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

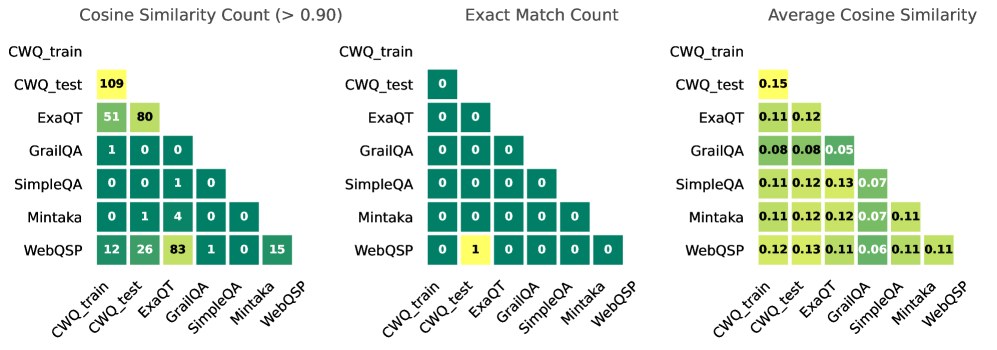

## Heatmap: Dataset Comparison Metrics

### Overview

The image presents three heatmaps comparing different datasets (CWQ_train, CWQ_test, ExaQT, GrailQA, SimpleQA, Mintaka, WebQSP) based on three metrics: Cosine Similarity Count (> 0.90), Exact Match Count, and Average Cosine Similarity. Each heatmap is a lower triangular matrix, where the rows and columns represent the datasets being compared. The color intensity of each cell represents the value of the metric for the corresponding dataset pair.

### Components/Axes

* **Titles:**

* Left: Cosine Similarity Count (> 0.90)

* Center: Exact Match Count

* Right: Average Cosine Similarity

* **Rows (Left Side):** CWQ_train, CWQ_test, ExaQT, GrailQA, SimpleQA, Mintaka, WebQSP

* **Columns (Bottom Side):** CWQ_train, CWQ_test, ExaQT, GrailQA, SimpleQA, Mintaka, WebQSP

* **Color Scale:** The color scale is not explicitly shown, but it appears to range from dark green (low values) to yellow (high values).

### Detailed Analysis

#### Cosine Similarity Count (> 0.90)

This heatmap shows the count of pairs with a cosine similarity greater than 0.90.

* **CWQ_train vs. CWQ_test:** 109

* **CWQ_train vs. ExaQT:** 51

* **CWQ_test vs. ExaQT:** 80

* **CWQ_train vs. GrailQA:** 1

* **CWQ_train vs. SimpleQA:** 0

* **CWQ_test vs. GrailQA:** 0

* **CWQ_test vs. SimpleQA:** 1

* **ExaQT vs. GrailQA:** 0

* **ExaQT vs. SimpleQA:** 0

* **GrailQA vs. SimpleQA:** 1

* **CWQ_train vs. Mintaka:** 0

* **CWQ_test vs. Mintaka:** 1

* **ExaQT vs. Mintaka:** 4

* **GrailQA vs. Mintaka:** 0

* **SimpleQA vs. Mintaka:** 0

* **CWQ_train vs. WebQSP:** 12

* **CWQ_test vs. WebQSP:** 26

* **ExaQT vs. WebQSP:** 83

* **GrailQA vs. WebQSP:** 1

* **SimpleQA vs. WebQSP:** 0

* **Mintaka vs. WebQSP:** 0

* **WebQSP vs. WebQSP:** 15
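A count like those listed above could be computed roughly as follows. This is a minimal sketch, assuming each dataset is available as a matrix of non-zero embedding vectors (one row per item). The source does not say how the embeddings behind the figure were produced, and the function name `count_similar_pairs` and the toy vectors are hypothetical.

```python
import numpy as np

def count_similar_pairs(a: np.ndarray, b: np.ndarray, threshold: float = 0.90) -> int:
    """Count (row of a, row of b) pairs whose cosine similarity exceeds threshold."""
    # Scale every row to unit length so a dot product equals cosine similarity.
    a_unit = a / np.linalg.norm(a, axis=1, keepdims=True)
    b_unit = b / np.linalg.norm(b, axis=1, keepdims=True)
    sims = a_unit @ b_unit.T  # shape: (len(a), len(b))
    return int((sims > threshold).sum())

# Toy 2-D "embeddings" (hypothetical values, not taken from the figure).
dataset_a = np.array([[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]])
dataset_b = np.array([[2.0, 0.1], [-1.0, 0.0]])
print(count_similar_pairs(dataset_a, dataset_b))  # 1
```

Only the first row of `dataset_a` points in nearly the same direction as the first row of `dataset_b`, so exactly one pair clears the threshold.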

#### Exact Match Count

This heatmap shows the count of exact matches between the datasets.

* **CWQ_train vs. CWQ_test:** 0

* **CWQ_train vs. ExaQT:** 0

* **CWQ_test vs. ExaQT:** 0

* **CWQ_train vs. GrailQA:** 0

* **CWQ_test vs. GrailQA:** 0

* **ExaQT vs. GrailQA:** 0

* **CWQ_train vs. SimpleQA:** 0

* **CWQ_test vs. SimpleQA:** 0

* **ExaQT vs. SimpleQA:** 0

* **GrailQA vs. SimpleQA:** 0

* **CWQ_train vs. Mintaka:** 0

* **CWQ_test vs. Mintaka:** 0

* **ExaQT vs. Mintaka:** 0

* **GrailQA vs. Mintaka:** 0

* **SimpleQA vs. Mintaka:** 0

* **CWQ_train vs. WebQSP:** 0

* **CWQ_test vs. WebQSP:** 1

* **ExaQT vs. WebQSP:** 0

* **GrailQA vs. WebQSP:** 0

* **SimpleQA vs. WebQSP:** 0

* **Mintaka vs. WebQSP:** 0

* **WebQSP vs. WebQSP:** 0
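An exact match count between two datasets might be obtained along these lines. The sketch assumes each dataset is a list of question strings and that matching is done after lowercasing and collapsing whitespace. Both the normalisation and the helper names are assumptions, since the source does not describe how matches were determined.

```python
def normalize(text: str) -> str:
    # Hypothetical normalisation: lowercase and collapse runs of whitespace.
    return " ".join(text.lower().split())

def exact_match_count(a: list[str], b: list[str]) -> int:
    """Number of distinct normalised strings that occur in both datasets."""
    return len({normalize(t) for t in a} & {normalize(t) for t in b})

# Toy questions (hypothetical, not drawn from the datasets in the figure).
questions_a = ["Who wrote Hamlet?", "where is  Paris located?"]
questions_b = ["who wrote hamlet?", "Who wrote Macbeth?"]
print(exact_match_count(questions_a, questions_b))  # 1
```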

#### Average Cosine Similarity

This heatmap shows the average cosine similarity between the datasets.

* **CWQ_train vs. CWQ_test:** 0.15

* **CWQ_train vs. ExaQT:** 0.11

* **CWQ_test vs. ExaQT:** 0.12

* **CWQ_train vs. GrailQA:** 0.08

* **CWQ_test vs. GrailQA:** 0.08

* **ExaQT vs. GrailQA:** 0.05

* **CWQ_train vs. SimpleQA:** 0.11

* **CWQ_test vs. SimpleQA:** 0.12

* **ExaQT vs. SimpleQA:** 0.13

* **GrailQA vs. SimpleQA:** 0.07

* **CWQ_train vs. Mintaka:** 0.11

* **CWQ_test vs. Mintaka:** 0.12

* **ExaQT vs. Mintaka:** 0.12

* **GrailQA vs. Mintaka:** 0.07

* **SimpleQA vs. Mintaka:** 0.11

* **CWQ_train vs. WebQSP:** 0.12

* **CWQ_test vs. WebQSP:** 0.13

* **ExaQT vs. WebQSP:** 0.11

* **GrailQA vs. WebQSP:** 0.06

* **SimpleQA vs. WebQSP:** 0.11

* **Mintaka vs. WebQSP:** 0.11

* **WebQSP vs. WebQSP:** 0.11
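An average cosine similarity between two datasets can be sketched as the mean over all cross-dataset pairs. The code below assumes non-zero embedding rows and uses hypothetical function names. It also shows a shortcut: because the mean of all pairwise dot products factorises, the same number equals the dot product of the two mean unit vectors.

```python
import numpy as np

def unit_rows(x: np.ndarray) -> np.ndarray:
    # Scale every row to unit length (rows are assumed to be non-zero).
    return x / np.linalg.norm(x, axis=1, keepdims=True)

def average_cosine_similarity(a: np.ndarray, b: np.ndarray) -> float:
    """Mean cosine similarity over every (row of a, row of b) pair."""
    return float((unit_rows(a) @ unit_rows(b).T).mean())

def average_cosine_similarity_fast(a: np.ndarray, b: np.ndarray) -> float:
    """Same value without building the full pairwise matrix."""
    return float(unit_rows(a).mean(axis=0) @ unit_rows(b).mean(axis=0))

# Toy vectors (hypothetical): pairwise similarities are 1, 1, 0, 0.
a = np.array([[1.0, 0.0], [0.0, 1.0]])
b = np.array([[3.0, 0.0], [5.0, 0.0]])
print(average_cosine_similarity(a, b))       # 0.5
print(average_cosine_similarity_fast(a, b))  # 0.5
```

The second form avoids the memory cost of the full pairwise matrix, which matters when both datasets are large.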

### Key Observations

* **Cosine Similarity Count:** The highest counts are CWQ_train vs. CWQ_test (109), ExaQT vs. WebQSP (83), and CWQ_test vs. ExaQT (80), indicating many highly similar pairs between these datasets.

* **Exact Match Count:** Exact matches are rare, with only CWQ_test and WebQSP having a single exact match.

* **Average Cosine Similarity:** The average cosine similarity values are relatively low across all dataset pairs, ranging from 0.05 to 0.15.

### Interpretation

The heatmaps provide insights into the similarity and overlap between different datasets. The high cosine similarity counts between CWQ_train, CWQ_test, ExaQT, and WebQSP suggest that these datasets contain many similar questions or examples. The low exact match counts indicate that while the questions may be semantically similar, they are rarely identical. The average cosine similarity values suggest that, on average, the datasets are not highly similar, but there are pockets of high similarity as indicated by the cosine similarity counts. The data suggests that ExaQT and WebQSP share a significant number of semantically similar questions.

EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED

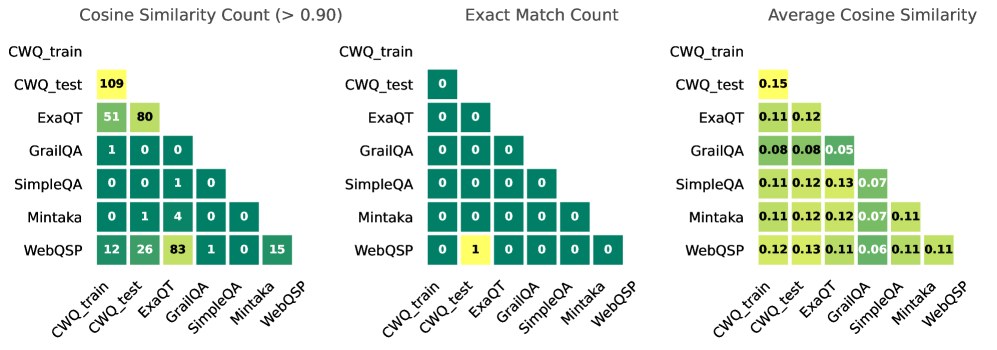

## Heatmap: Cosine Similarity and Match Counts Between Datasets

### Overview

The image presents three heatmaps comparing different datasets (CWQ_train, CWQ_test, ExaQT, GrailQA, SimpleQA, Mintaka, WebQSP) based on cosine similarity and exact match counts. The heatmaps are arranged horizontally, with the first showing cosine similarity counts (above 0.90), the second showing exact match counts, and the third showing average cosine similarity. Each heatmap uses a color gradient to represent the magnitude of the values, with darker shades indicating higher values.

### Components/Axes

* **Datasets:** The datasets are listed on both the x-axis and y-axis of each heatmap: CWQ_train, CWQ_test, ExaQT, GrailQA, SimpleQA, Mintaka, WebQSP.

* **Heatmap 1 (Cosine Similarity Count (> 0.90)):** Values represent the number of pairs drawn from the two datasets whose cosine similarity exceeds 0.90.

* **Heatmap 2 (Exact Match Count):** Values represent the number of exact matches between two datasets.

* **Heatmap 3 (Average Cosine Similarity):** Values represent the average cosine similarity between two datasets.

* **Color Scale:** The color scale is not explicitly provided, but it appears to range from light green (low values) to dark green (high values).

* **Placement:** The three heatmaps are positioned side-by-side horizontally. The dataset labels are positioned below each heatmap.

### Detailed Analysis

**Heatmap 1: Cosine Similarity Count (> 0.90)**

* CWQ_train vs. CWQ_test: 109

* CWQ_train vs. ExaQT: 51

* CWQ_train vs. GrailQA: 1

* CWQ_train vs. SimpleQA: 0

* CWQ_train vs. Mintaka: 1

* CWQ_train vs. WebQSP: 12

* CWQ_test vs. ExaQT: 80

* CWQ_test vs. GrailQA: 0

* CWQ_test vs. SimpleQA: 0

* CWQ_test vs. Mintaka: 4

* CWQ_test vs. WebQSP: 26

* ExaQT vs. GrailQA: 0

* ExaQT vs. SimpleQA: 0

* ExaQT vs. Mintaka: 0

* ExaQT vs. WebQSP: 83

* GrailQA vs. SimpleQA: 0

* GrailQA vs. Mintaka: 0

* GrailQA vs. WebQSP: 0

* SimpleQA vs. Mintaka: 0

* SimpleQA vs. WebQSP: 0

* Mintaka vs. WebQSP: 15

**Heatmap 2: Exact Match Count**

* All values are 0, including every diagonal (self-comparison) cell, except:

* CWQ_train vs. WebQSP: 1 (the same pair as WebQSP vs. CWQ_train: 1)

**Heatmap 3: Average Cosine Similarity**

* CWQ_train vs. CWQ_test: 0.15

* CWQ_train vs. ExaQT: 0.11, 0.12

* CWQ_train vs. GrailQA: 0.08, 0.05

* CWQ_train vs. SimpleQA: 0.11, 0.12, 0.07

* CWQ_train vs. Mintaka: 0.11, 0.12, 0.07

* CWQ_train vs. WebQSP: 0.12, 0.12, 0.11

* CWQ_test vs. ExaQT: 0.11

* CWQ_test vs. GrailQA: 0.08

* CWQ_test vs. SimpleQA: 0.11

* CWQ_test vs. Mintaka: 0.11

* CWQ_test vs. WebQSP: 0.11

### Key Observations

* **High Cosine Similarity:** CWQ_train and CWQ_test exhibit the highest cosine similarity counts (>0.90), with a value of 109. This suggests a strong overlap between these two datasets.

* **Exact Matches are Rare:** Exact matches between datasets are extremely rare: only one dataset pair (CWQ_train and WebQSP, listed in both orders) has a count of 1.

* **WebQSP and ExaQT Correlation:** WebQSP shows a relatively high cosine similarity count with ExaQT (83 pairs above 0.90).

* **Low Average Cosine Similarity:** The average cosine similarity values are generally low, ranging from 0.05 to 0.15, indicating a limited overall similarity between the datasets.

* **GrailQA is Distinct:** GrailQA consistently shows low cosine similarity counts and average cosine similarity values, suggesting it is quite different from the other datasets.

### Interpretation

The data suggests that while some datasets share a high degree of similarity (specifically CWQ_train and CWQ_test), most datasets are relatively distinct from each other. The scarcity of exact matches reinforces this observation. The high cosine similarity count between CWQ_train and CWQ_test likely indicates that CWQ_test is a closely related variation of CWQ_train rather than a verbatim subset, since the exact match count for this pair is 0. The low average cosine similarity values suggest that these datasets cover different aspects of the underlying problem space, or are represented in different ways. The distinctness of GrailQA could be due to its unique data source, task formulation, or data processing pipeline. The heatmap provides a quantitative assessment of the relationships between these datasets, which can be valuable for tasks such as dataset selection, transfer learning, and model evaluation. The fact that exact matches are so rare suggests that the datasets are not simply duplicates or minor variations of each other.

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Heatmap Comparison: Dataset Similarity Metrics

### Overview

The image displays three horizontally arranged heatmap charts comparing seven datasets across three different similarity metrics. The datasets are: CWQ_train, CWQ_test, ExaQT, GrailQA, SimpleQA, Mintaka, and WebQSP. Each heatmap is a lower-triangular matrix showing pairwise comparisons.
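A lower-triangular comparison matrix of this kind can be assembled as in the sketch below. It assumes the diagonal is kept (the description later refers to diagonal cells) and uses a placeholder metric. The function name `lower_triangular` and the constant metric are hypothetical, and real code would compare the two named datasets.

```python
import numpy as np

datasets = ["CWQ_train", "CWQ_test", "ExaQT", "GrailQA",
            "SimpleQA", "Mintaka", "WebQSP"]

def lower_triangular(metric, names):
    """Fill cells on and below the diagonal; leave the rest as NaN (blank)."""
    n = len(names)
    grid = np.full((n, n), np.nan)
    for row in range(n):
        for col in range(row + 1):  # col <= row: diagonal and below
            grid[row, col] = metric(names[row], names[col])
    return grid

# Placeholder metric (hypothetical): always returns 0.0.
grid = lower_triangular(lambda row_name, col_name: 0.0, datasets)
filled = int(np.count_nonzero(~np.isnan(grid)))
print(filled)  # 28 = 21 distinct pairs + 7 self-comparisons
```

Because each metric here is symmetric in its two arguments, the upper triangle would only repeat the lower one, which is why it can be left blank.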

### Components/Axes

* **Chart Titles (Top):**

* Left: "Cosine Similarity Count (> 0.90)"

* Center: "Exact Match Count"

* Right: "Average Cosine Similarity"

* **Axes Labels (Identical for all three charts):**

* **Y-axis (Vertical, Left side):** Lists datasets from top to bottom: CWQ_train, CWQ_test, ExaQT, GrailQA, SimpleQA, Mintaka, WebQSP.

* **X-axis (Horizontal, Bottom):** Lists datasets from left to right: CWQ_train, CWQ_test, ExaQT, GrailQA, SimpleQA, Mintaka, WebQSP. Labels are rotated approximately 45 degrees.

* **Legend/Color Scale:** Each chart uses a sequential color scale from dark green (low values) to bright yellow (high values). The specific numerical mapping is not provided, but the relative intensity is clear.

### Detailed Analysis

#### Chart 1: Cosine Similarity Count (> 0.90)

This chart counts the number of data points with a cosine similarity greater than 0.90 between dataset pairs.

* **Trend:** The diagonal (self-comparison) and certain off-diagonal pairs show high counts (yellow), while most other pairs have very low counts (dark green).

* **Data Points (Row, Column -> Value):**

* CWQ_test vs. CWQ_train -> **109** (Highest value in the chart)

* ExaQT vs. CWQ_train -> **51**

* ExaQT vs. CWQ_test -> **80**

* GrailQA vs. CWQ_train -> **1**

* GrailQA vs. ExaQT -> **0**

* SimpleQA vs. CWQ_train -> **0**

* SimpleQA vs. ExaQT -> **1**

* SimpleQA vs. GrailQA -> **0**

* Mintaka vs. CWQ_train -> **0**

* Mintaka vs. ExaQT -> **1**

* Mintaka vs. GrailQA -> **4**

* Mintaka vs. SimpleQA -> **0**

* WebQSP vs. CWQ_train -> **12**

* WebQSP vs. CWQ_test -> **26**

* WebQSP vs. ExaQT -> **83**

* WebQSP vs. GrailQA -> **1**

* WebQSP vs. SimpleQA -> **0**

* WebQSP vs. Mintaka -> **15**

* WebQSP vs. WebQSP (diagonal) -> **15**

#### Chart 2: Exact Match Count

This chart counts the number of exact matches between dataset pairs.

* **Trend:** The matrix is almost entirely dark green (zero), indicating extremely few exact matches. Only one off-diagonal cell is non-zero.

* **Data Points (Row, Column -> Value):**

* All diagonal cells (self-comparison) are **0**.

* All off-diagonal cells are **0**, except:

* WebQSP vs. CWQ_test -> **1**

#### Chart 3: Average Cosine Similarity

This chart shows the average cosine similarity score between dataset pairs.

* **Trend:** Values are generally low (all below 0.20). The diagonal (self-similarity) tends to have the highest values in each row, shown in lighter yellow-green. Off-diagonal similarities are modest.

* **Data Points (Row, Column -> Value):**

* CWQ_test vs. CWQ_train -> **0.15**

* ExaQT vs. CWQ_train -> **0.11**

* ExaQT vs. CWQ_test -> **0.12**

* GrailQA vs. CWQ_train -> **0.08**

* GrailQA vs. CWQ_test -> **0.08**

* GrailQA vs. ExaQT -> **0.05**

* SimpleQA vs. CWQ_train -> **0.11**

* SimpleQA vs. CWQ_test -> **0.12**

* SimpleQA vs. ExaQT -> **0.13**

* SimpleQA vs. GrailQA -> **0.07**

* Mintaka vs. CWQ_train -> **0.11**

* Mintaka vs. CWQ_test -> **0.12**

* Mintaka vs. ExaQT -> **0.12**

* Mintaka vs. GrailQA -> **0.07**

* Mintaka vs. SimpleQA -> **0.11**

* WebQSP vs. CWQ_train -> **0.12**

* WebQSP vs. CWQ_test -> **0.13**

* WebQSP vs. ExaQT -> **0.11**

* WebQSP vs. GrailQA -> **0.06**

* WebQSP vs. SimpleQA -> **0.11**

* WebQSP vs. Mintaka -> **0.11**

* WebQSP vs. WebQSP (diagonal) -> **0.11**

### Key Observations

1. **High Pairwise Similarity (Cosine Count):** CWQ_test, ExaQT, and WebQSP form a cluster with high counts of high-similarity pairs (>0.90). The pair (ExaQT, WebQSP) has the second-highest count (83).

2. **Near-Zero Exact Matches:** Exact matches between different datasets are virtually non-existent (only 1 instance found). This indicates the datasets are distinct in their exact content.

3. **Low Average Similarity:** Despite some pairs having many high-similarity points, the *average* cosine similarity across all pairs is low (0.05 to 0.15). This suggests similarity is not uniform but concentrated in subsets of data.

4. **Self-Similarity:** The diagonal values confirm that datasets are most similar to themselves, which is an expected sanity check.

### Interpretation

This analysis compares the composition of several question-answering or text datasets. The findings suggest:

* **Dataset Relationships:** CWQ (train/test), ExaQT, and WebQSP share significant semantic overlap, as evidenced by high cosine similarity counts. They may contain similar types of questions, answers, or textual patterns.

* **Distinct Content:** The lack of exact matches confirms these are not simply copies of each other; they are unique corpora. The similarity is in meaning or structure, not in verbatim text.

* **Nature of Similarity:** The contrast between high "Cosine Similarity Count (>0.90)" and low "Average Cosine Similarity" is critical. It implies that within these datasets, there are specific clusters or types of data points that are very similar to each other, but these clusters are embedded within a larger body of data that is not similar. The similarity is localized, not global.

* **Utility for Modeling:** Datasets with high pairwise similarity (like CWQ and ExaQT) might be used for cross-domain evaluation or could indicate redundancy. The low overall average similarity suggests that combining these datasets could provide a more diverse training or testing set. The outlier pair (WebQSP, ExaQT) with a high similarity count (83) warrants specific investigation into their common characteristics.
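The contrast between a sizeable high-similarity count and a low average can be reproduced on synthetic data. The sketch below uses random vectors with a handful of planted near-duplicates. All sizes, the noise level, and the seed are arbitrary assumptions, and nothing here is derived from the datasets in the figure.

```python
import numpy as np

rng = np.random.default_rng(0)
n, dim, planted = 200, 64, 5

a = rng.standard_normal((n, dim))
b = rng.standard_normal((n, dim))
# Plant a few near-duplicates: the first rows of b are noisy copies of rows of a.
b[:planted] = a[:planted] + 0.01 * rng.standard_normal((planted, dim))

# Unit-normalise rows so the matrix product holds cosine similarities.
a_unit = a / np.linalg.norm(a, axis=1, keepdims=True)
b_unit = b / np.linalg.norm(b, axis=1, keepdims=True)
sims = a_unit @ b_unit.T

high_count = int((sims > 0.90).sum())  # picks up the planted pairs
average = float(sims.mean())           # dominated by the unrelated pairs
print(high_count, round(average, 3))
```

The planted pairs are the only ones that clear the 0.90 threshold, yet they are 5 of 40,000 pairs, so they barely move the mean. This is the localized rather than global similarity described above.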

EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Heatmaps: Dataset Comparison Metrics

### Overview

The image presents three comparative heatmaps analyzing question-answering datasets (CWQ_train, CWQ_test, ExaQT, GrailQA, SimpleQA, Mintaka, WebQSP) across three metrics:

1. **Cosine Similarity Count (>0.90)**

2. **Exact Match Count**

3. **Average Cosine Similarity**

Values are color-coded (yellow=highest, green=medium, dark green=lowest) and positioned in a matrix format.

---

### Components/Axes

- **X-axis (Columns)**: Datasets used as reference points (CWQ_train, CWQ_test, ExaQT, GrailQA, SimpleQA, Mintaka, WebQSP).

- **Y-axis (Rows)**: Datasets being compared against the reference points.

- **Legend**:

- **Yellow**: Highest values (e.g., 109, 0.15).

- **Green**: Medium values (e.g., 80, 0.12).

- **Dark Green**: Lowest values (e.g., 0, 0.05).

- **Placement**: Legend is positioned to the right of each heatmap.

---

### Detailed Analysis

#### 1. **Cosine Similarity Count (>0.90)**

- **CWQ_train vs CWQ_test**: 109 (yellow).

- **ExaQT vs CWQ_train**: 51 (green), **ExaQT vs CWQ_test**: 80 (green).

- **GrailQA**: All values ≤1 (dark green).

- **SimpleQA**: 1 match (dark green) in SimpleQA vs Mintaka.

- **Mintaka**: 4 matches (green) in Mintaka vs ExaQT.

- **WebQSP**: 12 (green) vs CWQ_train, 26 (green) vs CWQ_test, 83 (yellow) vs GrailQA, 15 (dark green) vs WebQSP.

#### 2. **Exact Match Count**

- **All values are 0** except:

- **WebQSP vs CWQ_test**: 1 (yellow).

#### 3. **Average Cosine Similarity**

- **CWQ_train vs CWQ_test**: 0.15 (yellow).

- **ExaQT**: 0.11 (vs CWQ_train), 0.12 (vs CWQ_test).

- **GrailQA**: 0.08 (vs CWQ_train/CWQ_test), 0.05 (self-comparison).

- **SimpleQA**: 0.11–0.13 (vs CWQ_train/CWQ_test), 0.07 (self-comparison).

- **Mintaka**: 0.11–0.12 (vs CWQ_train/CWQ_test), 0.07 (vs SimpleQA), 0.11 (self-comparison).

- **WebQSP**: 0.12–0.13 (vs CWQ_train/CWQ_test), 0.06 (vs GrailQA), 0.11 (self-comparison).

---

### Key Observations

1. **Dominance of CWQ_train/CWQ_test**:

- Highest Cosine Similarity Count (109) and Average Similarity (0.15) between CWQ_train and CWQ_test.

- Suggests these datasets share significant structural or data overlap.

2. **ExaQT Performance**:

- Strong similarity to CWQ_train/test (51–80 matches, 0.11–0.12 average).

- Indicates potential alignment with CWQ frameworks.

3. **Sparse Exact Matches**:

- Only 1 exact match (WebQSP vs CWQ_test) across all dataset pairs.

- Highlights rarity of identical entries despite similarity.

4. **Dataset-Specific Trends**:

- **GrailQA/SimpleQA**: Low similarity (≤0.08 average), suggesting divergent approaches.

- **WebQSP**: Moderate similarity (0.12–0.13) but low exact matches, implying nuanced differences.

---

### Interpretation

- **CWQ Framework Centrality**: CWQ_train/test act as a hub, with ExaQT showing partial alignment. Other datasets (GrailQA, SimpleQA) diverge significantly, possibly due to differences in how they were constructed.

- **Similarity ≠ Exactness**: A high count of similar pairs does not guarantee exact matches (e.g., CWQ_train vs CWQ_test: 109 pairs above 0.90 but 0 exact matches), suggesting semantic vs. lexical differences.

- **Outliers**:

- **SimpleQA vs Mintaka**: 4 matches (green) despite low average similarity (0.07), indicating sporadic overlaps.

- **WebQSP Self-Comparison**: 0.11 average similarity but 0 exact matches, highlighting internal variability.

- **Implications**:

- CWQ and ExaQT may share foundational design principles.

- GrailQA/SimpleQA require further investigation to identify unique methodologies.

- WebQSP’s moderate similarity but low exactness suggests potential for refinement in alignment strategies.
