## Diagram: Integrate Interpretability

### Overview

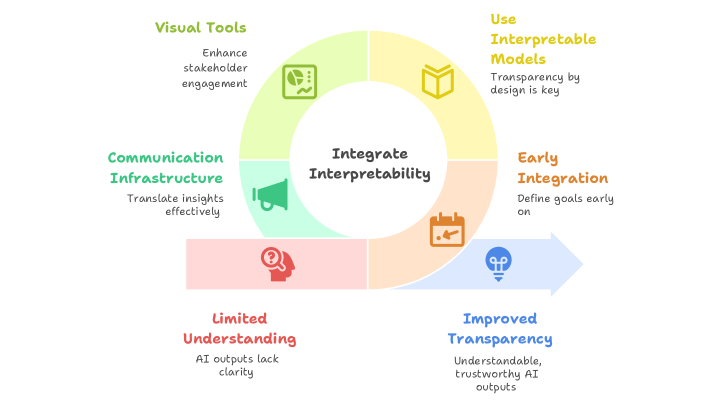

The image is a circular diagram showing how to integrate interpretability into AI systems. Six sectors surround the central label "Integrate Interpretability": "Visual Tools," "Use Interpretable Models," "Early Integration," "Improved Transparency," "Limited Understanding," and "Communication Infrastructure." An arrow from "Early Integration" to "Improved Transparency" marks the direction of flow.

### Components/Axes

* **Center:** "Integrate Interpretability"

* **Top:** "Visual Tools" (light green sector)

  * Description: "Enhance stakeholder engagement"

  * Icon: A chart icon with a line graph and data points.

* **Top-Right:** "Use Interpretable Models" (light yellow sector)

  * Description: "Transparency by design is key"

  * Icon: An open book icon.

* **Bottom-Right:** "Early Integration" (light orange sector)

  * Description: "Define goals early on"

  * Icon: A calendar icon.

* **Bottom:** "Improved Transparency" (light blue sector)

  * Description: "Understandable, trustworthy AI outputs"

  * Icon: A lightbulb icon.

* **Bottom-Left:** "Limited Understanding" (light red sector)

  * Description: "AI outputs lack clarity"

  * Icon: A head with a question mark inside.

* **Left:** "Communication Infrastructure" (light green sector)

  * Description: "Translate insights effectively"

  * Icon: A megaphone icon.

* **Arrow:** A light blue arrow starts from the "Early Integration" sector and points towards "Improved Transparency," indicating a progression or flow.

### Detailed Analysis

The diagram presents integrating interpretability as a cycle. Read clockwise from the top, visual tools enhance stakeholder engagement, interpretable models provide transparency by design, and early integration means defining goals early on. The arrow from "Early Integration" leads to improved transparency: understandable, trustworthy AI outputs. The "Limited Understanding" sector names the problem the cycle addresses, AI outputs that lack clarity, and the "Communication Infrastructure" sector covers translating insights effectively.

### Key Observations

* The diagram emphasizes the importance of interpretability in AI systems.

* The cyclical nature suggests a continuous improvement process.

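* Each sector pairs a label with a one-line description and an icon; four sectors name practices, one names the outcome ("Improved Transparency"), and one names the problem ("Limited Understanding").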

* The "Limited Understanding" stage highlights a potential problem that the cycle aims to solve.

### Interpretation

The diagram illustrates a strategy for building and maintaining interpretable AI systems. It suggests that by focusing on visual tools, interpretable models, early integration, and communication infrastructure, one can achieve improved transparency and overcome the challenges of limited understanding. The cyclical nature implies that this is an ongoing process of refinement and improvement. The diagram highlights the importance of transparency and stakeholder engagement in the development and deployment of AI systems.