## Diagram: Integrated Interpretability Framework

### Overview

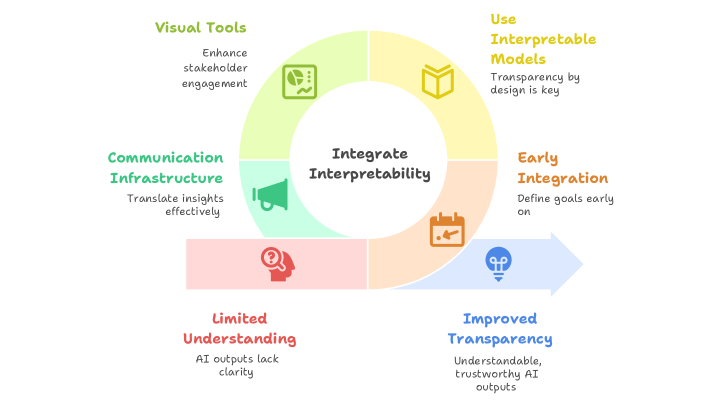

The image is a conceptual circular diagram of a framework for integrating interpretability into AI systems. The central theme, "Integrate Interpretability," is surrounded by six segments, each with its own color, icon, title, and short description. A single blue arrow runs from "Early Integration" to "Improved Transparency," suggesting a progression from one to the other.

### Components

The diagram is structured as a circle divided into six segments, each with a distinct color, icon, title, and descriptive text. The central circle contains the core concept.

**Central Element:**

* **Text:** "Integrate Interpretability"

* **Position:** Center of the diagram.

**Surrounding Segments (Clockwise from top-right):**

1. **Segment (Yellow):**
   * **Title:** "Use Interpretable Models"
   * **Icon:** An open book.
   * **Description:** "Transparency by design is key"
   * **Position:** Top-right quadrant.

2. **Segment (Orange):**
   * **Title:** "Early Integration"
   * **Icon:** A calendar with a checkmark.
   * **Description:** "Define goals early on"
   * **Position:** Right side. A blue arrow originates from this segment and points towards the "Improved Transparency" segment.

3. **Segment (Blue):**
   * **Title:** "Improved Transparency"
   * **Icon:** A lightbulb with a keyhole.
   * **Description:** "Understandable, trustworthy AI outputs"
   * **Position:** Bottom-right quadrant. This is the endpoint of the arrow from "Early Integration."

4. **Segment (Red/Pink):**
   * **Title:** "Limited Understanding"
   * **Icon:** A head silhouette with a question mark.
   * **Description:** "AI outputs lack clarity"
   * **Position:** Bottom-left quadrant.

5. **Segment (Teal/Green):**
   * **Title:** "Communication Infrastructure"
   * **Icon:** A megaphone.
   * **Description:** "Translate insights effectively"
   * **Position:** Left side.

6. **Segment (Light Green):**
   * **Title:** "Visual Tools"
   * **Icon:** A dashboard or chart icon.
   * **Description:** "Enhance stakeholder engagement"
   * **Position:** Top-left quadrant.

### Detailed Analysis

The diagram presents a non-linear but interconnected model. The central goal is to "Integrate Interpretability." The surrounding elements can be interpreted as both prerequisites and outcomes of this integration.

* **Problem State:** "Limited Understanding" (red/pink) is presented as a starting challenge or negative state where AI outputs are unclear.

* **Solution Components:** Four of the remaining segments represent actionable strategies for addressing this challenge and reaching the central goal:
  * **"Use Interpretable Models"** (yellow) advocates for model selection based on inherent transparency.
  * **"Early Integration"** (orange) emphasizes planning interpretability from the project's outset.
  * **"Communication Infrastructure"** (teal) focuses on the systems needed to convey AI insights.
  * **"Visual Tools"** (light green) highlights the role of visualization in making insights accessible.

* **Desired Outcome:** "Improved Transparency" (blue) is depicted as a direct result of "Early Integration" (via the arrow) and represents the positive end-state: trustworthy and understandable AI.
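
The structure described above can be written down as a small data model. The following is a minimal sketch, not part of the diagram itself: the `Segment` class, the `role` labels ("problem", "strategy", "outcome"), and the `titles_by_role` helper are illustrative assumptions, while the titles, colors, and descriptions are taken from the segment list.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Segment:
    title: str
    color: str
    description: str
    role: str  # assumed label: "problem", "strategy", or "outcome"


CENTER = "Integrate Interpretability"

# Segments in the clockwise order of the diagram, starting at the top-right.
SEGMENTS = [
    Segment("Use Interpretable Models", "yellow",
            "Transparency by design is key", "strategy"),
    Segment("Early Integration", "orange",
            "Define goals early on", "strategy"),
    Segment("Improved Transparency", "blue",
            "Understandable, trustworthy AI outputs", "outcome"),
    Segment("Limited Understanding", "red/pink",
            "AI outputs lack clarity", "problem"),
    Segment("Communication Infrastructure", "teal/green",
            "Translate insights effectively", "strategy"),
    Segment("Visual Tools", "light green",
            "Enhance stakeholder engagement", "strategy"),
]

# The only explicit arrow drawn in the diagram: (source, target).
ARROWS = [("Early Integration", "Improved Transparency")]


def titles_by_role(role):
    """Return the titles of all segments carrying the given role label."""
    return [segment.title for segment in SEGMENTS if segment.role == role]


if __name__ == "__main__":
    for role in ("problem", "strategy", "outcome"):
        print(role, titles_by_role(role))
```

Keeping the arrow as a separate edge list makes it clear that the diagram states only one directed relationship; every other connection between segments is a matter of interpretation.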

### Key Observations

1. **Circular Layout:** The circular arrangement suggests that these components are part of a continuous, holistic system rather than a strict linear sequence. They all feed into and support the central integration goal.

2. **Directional Flow:** The only explicit directional cue is the blue arrow from "Early Integration" to "Improved Transparency." This suggests a causal or sequential relationship between defining interpretability goals early and achieving transparent outcomes.

3. **Color Coding:** Each concept is assigned a unique, distinct color, aiding in visual segmentation and recall.

4. **Iconography:** Simple, familiar icons (book, calendar, lightbulb, etc.) are paired with each concept to reinforce its meaning visually.

5. **Problem-Solution Contrast:** The "Limited Understanding" segment (red/pink) is visually and conceptually contrasted with the "Improved Transparency" segment (blue), framing the diagram as a journey from a problem to a solution.

### Interpretation

This diagram outlines a **socio-technical framework** for making AI systems more interpretable. It argues that interpretability is not a single feature but an integrated practice requiring multiple, concurrent efforts.

* **The Core Argument:** True interpretability ("Integrate Interpretability") is achieved by simultaneously employing **technical solutions** (selecting interpretable models, building communication infrastructures, using visual tools) and **process-oriented solutions** (integrating interpretability goals early in the development lifecycle).

* **Relationship Between Elements:** The components read as interdependent, although the diagram draws only one explicit link. One plausible reading is that "Visual Tools" depend on a "Communication Infrastructure" to deliver their insights, and that both work best when the underlying model is itself interpretable ("Use Interpretable Models"). "Early Integration" acts as the catalyst: the arrow ties it directly to the benefit of "Improved Transparency."

* **Underlying Message:** The framework moves beyond a purely technical view of interpretability (e.g., just using SHAP values). It emphasizes that achieving trustworthy AI requires deliberate design choices, effective communication strategies, and stakeholder engagement from the very beginning of a project. The "Limited Understanding" segment serves as a warning of the consequence of neglecting this integrated approach.
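
To make "transparency by design" concrete, here is a minimal sketch of a model whose prediction decomposes exactly into per-feature contributions, so no post-hoc attribution step is needed. The diagram does not prescribe any particular model class; the linear form, the `explain_linear` function, the feature names, and all numbers below are hypothetical and chosen only for illustration.

```python
def explain_linear(weights, bias, features):
    """Score one input with a linear model and return the prediction
    together with each feature's additive contribution to it."""
    contributions = {
        name: weights[name] * value for name, value in features.items()
    }
    prediction = bias + sum(contributions.values())
    return prediction, contributions


# Hypothetical weights and inputs (illustrative only).
weights = {"income": 0.5, "debt": -2.0}
bias = 1.0
applicant = {"income": 4.0, "debt": 1.0}

prediction, contributions = explain_linear(weights, bias, applicant)

if __name__ == "__main__":
    print("prediction:", prediction)
    for name, value in contributions.items():
        print(f"  {name}: {value:+.2f}")
```

Because the prediction is just the bias plus the listed contributions, the explanation is the model itself rather than an approximation of it, which is one way to read the "Use Interpretable Models" segment.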