## Circular Diagram: Framework for AI Interpretability Integration

### Overview

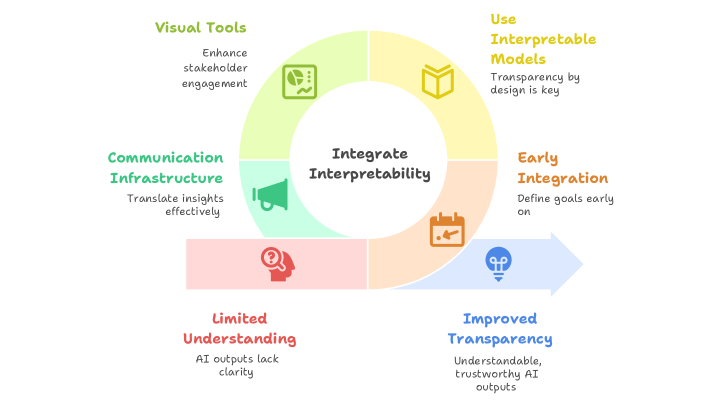

The diagram illustrates a cyclical framework for enhancing AI system transparency through four interconnected components. At its core, the phrase "Integrate Interpretability" anchors the process, with an arrow pointing rightward toward "Improved Transparency" as the ultimate outcome. The circular structure suggests iterative refinement of these elements.

### Components

1. **Quadrant Labels** (clockwise from top-left):

   - **Visual Tools** (green): "Enhance stakeholder engagement"

   - **Communication Infrastructure** (light blue): "Translate insights effectively"

   - **Early Integration** (orange): "Define goals early on"

   - **Limited Understanding** (pink): "AI outputs lack clarity"

2. **Central Node**:

   - Text: "Integrate Interpretability"

   - Color: White background with black text

3. **Arrow Element**:

   - Direction: Rightward from center

   - Label: "Improved Transparency"

   - Description: "Understandable, trustworthy AI outputs"

   - Color: Blue

4. **Icons**:

   - Pie chart (green) in Visual Tools quadrant

   - Megaphone (light blue) in Communication Infrastructure quadrant

   - Calendar (orange) in Early Integration quadrant

   - Question mark (pink) in Limited Understanding quadrant

### Detailed Analysis

- **Color Coding**:

  - Green (#32CD32) for Visual Tools

  - Light Blue (#ADD8E6) for Communication Infrastructure

  - Orange (#FFA500) for Early Integration

  - Pink (#FFC0CB) for Limited Understanding

  - Blue (#0000FF) for the transparency arrow

- **Spatial Relationships**:

  - All quadrants are equidistant from the center

  - The arrow originates at the center and terminates at the right edge

  - Icons are positioned at the quadrant centers
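The layout described above can be captured as a small data structure, which makes the spatial relationships easy to check. The following is a minimal sketch, assuming the quadrants appear in the clockwise order given under Quadrant Labels and using the hex values from the color list; the names `Quadrant`, `QUADRANTS`, `neighbors`, and `opposite` are hypothetical and introduced only for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Quadrant:
    name: str
    color_hex: str
    icon: str
    caption: str


# Clockwise from top-left, following the order in the Quadrant Labels list.
QUADRANTS = [
    Quadrant("Visual Tools", "#32CD32", "pie chart",
             "Enhance stakeholder engagement"),
    Quadrant("Communication Infrastructure", "#ADD8E6", "megaphone",
             "Translate insights effectively"),
    Quadrant("Early Integration", "#FFA500", "calendar",
             "Define goals early on"),
    Quadrant("Limited Understanding", "#FFC0CB", "question mark",
             "AI outputs lack clarity"),
]


def _index(name: str) -> int:
    """Position of a quadrant in the clockwise ordering."""
    return [q.name for q in QUADRANTS].index(name)


def neighbors(name: str) -> tuple[str, str]:
    """Return the (counter-clockwise, clockwise) neighbors of a quadrant."""
    i, n = _index(name), len(QUADRANTS)
    return QUADRANTS[(i - 1) % n].name, QUADRANTS[(i + 1) % n].name


def opposite(name: str) -> str:
    """Return the quadrant diagonally across the center."""
    i, n = _index(name), len(QUADRANTS)
    return QUADRANTS[(i + n // 2) % n].name


if __name__ == "__main__":
    for q in QUADRANTS:
        print(q.name, "| neighbors:", neighbors(q.name),
              "| opposite:", opposite(q.name))
```

Under this assumed ordering, each quadrant has exactly two neighbors and one diagonal counterpart, so any adjacency claim about the diagram can be checked against the listed order rather than read off the image by eye.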

### Key Observations

1. The cyclical nature implies continuous improvement through iterative integration

2. "Limited Understanding" (pink) is positioned opposite the transparency goal, suggesting it's a challenge to overcome

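3. Given the clockwise order listed above, Early Integration (orange) is adjacent to Communication Infrastructure and Limited Understanding and sits diagonally opposite Visual Tools, which is consistent with early planning acting as a foundation for the other components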

4. The megaphone icon in Communication Infrastructure visually reinforces the "translate insights" concept

### Interpretation

This framework suggests that achieving trustworthy AI requires:

1. **Proactive Design**: Early goal-setting (Early Integration) creates the foundation

2. **Multi-modal Communication**: Combining visual tools with structured communication infrastructure helps insights reach stakeholders effectively

3. **Addressing Limitations**: Recognizing that AI outputs currently lack clarity (Limited Understanding) motivates the integration of interpretability

4. **Iterative Process**: The circular structure suggests these elements must be continuously refined

The diagram emphasizes that transparency isn't achieved through a single solution but through systematic integration of design principles, communication strategies, and acknowledgment of current limitations. The rightward arrow implies that this integration process moves organizations from opacity toward explainable AI systems.