
## Horizontal Bar Chart: Salience Comparison of Large Language Models

### Overview

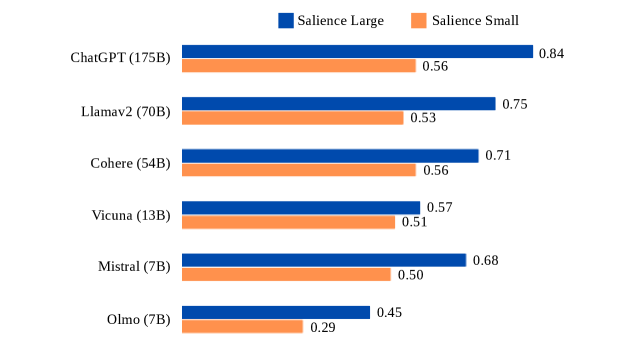

The image is a horizontal bar chart comparing the "Salience Large" and "Salience Small" scores of six Large Language Models (LLMs). Each model has a pair of horizontal bars, one per score, and the models are listed vertically along the left side of the chart.

### Components/Axes

* **Y-axis:** Lists the names of the LLMs: ChatGPT (175B), Llama2 (70B), Cohere (54B), Vicuna (13B), Mistral (7B), and Olmo (7B). The number in parentheses indicates the model size in billions of parameters.

* **X-axis:** Represents the salience score, ranging from approximately 0 to 1. The axis has no explicit scale; instead, each bar is labeled with its value at its end.

* **Legend:** Located in the top-right corner, the legend defines the colors used for "Salience Large" (blue) and "Salience Small" (orange).

### Detailed Analysis

The chart displays two bars for each LLM, one representing "Salience Large" and the other "Salience Small".

* **ChatGPT (175B):** "Salience Large" is approximately 0.84, and "Salience Small" is approximately 0.56. The blue bar is significantly longer than the orange bar.

* **Llama2 (70B):** "Salience Large" is approximately 0.75, and "Salience Small" is approximately 0.53. The blue bar is longer than the orange bar.

* **Cohere (54B):** "Salience Large" is approximately 0.71, and "Salience Small" is approximately 0.56. The blue bar is longer than the orange bar.

* **Vicuna (13B):** "Salience Large" is approximately 0.57, and "Salience Small" is approximately 0.51. The blue bar is slightly longer than the orange bar.

* **Mistral (7B):** "Salience Large" is approximately 0.68, and "Salience Small" is approximately 0.50. The blue bar is longer than the orange bar.

* **Olmo (7B):** "Salience Large" is approximately 0.45, and "Salience Small" is approximately 0.29. The blue bar is longer than the orange bar.

For all models, the "Salience Large" score is higher than the "Salience Small" score. The size of the gap varies considerably, from about 0.06 for Vicuna (13B) to about 0.28 for ChatGPT (175B).
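The per-model gaps can be checked with a few lines of Python. This is a minimal sketch that assumes the approximate values read off the bar labels above; the names `scores` and `gaps` are illustrative and not part of the original figure.

```python
# Approximate (Salience Large, Salience Small) values read off the chart.
scores = {
    "ChatGPT (175B)": (0.84, 0.56),
    "Llama2 (70B)": (0.75, 0.53),
    "Cohere (54B)": (0.71, 0.56),
    "Vicuna (13B)": (0.57, 0.51),
    "Mistral (7B)": (0.68, 0.50),
    "Olmo (7B)": (0.45, 0.29),
}

# Gap between the two scores, rounded to two decimals to avoid
# floating-point noise such as 0.27999999999999997.
gaps = {
    model: round(large - small, 2)
    for model, (large, small) in scores.items()
}

# Print the models from widest to narrowest gap.
for model, gap in sorted(gaps.items(), key=lambda kv: kv[1], reverse=True):
    print(f"{model:<15} {gap:.2f}")

smallest = min(gaps, key=gaps.get)
largest = max(gaps, key=gaps.get)
print("smallest gap:", smallest)
print("largest gap:", largest)
```

Because the inputs are approximate readings, differences of a few hundredths between models should not be over-interpreted.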

### Key Observations

* ChatGPT (175B) has the highest "Salience Large" score (0.84) and the largest gap between its "Salience Large" and "Salience Small" scores (about 0.28).

* Olmo (7B) has the lowest score on both measures ("Salience Large" 0.45, "Salience Small" 0.29). The smallest gap between the two scores belongs to Vicuna (13B), at about 0.06; Olmo's gap is about 0.16.

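* There is a general trend of larger models (higher parameter count) having higher "Salience Large" scores, with one exception: Mistral (7B) scores 0.68, above Vicuna (13B) at 0.57. The two 7B models also differ widely (0.68 versus 0.45), so parameter count alone does not determine the score.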
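The size trend can be checked pair by pair: for every two models of different size, does the larger one also have the higher "Salience Large" score? The sketch below assumes the approximate chart values listed earlier and uses illustrative names (`models`, `concordant`, `discordant`, `exceptions`). It is a simple pair count, not a formal significance test.

```python
from itertools import combinations

# (name, parameters in billions, approximate "Salience Large" score)
models = [
    ("ChatGPT", 175, 0.84),
    ("Llama2", 70, 0.75),
    ("Cohere", 54, 0.71),
    ("Vicuna", 13, 0.57),
    ("Mistral", 7, 0.68),
    ("Olmo", 7, 0.45),
]

concordant = 0   # larger model has the higher score
discordant = 0   # larger model does not have the higher score
exceptions = []

for (name_a, size_a, score_a), (name_b, size_b, score_b) in combinations(models, 2):
    if size_a == size_b:
        continue  # equal sizes say nothing about a size trend
    if (size_a - size_b) * (score_a - score_b) > 0:
        concordant += 1
    else:
        discordant += 1
        exceptions.append((name_a, name_b))

print(f"concordant pairs: {concordant}")
print(f"discordant pairs: {discordant}")
print(f"exceptions: {exceptions}")
```

With only six models, this supports describing the pattern as a tendency rather than a rule.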

### Interpretation

The chart suggests that "Salience Large" scores tend to be higher for larger language models. The chart does not define "Salience"; it likely refers to the model's ability to identify or emphasize important information. Every model scores higher on "Salience Large" than on "Salience Small", which indicates that the measurement condition affects the result, but the size of that effect is not uniform: the gap ranges from about 0.06 for Vicuna (13B) to about 0.28 for ChatGPT (175B).

ChatGPT, the largest model, has the highest "Salience Large" score, which is consistent with the hypothesis that model size is a factor in salience. Six models are too few to establish this, however, and the two 7B models (Mistral at 0.68 and Olmo at 0.45) show that models of the same size can differ widely. "Salience Small" scores are comparatively flat, between 0.50 and 0.56 for every model except Olmo, so most of the variation between models appears in the "Large" condition. This data could be used to inform model selection based on salience requirements, or to guide research into improving salience in smaller models.