TECHNICAL ASSET FINGERPRINT

ac95324d751e128f61d50a33


EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash


## Line Charts: Validation Loss vs. Tokens Seen

### Overview

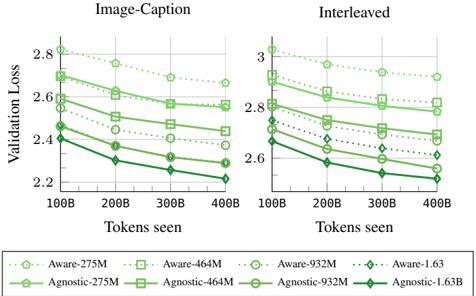

The image presents two line charts comparing the validation loss of "Aware" and "Agnostic" models at four parameter sizes (275M, 464M, 932M, and 1.63B) against the number of tokens seen during training. The left chart is labeled "Image-Caption" and the right chart "Interleaved." Both charts use the same axis labels and x-axis scale, but their y-axis ranges differ. Models can therefore be compared directly within each chart, and across the two conditions only with that caveat in mind.

### Components/Axes

* **Titles:** "Image-Caption" (left chart), "Interleaved" (right chart)

* **Y-axis Label:** "Validation Loss"

* Scale: 2.2 to 2.8 for "Image-Caption", 2.6 to 3.0 for "Interleaved"

* **X-axis Label:** "Tokens seen"

* Scale: 100B, 200B, 300B, 400B

* **Legend:** Located at the bottom of the image.

* Aware-275M (light green, dotted line, circle marker)

* Aware-464M (light green, dotted line, square marker)

* Aware-932M (light green, dotted line, no marker)

* Aware-1.63 (light green, dotted line, diamond marker)

* Agnostic-275M (dark green, solid line, circle marker)

* Agnostic-464M (dark green, solid line, square marker)

* Agnostic-932M (dark green, solid line, no marker)

* Agnostic-1.63B (dark green, solid line, diamond marker)

### Detailed Analysis

#### Image-Caption Chart

* **Aware-275M:** (light green, dotted line, circle marker) Starts at approximately 2.8, decreases to about 2.7 by 400B tokens.

* **Aware-464M:** (light green, dotted line, square marker) Starts at approximately 2.7, decreases to about 2.65 by 400B tokens.

* **Aware-932M:** (light green, dotted line, no marker) Starts at approximately 2.6, decreases to about 2.5 by 400B tokens.

* **Aware-1.63:** (light green, dotted line, diamond marker) Starts at approximately 2.45, decreases to about 2.4 by 400B tokens.

* **Agnostic-275M:** (dark green, solid line, circle marker) Starts at approximately 2.6, decreases to about 2.4 by 400B tokens.

* **Agnostic-464M:** (dark green, solid line, square marker) Starts at approximately 2.5, decreases to about 2.3 by 400B tokens.

* **Agnostic-932M:** (dark green, solid line, no marker) Starts at approximately 2.4, decreases to about 2.25 by 400B tokens.

* **Agnostic-1.63B:** (dark green, solid line, diamond marker) Starts at approximately 2.45, decreases to about 2.2 by 400B tokens.

#### Interleaved Chart

* **Aware-275M:** (light green, dotted line, circle marker) Starts at approximately 3.05, decreases to about 2.9 by 400B tokens.

* **Aware-464M:** (light green, dotted line, square marker) Starts at approximately 2.9, decreases to about 2.8 by 400B tokens.

* **Aware-932M:** (light green, dotted line, no marker) Starts at approximately 2.8, decreases to about 2.7 by 400B tokens.

* **Aware-1.63:** (light green, dotted line, diamond marker) Starts at approximately 2.7, decreases to about 2.6 by 400B tokens.

* **Agnostic-275M:** (dark green, solid line, circle marker) Starts at approximately 2.7, decreases to about 2.5 by 400B tokens.

* **Agnostic-464M:** (dark green, solid line, square marker) Starts at approximately 2.7, decreases to about 2.45 by 400B tokens.

* **Agnostic-932M:** (dark green, solid line, no marker) Starts at approximately 2.6, decreases to about 2.35 by 400B tokens.

* **Agnostic-1.63B:** (dark green, solid line, diamond marker) Starts at approximately 2.65, decreases to about 2.2 by 400B tokens.

### Key Observations

* In both charts, the "Agnostic" models generally exhibit lower validation loss compared to the "Aware" models for a given parameter size.

* Larger parameter sizes (1.63B) tend to result in lower validation loss compared to smaller parameter sizes (275M, 464M, 932M) for both "Aware" and "Agnostic" models.

* Validation loss is generally higher in the "Interleaved" chart than in the "Image-Caption" chart for both "Aware" and "Agnostic" models.

* The validation loss decreases as the number of tokens seen increases for all models and training methods.
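The observations above can be checked mechanically against the approximate readings listed in the Detailed Analysis. The Python sketch below is illustrative only: it assumes the (start, end) values transcribed from that section, treats "Aware-1.63" as the 1.63B size, and uses hypothetical helper names (`aware_minus_agnostic`, `decreases_with_size`, `interleaved_not_lower`).

```python
# Approximate (loss at ~100B tokens, loss at 400B tokens) readings, transcribed
# from the Detailed Analysis above. Values are visual estimates, not exact data.
SIZES = ["275M", "464M", "932M", "1.63B"]  # "Aware-1.63" is assumed to mean 1.63B

READINGS = {
    "Image-Caption": {
        "Aware": [(2.8, 2.7), (2.7, 2.65), (2.6, 2.5), (2.45, 2.4)],
        "Agnostic": [(2.6, 2.4), (2.5, 2.3), (2.4, 2.25), (2.45, 2.2)],
    },
    "Interleaved": {
        "Aware": [(3.05, 2.9), (2.9, 2.8), (2.8, 2.7), (2.7, 2.6)],
        "Agnostic": [(2.7, 2.5), (2.7, 2.45), (2.6, 2.35), (2.65, 2.2)],
    },
}


def end_losses(panel, family):
    """Loss at 400B tokens for each model size, in SIZES order."""
    return [end for _start, end in READINGS[panel][family]]


def aware_minus_agnostic(panel):
    """Per-size gap at 400B tokens; a positive gap means Agnostic is lower."""
    pairs = zip(SIZES, end_losses(panel, "Aware"), end_losses(panel, "Agnostic"))
    return {size: round(aware - agnostic, 2) for size, aware, agnostic in pairs}


def decreases_with_size(panel, family):
    """True if the 400B-token loss never rises as the model gets larger."""
    ends = end_losses(panel, family)
    return all(larger <= smaller for smaller, larger in zip(ends, ends[1:]))


def interleaved_not_lower(family):
    """True if every Interleaved end loss is >= its Image-Caption counterpart."""
    pairs = zip(end_losses("Interleaved", family), end_losses("Image-Caption", family))
    return all(il >= ic for il, ic in pairs)


if __name__ == "__main__":
    for panel in READINGS:
        print(panel, "Aware - Agnostic gap:", aware_minus_agnostic(panel))
        for family in ("Aware", "Agnostic"):
            print(f"  {family}: loss decreases with size -> {decreases_with_size(panel, family)}")
    for family in ("Aware", "Agnostic"):
        print(f"{family}: Interleaved >= Image-Caption -> {interleaved_not_lower(family)}")
```

Because the inputs are rough visual estimates, small differences (for example, the two Agnostic-1.63B endpoints that both read about 2.2) should not be over-interpreted.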

### Interpretation

The data suggest that "Agnostic" models achieve lower validation loss than "Aware" models, which may indicate better generalization. Increasing the parameter count also lowers loss, as expected. Loss is lower in the "Image-Caption" chart than in the "Interleaved" chart; this may reflect the nature of the data in each condition rather than the effectiveness of training, and the charts alone cannot distinguish these explanations. The steady decrease in validation loss as more tokens are seen indicates that the models keep improving as they are exposed to more data. The "Agnostic-1.63B" model reaches the lowest validation loss (about 2.2 at 400B tokens), making it the strongest configuration among those tested.


EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it


## Chart: Validation Loss vs. Tokens Seen

### Overview

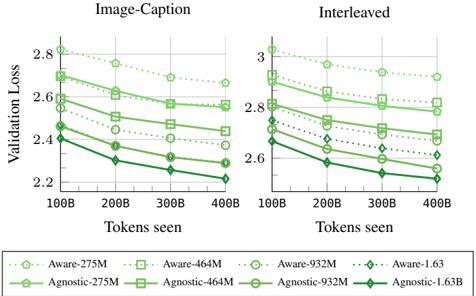

The image presents two line charts comparing validation loss against tokens seen for different model configurations. The left chart is labeled "Image-Caption" and the right chart is labeled "Interleaved". Each chart displays multiple lines representing different models, with the legend at the bottom identifying each line by model name and size (in millions or billions of parameters).

### Components/Axes

* **X-axis:** "Tokens seen", ranging from 100B to 400B, with tick marks at 100B, 200B, 300B, and 400B.

* **Y-axis (Left Chart):** "Validation Loss", ranging from 2.2 to 2.9, with tick marks at 2.2, 2.4, 2.6, and 2.8.

* **Y-axis (Right Chart):** "Validation Loss", ranging from 2.5 to 3.1, with tick marks at 2.6, 2.8, and 3.0.

* **Legend:** Located at the bottom of the image, containing the following model configurations:

* Aware-275M (light green, dashed)

* Agnostic-275M (dark green, solid)

* Aware-464M (light green, dash-dot)

* Agnostic-464M (dark green, dash-dot)

* Aware-932M (light green, dotted)

* Agnostic-932M (dark green, solid-dotted)

* Aware-1.63 (light green, solid)

* Agnostic-1.63B (dark green, solid)

### Detailed Analysis

**Image-Caption Chart (Left):**

* **Aware-275M:** Starts at approximately 2.85, decreases to approximately 2.65 at 200B, then fluctuates around 2.6 to 2.7, ending at approximately 2.6.

* **Agnostic-275M:** Starts at approximately 2.8, decreases to approximately 2.55 at 200B, then decreases to approximately 2.45 at 300B, and ends at approximately 2.4.

* **Aware-464M:** Starts at approximately 2.8, decreases to approximately 2.6 at 200B, then decreases to approximately 2.55 at 300B, and ends at approximately 2.5.

* **Agnostic-464M:** Starts at approximately 2.75, decreases to approximately 2.55 at 200B, then decreases to approximately 2.5 at 300B, and ends at approximately 2.5.

* **Aware-932M:** Starts at approximately 2.75, decreases to approximately 2.55 at 200B, then decreases to approximately 2.45 at 300B, and ends at approximately 2.4.

* **Agnostic-932M:** Starts at approximately 2.7, decreases to approximately 2.5 at 200B, then decreases to approximately 2.4 at 300B, and ends at approximately 2.35.

* **Aware-1.63:** Starts at approximately 2.7, decreases to approximately 2.45 at 200B, then decreases to approximately 2.3 at 300B, and ends at approximately 2.25.

* **Agnostic-1.63B:** Starts at approximately 2.65, decreases to approximately 2.4 at 200B, then decreases to approximately 2.3 at 300B, and ends at approximately 2.2.

**Interleaved Chart (Right):**

* **Aware-275M:** Starts at approximately 3.0, decreases to approximately 2.85 at 200B, then fluctuates around 2.8 to 2.9, ending at approximately 2.8.

* **Agnostic-275M:** Starts at approximately 3.0, decreases to approximately 2.8 at 200B, then decreases to approximately 2.75 at 300B, and ends at approximately 2.7.

* **Aware-464M:** Starts at approximately 3.0, decreases to approximately 2.8 at 200B, then decreases to approximately 2.7 at 300B, and ends at approximately 2.7.

* **Agnostic-464M:** Starts at approximately 2.95, decreases to approximately 2.75 at 200B, then decreases to approximately 2.7 at 300B, and ends at approximately 2.7.

* **Aware-932M:** Starts at approximately 2.95, decreases to approximately 2.75 at 200B, then decreases to approximately 2.65 at 300B, and ends at approximately 2.6.

* **Agnostic-932M:** Starts at approximately 2.9, decreases to approximately 2.7 at 200B, then decreases to approximately 2.6 at 300B, and ends at approximately 2.55.

* **Aware-1.63:** Starts at approximately 2.9, decreases to approximately 2.65 at 200B, then decreases to approximately 2.5 at 300B, and ends at approximately 2.4.

* **Agnostic-1.63B:** Starts at approximately 2.85, decreases to approximately 2.6 at 200B, then decreases to approximately 2.5 at 300B, and ends at approximately 2.4.

### Key Observations

* In both charts, all models exhibit a decreasing trend in validation loss as the number of tokens seen increases. This indicates that the models are learning and improving with more data.

* The larger models (Aware-1.63 and Agnostic-1.63B) consistently achieve lower validation loss compared to the smaller models.

* The "Agnostic" models generally perform slightly better than the "Aware" models, particularly at higher token counts.

* The rate of loss reduction appears to slow down as the number of tokens seen increases, suggesting diminishing returns from further training.
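The diminishing-returns observation can be made concrete by computing the loss reduction over each 100B-token interval. This sketch assumes the approximate Image-Caption readings listed above; Aware-275M is left out because the description gives a range rather than a single value at 300B, and the helper names are hypothetical.

```python
# Approximate Image-Caption readings at 100B, 200B, 300B and 400B tokens,
# transcribed from the description above (visual estimates only).
IMAGE_CAPTION = {
    "Agnostic-275M": [2.8, 2.55, 2.45, 2.4],
    "Aware-464M": [2.8, 2.6, 2.55, 2.5],
    "Agnostic-464M": [2.75, 2.55, 2.5, 2.5],
    "Aware-932M": [2.75, 2.55, 2.45, 2.4],
    "Agnostic-932M": [2.7, 2.5, 2.4, 2.35],
    "Aware-1.63": [2.7, 2.45, 2.3, 2.25],
    "Agnostic-1.63B": [2.65, 2.4, 2.3, 2.2],
}


def interval_drops(losses):
    """Loss reduction over each consecutive 100B-token interval."""
    return [round(earlier - later, 2) for earlier, later in zip(losses, losses[1:])]


def slows_down(losses):
    """True if the final interval improves loss less than the first interval."""
    drops = interval_drops(losses)
    return drops[-1] < drops[0]


for name, losses in IMAGE_CAPTION.items():
    print(f"{name:15s} drops per 100B: {interval_drops(losses)}  slowing: {slows_down(losses)}")
```

Comparing first and last intervals, rather than requiring every interval to shrink, keeps the check tolerant of the small plateaus that rounded visual readings produce.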

### Interpretation

The charts show how model size and the amount of training data affect validation loss. Larger models reach lower validation loss, which suggests better generalization. The "Agnostic" models' slight advantage suggests that their architecture or training procedure may be more effective. The steady decrease in validation loss as more tokens are seen confirms that additional data helps. The flattening of the curves at higher token counts points to diminishing returns, where each additional batch of data yields a smaller improvement. Together, these trends inform the trade-offs between model size, training data, and performance when planning training runs. The "Image-Caption" and "Interleaved" charts show similar trends, suggesting that these effects hold in both settings.


EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha


## Line Chart: Validation Loss vs. Tokens Seen for Image-Caption and Interleaved Tasks

### Overview

The image displays two side-by-side line charts comparing the validation loss of eight different model variants over the course of training, measured in tokens seen. The left chart is titled "Image-Caption" and the right chart is titled "Interleaved". Each chart plots the performance of four "Aware" models and four "Agnostic" models, distinguished by line style, marker, and color. The general trend for all models is a decreasing validation loss as the number of tokens seen increases.

### Components/Axes

* **Chart Titles:**

* Left Panel: "Image-Caption"

* Right Panel: "Interleaved"

* **X-Axis (Both Panels):**

* Label: "Tokens seen"

* Scale: Linear, with major tick marks at 100B, 200B, 300B, and 400B (B likely denotes Billion).

* **Y-Axis (Both Panels):**

* Label: "Validation Loss"

* Scale: Linear.

* Left Panel ("Image-Caption") Range: Approximately 2.2 to 2.8.

* Right Panel ("Interleaved") Range: Approximately 2.6 to 3.0.

* **Legend (Bottom Center, spanning both panels):**

* The legend defines eight model variants, each with a unique combination of line style, marker, and color.

* **Aware Models (Dotted Lines):**

* `Aware-275M`: Light green, dotted line, circle marker (○).

* `Aware-464M`: Light green, dotted line, square marker (□).

* `Aware-932M`: Light green, dotted line, circle marker (○). *Note: Shares marker with Aware-275M but is a distinct line.*

* `Aware-1.63`: Dark green, dotted line, diamond marker (◇).

* **Agnostic Models (Solid Lines):**

* `Agnostic-275M`: Light green, solid line, circle marker (○).

* `Agnostic-464M`: Light green, solid line, square marker (□).

* `Agnostic-932M`: Light green, solid line, circle marker (○). *Note: Shares marker with Agnostic-275M but is a distinct line.*

* `Agnostic-1.63B`: Dark green, solid line, diamond marker (◇). *Note: The label in the legend reads "Agnostic-1.63B", while the corresponding Aware model is labeled "Aware-1.63".*

### Detailed Analysis

**Trend Verification:** All data series in both charts show a clear downward trend, indicating that validation loss decreases as more tokens are seen during training.

**Image-Caption Panel (Left):**

* **Aware-275M (Light green, dotted, ○):** Highest loss curve. Starts at ~2.82 at 100B, decreases to ~2.65 at 400B.

* **Aware-464M (Light green, dotted, □):** Second highest. Starts at ~2.72 at 100B, decreases to ~2.58 at 400B.

* **Aware-932M (Light green, dotted, ○):** Third highest. Starts at ~2.62 at 100B, decreases to ~2.48 at 400B.

* **Aware-1.63 (Dark green, dotted, ◇):** Lowest among Aware models. Starts at ~2.52 at 100B, decreases to ~2.38 at 400B.

* **Agnostic-275M (Light green, solid, ○):** Starts at ~2.68 at 100B, decreases to ~2.52 at 400B.

* **Agnostic-464M (Light green, solid, □):** Starts at ~2.58 at 100B, decreases to ~2.42 at 400B.

* **Agnostic-932M (Light green, solid, ○):** Starts at ~2.48 at 100B, decreases to ~2.32 at 400B.

* **Agnostic-1.63B (Dark green, solid, ◇):** Lowest overall loss curve. Starts at ~2.42 at 100B, decreases to ~2.22 at 400B.

**Interleaved Panel (Right):**

* **Aware-275M (Light green, dotted, ○):** Highest loss curve. Starts at ~3.02 at 100B, decreases to ~2.90 at 400B.

* **Aware-464M (Light green, dotted, □):** Second highest. Starts at ~2.92 at 100B, decreases to ~2.80 at 400B.

* **Aware-932M (Light green, dotted, ○):** Third highest. Starts at ~2.82 at 100B, decreases to ~2.70 at 400B.

* **Aware-1.63 (Dark green, dotted, ◇):** Lowest among Aware models. Starts at ~2.72 at 100B, decreases to ~2.60 at 400B.

* **Agnostic-275M (Light green, solid, ○):** Starts at ~2.88 at 100B, decreases to ~2.75 at 400B.

* **Agnostic-464M (Light green, solid, □):** Starts at ~2.78 at 100B, decreases to ~2.65 at 400B.

* **Agnostic-932M (Light green, solid, ○):** Starts at ~2.68 at 100B, decreases to ~2.55 at 400B.

* **Agnostic-1.63B (Dark green, solid, ◇):** Lowest overall loss curve. Starts at ~2.62 at 100B, decreases to ~2.48 at 400B.

### Key Observations

1. **Consistent Hierarchy:** In both tasks, for a given model size (e.g., 275M), the "Agnostic" variant (solid line) consistently achieves a lower validation loss than its "Aware" counterpart (dotted line).

2. **Model Size Scaling:** For both "Aware" and "Agnostic" families, larger models (e.g., 1.63B vs. 275M) consistently achieve lower validation loss at every checkpoint.

3. **Task Difficulty:** The "Interleaved" task appears to be more challenging than the "Image-Caption" task, as evidenced by the higher absolute validation loss values across all comparable models (e.g., Agnostic-1.63B starts at ~2.62 for Interleaved vs. ~2.42 for Image-Caption).

4. **Rate of Improvement:** The slopes of the lines are relatively similar across models within a panel, suggesting that the rate of improvement with more training tokens is comparable, while larger models start from and maintain a lower loss.
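Observations 3 and 4 can be checked against the approximate readings above with a short Python sketch. It assumes those start (100B) and end (400B) values, expresses each slope as average loss reduction per 100B tokens, and uses hypothetical helper names.

```python
# Approximate (loss at 100B, loss at 400B) readings from the Detailed Analysis above.
IMAGE_CAPTION = {
    "Aware-275M": (2.82, 2.65), "Aware-464M": (2.72, 2.58),
    "Aware-932M": (2.62, 2.48), "Aware-1.63": (2.52, 2.38),
    "Agnostic-275M": (2.68, 2.52), "Agnostic-464M": (2.58, 2.42),
    "Agnostic-932M": (2.48, 2.32), "Agnostic-1.63B": (2.42, 2.22),
}
INTERLEAVED = {
    "Aware-275M": (3.02, 2.90), "Aware-464M": (2.92, 2.80),
    "Aware-932M": (2.82, 2.70), "Aware-1.63": (2.72, 2.60),
    "Agnostic-275M": (2.88, 2.75), "Agnostic-464M": (2.78, 2.65),
    "Agnostic-932M": (2.68, 2.55), "Agnostic-1.63B": (2.62, 2.48),
}

INTERVALS = 3  # 100B -> 400B spans three 100B-token intervals


def drop_per_100b(start_end):
    """Average loss reduction per 100B tokens between the two readings."""
    start, end = start_end
    return round((start - end) / INTERVALS, 3)


def slope_spread(panel):
    """Difference between the steepest and shallowest slope in a panel."""
    slopes = [drop_per_100b(v) for v in panel.values()]
    return round(max(slopes) - min(slopes), 3)


def task_gap_at_400b():
    """Interleaved minus Image-Caption loss at 400B tokens, per model."""
    return {name: round(INTERLEAVED[name][1] - IMAGE_CAPTION[name][1], 2)
            for name in IMAGE_CAPTION}


print("Image-Caption slope spread:", slope_spread(IMAGE_CAPTION))
print("Interleaved slope spread:", slope_spread(INTERLEAVED))
print("Interleaved - Image-Caption at 400B:", task_gap_at_400b())
```

A small slope spread within each panel, together with a positive Interleaved minus Image-Caption gap for every model, is what the "similar slopes" and "task difficulty" observations amount to numerically.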

### Interpretation

This chart compares "Agnostic" and "Aware" model architectures across two multimodal tasks (image captioning and interleaved image-text processing). The readings suggest that the "Agnostic" approach yields lower validation loss, and possibly better generalization, than the "Aware" approach at every model scale shown. The expected scaling trend also holds: increasing model capacity from 275M to 1.63B parameters consistently reduces loss. The persistent gap between the two tasks indicates that interleaved processing is a harder problem for these models to learn. Overall, the visualization shows that both the architectural choice ("Agnostic" vs. "Aware") and model size matter for reducing loss in these multimodal settings.
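The scaling claim can be illustrated by fitting a simple power law, L ≈ a·N^b, to the 400B-token Image-Caption readings above by least squares in log-log space. This is a sketch under loud assumptions: the loss values are visual estimates, "Aware-1.63" is taken to mean 1.63B parameters, and the pure power law omits the irreducible-loss constant that many published scaling-law fits include. With only four points per family, the fitted exponent is indicative at best.

```python
import math

# Parameter counts for the four sizes ("Aware-1.63" assumed to be 1.63B).
PARAMS = [275e6, 464e6, 932e6, 1.63e9]

# Approximate Image-Caption validation loss at 400B tokens (from the readings above).
END_LOSS = {
    "Aware": [2.65, 2.58, 2.48, 2.38],
    "Agnostic": [2.52, 2.42, 2.32, 2.22],
}


def fit_power_law(params, losses):
    """Fit log(L) = log(a) + b*log(N) by ordinary least squares; return (a, b)."""
    xs = [math.log(n) for n in params]
    ys = [math.log(loss) for loss in losses]
    x_mean = sum(xs) / len(xs)
    y_mean = sum(ys) / len(ys)
    cov = sum((x - x_mean) * (y - y_mean) for x, y in zip(xs, ys))
    var = sum((x - x_mean) ** 2 for x in xs)
    b = cov / var
    a = math.exp(y_mean - b * x_mean)
    return a, b


for family, losses in END_LOSS.items():
    a, b = fit_power_law(PARAMS, losses)
    predicted = [a * n**b for n in PARAMS]
    print(f"{family}: exponent b = {b:.3f}, fitted losses = {[round(p, 2) for p in predicted]}")
```

A negative exponent b means loss falls as parameter count grows; the fit says nothing about how far the trend extrapolates beyond 1.63B.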


EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free


## Line Graph: Validation Loss vs. Tokens Seen (Image-Caption and Interleaved)

### Overview

The image contains two line-graph panels, labeled "Image-Caption" (left) and "Interleaved" (right), comparing validation loss across different model sizes and architectures (Aware and Agnostic) as a function of tokens seen. The y-axis shows "Validation Loss" (about 2.2 to 3.0 across both panels). Multiple data series are plotted, each corresponding to a model variant (e.g., Aware-275M, Agnostic-275M) with distinct colors and markers.

### Components/Axes

- **X-Axis (Horizontal)**:

- Label: "Tokens seen"

- Tick marks: 100B, 200B, 300B, 400B (increasing left to right)

- Panels: "Image-Caption" (left) and "Interleaved" (right)

- **Y-Axis (Vertical)**:

- Label: "Validation Loss"

- Scale: 2.2 to 3.0

- **Legend (Bottom)**:

- **Aware Models**:

- Aware-275M: Dotted line with circle markers (light green)

- Aware-464M: Dotted line with square markers (light green)

- Aware-932M: Dotted line with circle markers (light green)

- Aware-1.63B: Dotted line with diamond markers (light green)

- **Agnostic Models**:

- Agnostic-275M: Solid line with square markers (dark green)

- Agnostic-464M: Solid line with square markers (dark green)

- Agnostic-932M: Solid line with circle markers (dark green)

- Agnostic-1.63B: Solid line with diamond markers (dark green)

### Detailed Analysis

#### Image-Caption Section

- **Aware-275M**: Starts at ~2.8 (100B tokens) and decreases to ~2.6 (400B tokens).

- **Aware-464M**: Starts at ~2.7 and decreases to ~2.5.

- **Aware-932M**: Starts at ~2.6 and decreases to ~2.4.

- **Aware-1.63B**: Starts at ~2.5 and decreases to ~2.3.

- **Agnostic-275M**: Starts at ~2.6 and decreases to ~2.4.

- **Agnostic-464M**: Starts at ~2.5 and decreases to ~2.3.

- **Agnostic-932M**: Starts at ~2.4 and decreases to ~2.2.

- **Agnostic-1.63B**: Starts at ~2.3 and decreases to ~2.1.

#### Interleaved Section

- **Aware-275M**: Starts at ~3.0 and decreases to ~2.8.

- **Aware-464M**: Starts at ~2.9 and decreases to ~2.7.

- **Aware-932M**: Starts at ~2.8 and decreases to ~2.6.

- **Aware-1.63B**: Starts at ~2.7 and decreases to ~2.5.

- **Agnostic-275M**: Starts at ~2.8 and decreases to ~2.6.

- **Agnostic-464M**: Starts at ~2.7 and decreases to ~2.5.

- **Agnostic-932M**: Starts at ~2.6 and decreases to ~2.4.

- **Agnostic-1.63B**: Starts at ~2.5 and decreases to ~2.3.

### Key Observations

1. **Downward Trend**: All models show a consistent decrease in validation loss as tokens seen increase, indicating improved performance with more data.

2. **Model Efficiency**:

- Agnostic models consistently outperform Aware models of the same size (lower validation loss) across all token ranges.

- Larger models (e.g., 1.63B) achieve lower loss than smaller models (e.g., 275M) in both sections.

3. **Interleaved vs. Image-Caption**:

- Interleaved section starts with higher validation loss than Image-Caption but follows a similar downward trend.

- The gap between Aware and Agnostic models of the same size is similar in both sections (about 0.2).

### Interpretation

The data suggests that:

- **Increased Token Exposure** improves model performance (lower validation loss) for all architectures.

- **Agnostic Models** achieve lower validation loss than Aware models of the same size at every token count shown.

- **Interleaved Section** shows higher validation loss throughout, but the loss falls by about the same amount (roughly 0.2 between 100B and 400B tokens) as in the Image-Caption section.

- **Model Size** directly impacts performance: larger models (1.63B) achieve lower loss than smaller ones, at the cost of greater computational expense.

### Spatial Grounding and Trend Verification

- **Legend Placement**: Bottom of the graph, clearly mapping colors/markers to model names.

- **Line Trends**:

- Aware-275M (dotted circle) slopes downward in both sections.

- Agnostic-1.63B (solid diamond) is the lowest curve in the Interleaved section and declines by about the same amount (~0.2) as the other series.

- **Color Consistency**: All Aware models use light green shades, while Agnostic models use dark green, ensuring visual distinction.

### Content Details

- **Data Points**:

- For example, Aware-932M in Image-Caption starts at ~2.6 (100B tokens) and ends at ~2.4 (400B tokens).

- Agnostic-464M in Interleaved starts at ~2.7 (100B tokens) and ends at ~2.5 (400B tokens).

- **Markers**: Circles, squares, and diamonds help distinguish model sizes within each category (Aware/Agnostic), although some sizes share a marker type in the legend above.

### Notable Patterns

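- **Parallel Curves**: All models decline by a similar amount (about 0.2) between 100B and 400B tokens, so the curves stay roughly parallel rather than approaching a common loss value; these endpoint readings alone do not establish diminishing returns.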

- **Interleaved Complexity**: Higher initial loss in Interleaved may reflect the challenge of balancing multiple tasks during training.
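The Aware/Agnostic gap and the convergence pattern can be checked directly against the approximate start (100B) and end (400B) readings listed in the Detailed Analysis. The Python sketch below assumes those values and uses hypothetical helper names (`size_gaps`, `spread`).

```python
# Approximate (loss at 100B, loss at 400B) readings from the Detailed Analysis,
# listed in size order 275M, 464M, 932M, 1.63B.
READINGS = {
    "Image-Caption": {
        "Aware": [(2.8, 2.6), (2.7, 2.5), (2.6, 2.4), (2.5, 2.3)],
        "Agnostic": [(2.6, 2.4), (2.5, 2.3), (2.4, 2.2), (2.3, 2.1)],
    },
    "Interleaved": {
        "Aware": [(3.0, 2.8), (2.9, 2.7), (2.8, 2.6), (2.7, 2.5)],
        "Agnostic": [(2.8, 2.6), (2.7, 2.5), (2.6, 2.4), (2.5, 2.3)],
    },
}


def size_gaps(panel):
    """Aware minus Agnostic loss at 400B tokens per size (positive: Agnostic lower)."""
    aware, agnostic = READINGS[panel]["Aware"], READINGS[panel]["Agnostic"]
    return [round(aw[1] - ag[1], 2) for aw, ag in zip(aware, agnostic)]


def spread(panel, index):
    """Max minus min loss across all eight series; index 0 = 100B, 1 = 400B."""
    values = [pair[index] for family in READINGS[panel].values() for pair in family]
    return round(max(values) - min(values), 2)


for panel in READINGS:
    print(panel, "gaps:", size_gaps(panel),
          "spread at 100B:", spread(panel, 0), "spread at 400B:", spread(panel, 1))
```

If the spread across series is the same at 100B and 400B tokens, the curves in these readings are shifted copies of one another rather than converging toward a common value.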

Within these readings, the larger Agnostic models (e.g., Agnostic-1.63B) reach the lowest validation loss and are the strongest choice when loss matters most, while smaller models may suffice for resource-constrained scenarios.
