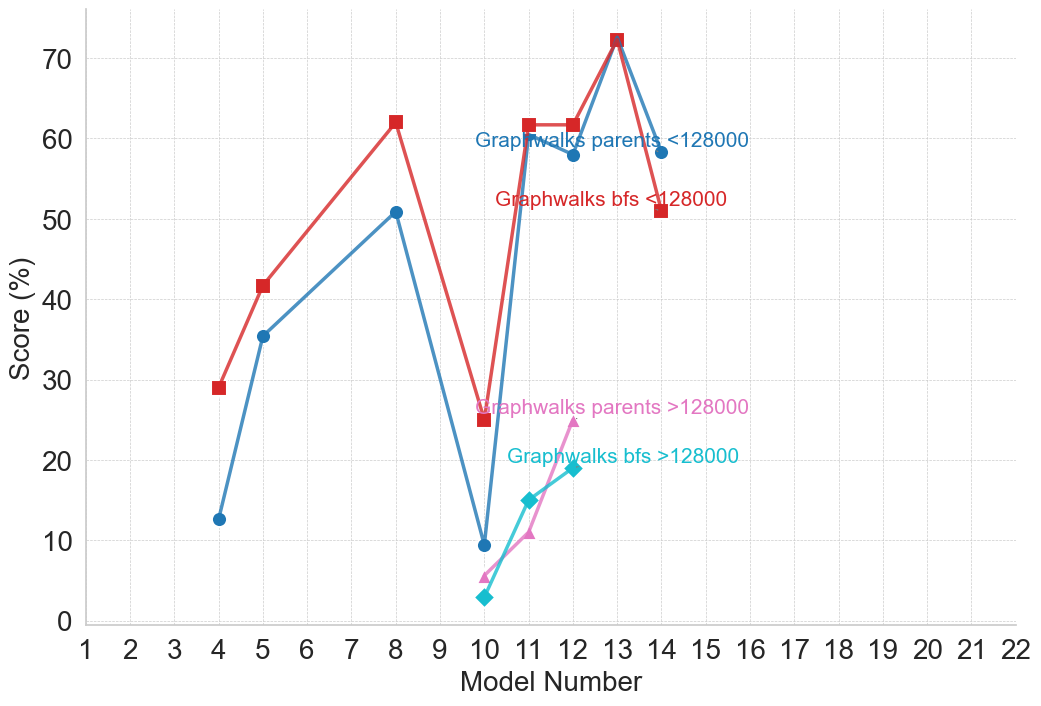

## Line Chart: Graphwalks Performance Comparison

### Overview

This line chart compares four Graphwalks series across model numbers 1 to 22: the parents variant and the breadth-first search (BFS) variant, each plotted once for a limit below 12800 and once for a limit above 12800. Performance is measured as "Score (%)" on the y-axis, plotted against "Model Number" on the x-axis.

### Components/Axes

* **X-axis:** "Model Number" ranging from 1 to 22, with tick marks at integer values.

* **Y-axis:** "Score (%)" ranging from 0 to 70, with tick marks at intervals of 10.

* **Lines:**
    * Graphwalks parents < 12800 (Blue)
    * Graphwalks bfs < 12800 (Red)
    * Graphwalks parents > 12800 (Gray)
    * Graphwalks bfs > 12800 (Teal)

* **Legend:** Located in the top-right corner, labeling each line with its corresponding algorithm and limit.

### Detailed Analysis

The trend and approximate data points for each line are as follows.

* **Graphwalks parents < 12800 (Blue):** This line starts at approximately 14% at Model Number 2, rises sharply to a peak of around 62% at Model Number 8, then declines to approximately 20% by Model Number 12 and stays roughly flat at that level for the remaining models.

* **Graphwalks bfs < 12800 (Red):** This line begins at approximately 18% at Model Number 2, increases to around 42% at Model Number 5, continues to rise to a peak of approximately 52% at Model Number 9, then drops sharply to around 18% at Model Number 11, and remains relatively flat around 18% for the remaining models.

* **Graphwalks parents > 12800 (Gray):** This line starts at approximately 28% at Model Number 4, rises to a peak of around 32% at Model Number 7, then declines to approximately 16% at Model Number 11, and remains relatively flat around 16% for the remaining models.

* **Graphwalks bfs > 12800 (Teal):** This line begins at approximately 10% at Model Number 2, increases to around 22% at Model Number 11, then declines to approximately 18% by Model Number 13 and stays roughly flat at that level for the remaining models.

Approximate values at selected Model Numbers (a dash means no value is listed for that series):

| Model Number | Graphwalks parents < 12800 (%) | Graphwalks bfs < 12800 (%) | Graphwalks parents > 12800 (%) | Graphwalks bfs > 12800 (%) |
|--------------|--------------------------------|----------------------------|--------------------------------|----------------------------|
| 2 | 14 | 18 | - | 10 |
| 4 | 28 | 30 | 28 | - |
| 5 | 35 | 42 | - | - |
| 7 | 55 | 48 | 32 | - |
| 8 | 62 | 50 | - | - |
| 9 | 58 | 52 | - | - |
| 11 | 20 | 18 | 16 | 22 |
| 12 | 20 | 18 | 16 | 18 |
| 13 | 20 | 18 | 16 | 18 |
| 22 | 20 | 18 | 16 | 18 |
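The tabulated values can be transcribed into a small data structure to read off each series' peak and the leading series at each listed Model Number. This is a minimal sketch: the names `MODELS`, `SCORES`, `peak`, and `leader` are illustrative and not part of the source, and the numbers are the approximate readings from the table, not exact chart data.

```python
# Approximate scores transcribed from the table; None marks a "-" cell.
MODELS = [2, 4, 5, 7, 8, 9, 11, 12, 13, 22]
SCORES = {
    "parents < 12800": [14, 28, 35, 55, 62, 58, 20, 20, 20, 20],
    "bfs < 12800":     [18, 30, 42, 48, 50, 52, 18, 18, 18, 18],
    "parents > 12800": [None, 28, None, 32, None, None, 16, 16, 16, 16],
    "bfs > 12800":     [10, None, None, None, None, None, 22, 18, 18, 18],
}


def peak(series):
    """Return (model_number, score) for the highest listed value of a series."""
    listed = [(m, s) for m, s in zip(MODELS, SCORES[series]) if s is not None]
    return max(listed, key=lambda pair: pair[1])


def leader(model):
    """Return the series with the highest listed score at a Model Number."""
    i = MODELS.index(model)
    listed = {name: vals[i] for name, vals in SCORES.items() if vals[i] is not None}
    return max(listed, key=listed.get)


PEAKS = {name: peak(name) for name in SCORES}
LEADERS = {model: leader(model) for model in MODELS}

if __name__ == "__main__":
    for name, (model, score) in PEAKS.items():
        print(f"{name}: peak of about {score}% at Model Number {model}")
    for model, name in LEADERS.items():
        print(f"Model Number {model}: highest listed score is {name}")
```

Because the table lists only selected Model Numbers, these peaks and leaders describe the tabulated rows rather than every point on the chart.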

### Key Observations

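* The "Graphwalks parents < 12800" series reaches the highest score on the chart (about 62% at Model Number 8) and leads the other series from Model Number 7 through 9. At Model Numbers 2 to 5, "Graphwalks bfs < 12800" is slightly ahead.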

* Both < 12800 series drop sharply after Model Number 9, and every series settles between roughly 16% and 20% from Model Number 13 onward.

* The "Graphwalks bfs > 12800" algorithm starts with the lowest score but shows a gradual increase up to Model Number 11.

* The "Graphwalks parents > 12800" series scores at or below its < 12800 counterpart at every Model Number where both are listed, with the largest gap around Model Number 7 (about 32% versus 55%).
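The comparison between the two "Graphwalks parents" series can be checked against the breakdown table in the Detailed Analysis. The sketch below is illustrative only: `PARENTS_LT`, `PARENTS_GT`, and `GAPS` are hypothetical names, and the values are the approximate table readings, restricted to Model Numbers where the table lists a value.

```python
# Approximate table readings, keyed by Model Number.
PARENTS_LT = {2: 14, 4: 28, 5: 35, 7: 55, 8: 62, 9: 58, 11: 20, 12: 20, 13: 20, 22: 20}
PARENTS_GT = {4: 28, 7: 32, 11: 16, 12: 16, 13: 16, 22: 16}

# Score gap in percentage points (< 12800 minus > 12800) where both are listed.
GAPS = {m: PARENTS_LT[m] - PARENTS_GT[m] for m in sorted(PARENTS_GT) if m in PARENTS_LT}

if __name__ == "__main__":
    for model, gap in GAPS.items():
        print(f"Model Number {model}: gap of about {gap} points")
```

On the tabulated values the two series are tied at Model Number 4, about 23 points apart at Model Number 7, and about 4 points apart from Model Number 11 onward.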

### Interpretation

The data suggests that the "Graphwalks parents" series with the < 12800 limit achieves the best peak performance, although "Graphwalks bfs < 12800" scores slightly higher on the earliest models. The sharp drop in both < 12800 series after Model Number 9 could indicate a point where the models become more complex or require different algorithmic strategies, but the chart alone does not establish the cause. Comparing the < 12800 and > 12800 series suggests that the 12800 limit is an important parameter: the > 12800 series generally score at or below their < 12800 counterparts, with the gap largest around Model Number 7 for the parents series and at most a few points once the lines flatten. The one listed exception is "Graphwalks bfs > 12800" at Model Number 11 (about 22% versus 18%). The low initial score of "Graphwalks bfs > 12800" suggests it may need more models to reach a stable performance level. The flat lines from Model Number 13 onward suggest that later models are unlikely to yield significant improvements with these algorithms and parameters.