# Technical Document Extraction: DeepSeekMoE Architecture Evolution

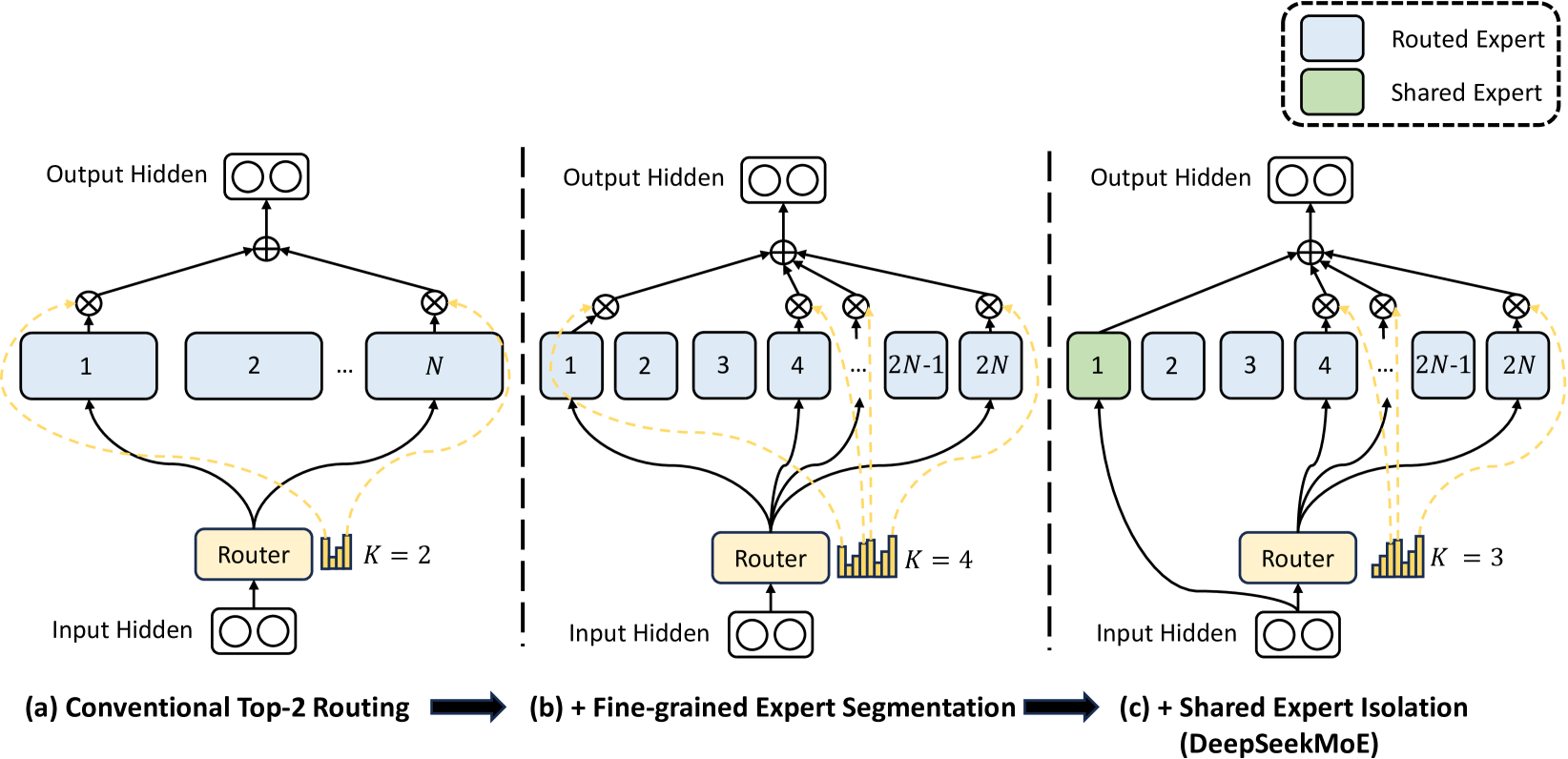

This document provides a detailed technical breakdown of the provided image, which illustrates the architectural evolution from conventional Mixture-of-Experts (MoE) to the DeepSeekMoE structure.

## 1. Legend and Global Components

**Location:** Top-right corner of the figure.

* **Light Blue Box:** Routed Expert

* **Light Green Box:** Shared Expert

* **Yellow Box:** Router

* **Circle with Plus ($\oplus$):** Summation/Aggregation node

* **Circle with Cross ($\otimes$):** Gating/Multiplication node (weighting)

* **Dashed Yellow Lines:** Routing weights/signals from the Router to the gating nodes.

---

## 2. Component Analysis by Stage

The image is divided into three vertical segments (a, b, and c) separated by dashed lines, showing a progression of complexity.

### (a) Conventional Top-2 Routing

**Description:** This represents the baseline MoE architecture.

* **Input:** "Input Hidden" layer represented by two neurons.

* **Routing Mechanism:** The input flows into a **Router**. A histogram indicates $K=2$, meaning the top 2 experts are selected.

* **Expert Layer:** Contains $N$ large "Routed Experts" (labeled 1, 2, ... $N$).

* **Flow:**

    1. The Router scores the $N$ experts and selects the two highest-scoring ones (in this diagram, Expert 1 and Expert $N$).

    2. The input is processed only by these selected experts.

    3. The output of each selected expert is multiplied by its gating weight (indicated by the $\otimes$ node).

    4. The weighted outputs are summed ($\oplus$) to produce the **Output Hidden** layer.
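The stage (a) data flow can be sketched in a few lines of plain Python. This is a minimal illustration, not DeepSeekMoE's implementation: `top_k_moe` and `softmax` are hypothetical names, experts are toy functions on Python lists, and the gates are assumed to be softmax scores over all experts, with unselected experts receiving a gate of zero (one common formulation; the figure itself does not specify how gates are computed).

```python
import math
from typing import Callable, List, Sequence

Vector = List[float]
Expert = Callable[[Vector], Vector]  # toy expert: hidden vector in, same-sized vector out


def softmax(logits: Sequence[float]) -> List[float]:
    """Numerically stable softmax over the Router's logits."""
    peak = max(logits)
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def top_k_moe(u: Vector, router_logits: Sequence[float],
              experts: Sequence[Expert], k: int) -> Vector:
    """Conventional top-K MoE layer; stage (a) corresponds to k=2.

    Assumption: gates are softmax scores over all experts, and experts outside
    the top k get a gate of zero (the kept gates are not renormalized).
    """
    scores = softmax(router_logits)
    # Router: indices of the k highest-scoring experts.
    selected = sorted(range(len(experts)), key=lambda i: scores[i], reverse=True)[:k]
    out = [0.0] * len(u)
    for i in selected:
        y = experts[i](u)                    # only selected experts are evaluated
        for d in range(len(u)):
            out[d] += scores[i] * y[d]       # gate (x), then sum (+)
    return out


# Usage: four toy experts, expert i scales its input by i + 1.
experts = [lambda v, s=s: [s * x for x in v] for s in (1.0, 2.0, 3.0, 4.0)]
out = top_k_moe([1.0, -1.0], [2.0, 0.0, 0.0, 2.0], experts, k=2)
print(out)  # experts 0 and 3 tie for the top score and are the two selected
```

Only the selected experts are ever called, which is the conditional-computation property that lets an MoE layer hold many experts while running only $K$ of them per token.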

### (b) + Fine-grained Expert Segmentation

**Description:** This stage introduces the concept of splitting experts into smaller units.

* **Input:** "Input Hidden" layer.

* **Routing Mechanism:** The Router now selects $K=4$ experts.

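* **Expert Layer:** The total number of experts doubles to $2N$ (labeled 1, 2, 3, 4, ..., $2N-1$, $2N$). These experts are drawn smaller than those in stage (a), indicating that each original expert is split into two finer-grained experts: the same total parameter count is divided among more numerous, smaller experts, and doubling $K$ keeps the activated expert computation per token unchanged.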

* **Flow:**

    1. The Router selects 4 experts (visually: 1, 4, $2N-1$, and $2N$).

    2. Each selected expert's output is gated and summed.

* **Trend:** Increasing both $K$ and the total number of experts allows far more combinations of activated experts, enabling more granular specialization.
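The parameter and flexibility argument behind stage (b) can be checked with simple arithmetic. The sketch assumes each expert is a plain two-matrix FFN whose size scales linearly with its intermediate width `d_ff`, and uses hypothetical sizes (`d_model`, `d_ff`, and $N = 16$); `segment` is an illustrative helper that splits every expert into `m` pieces and activates `m` times as many.

```python
from math import comb


def ffn_params(d_model: int, d_ff: int) -> int:
    """Weight count of a two-matrix FFN expert (up- and down-projection, biases ignored)."""
    return 2 * d_model * d_ff


def segment(n_experts: int, k: int, d_ff: int, m: int) -> tuple[int, int, int]:
    """Hypothetical helper: split each expert into m experts of width d_ff // m, activate m * k."""
    return n_experts * m, k * m, d_ff // m


d_model, d_ff = 1024, 4096                 # hypothetical sizes for illustration
n, k = 16, 2                               # stage (a): N experts, K = 2
n2, k2, d_ff2 = segment(n, k, d_ff, m=2)   # stage (b): 2N experts, K = 4

total_a, total_b = n * ffn_params(d_model, d_ff), n2 * ffn_params(d_model, d_ff2)
active_a, active_b = k * ffn_params(d_model, d_ff), k2 * ffn_params(d_model, d_ff2)
print(total_a == total_b, active_a == active_b)  # True True

# Number of distinct expert subsets the Router can activate for one token.
print(comb(n, k), comb(n2, k2))  # 120 35960
```

Total and activated parameters are unchanged by the split, yet the number of distinct expert subsets available per token grows from 120 to 35,960; splitting further (for example 64 experts with 8 active) gives `comb(64, 8)`, over four billion combinations.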

### (c) + Shared Expert Isolation (DeepSeekMoE)

**Description:** This is the final architecture, which isolates specific experts to be always active.

* **Input:** "Input Hidden" layer.

* **Hybrid Routing Mechanism:**

    * **Shared Expert (Green):** Expert 1 is now a "Shared Expert." It receives the input directly, bypassing the Router's selection logic. It is always active.

    * **Routed Experts (Blue):** The remaining experts (2 through $2N$) are routed. The Router selects $K=3$ experts from this pool.

* **Expert Layer:** Contains 1 Shared Expert (Green) and $2N-1$ Routed Experts (Blue).

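* **Flow:**

    1. The input is sent to both the Shared Expert (Expert 1) and the Router.

    2. The Router selects 3 experts (visually: 4, $2N-1$, and $2N$).

    3. The Shared Expert's output is added directly, without a Router gate, while each of the 3 selected Routed Experts' outputs is weighted by its gate ($\otimes$); all of them are summed ($\oplus$) into the **Output Hidden** layer.

* **Key Idea:** The always-active Shared Expert is meant to capture common knowledge needed by every token, which reduces redundancy among the Routed Experts and leaves them free to specialize.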
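Stage (c) can be sketched by adding an always-active path to a top-K layer. This is a hedged illustration under stated assumptions: `deepseek_moe_layer` is a hypothetical name, the Router produces logits only for the routed pool, the Shared Expert's output is added without a gate (it bypasses the Router, as described above), and routed gates are softmax scores with no renormalization. Any residual connection around the layer is omitted.

```python
import math
from typing import Callable, List, Sequence

Vector = List[float]
Expert = Callable[[Vector], Vector]  # toy expert: hidden vector in, same-sized vector out


def softmax(logits: Sequence[float]) -> List[float]:
    """Numerically stable softmax over the Router's logits."""
    peak = max(logits)
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def deepseek_moe_layer(u: Vector, shared: Sequence[Expert], routed: Sequence[Expert],
                       router_logits: Sequence[float], k: int) -> Vector:
    """Shared experts always run; the Router picks the top k of the routed pool only.

    Assumptions: router_logits has one entry per *routed* expert, shared outputs
    are added ungated, and routed gates are softmax scores without renormalization.
    """
    out = [0.0] * len(u)
    for expert in shared:                    # bypasses the Router entirely
        for d, y in enumerate(expert(u)):
            out[d] += y
    scores = softmax(router_logits)
    selected = sorted(range(len(routed)), key=lambda i: scores[i], reverse=True)[:k]
    for i in selected:
        for d, y in enumerate(routed[i](u)):
            out[d] += scores[i] * y          # gated routed contribution
    return out


# Usage: one identity Shared Expert plus five toy routed experts, with K = 3.
shared = [lambda v: list(v)]
routed = [lambda v, s=s: [s * x for x in v] for s in (1.0, 2.0, 3.0, 4.0, 5.0)]
out = deepseek_moe_layer([1.0, 2.0], shared, routed, [0.0, 3.0, 0.0, 3.0, 3.0], k=3)
print(out)  # routed experts 1, 3 and 4 are selected; the shared expert always contributes
```

Keeping the shared path outside the Router means its contribution never depends on routing decisions, which is what lets it hold knowledge every token needs.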

---

## 3. Textual Transcriptions

| Region | Original Text |
| :--- | :--- |
| **Header/Legend** | Routed Expert, Shared Expert |
| **Diagram (a)** | Conventional Top-2 Routing, Router, $K=2$, Input Hidden, Output Hidden, 1, 2, $N$ |
| **Diagram (b)** | + Fine-grained Expert Segmentation, Router, $K=4$, Input Hidden, Output Hidden, 1, 2, 3, 4, $2N-1$, $2N$ |
| **Diagram (c)** | + Shared Expert Isolation (DeepSeekMoE), Router, $K=3$, Input Hidden, Output Hidden, 1, 2, 3, 4, $2N-1$, $2N$ |

---

## 4. Summary of Architectural Trends

1. **Granularity:** Moving from (a) to (b), the experts are segmented into smaller units ($N \rightarrow 2N$), and the number of activated experts increases ($K=2 \rightarrow K=4$).

2. **Specialization vs. Commonality:** Moving from (b) to (c), the model designates specific experts as "Shared," ensuring certain parameters are always utilized for every token, while the remaining "Routed" experts provide conditional computation.
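The two trends can be cross-checked with simple bookkeeping. The sketch assumes that one stage-(a) expert is exactly twice the size of one stage-(b) or stage-(c) expert (the figure draws them smaller but does not state the ratio) and picks a hypothetical $N = 8$, since the figure leaves $N$ symbolic; the `Stage` class is illustrative only.

```python
from dataclasses import dataclass


@dataclass
class Stage:
    name: str
    expert_size: int   # size of one expert, in fine-grained units (assumed ratio)
    num_experts: int   # total experts for the hypothetical N below
    shared: int        # always-active experts
    routed_k: int      # experts chosen by the Router (K in the figure)

    def active_units(self) -> int:
        return (self.shared + self.routed_k) * self.expert_size

    def total_units(self) -> int:
        return self.num_experts * self.expert_size


N = 8  # hypothetical; the figure leaves N symbolic
stages = [
    Stage("(a) Conventional Top-2", expert_size=2, num_experts=N, shared=0, routed_k=2),
    Stage("(b) + Fine-grained", expert_size=1, num_experts=2 * N, shared=0, routed_k=4),
    Stage("(c) + Shared (DeepSeekMoE)", expert_size=1, num_experts=2 * N, shared=1, routed_k=3),
]
for s in stages:
    print(f"{s.name}: active={s.active_units()} total={s.total_units()}")
# each line reports active=4 total=16
```

Under that assumption every stage activates 4 units of expert computation per token out of 16 in total, suggesting the $K$ values in the figure (2, 4, and 1 shared + 3 routed) are chosen so that each refinement changes how computation is allocated rather than how much of it is spent.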