## Diagram: Federated Learning System with Hierarchical Models

### Overview

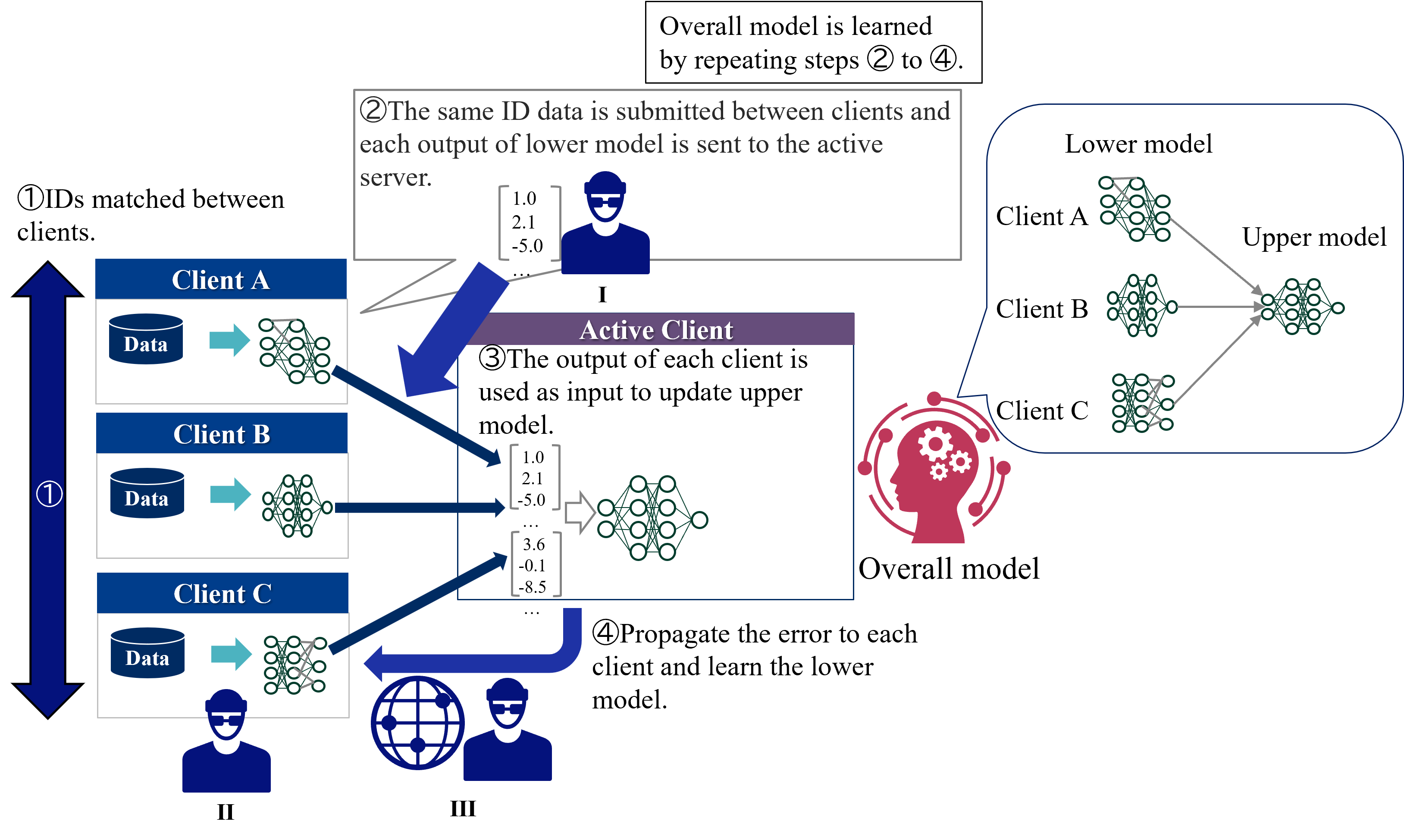

This image is a technical diagram illustrating a federated learning process involving multiple clients and a central server. The system uses a two-tier model architecture (lower and upper models) and operates through a four-step iterative cycle. The diagram is divided into three main spatial regions: a left column showing client data processing, a central area detailing the active client/server interaction, and a right section depicting the overall model hierarchy.

### Components

**Textual Elements & Labels:**

* **Top Header Box:** "Overall model is learned by repeating steps ② to ④."

* **Step ① Label (Left):** "①IDs matched between clients."

* **Step ② Label (Top-Center):** "②The same ID data is submitted between clients and each output of lower model is sent to the active server."

* **Step ③ Label (Center):** "③The output of each client is used as input to update upper model."

* **Step ④ Label (Bottom-Center):** "④Propagate the error to each client and learn the lower model."

* **Client Labels (Left Column):** "Client A", "Client B", "Client C".

* **Data Icons (Left Column):** Each client box contains a cylinder icon labeled "Data".

* **Central Entity Label:** "Active Client" (in a purple header bar).

* **Model Hierarchy Labels (Right):** "Lower model", "Upper model", "Overall model".

* **Roman Numeral Labels:** "I" (below the active server icon), "II" (below the client icons on the left), "III" (below the globe/user icon at the bottom).

**Visual Components & Flow:**

* **Left Column (Region II):** Three client blocks (A, B, C). Each shows a "Data" cylinder feeding into a neural network icon (representing the "lower model").

* **Central Region (Active Client/I):**

    * An icon of a person at a computer (the active server).

    * A box showing example output vectors from clients: `[1.0, 2.1, -5.0, ...]` and `[3.6, -0.1, -8.5, ...]`.

    * A neural network icon representing the "upper model" being updated.

    * A globe icon with a user (labeled III) connected via a blue arrow, indicating error propagation back to clients.

* **Right Region:** A schematic showing the model architecture. Three "Lower model" neural networks (one each for Client A, B, C) feed their outputs into a single "Upper model" neural network. This combined system is labeled "Overall model" and is accompanied by a red icon of a head with gears.

* **Flow Arrows:**

    1. A large, double-headed blue arrow on the far left labeled "①" connects the three clients, indicating ID matching.

    2. Blue arrows from each client's lower model point to the central "Active Client" server, corresponding to step ②.

    3. A blue arrow from the "Active Client" server points to the upper model network, corresponding to step ③.

    4. A large, curved blue arrow from the "Active Client" region points back to the clients via the globe icon, corresponding to step ④.

### Detailed Analysis

**Process Flow (Step-by-Step):**

1. **Step ① - ID Matching:** Client A, Client B, and Client C align their datasets using matched identifiers. This is a prerequisite for the federated process.

2. **Step ② - Forward Pass to Server:** Each client processes its local data through its own "lower model" neural network. The output vectors (exemplified by `[1.0, 2.1, -5.0, ...]`) are sent to a central "Active Client" server.

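3. **Step ③ - Upper Model Update:** The active client collects the lower-model outputs from all clients for the same matched IDs. These outputs are used together as the input to train or update the single "upper model" neural network. The diagram does not specify how they are combined; concatenation is one common choice.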

4. **Step ④ - Error Propagation & Lower Model Update:** The error or gradient signal from the updated upper model is propagated back through the network (symbolized by the globe) to each individual client. Each client then uses this error to update its own local "lower model."
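The cycle of steps ② to ④ can be sketched in a few lines of NumPy. This is a minimal illustration under stated assumptions, not the system shown in the diagram: the lower and upper models are single linear layers standing in for neural networks, the lower-model outputs are concatenated, the labels `y` are assumed to be held by the active client, and all names (`W_lower`, `W_upper`, `feature_dims`, `lr`) are hypothetical.

```python
import numpy as np

rng = np.random.default_rng(0)

# Assumed setup: three clients hold different features for the same 8 matched IDs.
n_samples, emb_dim = 8, 2
feature_dims = {"A": 3, "B": 4, "C": 2}
X = {name: rng.normal(size=(n_samples, d)) for name, d in feature_dims.items()}
y = rng.normal(size=(n_samples, 1))  # labels, assumed to live at the active client

# Lower models: one linear layer per client (stand-in for a neural network).
W_lower = {name: rng.normal(scale=0.5, size=(d, emb_dim))
           for name, d in feature_dims.items()}
# Upper model: one linear layer over the concatenated lower-model outputs.
W_upper = rng.normal(scale=0.5, size=(emb_dim * len(feature_dims), 1))

lr = 0.02
losses = []
for step in range(300):
    # Step 2: each client runs its lower model and sends only the output.
    H = {name: X[name] @ W_lower[name] for name in X}
    H_cat = np.concatenate([H[name] for name in X], axis=1)

    # Step 3: the active client feeds the outputs to the upper model and updates it.
    pred = H_cat @ W_upper
    err = pred - y
    losses.append(float(np.mean(err ** 2)))
    grad_pred = 2.0 * err / n_samples
    grad_H_cat = grad_pred @ W_upper.T  # error w.r.t. each client's output
    W_upper -= lr * (H_cat.T @ grad_pred)

    # Step 4: each client receives its slice of the error and updates its lower model.
    offset = 0
    for name in X:
        grad_H = grad_H_cat[:, offset:offset + emb_dim]
        offset += emb_dim
        W_lower[name] -= lr * (X[name].T @ grad_H)
```

In this sketch the only values that cross a client boundary are `H[name]` (step ②) and `grad_H` (step ④); the feature matrices `X[name]` are never read outside the client's own update.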

**Model Architecture:**

* **Lower Models:** Client-specific neural networks. There is one lower model per client (A, B, C).

* **Upper Model:** A single, central neural network that learns from the combined outputs of all lower models.

* **Overall Model:** The complete system, comprising the distributed lower models and the central upper model, learned iteratively.
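One way to write this composition down, using notation that is not in the diagram: let $f_{\phi_k}$ be the lower model of client $k \in \{A, B, C\}$ with parameters $\phi_k$, let $g_\theta$ be the upper model with parameters $\theta$, and let $\mathcal{L}$ be a loss chosen for the task (all symbols here are assumptions made for illustration).

$$
h_k = f_{\phi_k}(x_k), \qquad \hat{y} = g_\theta(h_A, h_B, h_C)
$$

$$
\frac{\partial \mathcal{L}}{\partial \phi_k} = \frac{\partial \mathcal{L}}{\partial h_k} \, \frac{\partial h_k}{\partial \phi_k}
$$

Under this reading, the first line is the overall model's forward pass (steps ② and ③), and the factor $\partial \mathcal{L} / \partial h_k$ in the second line is the error signal the active client sends back to client $k$ in step ④. The remaining factor depends only on client $k$'s own data and lower model, so it can be computed locally.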

### Key Observations

* **Hierarchical Separation:** The architecture explicitly separates client-specific feature extraction (lower models) from a centralized aggregation and decision model (upper model).

* **Iterative Learning:** The process is cyclical, with the overall model improving through repeated iterations of steps ② through ④.

* **Active Client Role:** The diagram designates one entity as the "Active Client," which appears to function as the central server coordinating the upper model update and error propagation.

* **Data Privacy Implication:** As depicted, raw data stays on each client; only lower-model outputs (step ②) and error signals (step ④) cross client boundaries, which is a core principle of federated learning.

* **Visual Metaphors:** The "head with gears" icon for the "Overall model" suggests centralized intelligence, while the globe icon for error propagation implies a distributed network.

### Interpretation

This diagram depicts a **federated learning framework with a hierarchical model structure**. The core innovation or focus is the separation of the learning task into two tiers:

1. **Distributed Representation Learning (Lower Models):** Each client trains a local model that turns its private data into an output vector (a learned representation). Only this vector is shared, so the raw data stays local.

2. **Centralized Aggregation & Inference (Upper Model):** The server learns a global model that combines the representations from all clients. This upper model likely performs the final task (e.g., classification, regression) and provides a unified learning signal.

The process keeps sensitive data on each client while still allowing a joint model to be trained across them. The "ID matching" step (①) is critical: it implies that the data across clients must be aligned for the same entities (e.g., the same users, products, or time periods), so that the lower-model outputs combined in step ③ all describe the same sample. This arrangement is commonly called vertical federated learning, in contrast to the horizontal setting (e.g., mobile keyboard prediction), where clients hold different samples and no ID matching is needed. The system's power comes from its iterative nature: the error signal from the upper model continuously refines the lower models. This approach is valuable in scenarios like medical research across hospitals or fraud detection across financial institutions, where data about the same individuals cannot be centralized due to privacy, regulation, or volume.
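The ID matching in step ① can be illustrated with a plain set intersection. This is a simplified sketch: the record layout, the IDs, and the helper names `match_ids` and `align` are hypothetical, and a plain intersection exposes each client's ID list to the others, which real deployments typically avoid with a privacy-preserving technique such as private set intersection.

```python
# Hypothetical per-client records, keyed by a shared entity ID.
client_records = {
    "A": {"u1": [0.2, 1.5], "u2": [0.9, 0.3], "u4": [1.1, 0.7]},
    "B": {"u2": [5.0], "u3": [2.0], "u4": [7.5]},
    "C": {"u4": [0.0, 1.0], "u2": [1.0, 0.0], "u5": [1.0, 1.0]},
}


def match_ids(records):
    """Return the IDs present at every client, in one agreed (sorted) order."""
    id_sets = [set(rows) for rows in records.values()]
    return sorted(set.intersection(*id_sets))


def align(records, shared_ids):
    """Keep only the shared IDs at each client, in the same row order everywhere."""
    return {name: [rows[i] for i in shared_ids] for name, rows in records.items()}


shared_ids = match_ids(client_records)
aligned = align(client_records, shared_ids)
```

After alignment, row `i` at every client refers to the same entity, which is what allows the lower-model outputs to be combined sample by sample in step ③.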