
## Diagram: Federated Learning Process

### Overview

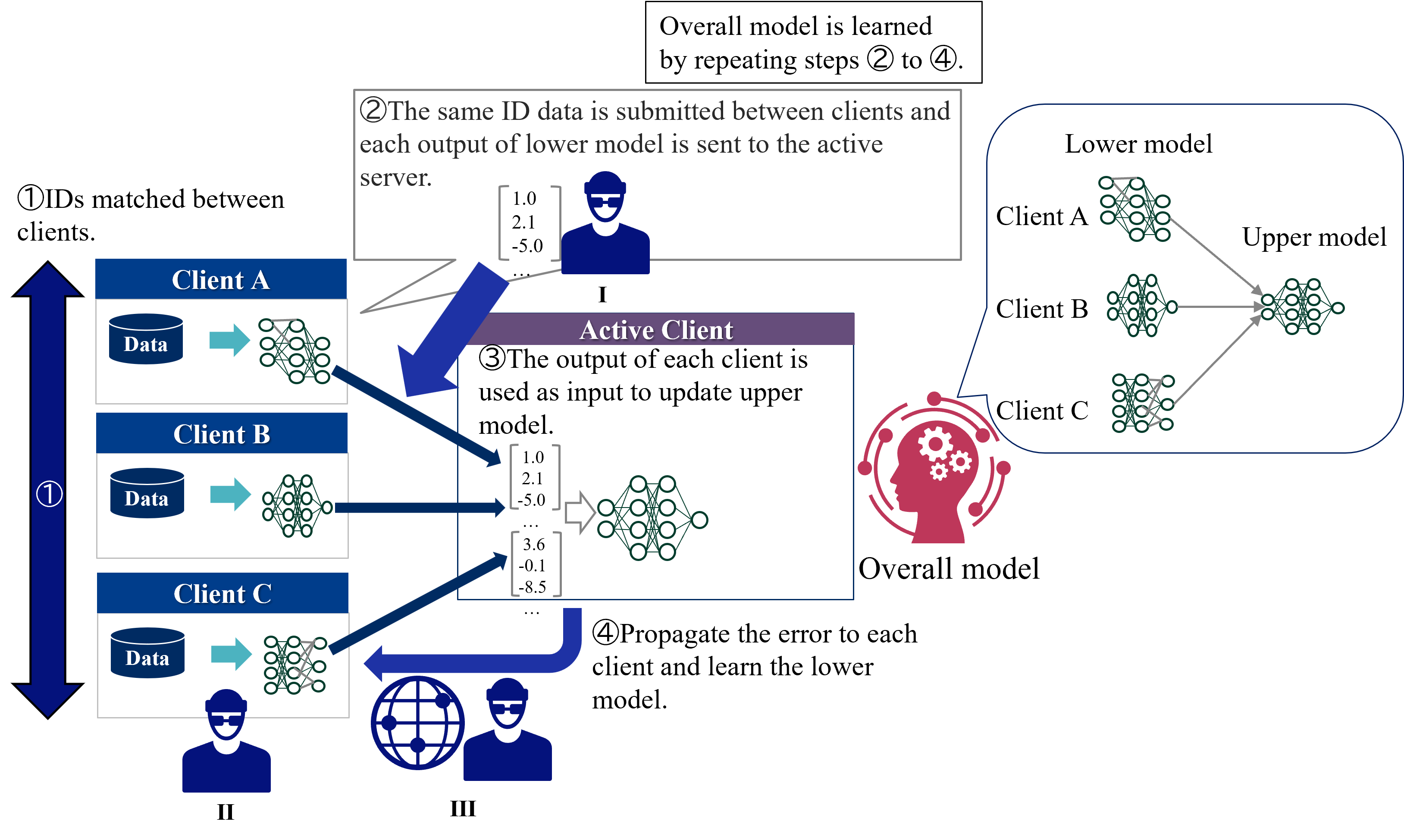

This diagram illustrates a federated learning process involving multiple clients (A, B, and C) and an active server. The clients match IDs, pass lower-model outputs onward, and receive propagated errors, so that an overall model is learned without sharing raw data directly. The diagram is segmented into three main sections: Client Side (left), Server/Aggregation (center), and Model Architecture (right).

### Components

The diagram features the following components:

* **Clients:** Client A, Client B, Client C (represented as dark blue rectangles)

* **Active Client:** Represented by a figure with a globe (III)

* **Active Server:** Represented by a rectangle labeled "I"

* **Overall Model:** A larger interconnected network of nodes.

* **Lower Model:** Smaller interconnected networks of nodes, one for each client.

* **Upper Model:** A network of nodes that aggregates the lower models.

* **Data:** Represented as a rectangle within each client.

* **Arrows:** Indicate the flow of information and data.

* **Numbered Steps:** 1, 2, 3, 4, describing the process.

### Detailed Analysis

The diagram outlines a four-step process:

**Step 1: IDs Matched Between Clients.**

* A large purple arrow indicates IDs are matched between clients.
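
Step 1 can be sketched as a plain set intersection over record IDs. This is only an illustration: the diagram does not say how IDs are matched, and the client tables and the `match_ids` helper below are hypothetical. A plain intersection also reveals the shared IDs to whoever computes it, so a privacy-preserving matching protocol may be used in practice instead.

```python
# Hypothetical client tables: record ID -> local feature values.
client_a = {"u1": [0.2, 1.5], "u2": [0.9, 0.3], "u4": [1.1, 0.7]}
client_b = {"u2": [3.0], "u3": [2.2], "u4": [0.5]}
client_c = {"u1": [7.0], "u2": [4.0], "u4": [6.0], "u5": [1.0]}


def match_ids(*clients):
    """Return the sorted IDs that appear in every client's table."""
    common = set(clients[0])
    for table in clients[1:]:
        common &= set(table)
    return sorted(common)


matched = match_ids(client_a, client_b, client_c)

# Each client keeps only its own rows, ordered identically by matched ID.
aligned_a = [client_a[i] for i in matched]
aligned_b = [client_b[i] for i in matched]
aligned_c = [client_c[i] for i in matched]
```

After alignment, row `k` in every client's table refers to the same ID, which is what lets the later steps combine per-client outputs record by record.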

**Step 2: Data Submission and Lower Model Output.**

* Data for the same IDs is submitted across clients, and the output of each lower model is sent to the active server (I).

* The active server (I) displays a data block with the following values:

  * 1.0

  * 2.1

  * -5.0
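
A minimal sketch of Step 2, assuming each lower model is a simple linear map that produces one scalar per record. The diagram does not specify the model type, and the feature values, weights, and the `lower_forward` helper are hypothetical.

```python
def lower_forward(weights, features):
    """Linear lower model: one scalar output per record (dot product)."""
    return [sum(w * x for w, x in zip(weights, row)) for row in features]


# Hypothetical local data for two matched IDs; each client holds different features.
features = {
    "A": [[1.0, 2.0], [0.5, -1.0]],
    "B": [[3.0], [1.0]],
    "C": [[-2.0, 0.0, 1.0], [4.0, 1.0, 0.0]],
}
# Hypothetical lower-model weights, private to each client.
weights = {"A": [0.5, 0.25], "B": [0.5], "C": [1.0, -1.0, 0.5]}

# What the active server receives: lower-model outputs only, not raw features.
received = {name: lower_forward(weights[name], features[name]) for name in features}
```

Only `received` crosses the client boundary in this sketch; `features` and `weights` stay local.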

**Step 3: Input to Update Upper Model.**

* The output of each client is used as input to update the upper model.

* The active client (III) displays a data block with the following values:

  * 3.6

  * -0.1

  * -8.5
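
Step 3 can be illustrated with a small upper model that takes one value per client as input. The sketch below assumes a linear upper model trained with mean squared error and plain gradient descent; none of these choices, nor the labels and input values, come from the diagram.

```python
def upper_forward(v, bias, h):
    """Linear upper model over the per-client outputs h for one record."""
    return sum(vk * hk for vk, hk in zip(v, h)) + bias


def update_upper(v, bias, batch, labels, lr=0.1):
    """One gradient-descent step on mean squared error.

    Returns the new weights, the new bias, and the loss measured before the step.
    """
    n = len(batch)
    grad_v = [0.0] * len(v)
    grad_b = 0.0
    loss = 0.0
    for h, y in zip(batch, labels):
        err = upper_forward(v, bias, h) - y
        loss += err * err / n
        for k, hk in enumerate(h):
            grad_v[k] += 2.0 * err * hk / n
        grad_b += 2.0 * err / n
    new_v = [vk - lr * g for vk, g in zip(v, grad_v)]
    return new_v, bias - lr * grad_b, loss


# Hypothetical lower-model outputs from clients A, B, C for two matched records.
batch = [[1.0, 1.5, -1.5], [0.0, 0.5, 3.0]]
labels = [1.0, 0.0]  # assumed to be held by whoever trains the upper model

v, bias = [0.0, 0.0, 0.0], 0.0
v, bias, loss_before = update_upper(v, bias, batch, labels)
_, _, loss_after = update_upper(v, bias, batch, labels)
```

Comparing `loss_before` with `loss_after` shows the effect of a single update on the same batch.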

**Step 4: Error Propagation and Lower Model Learning.**

* The error is propagated to each client and used to learn the lower model.
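
Step 4 amounts to the chain rule split across two parties. The sketch below assumes a linear upper model and linear lower models with squared error, so the error sent to client k is the gradient of the loss with respect to that client's output. All values and helper names are hypothetical.

```python
def error_for_client(err, v_k):
    """Gradient of squared error w.r.t. one client's output: dL/dh_k = 2 * err * v_k."""
    return 2.0 * err * v_k


def client_update(weights, features, grad_h, lr=0.1):
    """Chain rule on a linear lower model h = w . x, so dL/dw = dL/dh * x."""
    return [w - lr * grad_h * x for w, x in zip(weights, features)]


# Server side (hypothetical values): upper weights and the error for one record.
v = [0.5, -1.0]  # upper-model weight applied to the outputs of clients A and B
err = 0.4        # prediction minus label

# Only these scalars travel back to the clients.
grad_to_a = error_for_client(err, v[0])
grad_to_b = error_for_client(err, v[1])

# Client A side: its raw features and lower-model weights stay local.
w_a = client_update([1.0, 2.0], [3.0, -1.0], grad_to_a)
```

Each client needs only its own propagated error to update its lower model; it never sees the other clients' features or weights.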

**Model Architecture (Right Side):**

* **Client A, B, C:** Each client has a "Lower Model" (a network of nodes) and is connected to the "Upper Model".

* **Upper Model:** The upper model appears to aggregate the outputs from the lower models of each client.

* **Overall Model:** The overall model is learned by repeating steps 2 to 4.
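
Repeating steps 2 to 4 can be sketched as a training loop. This is a toy illustration under stated assumptions: linear lower models, an upper model with one weight per client, squared error, per-record gradient steps, and made-up data, labels, and learning rate. The diagram specifies none of these.

```python
def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


# Hypothetical aligned data: one row per matched ID, different features per client.
data = {
    "A": [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0], [2.0, 1.0]],
    "B": [[1.0], [2.0], [0.0], [1.0]],
    "C": [[0.5], [0.5], [1.0], [0.0]],
}
labels = [1.0, 2.0, 1.5, 2.0]  # assumed to sit with the party training the upper model

lower = {name: [0.1] * len(rows[0]) for name, rows in data.items()}  # per-client weights
upper = {name: 0.1 for name in data}                                 # one weight per client
lr = 0.02


def run_round():
    """One pass of steps 2-4 over all matched records; returns mean squared error."""
    total = 0.0
    for i, y in enumerate(labels):
        # Step 2: each client computes its lower-model output and sends it on.
        h = {name: dot(lower[name], data[name][i]) for name in data}
        # Step 3: the upper model combines the outputs and measures the error.
        err = sum(upper[name] * h[name] for name in data) - y
        total += err * err
        # Step 4: per-client errors are computed before the upper weights change.
        grad_h = {name: 2.0 * err * upper[name] for name in data}
        for name in data:
            upper[name] -= lr * 2.0 * err * h[name]
            lower[name] = [w - lr * grad_h[name] * x
                           for w, x in zip(lower[name], data[name][i])]
    return total / len(labels)


losses = [run_round() for _ in range(300)]
```

In this sketch the "overall model" is the composition of the three lower models with the upper model; no single party holds all of its weights.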

### Key Observations

* The diagram emphasizes the decentralized nature of the learning process, with clients retaining their data locally.

* The active server acts as a central collection point: it receives the lower-model outputs from each client (Step 2).

* The process is iterative: steps 2-4 are repeated to refine the overall model.

* The data blocks within the active server and active client contain numerical values. Given Steps 2 and 3, these are most plausibly lower-model outputs or values derived from them, though the diagram does not label them.

### Interpretation

This diagram depicts a federated learning system. Federated learning is a machine learning technique that trains an algorithm across multiple decentralized edge devices or servers holding local data samples, without exchanging them. This approach contrasts with traditional centralized machine learning where all the data is uploaded to one server.

The diagram highlights the key steps involved:

1. **ID Matching:** The clients identify the IDs they have in common.

2. **Lower Model Output:** Each client runs its lower model on its local data for the matched IDs and sends the output to the active server.

3. **Upper Model Update:** The client outputs are used as input to update the upper model.

4. **Error Propagation:** The error is propagated back to each client and used to train its lower model.

The numerical values within the server and active client blocks are not labeled. In the context of Steps 2 and 3, they most plausibly represent lower-model outputs or values derived from them rather than full sets of model weights. The iterative nature of the process (repeating steps 2-4) suggests that the model is refined over multiple rounds of forward computation and error propagation.

The diagram does not spell out the role of the "active client" (III). One possibility is that it marks the participant that drives training, for example the party holding the labels, as the "active party" does in vertical federated learning. Another is that it reflects a mechanism for selecting a subset of clients in each round. The globe icon associated with the active client might indicate that clients are geographically distributed.

The diagram effectively communicates the core principles of federated learning, emphasizing data privacy, decentralized training, and iterative model refinement.