TECHNICAL ASSET FINGERPRINT

ae5f2dda15b572d34588d98b

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

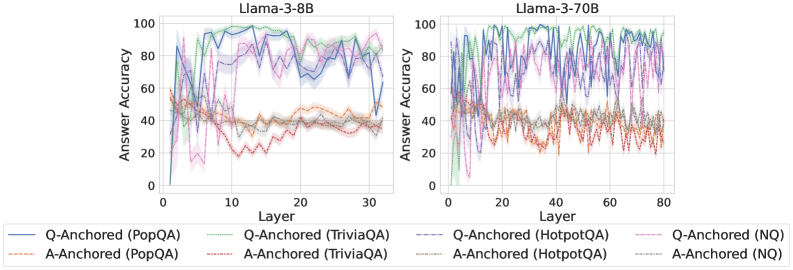

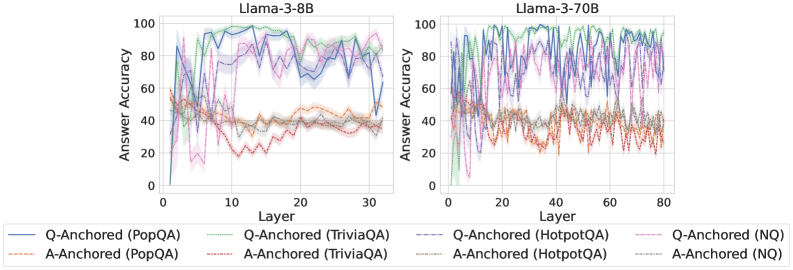

## Line Charts: Llama-3-8B and Llama-3-70B Answer Accuracy vs. Layer

### Overview

The image presents two line charts comparing the answer accuracy of Llama-3-8B and Llama-3-70B models across different layers for various question-answering datasets. The charts depict the performance of both "Q-Anchored" and "A-Anchored" approaches on PopQA, TriviaQA, HotpotQA, and NQ datasets. The x-axis represents the layer number, while the y-axis represents the answer accuracy.

### Components/Axes

**Left Chart (Llama-3-8B):**

* **Title:** Llama-3-8B

* **X-axis:** Layer, with markers at 0, 10, 20, and 30.

* **Y-axis:** Answer Accuracy, ranging from 0 to 100, with markers at 0, 20, 40, 60, 80, and 100.

**Right Chart (Llama-3-70B):**

* **Title:** Llama-3-70B

* **X-axis:** Layer, with markers at 0, 20, 40, 60, and 80.

* **Y-axis:** Answer Accuracy, ranging from 0 to 100, with markers at 0, 20, 40, 60, 80, and 100.

**Legend (Located at the bottom of both charts):**

* **Q-Anchored (PopQA):** Solid Blue Line

* **A-Anchored (PopQA):** Dashed Brown Line

* **Q-Anchored (TriviaQA):** Dotted Green Line

* **A-Anchored (TriviaQA):** Dash-Dot Green Line

* **Q-Anchored (HotpotQA):** Dash-Dot Purple Line

* **A-Anchored (HotpotQA):** Dotted Red Line

* **Q-Anchored (NQ):** Dashed Purple Line

* **A-Anchored (NQ):** Dash-Dot Brown Line
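The eight legend entries are the cross product of two anchoring methods and four datasets. A minimal sketch of that enumeration follows; the names `ANCHORS`, `DATASETS`, and `series_labels` are illustrative and are not taken from whatever code produced the figure.

```python
from itertools import product

# Two anchoring methods crossed with four QA datasets, as listed in the legend.
ANCHORS = ["Q-Anchored", "A-Anchored"]
DATASETS = ["PopQA", "TriviaQA", "HotpotQA", "NQ"]


def series_labels():
    """Return the legend labels in legend order (dataset-major, Q before A)."""
    return [f"{anchor} ({dataset})" for dataset, anchor in product(DATASETS, ANCHORS)]


labels = series_labels()
for label in labels:
    print(label)
```

Iterating datasets in the outer position reproduces the legend's ordering, where each dataset's Q-Anchored entry is followed by its A-Anchored entry.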

### Detailed Analysis

**Llama-3-8B:**

* **Q-Anchored (PopQA):** (Solid Blue) Starts around 60% accuracy at layer 0, drops slightly, then rises sharply to fluctuate between 80% and 100% from layer 10 onwards.

* **A-Anchored (PopQA):** (Dashed Brown) Starts around 50% accuracy, decreases slightly, and then stabilizes around 40% from layer 10 onwards.

* **Q-Anchored (TriviaQA):** (Dotted Green) Starts around 60% accuracy, drops slightly, then rises sharply to fluctuate between 70% and 90% from layer 10 onwards.

* **A-Anchored (TriviaQA):** (Dash-Dot Green) Starts around 50% accuracy, decreases slightly, and then stabilizes around 40% from layer 10 onwards.

* **Q-Anchored (HotpotQA):** (Dash-Dot Purple) Starts around 60% accuracy, drops sharply, then rises sharply to fluctuate between 70% and 90% from layer 10 onwards.

* **A-Anchored (HotpotQA):** (Dotted Red) Starts around 50% accuracy, decreases sharply, and then stabilizes around 30% from layer 10 onwards.

* **Q-Anchored (NQ):** (Dashed Purple) Starts around 60% accuracy, drops sharply, then rises sharply to fluctuate between 70% and 90% from layer 10 onwards.

* **A-Anchored (NQ):** (Dash-Dot Brown) Starts around 50% accuracy, decreases slightly, and then stabilizes around 40% from layer 10 onwards.

**Llama-3-70B:**

* **Q-Anchored (PopQA):** (Solid Blue) Starts around 60% accuracy, fluctuates significantly, and then stabilizes around 80% to 100% from layer 10 onwards.

* **A-Anchored (PopQA):** (Dashed Brown) Starts around 50% accuracy, decreases slightly, and then stabilizes around 40% from layer 10 onwards.

* **Q-Anchored (TriviaQA):** (Dotted Green) Starts around 60% accuracy, fluctuates significantly, and then stabilizes around 80% to 100% from layer 10 onwards.

* **A-Anchored (TriviaQA):** (Dash-Dot Green) Starts around 50% accuracy, decreases slightly, and then stabilizes around 40% from layer 10 onwards.

* **Q-Anchored (HotpotQA):** (Dash-Dot Purple) Starts around 60% accuracy, fluctuates significantly, and then stabilizes around 70% to 90% from layer 10 onwards.

* **A-Anchored (HotpotQA):** (Dotted Red) Starts around 50% accuracy, decreases sharply, and then stabilizes around 30% from layer 10 onwards.

* **Q-Anchored (NQ):** (Dashed Purple) Starts around 60% accuracy, fluctuates significantly, and then stabilizes around 70% to 90% from layer 10 onwards.

* **A-Anchored (NQ):** (Dash-Dot Brown) Starts around 50% accuracy, decreases slightly, and then stabilizes around 40% from layer 10 onwards.

### Key Observations

* For both models, "Q-Anchored" approaches generally achieve higher answer accuracy than "A-Anchored" approaches across all datasets.

* The "Q-Anchored" lines show a sharp increase in accuracy around layer 10 for Llama-3-8B, while Llama-3-70B fluctuates more.

* The "A-Anchored" lines tend to stabilize around 40% accuracy after the initial layers for both models, except for HotpotQA, which stabilizes around 30%.

* Llama-3-70B has a longer x-axis, indicating more layers than Llama-3-8B.
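The first observation (Q-Anchored above A-Anchored) could be quantified as an average per-layer gap. The sketch below assumes each curve is available as a list of accuracies indexed by layer; the helper `mean_gap` is hypothetical and the sample values are placeholders, not numbers read from the charts.

```python
from statistics import mean


def mean_gap(q_curve, a_curve):
    """Average per-layer difference (Q-Anchored minus A-Anchored), in accuracy points."""
    if len(q_curve) != len(a_curve):
        raise ValueError("curves must cover the same layers")
    return mean(q - a for q, a in zip(q_curve, a_curve))


# Placeholder values for illustration only; NOT measurements from the figure.
q_example = [60.0, 55.0, 85.0, 90.0]
a_example = [50.0, 45.0, 40.0, 40.0]

gap = mean_gap(q_example, a_example)
print(f"mean Q-minus-A gap: {gap:.2f} points")
```

A positive result means the Q-Anchored curve sits above the A-Anchored curve on average; computing it per dataset would show whether the gap holds for every dataset, as the observation states.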

### Interpretation

The data suggests that anchoring the question (Q-Anchored) leads to better performance in question-answering tasks compared to anchoring the answer (A-Anchored) for both Llama-3-8B and Llama-3-70B models. The initial layers seem to be crucial for the "Q-Anchored" approaches, as they exhibit a significant increase in accuracy around layer 10. The larger model, Llama-3-70B, shows more fluctuation in accuracy across layers, potentially indicating a more complex learning process. The consistent performance of "A-Anchored" approaches around 40% suggests a baseline level of accuracy that is independent of the number of layers. The lower accuracy of "A-Anchored (HotpotQA)" might indicate that HotpotQA is a more challenging dataset for this approach.

EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED

## Line Chart: Answer Accuracy vs. Layer for Llama Models

### Overview

The image presents two line charts comparing the answer accuracy of two Llama models (Llama-3-8B and Llama-3-70B) across different layers. The x-axis represents the layer number, and the y-axis represents the answer accuracy, ranging from 0 to 100. Each chart displays multiple lines, each representing a different question-answering dataset and anchoring method.

### Components/Axes

* **X-axis:** Layer (ranging from approximately 0 to 30 for Llama-3-8B and 0 to 80 for Llama-3-70B).

* **Y-axis:** Answer Accuracy (ranging from 0 to 100).

* **Left Chart Title:** Llama-3-8B

* **Right Chart Title:** Llama-3-70B

* **Legend:**

    * Q-Anchored (PopQA) - Blue line

    * A-Anchored (PopQA) - Light Brown line

    * Q-Anchored (TriviaQA) - Purple line

    * A-Anchored (TriviaQA) - Light Purple line

    * Q-Anchored (HotpotQA) - Green line

    * A-Anchored (HotpotQA) - Light Green line

    * Q-Anchored (NQ) - Red line

    * A-Anchored (NQ) - Orange line

### Detailed Analysis

**Llama-3-8B Chart (Left):**

* **Q-Anchored (PopQA):** The blue line starts at approximately 10, rises sharply to around 90 by layer 5, then fluctuates between 60 and 90 for the remainder of the layers, ending at approximately 85.

* **A-Anchored (PopQA):** The light brown line starts at approximately 10, rises to around 40 by layer 5, and remains relatively stable between 30 and 50 for the rest of the layers, ending at approximately 40.

* **Q-Anchored (TriviaQA):** The purple line starts at approximately 10, rises to around 80 by layer 5, and fluctuates between 60 and 90 for the remainder of the layers, ending at approximately 75.

* **A-Anchored (TriviaQA):** The light purple line starts at approximately 10, rises to around 40 by layer 5, and remains relatively stable between 30 and 50 for the rest of the layers, ending at approximately 40.

* **Q-Anchored (HotpotQA):** The green line starts at approximately 10, rises to around 85 by layer 5, and fluctuates between 60 and 90 for the remainder of the layers, ending at approximately 80.

* **A-Anchored (HotpotQA):** The light green line starts at approximately 10, rises to around 40 by layer 5, and remains relatively stable between 30 and 50 for the rest of the layers, ending at approximately 40.

* **Q-Anchored (NQ):** The red line starts at approximately 10, rises to around 60 by layer 5, and fluctuates between 40 and 70 for the remainder of the layers, ending at approximately 60.

* **A-Anchored (NQ):** The orange line starts at approximately 10, rises to around 40 by layer 5, and remains relatively stable between 30 and 50 for the rest of the layers, ending at approximately 40.

**Llama-3-70B Chart (Right):**

* **Q-Anchored (PopQA):** The blue line starts at approximately 10, rises sharply to around 90 by layer 5, then fluctuates between 60 and 90 for the remainder of the layers, ending at approximately 80.

* **A-Anchored (PopQA):** The light brown line starts at approximately 10, rises to around 40 by layer 5, and remains relatively stable between 30 and 50 for the rest of the layers, ending at approximately 40.

* **Q-Anchored (TriviaQA):** The purple line starts at approximately 10, rises to around 80 by layer 5, and fluctuates between 60 and 90 for the remainder of the layers, ending at approximately 75.

* **A-Anchored (TriviaQA):** The light purple line starts at approximately 10, rises to around 40 by layer 5, and remains relatively stable between 30 and 50 for the rest of the layers, ending at approximately 40.

* **Q-Anchored (HotpotQA):** The green line starts at approximately 10, rises to around 85 by layer 5, and fluctuates between 60 and 90 for the remainder of the layers, ending at approximately 80.

* **A-Anchored (HotpotQA):** The light green line starts at approximately 10, rises to around 40 by layer 5, and remains relatively stable between 30 and 50 for the rest of the layers, ending at approximately 40.

* **Q-Anchored (NQ):** The red line starts at approximately 10, rises to around 60 by layer 5, and fluctuates between 40 and 70 for the remainder of the layers, ending at approximately 60.

* **A-Anchored (NQ):** The orange line starts at approximately 10, rises to around 40 by layer 5, and remains relatively stable between 30 and 50 for the rest of the layers, ending at approximately 40.

### Key Observations

* For both models, the "Q-Anchored" lines consistently exhibit higher answer accuracy than the corresponding "A-Anchored" lines across all datasets.

* The answer accuracy generally increases rapidly in the initial layers (up to layer 5) for all datasets and anchoring methods.

* After the initial increase, the answer accuracy tends to plateau and fluctuate, suggesting diminishing returns from adding more layers.

* The 70B model shows similar trends to the 8B model, but extends to a larger number of layers (80 vs 30).

* PopQA, TriviaQA, and HotpotQA datasets generally achieve higher accuracy than the NQ dataset.
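The "rapid rise, then plateau" pattern described above can be located programmatically. The sketch below is one possible definition, not a method taken from the source: it treats the plateau as the stretch at the end of the curve where every value stays within a tolerance of the final value. The function name `plateau_onset`, the 10-point default tolerance, and the sample curve are all assumptions.

```python
def plateau_onset(curve, tolerance=10.0):
    """Return the earliest layer from which all later values stay within
    `tolerance` accuracy points of the final value."""
    final = curve[-1]
    onset = len(curve) - 1
    # Walk backwards from the last layer until a value leaves the tolerance band.
    for layer in range(len(curve) - 1, -1, -1):
        if abs(curve[layer] - final) > tolerance:
            break
        onset = layer
    return onset


# Placeholder curve for illustration only; NOT read from the figure.
example = [10.0, 30.0, 55.0, 78.0, 85.0, 80.0, 88.0, 82.0]

onset = plateau_onset(example)
print(f"plateau begins at layer {onset}")
```

A tighter tolerance pushes the detected onset later, so the threshold should be chosen relative to the fluctuation visible in the plateau itself.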

### Interpretation

The data suggests that question-anchored methods consistently outperform answer-anchored methods in terms of answer accuracy for both Llama models. This indicates that focusing on the question during the learning process is more effective than focusing on the answer. The initial rapid increase in accuracy with the first few layers suggests that the early layers are crucial for capturing fundamental knowledge. The plateauing of accuracy after a certain number of layers indicates that adding more layers may not significantly improve performance, and could potentially lead to overfitting. The differences in accuracy between datasets may reflect the complexity and quality of the datasets themselves. The 70B model's extended layer range allows for potentially more nuanced learning, but the overall trends remain consistent with the 8B model. This data is valuable for understanding the strengths and weaknesses of different training strategies and for optimizing the architecture of Llama models.

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Line Charts: Llama-3 Model Answer Accuracy by Layer

### Overview

The image displays two side-by-side line charts comparing the "Answer Accuracy" across network layers for two different-sized language models: Llama-3-8B (left) and Llama-3-70B (right). Each chart plots the performance of eight different experimental conditions, defined by an anchoring method (Q-Anchored or A-Anchored) applied to four different question-answering datasets.

### Components/Axes

* **Chart Titles:** "Llama-3-8B" (left chart), "Llama-3-70B" (right chart).

* **Y-Axis (Both Charts):** Label: "Answer Accuracy". Scale: 0 to 100, with major tick marks at intervals of 20 (0, 20, 40, 60, 80, 100).

* **X-Axis (Left Chart - Llama-3-8B):** Label: "Layer". Scale: 0 to 30, with major tick marks at 0, 10, 20, 30.

* **X-Axis (Right Chart - Llama-3-70B):** Label: "Layer". Scale: 0 to 80, with major tick marks at 0, 20, 40, 60, 80.

* **Legend (Bottom, spanning both charts):** Contains 8 entries, differentiating lines by color and style (solid vs. dashed).

    * **Solid Lines (Q-Anchored):**

        * Blue: Q-Anchored (PopQA)

        * Green: Q-Anchored (TriviaQA)

        * Purple: Q-Anchored (HotpotQA)

        * Pink: Q-Anchored (NQ)

    * **Dashed Lines (A-Anchored):**

        * Orange: A-Anchored (PopQA)

        * Red: A-Anchored (TriviaQA)

        * Gray: A-Anchored (HotpotQA)

        * Light Blue: A-Anchored (NQ)

### Detailed Analysis

**Llama-3-8B Chart (Left):**

* **Q-Anchored Lines (Solid):** All four lines show a rapid initial rise from layer 0, reaching a high plateau (approximately 70-100% accuracy) by layer 5-10. They maintain this high performance with moderate fluctuations across the remaining layers (10-30). The Green (TriviaQA) and Pink (NQ) lines appear to be the highest and most stable, often near 90-100%. The Blue (PopQA) and Purple (HotpotQA) lines are slightly lower and more volatile, with a notable dip for Blue around layer 25.

* **A-Anchored Lines (Dashed):** These lines perform significantly worse. They start low, rise to a modest peak between layers 5-15 (approximately 40-60% accuracy), and then generally decline or stagnate at a lower level (20-50%) for the remaining layers. The Red (TriviaQA) line shows the most pronounced decline after its early peak. The Orange (PopQA), Gray (HotpotQA), and Light Blue (NQ) lines cluster together in the 30-50% range for most layers.

**Llama-3-70B Chart (Right):**

* **Q-Anchored Lines (Solid):** Similar to the 8B model, these lines rise quickly to a high accuracy band (approximately 70-100%). However, the performance is much more volatile, with frequent, sharp peaks and troughs across all layers (0-80). Despite the noise, the Green (TriviaQA) and Pink (NQ) lines again appear to be the strongest performers. The Purple (HotpotQA) line shows extreme volatility, with deep drops below 60%.

* **A-Anchored Lines (Dashed):** These lines also show higher volatility compared to their 8B counterparts. They occupy a lower accuracy band, mostly between 20-60%. There is no clear upward trend after the initial layers; instead, they fluctuate within this range. The Red (TriviaQA) line is particularly low and volatile, often dipping near 20%.

### Key Observations

1. **Anchoring Method Dominance:** The most striking pattern is the clear and consistent separation between Q-Anchored (solid lines) and A-Anchored (dashed lines) performance across both models and all datasets. Q-Anchored methods yield substantially higher answer accuracy.

2. **Model Size and Volatility:** The larger Llama-3-70B model exhibits significantly greater layer-to-layer volatility in accuracy for both anchoring methods compared to the more stable Llama-3-8B.

3. **Dataset Hierarchy:** Within the Q-Anchored group, performance on TriviaQA (Green) and NQ (Pink) tends to be highest and most stable, followed by PopQA (Blue) and HotpotQA (Purple).

4. **Early Layer Behavior:** Both models show a critical phase in the first 5-10 layers where accuracy for Q-Anchored methods rapidly ascends to its operational plateau.

5. **A-Anchored Plateau/Decline:** A-Anchored methods in the 8B model show an early peak followed by a decline, suggesting later layers may be less optimized for this representation. In the 70B model, they simply fluctuate at a low level.
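The volatility comparison in observation 2 is qualitative. One way to put a number on it, offered here as an assumption rather than the measure behind the description, is the mean absolute change in accuracy between consecutive layers. The helper `volatility` and both sample curves are illustrative.

```python
from statistics import mean


def volatility(curve):
    """Mean absolute change in accuracy between consecutive layers."""
    if len(curve) < 2:
        raise ValueError("need at least two layers")
    return mean(abs(b - a) for a, b in zip(curve, curve[1:]))


# Placeholder curves for illustration only; NOT read from the figure.
smooth = [80.0, 82.0, 81.0, 83.0]
jagged = [80.0, 95.0, 70.0, 90.0]

print(f"smooth: {volatility(smooth):.2f}, jagged: {volatility(jagged):.2f}")
```

Because the measure is a per-step average, it can be compared across curves with different numbers of layers, which matters when setting a 30-layer axis against an 80-layer one.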

### Interpretation

The data strongly suggests that the **anchoring method is a far more critical factor for final answer accuracy than the specific layer within the network** (for layers beyond the initial few). Representations anchored to the question (Q-Anchored) are consistently and significantly more effective for extracting accurate answers than those anchored to the answer (A-Anchored) across diverse datasets.

The increased volatility in the 70B model could indicate more specialized or "opinionated" layers, where individual layers have stronger, more variable effects on the final output. This might be a characteristic of larger models with greater capacity. The consistent performance hierarchy among datasets (TriviaQA/NQ > PopQA/HotpotQA) for Q-Anchored methods may reflect intrinsic differences in dataset difficulty or how well the model's pre-training aligns with the knowledge required for each.

**Notable Anomaly:** The sharp, deep dip in the Q-Anchored (HotpotQA - Purple) line in the Llama-3-70B chart around layer 50 is an outlier. This could represent a layer that is particularly detrimental to performance on multi-hop reasoning tasks (which HotpotQA tests), or it could be an artifact of the specific experimental run.
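A dip like the one noted above can be flagged by comparing each layer against the curve's median. This is a minimal sketch under that assumption; `deepest_dip` is a hypothetical helper and the sample values are placeholders, not the HotpotQA curve from the chart.

```python
from statistics import median


def deepest_dip(curve):
    """Return (layer, drop): the lowest-scoring layer and how far it falls
    below the curve's median accuracy."""
    mid = median(curve)
    layer = min(range(len(curve)), key=lambda i: curve[i])
    return layer, mid - curve[layer]


# Placeholder curve for illustration only; NOT read from the figure.
example = [85.0, 88.0, 82.0, 55.0, 86.0, 84.0]

layer, drop = deepest_dip(example)
print(f"deepest dip at layer {layer}, {drop:.1f} points below the median")
```

Repeating the check across several experimental runs would help separate a consistently weak layer from a one-off artifact, the two explanations offered above.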

EXPERT: nemotron-free VERSION 2

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Line Graph: Answer Accuracy Across Model Layers for Llama-3-8B and Llama-3-70B

### Overview

The image contains two side-by-side line graphs comparing answer accuracy across transformer model layers for two Llama-3 variants (8B and 70B parameters). Each graph tracks eight distinct data series, one for each combination of anchoring method (Q-Anchored/A-Anchored) and dataset (PopQA, TriviaQA, HotpotQA, NQ). The graphs show significant variability in accuracy across layers, with notable differences between model sizes.

### Components/Axes

- **X-axis (Layer)**:

    - Llama-3-8B: 0–30 (discrete increments)

    - Llama-3-70B: 0–80 (discrete increments)

- **Y-axis (Answer Accuracy)**: 0–100% (continuous scale)

- **Legends**:

    - Position: Bottom of both charts

    - Entries (color/style):

        1. Q-Anchored (PopQA): Solid blue

        2. Q-Anchored (TriviaQA): Dotted green

        3. Q-Anchored (HotpotQA): Dashed purple

        4. Q-Anchored (NQ): Dotted pink

        5. A-Anchored (PopQA): Dashed orange

        6. A-Anchored (TriviaQA): Dotted gray

        7. A-Anchored (HotpotQA): Dashed red

        8. A-Anchored (NQ): Dotted brown

### Detailed Analysis

#### Llama-3-8B (Left Chart)

- **Q-Anchored (PopQA)**: Peaks at ~90% accuracy in layer 10, drops to ~40% by layer 30 with high volatility.

- **A-Anchored (PopQA)**: Starts at ~50%, fluctuates between 30–60% with sharp dips (e.g., layer 15: ~20%).

- **Q-Anchored (TriviaQA)**: Stable ~70–80% until layer 20, then erratic (60–90%).

- **A-Anchored (TriviaQA)**: Gradual decline from ~60% to ~40% with oscillations.

- **Q-Anchored (HotpotQA)**: Sharp initial drop from ~80% to ~50% by layer 10, then stabilizes ~60–70%.

- **A-Anchored (HotpotQA)**: Starts ~70%, declines to ~40% by layer 30 with jagged patterns.

- **Q-Anchored (NQ)**: Peaks ~95% at layer 5, crashes to ~30% by layer 30.

- **A-Anchored (NQ)**: Starts ~60%, declines to ~20% with irregular fluctuations.

#### Llama-3-70B (Right Chart)

- **Q-Anchored (PopQA)**: Starts ~85%, stabilizes ~70–80% after layer 20.

- **A-Anchored (PopQA)**: Starts ~60%, stabilizes ~50–60% after layer 40.

- **Q-Anchored (TriviaQA)**: Peaks ~90% at layer 10, declines to ~70% by layer 80.

- **A-Anchored (TriviaQA)**: Starts ~70%, declines to ~50% with moderate volatility.

- **Q-Anchored (HotpotQA)**: Starts ~80%, stabilizes ~65–75% after layer 30.

- **A-Anchored (HotpotQA)**: Starts ~75%, declines to ~50% with oscillations.

- **Q-Anchored (NQ)**: Peaks ~95% at layer 5, declines to ~60% by layer 80.

- **A-Anchored (NQ)**: Starts ~65%, declines to ~40% with irregular drops.

### Key Observations

1. **Model Size Impact**: Llama-3-70B shows more stable accuracy trends compared to Llama-3-8B, particularly in later layers.

2. **Anchoring Method Differences**:

    - Q-Anchored methods generally outperform A-Anchored in early layers but show diminishing returns in larger models.

    - A-Anchored methods exhibit greater volatility in smaller models (8B).

3. **Dataset Variability**:

    - NQ (Natural Questions) shows the most dramatic drops in accuracy across both models.

    - PopQA maintains higher baseline accuracy than TriviaQA/HotpotQA in later layers.

4. **Layer-Specific Anomalies**:

    - Layer 5 in Llama-3-8B (Q-Anchored NQ) shows an outlier peak at ~95%.

    - Layer 30 in Llama-3-8B (A-Anchored PopQA) has a sharp dip to ~20%.

### Interpretation

The data suggests that model size (70B vs. 8B) correlates with improved stability in answer accuracy across layers, particularly for Q-Anchored methods. However, anchoring effectiveness varies significantly by dataset:

- **PopQA** benefits most from Q-Anchoring in smaller models but shows diminishing returns in larger models.

- **NQ** exhibits the most instability, with Q-Anchored methods collapsing in later layers despite initial promise.

- The A-Anchored methods' volatility in the 8B model implies architectural limitations in smaller transformers for maintaining consistent reasoning chains.

These patterns highlight the importance of dataset-specific anchoring strategies and suggest that larger models may better preserve Q-Anchored performance but still struggle with dataset heterogeneity. The sharp declines in NQ accuracy across all layers warrant further investigation into question complexity and model attention mechanisms.
