## Line Chart: Training Efficiency Comparison with Reward Models

### Overview

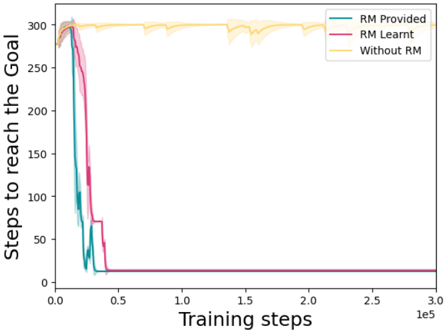

The image is a line chart comparing three training conditions over the course of training. It plots the number of steps required to reach a goal (lower is better) against the number of training steps completed. The primary visual takeaway is a dramatic difference in learning efficiency between the two conditions that use a Reward Model (RM) and the one that does not.

### Components/Axes

* **Chart Type:** Line chart with shaded confidence intervals or variance bands.

* **Y-Axis (Vertical):**
    * **Label:** "Steps to reach the Goal"
    * **Scale:** Linear scale from 0 to 300.
    * **Markers:** 0, 50, 100, 150, 200, 250, 300.

* **X-Axis (Horizontal):**
    * **Label:** "Training steps"
    * **Scale:** Linear scale from 0.0 to 3.0, with a multiplier of `1e5` (100,000). Therefore, the range is 0 to 300,000 training steps.
    * **Markers:** 0.0, 0.5, 1.0, 1.5, 2.0, 2.5, 3.0 (all × 1e5).

* **Legend:** Located in the top-right corner of the plot area.
    * **"RM Provided"** - Represented by a teal/green line.
    * **"RM Learnt"** - Represented by a magenta/pink line.
    * **"Without RM"** - Represented by a yellow/gold line.
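The x-axis multiplier can be applied mechanically to recover absolute training-step counts from the tick labels. The following is a minimal sketch; the function name `tick_to_training_steps` is hypothetical and chosen only for illustration.

```python
def tick_to_training_steps(tick: float, multiplier: float = 1e5) -> int:
    """Convert an x-axis tick label (e.g. 0.5) to an absolute training-step count.

    The default multiplier of 1e5 is the one printed on the chart's x-axis.
    """
    # round() guards against floating-point noise such as 20000.000000000004
    return round(tick * multiplier)


# Tick labels as listed for the x-axis
ticks = [0.0, 0.5, 1.0, 1.5, 2.0, 2.5, 3.0]
absolute_steps = [tick_to_training_steps(t) for t in ticks]
print(absolute_steps)
# → [0, 50000, 100000, 150000, 200000, 250000, 300000]
```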

### Detailed Analysis

**1. "RM Provided" (Teal Line):**

* **Trend:** Exhibits an extremely rapid, near-vertical descent at the very beginning of training.

* **Data Points:** Starts at approximately 300 steps to goal at training step 0. By approximately 20,000 training steps (0.2 × 1e5), it has plummeted to near 0 (approximately 10-20 steps to goal). It then remains flat and stable at this low value for the remainder of training (up to 300,000 training steps).

* **Variance:** The shaded teal band is very narrow after the initial drop, indicating consistent, low-variance performance.

**2. "RM Learnt" (Magenta Line):**

* **Trend:** Also shows a very rapid descent, but with a slight delay compared to "RM Provided".

* **Data Points:** Starts at ~300. The sharp decline begins slightly after the teal line, reaching near 0 (approximately 10-20 steps to goal) by roughly 40,000-50,000 training steps (0.4-0.5 x 1e5). It then plateaus at the same low level as "RM Provided".

* **Variance:** The shaded magenta band is narrow after convergence, similar to the teal line.

**3. "Without RM" (Yellow Line):**

* **Trend:** Shows no significant improvement over the entire training period. The line remains high and relatively flat with minor fluctuations.

* **Data Points:** Hovers consistently around the 300 mark (steps to goal) from training step 0 to training step 300,000. There are small dips and rises, but no sustained downward trend.

* **Variance:** The shaded yellow band is notably wider than for the other two lines throughout the entire chart, indicating high variability and instability in performance without a reward model.
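The convergence points described above are read off the chart by eye. If the underlying curve data were available, one way to make "converged" precise would be to find the first training step from which the curve stays at or below a threshold until the end. The sketch below assumes that definition; the function name, the threshold of 20, and the sample curves are illustrative placeholders, not values extracted from the chart.

```python
from typing import Optional, Sequence


def convergence_step(
    training_steps: Sequence[int],
    steps_to_goal: Sequence[float],
    threshold: float,
) -> Optional[int]:
    """Return the first training step from which steps_to_goal stays at or
    below threshold for the rest of the curve, or None if that never happens."""
    if len(training_steps) != len(steps_to_goal):
        raise ValueError("training_steps and steps_to_goal must have equal length")

    converged_at: Optional[int] = None
    for step, value in zip(training_steps, steps_to_goal):
        if value <= threshold:
            # Remember only the start of the current below-threshold run
            if converged_at is None:
                converged_at = step
        else:
            # The curve rose above the threshold again, so reset
            converged_at = None
    return converged_at


# Placeholder curves for illustration only (not read from the chart)
x = [0, 10_000, 20_000, 30_000, 40_000]
fast_curve = [300, 150, 15, 12, 14]
flat_curve = [300, 295, 300, 298, 300]

print(convergence_step(x, fast_curve, threshold=20))  # → 20000
print(convergence_step(x, flat_curve, threshold=20))  # → None
```

Requiring the curve to stay below the threshold, rather than merely touch it once, avoids counting a transient dip as convergence.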

### Key Observations

1. **Binary Outcome:** There is a stark, binary difference in outcomes. Conditions with a reward model (both provided and learnt) achieve near-perfect performance (minimal steps to goal) very early in training. The condition without a reward model fails to learn the task effectively.

2. **Learning Speed:** "RM Provided" converges the fastest (by roughly 20,000 training steps), followed closely by "RM Learnt" (by roughly 40,000-50,000 training steps). The delay for "RM Learnt" is consistent with the extra work of learning the reward model itself before it can guide training.

3. **Stability:** The "Without RM" condition is not only ineffective but also highly variable, as evidenced by its wide shaded band. The RM conditions show narrow bands, and therefore stable performance, after convergence.

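4. **Ceiling Effect:** The "Without RM" line sits at or near the top of the y-axis (300 steps to goal), which is the worst value shown on the chart. This may reflect a cap on the number of steps allowed per attempt, and it suggests the task is very difficult or impossible to solve efficiently through the base training method alone.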
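The stability comparison rests on the width of the shaded bands. The chart does not state how those bands were computed, so the following is only a sketch of one common convention: the mean across several runs, plus and minus one sample standard deviation at each point. The function name `mean_and_band` and the sample runs are hypothetical.

```python
from statistics import mean, stdev
from typing import List, Sequence, Tuple


def mean_and_band(
    runs: Sequence[Sequence[float]],
) -> Tuple[List[float], List[float], List[float]]:
    """Compute a per-point mean and a mean +/- 1 sample-std band across runs.

    Assumption: each inner sequence is one run's curve, sampled at the same
    training steps as every other run.
    """
    if len(runs) < 2:
        raise ValueError("at least two runs are needed for a sample std")
    length = len(runs[0])
    if any(len(run) != length for run in runs):
        raise ValueError("all runs must have the same number of points")

    means: List[float] = []
    lower: List[float] = []
    upper: List[float] = []
    # zip(*runs) groups the values of all runs at the same training step
    for values in zip(*runs):
        m = mean(values)
        s = stdev(values)
        means.append(m)
        lower.append(m - s)
        upper.append(m + s)
    return means, lower, upper


# Placeholder runs for illustration only (not read from the chart)
runs = [[10, 20], [14, 20]]
means, lower, upper = mean_and_band(runs)
band_width = [u - l for l, u in zip(lower, upper)]
print(means)
print(band_width)
```

Under this convention, a wider band at a given training step means the runs disagree more at that point, which is the reading applied to the "Without RM" condition above.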

### Interpretation

This chart provides strong empirical evidence for the critical role of a Reward Model (RM) in this specific reinforcement learning or optimization task. The data suggests that:

* **The RM is the key enabling component:** The task appears to be intractable for the base algorithm ("Without RM"), which makes no visible progress within 300,000 training steps. The introduction of an RM, whether pre-provided or learned concurrently, unlocks successful learning.

* **Pre-specifying the RM is fastest:** While a learnt RM works, having the RM provided from the start ("RM Provided") leads to the fastest convergence of the three conditions. This implies that the process of learning the RM itself, while successful, adds a small but measurable overhead (roughly 20,000-30,000 training steps) to the overall training time.

* **The mechanism is robust:** Once the RM-guided training converges, performance is both excellent (roughly 10-20 steps to goal) and highly reliable (narrow shaded band). This indicates that the solution found is stable for the remainder of training; the chart alone does not show how well it generalizes.

**In essence, the chart shows a task that is not solved within 300,000 training steps without the right guidance signal (the RM). With an RM, training converges within the first 20,000-50,000 training steps, and pre-specifying the RM offers a slight speed advantage over learning it on the fly.**