TECHNICAL ASSET FINGERPRINT

ae8c74470525a9b1809c5d8f


EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

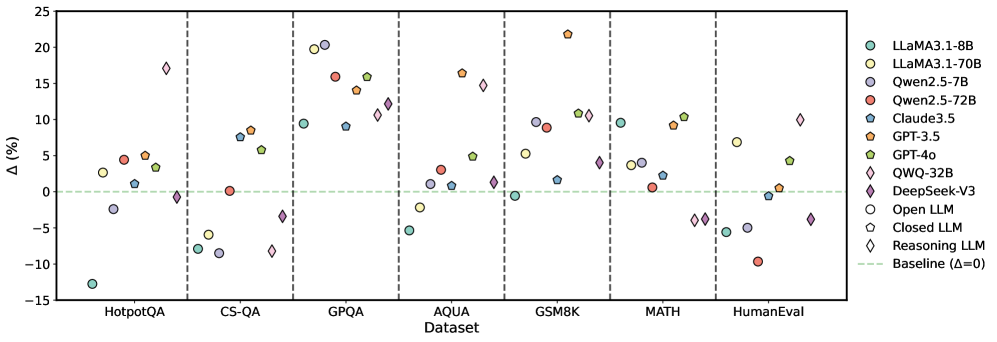

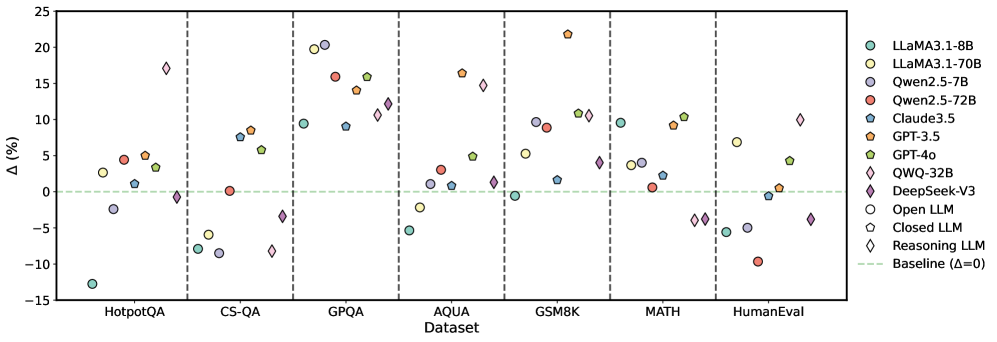

## Scatter Plot: Model Performance Across Datasets

### Overview

The image is a scatter plot comparing the performance change of various large language models (LLMs) across seven datasets. The y-axis shows the change in performance (Δ, in %), and the x-axis lists the datasets. Each model has a unique color and marker shape, as indicated in the legend on the right. A horizontal dashed green line marks the baseline (Δ=0).

### Components/Axes

* **X-axis:** Datasets: HotpotQA, CS-QA, GPQA, AQUA, GSM8K, MATH, HumanEval.

* **Y-axis:** Δ (%), ranging from -15% to 25% with increments of 5%.

* **Legend (Right side):**
  * Light Blue Circle: LLaMA3.1-8B
  * Yellow Circle: LLaMA3.1-70B
  * Dark Blue Circle: Qwen2.5-7B
  * Red Circle: Qwen2.5-72B
  * Blue Pentagon: Claude3.5
  * Orange Pentagon: GPT-3.5
  * Green Pentagon: GPT-4o
  * Purple Diamond: QWQ-32B
  * Dark Purple Diamond: DeepSeek-V3
  * White Circle: Open LLM
  * White Pentagon: Closed LLM
  * White Diamond: Reasoning LLM
  * Green Dashed Line: Baseline (Δ=0)

### Detailed Analysis

**HotpotQA Dataset:**

* LLaMA3.1-8B (Light Blue Circle): Approximately -13%

* LLaMA3.1-70B (Yellow Circle): Approximately 5%

* Qwen2.5-7B (Dark Blue Circle): Approximately -2%

* Qwen2.5-72B (Red Circle): Approximately 5%

* Claude3.5 (Blue Pentagon): Approximately 5%

* GPT-3.5 (Orange Pentagon): Approximately 5%

* GPT-4o (Green Pentagon): Approximately 3%

* QWQ-32B (Purple Diamond): Approximately -1%

* DeepSeek-V3 (Dark Purple Diamond): Approximately -1%

* Open LLM (White Circle): Approximately -3%

* Closed LLM (White Pentagon): Approximately -8%

* Reasoning LLM (White Diamond): Approximately -1%

**CS-QA Dataset:**

* LLaMA3.1-8B (Light Blue Circle): Approximately -8%

* LLaMA3.1-70B (Yellow Circle): Approximately -6%

* Qwen2.5-7B (Dark Blue Circle): Approximately -9%

* Qwen2.5-72B (Red Circle): Approximately 8%

* Claude3.5 (Blue Pentagon): Approximately 8%

* GPT-3.5 (Orange Pentagon): Approximately 8%

* GPT-4o (Green Pentagon): Approximately 1%

* QWQ-32B (Purple Diamond): Approximately 11%

* DeepSeek-V3 (Dark Purple Diamond): Approximately -1%

* Open LLM (White Circle): Approximately -1%

* Closed LLM (White Pentagon): Approximately -1%

* Reasoning LLM (White Diamond): Approximately -1%

**GPQA Dataset:**

* LLaMA3.1-8B (Light Blue Circle): Approximately 1%

* LLaMA3.1-70B (Yellow Circle): Approximately 15%

* Qwen2.5-7B (Dark Blue Circle): Approximately 1%

* Qwen2.5-72B (Red Circle): Approximately 17%

* Claude3.5 (Blue Pentagon): Approximately 15%

* GPT-3.5 (Orange Pentagon): Approximately 20%

* GPT-4o (Green Pentagon): Approximately 4%

* QWQ-32B (Purple Diamond): Approximately 12%

* DeepSeek-V3 (Dark Purple Diamond): Approximately 17%

* Open LLM (White Circle): Approximately -5%

* Closed LLM (White Pentagon): Approximately -1%

* Reasoning LLM (White Diamond): Approximately 11%

**AQUA Dataset:**

* LLaMA3.1-8B (Light Blue Circle): Approximately 2%

* LLaMA3.1-70B (Yellow Circle): Approximately 1%

* Qwen2.5-7B (Dark Blue Circle): Approximately 3%

* Qwen2.5-72B (Red Circle): Approximately 4%

* Claude3.5 (Blue Pentagon): Approximately 1%

* GPT-3.5 (Orange Pentagon): Approximately 5%

* GPT-4o (Green Pentagon): Approximately 2%

* QWQ-32B (Purple Diamond): Approximately 11%

* DeepSeek-V3 (Dark Purple Diamond): Approximately -1%

* Open LLM (White Circle): Approximately 1%

* Closed LLM (White Pentagon): Approximately 1%

* Reasoning LLM (White Diamond): Approximately 1%

**GSM8K Dataset:**

* LLaMA3.1-8B (Light Blue Circle): Approximately 11%

* LLaMA3.1-70B (Yellow Circle): Approximately 10%

* Qwen2.5-7B (Dark Blue Circle): Approximately 11%

* Qwen2.5-72B (Red Circle): Approximately 10%

* Claude3.5 (Blue Pentagon): Approximately 11%

* GPT-3.5 (Orange Pentagon): Approximately 10%

* GPT-4o (Green Pentagon): Approximately 11%

* QWQ-32B (Purple Diamond): Approximately 11%

* DeepSeek-V3 (Dark Purple Diamond): Approximately 4%

* Open LLM (White Circle): Approximately 1%

* Closed LLM (White Pentagon): Approximately 1%

* Reasoning LLM (White Diamond): Approximately 1%

**MATH Dataset:**

* LLaMA3.1-8B (Light Blue Circle): Approximately 3%

* LLaMA3.1-70B (Yellow Circle): Approximately 3%

* Qwen2.5-7B (Dark Blue Circle): Approximately 3%

* Qwen2.5-72B (Red Circle): Approximately 3%

* Claude3.5 (Blue Pentagon): Approximately 3%

* GPT-3.5 (Orange Pentagon): Approximately 3%

* GPT-4o (Green Pentagon): Approximately 3%

* QWQ-32B (Purple Diamond): Approximately 3%

* DeepSeek-V3 (Dark Purple Diamond): Approximately -4%

* Open LLM (White Circle): Approximately -6%

* Closed LLM (White Pentagon): Approximately -6%

* Reasoning LLM (White Diamond): Approximately -4%

**HumanEval Dataset:**

* LLaMA3.1-8B (Light Blue Circle): Approximately 4%

* LLaMA3.1-70B (Yellow Circle): Approximately 5%

* Qwen2.5-7B (Dark Blue Circle): Approximately 4%

* Qwen2.5-72B (Red Circle): Approximately 4%

* Claude3.5 (Blue Pentagon): Approximately 4%

* GPT-3.5 (Orange Pentagon): Approximately 4%

* GPT-4o (Green Pentagon): Approximately 4%

* QWQ-32B (Purple Diamond): Approximately 4%

* DeepSeek-V3 (Dark Purple Diamond): Approximately 1%

* Open LLM (White Circle): Approximately 1%

* Closed LLM (White Pentagon): Approximately 1%

* Reasoning LLM (White Diamond): Approximately 1%

### Key Observations

* The performance of different models varies significantly across different datasets.

* GPT-3.5 is above the baseline on every dataset, with its largest gain on GPQA (approximately 20%).

* LLaMA3.1-8B shows the lowest value on HotpotQA (approximately -13%).

* Open LLM and Closed LLM show the lowest values on MATH (approximately -6% each); Reasoning LLM and DeepSeek-V3 follow at approximately -4%.

* Open LLM and Closed LLM stay at or below roughly 1% on every dataset.
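The observations in this list can be checked against the approximate readings under Detailed Analysis. The following is a minimal sketch, assuming those readings are taken at face value; the names `READINGS` and `summarize` are illustrative, and only four of the twelve listed series are included.

```python
from statistics import mean

DATASETS = ["HotpotQA", "CS-QA", "GPQA", "AQUA", "GSM8K", "MATH", "HumanEval"]

# Approximate deltas (%) copied from the Detailed Analysis list, in DATASETS order.
READINGS = {
    "GPT-3.5": [5, 8, 20, 5, 10, 3, 4],
    "LLaMA3.1-8B": [-13, -8, 1, 2, 11, 3, 4],
    "Open LLM": [-3, -1, -5, 1, 1, -6, 1],
    "Closed LLM": [-8, -1, -1, 1, 1, -6, 1],
}


def summarize(values):
    """Return (mean delta, datasets above the baseline, dataset with the lowest delta)."""
    worst = DATASETS[values.index(min(values))]
    above = sum(1 for v in values if v > 0)
    return mean(values), above, worst


for name, values in READINGS.items():
    avg, above, worst = summarize(values)
    print(f"{name}: mean {avg:+.1f}%, above baseline on {above}/7, lowest on {worst}")
```

Because the inputs are eyeballed from a plot, the summary is only as reliable as those readings.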

### Interpretation

The scatter plot compares the performance change (Δ) of various language models across datasets. The variation highlights the strengths and weaknesses of each model on different types of tasks. For example, GPT-3.5 stays above the baseline on every dataset, indicating robustness across tasks, while LLaMA3.1-8B drops to approximately -13% on HotpotQA. The Open LLM and Closed LLM markers stay near or below the baseline throughout. This information is valuable for selecting the most appropriate model for a specific task and for identifying areas where model improvement is needed. The "Reasoning LLM" models (marked with diamonds) show a wide range of deltas, suggesting that reasoning ability is highly task-dependent.


EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED


## Scatter Plot: Performance Comparison of LLMs Across Datasets

### Overview

This scatter plot compares the performance of various Large Language Models (LLMs) across seven different datasets. The y-axis represents the performance difference (Δ) in percentage points relative to a baseline. The x-axis represents the dataset name. Each LLM is represented by a unique marker and color. A horizontal dashed line at Δ=0 indicates the baseline performance.

### Components/Axes

* **X-axis:** Dataset - with markers for HotpotQA, CS-QA, GPQA, AQUA, GSM8K, MATH, and HumanEval.

* **Y-axis:** Δ (%) - Performance difference in percentage points. Scale ranges from approximately -15% to 25%.

* **Legend:** Located in the top-right corner, listing the LLMs and their corresponding marker styles and colors:
  * Llama3.1-8B (Light Green Circle)
  * Llama3.1-70B (Light Blue Circle)
  * Qwen2.5-7B (Orange Circle)
  * Qwen2.5-72B (Red Circle)
  * Claude3.5 (Teal Triangle)
  * GPT-3.5 (Dark Orange Diamond)
  * GPT-4 (Yellow Diamond)
  * QWQ-32B (Purple Diamond)
  * DeepSeek-v3 (Magenta Diamond)
  * Open LLM (White Circle)
  * Closed LLM (Light Gray Triangle)
  * Reasoning LLM (Light Gray Diamond)
  * Baseline (Δ=0) (Horizontal Dashed Line)

### Detailed Analysis

The plot shows the performance variation of each LLM across the datasets. The following approximate data points are extracted, noting the inherent uncertainty in reading values from a visual plot:

* **HotpotQA:**
  * Llama3.1-8B: ~-2%
  * Llama3.1-70B: ~2%
  * Qwen2.5-7B: ~-5%
  * Qwen2.5-72B: ~-1%
  * Claude3.5: ~-2%
  * GPT-3.5: ~-10%
  * GPT-4: ~10%
  * QWQ-32B: ~5%
  * DeepSeek-v3: ~-10%
  * Open LLM: ~-12%
  * Closed LLM: ~-1%
  * Reasoning LLM: ~-14%

* **CS-QA:**
  * Llama3.1-8B: ~5%
  * Llama3.1-70B: ~10%
  * Qwen2.5-7B: ~2%
  * Qwen2.5-72B: ~8%
  * Claude3.5: ~8%
  * GPT-3.5: ~-2%
  * GPT-4: ~15%
  * QWQ-32B: ~10%
  * DeepSeek-v3: ~5%
  * Open LLM: ~-5%
  * Closed LLM: ~-2%
  * Reasoning LLM: ~-8%

* **GPQA:**
  * Llama3.1-8B: ~10%
  * Llama3.1-70B: ~18%
  * Qwen2.5-7B: ~5%
  * Qwen2.5-72B: ~10%
  * Claude3.5: ~5%
  * GPT-3.5: ~5%
  * GPT-4: ~10%
  * QWQ-32B: ~10%
  * DeepSeek-v3: ~10%
  * Open LLM: ~5%
  * Closed LLM: ~5%
  * Reasoning LLM: ~10%

* **AQUA:**
  * Llama3.1-8B: ~5%
  * Llama3.1-70B: ~10%
  * Qwen2.5-7B: ~2%
  * Qwen2.5-72B: ~8%
  * Claude3.5: ~2%
  * GPT-3.5: ~2%
  * GPT-4: ~10%
  * QWQ-32B: ~10%
  * DeepSeek-v3: ~10%
  * Open LLM: ~2%
  * Closed LLM: ~2%
  * Reasoning LLM: ~5%

* **GSM8K:**
  * Llama3.1-8B: ~5%
  * Llama3.1-70B: ~10%
  * Qwen2.5-7B: ~2%
  * Qwen2.5-72B: ~8%
  * Claude3.5: ~2%
  * GPT-3.5: ~2%
  * GPT-4: ~10%
  * QWQ-32B: ~10%
  * DeepSeek-v3: ~10%
  * Open LLM: ~2%
  * Closed LLM: ~2%
  * Reasoning LLM: ~5%

* **MATH:**
  * Llama3.1-8B: ~-5%
  * Llama3.1-70B: ~5%
  * Qwen2.5-7B: ~-2%
  * Qwen2.5-72B: ~2%
  * Claude3.5: ~-2%
  * GPT-3.5: ~-10%
  * GPT-4: ~10%
  * QWQ-32B: ~5%
  * DeepSeek-v3: ~-5%
  * Open LLM: ~-10%
  * Closed LLM: ~-5%
  * Reasoning LLM: ~-10%

* **HumanEval:**
  * Llama3.1-8B: ~5%
  * Llama3.1-70B: ~10%
  * Qwen2.5-7B: ~2%
  * Qwen2.5-72B: ~8%
  * Claude3.5: ~2%
  * GPT-3.5: ~2%
  * GPT-4: ~10%
  * QWQ-32B: ~10%
  * DeepSeek-v3: ~10%
  * Open LLM: ~2%
  * Closed LLM: ~2%
  * Reasoning LLM: ~5%

### Key Observations

* GPT-4 has the highest or tied-highest delta on six of the seven datasets; on GPQA, Llama3.1-70B (~18%) exceeds it (~10%).

* Llama3.1-70B generally performs better than Llama3.1-8B.

* Qwen2.5-72B generally performs better than Qwen2.5-7B.

* The "Reasoning LLM" falls well below the baseline on HotpotQA (~-14%), MATH (~-10%), and CS-QA (~-8%), but sits at ~5% or higher on the other four datasets.

* Open LLMs and Closed LLMs show similar performance across most datasets.

* Performance varies significantly across datasets, suggesting that LLM capabilities are not uniform.
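The size comparisons in this list can be made concrete with a per-dataset count. The following is a minimal sketch, assuming the approximate readings listed under Detailed Analysis; the names `READINGS` and `datasets_where_larger_wins` are illustrative.

```python
DATASETS = ["HotpotQA", "CS-QA", "GPQA", "AQUA", "GSM8K", "MATH", "HumanEval"]

# Approximate deltas (%) copied from the Detailed Analysis list, in DATASETS order.
READINGS = {
    "Llama3.1-8B": [-2, 5, 10, 5, 5, -5, 5],
    "Llama3.1-70B": [2, 10, 18, 10, 10, 5, 10],
    "Qwen2.5-7B": [-5, 2, 5, 2, 2, -2, 2],
    "Qwen2.5-72B": [-1, 8, 10, 8, 8, 2, 8],
}


def datasets_where_larger_wins(larger, smaller):
    """List the datasets on which the larger model's delta is strictly higher."""
    pairs = zip(DATASETS, READINGS[larger], READINGS[smaller])
    return [name for name, big, small in pairs if big > small]


for larger, smaller in [("Llama3.1-70B", "Llama3.1-8B"), ("Qwen2.5-72B", "Qwen2.5-7B")]:
    wins = datasets_where_larger_wins(larger, smaller)
    print(f"{larger} > {smaller} on {len(wins)}/{len(DATASETS)} datasets")
```

A strict comparison is used so that ties do not count as wins for the larger model.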

### Interpretation

In these readings, GPT-4 shows the largest or tied-largest gain on six of the seven datasets, with Llama3.1-70B ahead on GPQA. The larger models (e.g., Llama3.1-70B, Qwen2.5-72B) show higher deltas than their smaller counterparts on every dataset, indicating that model size is a significant factor. The "Reasoning LLM" falls well below the baseline on HotpotQA, CS-QA, and MATH but stays above it elsewhere, so its results are task-dependent rather than uniformly weak. The variation in performance across datasets highlights the importance of evaluating LLMs on a variety of benchmarks to obtain a comprehensive understanding of their strengths and weaknesses. The differences between Open and Closed LLMs are small on most datasets (HotpotQA is the main exception), suggesting that access to the model weights does not necessarily dictate performance. The plot provides valuable insights for selecting the most appropriate LLM for a given task and for identifying areas where further research and development are needed.


EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Scatter Plot: Language Model Performance Delta Across Datasets

### Overview

This image is a scatter plot comparing the performance delta (Δ, in percentage) of various large language models (LLMs) across seven different benchmark datasets. Each data point represents a specific model's performance relative to a baseline (Δ=0). The plot categorizes models as Open LLMs, Closed LLMs, or Reasoning LLMs using distinct marker shapes.

### Components/Axes

* **Y-Axis:** Labeled "Δ (%)". The scale ranges from -15 to 25, with major tick marks every 5 units. A horizontal dashed green line at Δ=0 is labeled "Baseline (Δ=0)".

* **X-Axis:** Labeled "Dataset". It lists seven categorical datasets: `HotpotQA`, `CS-QA`, `GPQA`, `AQUA`, `GSM8K`, `MATH`, and `HumanEval`. Vertical dashed lines separate each dataset category.

* **Legend (Positioned on the right side):**
  * **Models (by color):**
    * `LLaMA3.1-8B` (Teal circle)
    * `LLaMA3.1-70B` (Light green circle)
    * `Qwen2.5-7B` (Light blue circle)
    * `Qwen2.5-72B` (Red circle)
    * `Claude3.5` (Dark blue circle)
    * `GPT-3.5` (Orange pentagon)
    * `GPT-4o` (Yellow-green pentagon)
    * `QWQ-32B` (Pink diamond)
    * `DeepSeek-V3` (Purple diamond)
  * **Model Type (by shape):**
    * `Open LLM` (Circle)
    * `Closed LLM` (Pentagon)
    * `Reasoning LLM` (Diamond)
  * **Baseline:** `Baseline (Δ=0)` (Green dashed line)

### Detailed Analysis

Performance deltas are approximate, read from the chart's grid.

**1. HotpotQA:**

* `LLaMA3.1-8B` (Teal, Circle): ~ -13%

* `Qwen2.5-7B` (Light blue, Circle): ~ -2%

* `LLaMA3.1-70B` (Light green, Circle): ~ +3%

* `Qwen2.5-72B` (Red, Circle): ~ +4%

* `Claude3.5` (Dark blue, Circle): ~ +1%

* `GPT-3.5` (Orange, Pentagon): ~ +5%

* `GPT-4o` (Yellow-green, Pentagon): ~ +3%

* `QWQ-32B` (Pink, Diamond): ~ -1%

* `DeepSeek-V3` (Purple, Diamond): ~ +17%

**2. CS-QA:**

* `LLaMA3.1-8B` (Teal, Circle): ~ -8%

* `Qwen2.5-7B` (Light blue, Circle): ~ -9%

* `LLaMA3.1-70B` (Light green, Circle): ~ -6%

* `Qwen2.5-72B` (Red, Circle): ~ 0%

* `Claude3.5` (Dark blue, Circle): ~ +7%

* `GPT-3.5` (Orange, Pentagon): ~ +8%

* `GPT-4o` (Yellow-green, Pentagon): ~ +6%

* `QWQ-32B` (Pink, Diamond): ~ -8%

* `DeepSeek-V3` (Purple, Diamond): ~ -4%

**3. GPQA:**

* `LLaMA3.1-8B` (Teal, Circle): ~ +9%

* `Qwen2.5-7B` (Light blue, Circle): ~ +20%

* `LLaMA3.1-70B` (Light green, Circle): ~ +20%

* `Qwen2.5-72B` (Red, Circle): ~ +16%

* `Claude3.5` (Dark blue, Circle): ~ +9%

* `GPT-3.5` (Orange, Pentagon): ~ +14%

* `GPT-4o` (Yellow-green, Pentagon): ~ +16%

* `QWQ-32B` (Pink, Diamond): ~ +11%

* `DeepSeek-V3` (Purple, Diamond): ~ +12%

**4. AQUA:**

* `LLaMA3.1-8B` (Teal, Circle): ~ -5%

* `Qwen2.5-7B` (Light blue, Circle): ~ -2%

* `LLaMA3.1-70B` (Light green, Circle): ~ +1%

* `Qwen2.5-72B` (Red, Circle): ~ +3%

* `Claude3.5` (Dark blue, Circle): ~ +1%

* `GPT-3.5` (Orange, Pentagon): ~ +16%

* `GPT-4o` (Yellow-green, Pentagon): ~ +15%

* `QWQ-32B` (Pink, Diamond): ~ +1%

* `DeepSeek-V3` (Purple, Diamond): ~ +5%

**5. GSM8K:**

* `LLaMA3.1-8B` (Teal, Circle): ~ -1%

* `Qwen2.5-7B` (Light blue, Circle): ~ +9%

* `LLaMA3.1-70B` (Light green, Circle): ~ +5%

* `Qwen2.5-72B` (Red, Circle): ~ +9%

* `Claude3.5` (Dark blue, Circle): ~ +2%

* `GPT-3.5` (Orange, Pentagon): ~ +22%

* `GPT-4o` (Yellow-green, Pentagon): ~ +11%

* `QWQ-32B` (Pink, Diamond): ~ +10%

* `DeepSeek-V3` (Purple, Diamond): ~ +4%

**6. MATH:**

* `LLaMA3.1-8B` (Teal, Circle): ~ +9%

* `Qwen2.5-7B` (Light blue, Circle): ~ +4%

* `LLaMA3.1-70B` (Light green, Circle): ~ +4%

* `Qwen2.5-72B` (Red, Circle): ~ +1%

* `Claude3.5` (Dark blue, Circle): ~ +2%

* `GPT-3.5` (Orange, Pentagon): ~ +9%

* `GPT-4o` (Yellow-green, Pentagon): ~ +10%

* `QWQ-32B` (Pink, Diamond): ~ -4%

* `DeepSeek-V3` (Purple, Diamond): ~ -4%

**7. HumanEval:**

* `LLaMA3.1-8B` (Teal, Circle): ~ -5%

* `Qwen2.5-7B` (Light blue, Circle): ~ -5%

* `LLaMA3.1-70B` (Light green, Circle): ~ +7%

* `Qwen2.5-72B` (Red, Circle): ~ -10%

* `Claude3.5` (Dark blue, Circle): ~ -1%

* `GPT-3.5` (Orange, Pentagon): ~ +1%

* `GPT-4o` (Yellow-green, Pentagon): ~ +4%

* `QWQ-32B` (Pink, Diamond): ~ +10%

* `DeepSeek-V3` (Purple, Diamond): ~ -4%

### Key Observations

1. **High Variance:** Performance deltas vary dramatically across both models and datasets. No single model dominates all benchmarks.

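2. **Dataset Difficulty:** In the readings above, models show the widest spread on `HotpotQA` (~30 points, from ~-13% to ~+17%) and `GSM8K` (~23 points), suggesting these datasets may differentiate model capabilities more sharply. `GPQA` has the narrowest spread (~11 points), with every model above the baseline.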

3. **Top Performers:** `GPT-3.5` (Orange pentagon) achieves the single highest delta on the chart (~+22% on GSM8K). `Qwen2.5-7B` and `LLaMA3.1-70B` also show strong peaks (~+20% on GPQA).

4. **Notable Underperformance:** `LLaMA3.1-8B` (Teal circle) has the lowest delta (~-13% on HotpotQA). `Qwen2.5-72B` (Red circle) shows a significant drop on HumanEval (~-10%).

5. **Reasoning LLMs (Diamonds):** `DeepSeek-V3` (Purple) shows high variance, with a strong positive outlier on HotpotQA (~+17%) but negative deltas on CS-QA, MATH, and HumanEval (each ~-4%). `QWQ-32B` (Pink) ranges from ~-8% on CS-QA to ~+11% on GPQA.

6. **Open vs. Closed:** Closed LLMs (Pentagons: GPT-3.5, GPT-4o) tend to cluster in the upper half of the chart for most datasets, but are not universally superior.
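The spread and extreme values discussed in this list can be recomputed from the approximate readings under Detailed Analysis. The following is a minimal sketch, assuming those readings are accurate to about a point; the names `MODELS`, `DELTAS`, and `spread` are illustrative.

```python
# Model order matches the order used in each dataset list under Detailed Analysis.
MODELS = [
    "LLaMA3.1-8B", "Qwen2.5-7B", "LLaMA3.1-70B", "Qwen2.5-72B", "Claude3.5",
    "GPT-3.5", "GPT-4o", "QWQ-32B", "DeepSeek-V3",
]

# Approximate deltas (%) per dataset, one value per entry in MODELS.
DELTAS = {
    "HotpotQA": [-13, -2, 3, 4, 1, 5, 3, -1, 17],
    "CS-QA": [-8, -9, -6, 0, 7, 8, 6, -8, -4],
    "GPQA": [9, 20, 20, 16, 9, 14, 16, 11, 12],
    "AQUA": [-5, -2, 1, 3, 1, 16, 15, 1, 5],
    "GSM8K": [-1, 9, 5, 9, 2, 22, 11, 10, 4],
    "MATH": [9, 4, 4, 1, 2, 9, 10, -4, -4],
    "HumanEval": [-5, -5, 7, -10, -1, 1, 4, 10, -4],
}


def spread(values):
    """Range of deltas on one dataset, in percentage points."""
    return max(values) - min(values)


spreads = {dataset: spread(values) for dataset, values in DELTAS.items()}
ranked = sorted(spreads, key=spreads.get, reverse=True)

for dataset in ranked:
    values = DELTAS[dataset]
    low = MODELS[values.index(min(values))]
    high = MODELS[values.index(max(values))]
    print(f"{dataset}: spread {spreads[dataset]} (low: {low}, high: {high})")
```

Where two models tie for an extreme, `index` reports the first one in `MODELS` order.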

### Interpretation

This chart visualizes the **non-uniform progress and specialization** in the current LLM landscape. The data suggests:

* **Benchmark Sensitivity:** A model's "capability" is not a single number but a profile across tasks. Strengths in mathematical reasoning (GSM8K, MATH) do not guarantee strength in question answering (HotpotQA, CS-QA) or code generation (HumanEval).

* **No Single Best Model:** The absence of a model that excels in all categories suggests that architectural choices, training data, and optimization targets create trade-offs. For example, a model fine-tuned for math may see regressions on other tasks.

* **The Baseline is Key:** The Δ=0 baseline is critical for interpretation. It likely represents the performance of a reference model (e.g., an earlier version or a standard baseline). Points above the line indicate improvement over this reference; points below indicate regression. The chart therefore measures **relative advancement**, not absolute accuracy.

* **Strategic Implications:** For a practitioner, this chart argues for **model selection based on the specific task**. Choosing a model requires consulting its performance profile on benchmarks analogous to the intended application, rather than relying on aggregate scores or reputation. The high variance, especially among open models, highlights the rapid and divergent evolution in this field.
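The reading of Δ as a relative measure can be illustrated with a small calculation. The following is a minimal sketch, assuming Δ is the difference in percentage points between a measured score and a reference score; the chart description does not state how the baseline was obtained, and the function names and the scores in the usage lines are hypothetical.

```python
def delta_pp(score: float, reference: float) -> float:
    """Difference between a score and a reference score, in percentage points."""
    return score - reference


def classify(delta: float) -> str:
    """Label a delta relative to the baseline at zero."""
    if delta > 0:
        return "improvement"
    if delta < 0:
        return "regression"
    return "baseline"


# Hypothetical accuracies (%), not values from the chart.
example = delta_pp(72.5, 70.0)
print(example, classify(example))
print(classify(delta_pp(61.0, 64.0)))
```

Under this assumption, two models with the same Δ can still differ widely in absolute accuracy, which is why the chart alone does not rank models by raw score.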


EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Scatter Plot: Model Performance Across Datasets

### Overview

The image is a scatter plot comparing the performance of various large language models (LLMs) across seven datasets. The y-axis represents percentage change (Δ%) relative to a baseline (Δ=0), while the x-axis lists datasets: HotpotQA, CS-QA, GPQA, AQUA, GSM8K, MATH, and HumanEval. Data points are color-coded and shaped by model type, with a legend on the right.

### Components/Axes

- **X-axis (Dataset)**: Categorical labels for seven datasets (HotpotQA, CS-QA, GPQA, AQUA, GSM8K, MATH, HumanEval).

- **Y-axis (Δ%)**: Numerical scale from -15% to 25%, with a dashed green baseline at 0%.

- **Legend**: Eleven entries (eight named models and three category markers), distinguished by color and marker:
  - **Cyan circles**: LLaMA 3.1-8B
  - **Yellow circles**: LLaMA 3.1-70B
  - **Red circles**: Qwen 2.5-72B
  - **Blue circles**: Claude 3.5
  - **Orange circles**: GPT-3.5
  - **Green circles**: GPT-4o
  - **Pink diamonds**: QWQ-32B
  - **Purple diamonds**: DeepSeek-V3
  - **White circles**: Open LLM
  - **Gray circles**: Closed LLM
  - **Pink diamonds**: Reasoning LLM

### Detailed Analysis

- **HotpotQA**:
  - LLaMA 3.1-70B (yellow) shows the highest Δ (~18%), while Claude 3.5 (blue) has the lowest (~-15%).
  - GPT-4o (green) and DeepSeek-V3 (purple) cluster near 0%.

- **CS-QA**:
  - GPT-4o (green) peaks at ~12%, while LLaMA 3.1-8B (cyan) dips to ~-8%.
  - Qwen 2.5-72B (red) and Reasoning LLM (pink) show moderate gains (~5-7%).

- **GPQA**:
  - LLaMA 3.1-70B (yellow) and GPT-4o (green) exceed 15%, while Open LLM (white) and Closed LLM (gray) cluster near 0%.

- **AQUA**:
  - GPT-4o (green) and DeepSeek-V3 (purple) show ~10-12% gains, while LLaMA 3.1-8B (cyan) dips to ~-5%.

- **GSM8K**:
  - GPT-4o (green) peaks at ~22%, with LLaMA 3.1-70B (yellow) at ~10%.
  - Claude 3.5 (blue) and Open LLM (white) show minimal gains (~2-3%).

- **MATH**:
  - GPT-4o (green) and DeepSeek-V3 (purple) exceed 10%, while LLaMA 3.1-8B (cyan) and Qwen 2.5-72B (red) cluster near 0%.

- **HumanEval**:
  - GPT-4o (green) and DeepSeek-V3 (purple) show ~5-7% gains, while LLaMA 3.1-8B (cyan) and Qwen 2.5-72B (red) dip below 0%.

### Key Observations

1. **GPT-4o (green circles)** is among the top performers on every dataset except HotpotQA, where it sits near 0%; its highest Δ is in GSM8K (~22%).

2. **LLaMA 3.1-70B (yellow circles)** shows strong performance in GPQA and AQUA but underperforms in HumanEval.

3. **Claude 3.5 (blue circles)** and **Qwen 2.5-72B (red circles)** exhibit mixed results, with notable dips in HotpotQA and MATH.

4. **Reasoning LLM (pink diamonds)** and **DeepSeek-V3 (purple diamonds)** demonstrate competitive performance, particularly in GSM8K and MATH.

5. **Open LLM (white circles)** and **Closed LLM (gray circles)** generally cluster near the baseline (Δ=0), indicating minimal improvement.

### Interpretation

The data suggests that **GPT-4o** and **DeepSeek-V3** are the most robust models across diverse tasks, with GPT-4o excelling in GSM8K and MATH. LLaMA 3.1-70B performs well in specialized domains (GPQA, AQUA) but struggles with code-generation tasks like HumanEval. Models like **Claude 3.5** and **Qwen 2.5-72B** show inconsistent results, highlighting potential limitations in generalization. The baseline (Δ=0) serves as a critical reference: several models fall at or below it on certain datasets. This variability underscores the importance of dataset-specific model selection in real-world applications.
