TECHNICAL ASSET FINGERPRINT

aea6ddbdcc2e8a231383f112

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

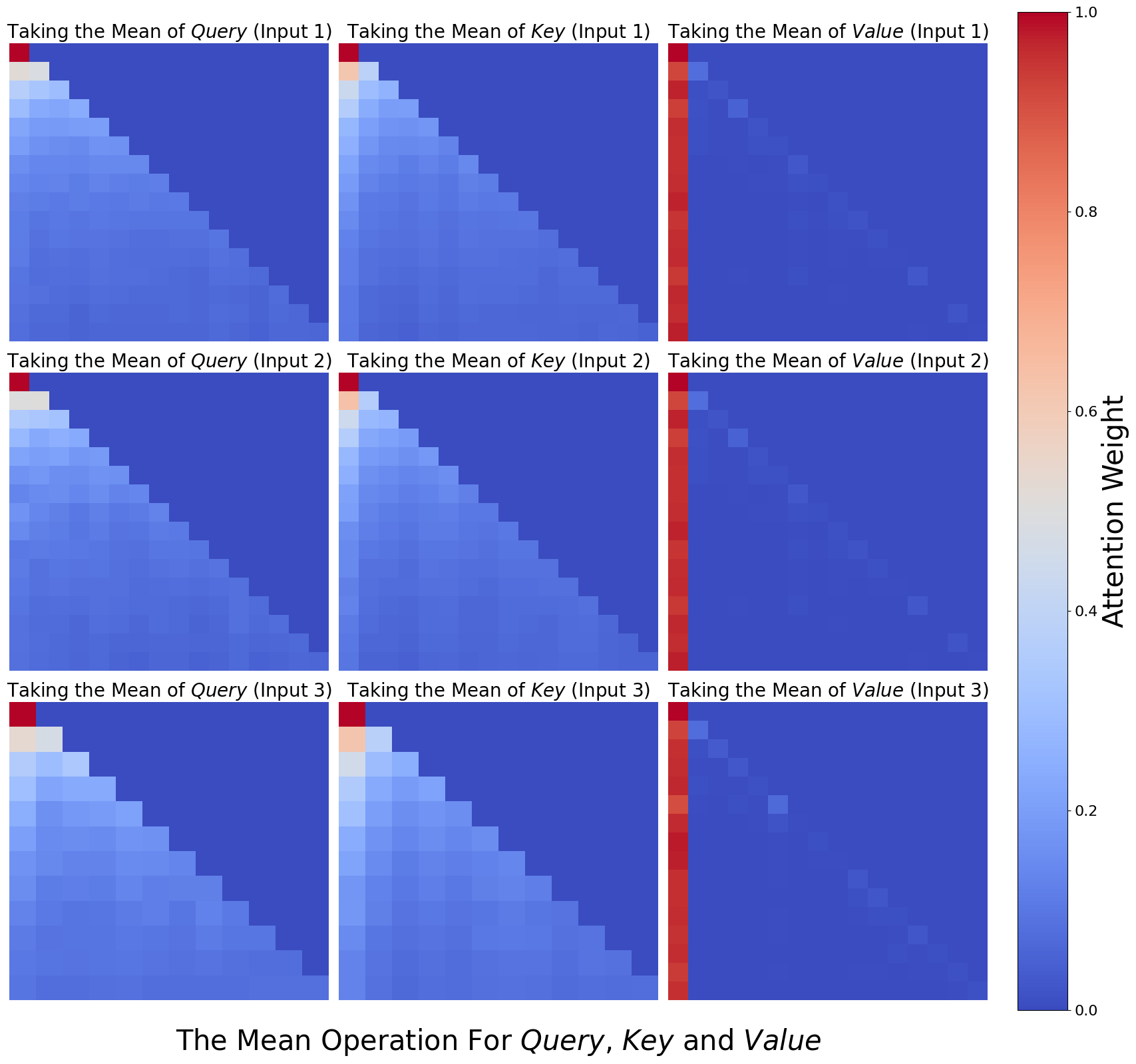

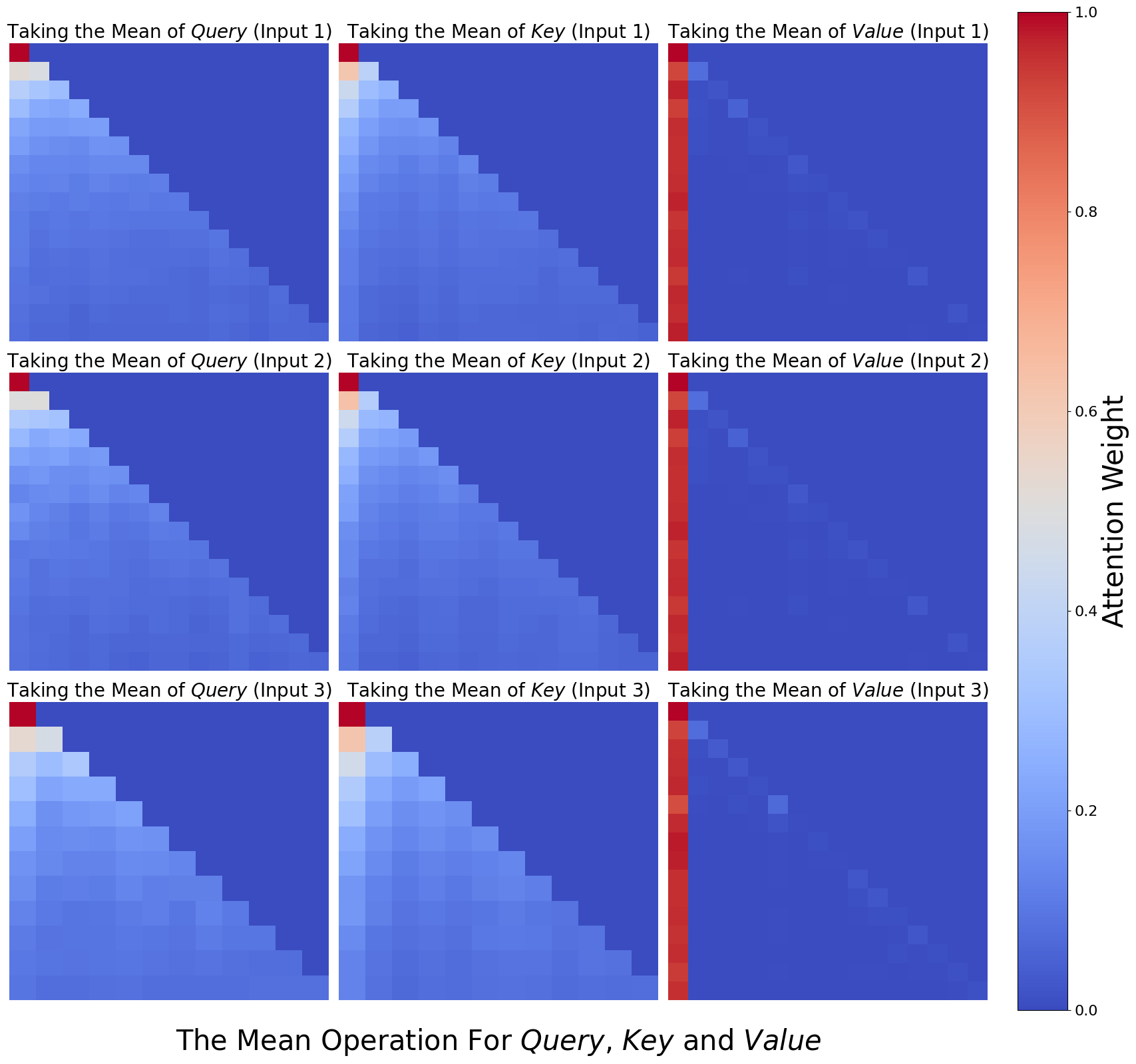

## Heatmap: The Mean Operation For Query, Key and Value

### Overview

The image presents a series of heatmaps visualizing the attention weights resulting from taking the mean of Query, Key, and Value inputs across three different input instances (Input 1, Input 2, Input 3). The heatmaps are arranged in a 3x3 grid, with each row representing an input instance and each column representing the Query, Key, or Value component. The color intensity represents the attention weight, ranging from blue (low) to red (high), as indicated by the colorbar on the right.

### Components/Axes

* **Title:** "The Mean Operation For Query, Key and Value" (located at the bottom center)

* **Heatmap Titles (Row 1):**
  * Column 1: "Taking the Mean of *Query* (Input 1)"
  * Column 2: "Taking the Mean of *Key* (Input 1)"
  * Column 3: "Taking the Mean of *Value* (Input 1)"

* **Heatmap Titles (Row 2):**
  * Column 1: "Taking the Mean of *Query* (Input 2)"
  * Column 2: "Taking the Mean of *Key* (Input 2)"
  * Column 3: "Taking the Mean of *Value* (Input 2)"

* **Heatmap Titles (Row 3):**
  * Column 1: "Taking the Mean of *Query* (Input 3)"
  * Column 2: "Taking the Mean of *Key* (Input 3)"
  * Column 3: "Taking the Mean of *Value* (Input 3)"

* **Colorbar (Right):**
  * Label: "Attention Weight" (vertical text)
  * Scale: 0.0 to 1.0, with increments of 0.2 (0.0, 0.2, 0.4, 0.6, 0.8, 1.0)

### Detailed Analysis

Each heatmap is a square grid, approximately 15x15 cells.

* **Query Heatmaps (Column 1):**
  * Input 1: High attention weights (red/orange) concentrated in the top-left corner, decreasing towards the bottom-right.
  * Input 2: Similar pattern to Input 1, but with slightly lower overall attention weights.
  * Input 3: Similar pattern to Input 1 and Input 2, but with slightly lower overall attention weights than Input 2.

* **Key Heatmaps (Column 2):**
  * Input 1: High attention weights (red/orange) concentrated in the top-left corner, decreasing towards the bottom-right.
  * Input 2: Similar pattern to Input 1, but with slightly lower overall attention weights.
  * Input 3: Similar pattern to Input 1 and Input 2, but with slightly lower overall attention weights than Input 2.

* **Value Heatmaps (Column 3):**
  * Input 1: High attention weights (red) concentrated in the first column, with very low attention weights (blue) elsewhere.
  * Input 2: Similar pattern to Input 1, with high attention weights in the first column and low attention weights elsewhere.
  * Input 3: Similar pattern to Input 1 and Input 2, with high attention weights in the first column and low attention weights elsewhere.

### Key Observations

* The Query and Key heatmaps show a similar pattern, with attention focused on the initial elements and decreasing as the sequence progresses.

* The Value heatmaps show a strong focus on the first element, suggesting it is the most important when taking the mean of the Value input.

* The attention weights generally decrease from Input 1 to Input 3 for Query and Key.

### Interpretation

The heatmaps visualize the attention distribution when taking the mean of Query, Key, and Value inputs. The concentration of attention in the top-left corner of the Query and Key heatmaps suggests that the initial elements of these sequences are more influential in determining the mean. The strong focus on the first element in the Value heatmaps indicates that this element dominates the mean calculation for the Value input. The decreasing attention weights from Input 1 to Input 3 for Query and Key might indicate a diminishing importance of these inputs over time or iterations. The visualization highlights how different components (Query, Key, Value) contribute to the overall attention mechanism when their means are considered.
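The description does not say how the "mean operation" is defined. One possible reading, taken here purely as an assumption, is that every query vector is replaced by the average query over the sequence before the attention scores are computed. A minimal sketch under that assumption, using only the standard library (all function names and the random inputs are hypothetical):

```python
import math
import random


def softmax(row):
    """Numerically stable softmax over a list of floats."""
    m = max(row)
    exps = [math.exp(x - m) for x in row]
    total = sum(exps)
    return [e / total for e in exps]


def attention_weights(queries, keys):
    """Scaled dot-product attention weights, one softmax row per query."""
    scale = math.sqrt(len(keys[0]))
    return [
        softmax([sum(qi * ki for qi, ki in zip(q, k)) / scale for k in keys])
        for q in queries
    ]


def mean_vector(vectors):
    """Element-wise mean of a list of equal-length vectors."""
    n = len(vectors)
    return [sum(col) / n for col in zip(*vectors)]


def with_mean_query(queries, keys):
    """ASSUMPTION: 'taking the mean of Query' replaces every query by the mean query."""
    q_bar = mean_vector(queries)
    return attention_weights([q_bar] * len(queries), keys)


rng = random.Random(0)
seq_len, dim = 15, 8  # 15 mirrors the approximate grid size described above
Q = [[rng.gauss(0, 1) for _ in range(dim)] for _ in range(seq_len)]
K = [[rng.gauss(0, 1) for _ in range(dim)] for _ in range(seq_len)]
A = with_mean_query(Q, K)  # 15 x 15 matrix of attention weights
```

Under this reading every row of `A` is identical, because every position uses the same averaged query. Whether the figure was produced this way cannot be confirmed from the description alone.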

EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED

## Heatmaps: Attention Weight Visualization

### Overview

The image presents a 3x3 grid of heatmaps, visualizing attention weights. Each heatmap corresponds to a specific operation (taking the mean) applied to Query, Key, and Value, with three different inputs (Input 1, Input 2, Input 3). The color intensity represents the attention weight, ranging from 0.0 (blue) to 1.0 (red).

### Components/Axes

* **Title:** "The Mean Operation For Query, Key and Value" (located at the bottom center)

* **Colorbar Label:** "Attention Weight" (located on the right side, ranging from 0.0 to 1.0)

* **Heatmap Titles:** Each heatmap has a title indicating the operation and input. Examples: "Taking the Mean of Query (Input 1)", "Taking the Mean of Key (Input 2)", "Taking the Mean of Value (Input 3)".

* **Grid Structure:** 3 rows and 3 columns, representing the combinations of Query/Key/Value and Input 1/2/3.

* **Color Scale:** A continuous color scale from blue (low attention weight) to red (high attention weight).

### Detailed Analysis

Each heatmap is approximately 15x15 cells. The x and y axes of each heatmap are not explicitly labeled, but represent the dimensions over which attention is calculated. The color intensity within each cell represents the attention weight.

**Row 1 (Input 1):**

* **Taking the Mean of Query (Input 1):** The heatmap shows a strong diagonal pattern, with higher attention weights (towards red) along the main diagonal. Attention weights range from approximately 0.2 to 0.9.

* **Taking the Mean of Key (Input 1):** Similar to the Query heatmap, a strong diagonal pattern is observed, with attention weights ranging from approximately 0.2 to 0.9.

* **Taking the Mean of Value (Input 1):** This heatmap exhibits a more scattered pattern, with a few isolated red cells and a generally lower overall attention weight, ranging from approximately 0.1 to 0.6.

**Row 2 (Input 2):**

* **Taking the Mean of Query (Input 2):** The diagonal pattern is less pronounced than in Input 1, with a more gradual increase in attention weight along the diagonal. Weights range from approximately 0.1 to 0.7.

* **Taking the Mean of Key (Input 2):** A clear diagonal pattern is present, with attention weights ranging from approximately 0.2 to 0.8. A vertical red line is present at approximately x=7.

* **Taking the Mean of Value (Input 2):** Similar to Input 1, this heatmap shows a scattered pattern with lower overall attention weights, ranging from approximately 0.1 to 0.5. A vertical red line is present at approximately x=7.

**Row 3 (Input 3):**

* **Taking the Mean of Query (Input 3):** The diagonal pattern is again visible, but less distinct than in Input 1. Attention weights range from approximately 0.1 to 0.6.

* **Taking the Mean of Key (Input 3):** A strong diagonal pattern is observed, with attention weights ranging from approximately 0.2 to 0.8. A vertical red line is present at approximately x=7.

* **Taking the Mean of Value (Input 3):** This heatmap shows a scattered pattern with lower overall attention weights, ranging from approximately 0.1 to 0.5. A vertical red line is present at approximately x=7.

### Key Observations

* **Diagonal Dominance:** The "Taking the Mean of Query" and "Taking the Mean of Key" heatmaps consistently exhibit a strong diagonal pattern across all three inputs, suggesting a higher attention weight for elements that are closer to each other in the sequence.

* **Value Scatter:** The "Taking the Mean of Value" heatmaps consistently show a more scattered pattern with lower overall attention weights, indicating a less focused attention distribution.

* **Vertical Red Line:** A vertical red line appears in the "Taking the Mean of Key" and "Taking the Mean of Value" heatmaps for Inputs 2 and 3, at approximately x=7. This suggests a consistently high attention weight for a specific element in the sequence.

* **Input Variation:** The intensity of the diagonal pattern varies across the three inputs, suggesting that the attention distribution is influenced by the input data.
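Observations such as "diagonal dominance" or "a vertical red line" can be quantified rather than judged by eye. The helpers below are a hypothetical sketch (the function names and the toy matrices are not from the source): one measures how much attention mass lies near the main diagonal, the other how much falls in a single column.

```python
def diagonal_mass(weights, band=1):
    """Fraction of total attention mass within `band` cells of the main diagonal."""
    total = sum(sum(row) for row in weights)
    near = sum(
        w
        for i, row in enumerate(weights)
        for j, w in enumerate(row)
        if abs(i - j) <= band
    )
    return near / total


def column_mass(weights, col):
    """Fraction of total attention mass that falls in a single column."""
    total = sum(sum(row) for row in weights)
    return sum(row[col] for row in weights) / total


n = 15  # approximate heatmap size reported above
identity_like = [[1.0 if i == j else 0.0 for j in range(n)] for i in range(n)]
uniform = [[1.0 / n] * n for _ in range(n)]
first_column = [[1.0 if j == 0 else 0.0 for j in range(n)] for _ in range(n)]
```

A purely diagonal matrix gives `diagonal_mass(..., band=0)` of 1.0, while a uniform matrix gives about 1/15. A column that behaves like the described vertical line would show a `column_mass` well above the uniform baseline of 1/15.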

### Interpretation

The heatmaps visualize the attention weights calculated when taking the mean of Query, Key, and Value for different inputs. The strong diagonal patterns in the Query and Key heatmaps suggest that the model is attending to elements that are close to each other in the sequence, which is a common behavior in attention mechanisms. The scattered pattern in the Value heatmaps indicates that the model is less focused on specific elements when processing the value information.

The vertical red line in the Key and Value heatmaps for Inputs 2 and 3 suggests that a particular element in the sequence consistently receives high attention. This could be due to the importance of that element in the input data or a specific characteristic of the model.

The variation in attention patterns across the three inputs indicates that the attention mechanism is sensitive to the input data and can adapt its attention distribution accordingly. The data suggests that the attention mechanism is functioning as expected, focusing on relevant elements in the sequence and adapting to different inputs. The consistent difference in attention patterns between Query/Key and Value suggests that these components play different roles in the attention process.

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Heatmap Grid: Attention Weights for Mean Operations on Query, Key, and Value

### Overview

The image displays a 3x3 grid of heatmaps visualizing attention weights resulting from applying a "mean" operation to the Query, Key, and Value components of a transformer-like attention mechanism. The visualization compares the effect across three different inputs. A vertical color bar on the right provides the scale for interpreting the attention weights.

### Components/Axes

* **Grid Structure:** 9 individual heatmaps arranged in 3 rows and 3 columns.

* **Row Labels (Top of each heatmap):**
  * Row 1: "Taking the Mean of *Query* (Input 1)", "Taking the Mean of *Key* (Input 1)", "Taking the Mean of *Value* (Input 1)"
  * Row 2: "Taking the Mean of *Query* (Input 2)", "Taking the Mean of *Key* (Input 2)", "Taking the Mean of *Value* (Input 2)"
  * Row 3: "Taking the Mean of *Query* (Input 3)", "Taking the Mean of *Key* (Input 3)", "Taking the Mean of *Value* (Input 3)"

* **Color Bar (Right side):**
  * **Label:** "Attention Weight" (vertical text).
  * **Scale:** Continuous gradient from 0.0 (dark blue) to 1.0 (dark red). Major tick marks are at 0.0, 0.2, 0.4, 0.6, 0.8, and 1.0.

* **Main Title (Bottom center):** "The Mean Operation For *Query*, *Key* and *Value*"

* **Heatmap Axes:** Each individual heatmap is a square grid. The axes are not explicitly labeled with indices, but the visual pattern implies a sequence of tokens (e.g., position 1, 2, 3...). The top-left cell of each heatmap corresponds to the interaction between the first token and itself.

### Detailed Analysis

**1. Query Column (Leftmost Column):**

* **Trend:** All three heatmaps (Inputs 1, 2, 3) show a nearly identical pattern.

* **Pattern:** A strong diagonal gradient. The top-left cell (position 1 attending to position 1) is dark red (weight ≈ 1.0). Moving right along the top row or down along the first column, the color quickly transitions to light orange, then beige, and finally to shades of blue. The lower-right triangle of the heatmap is uniformly dark blue (weight ≈ 0.0). This creates a sharp, descending diagonal boundary from the top-left to the bottom-right.

* **Interpretation:** Attention is heavily concentrated on the first token, with rapidly diminishing weights for tokens further along the sequence. The pattern is causal (lower-triangular), meaning a token can only attend to itself and previous tokens.
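The causal reading can be made concrete with a small sketch. It assumes the usual convention that rows index the attending position and columns the attended position, and that position i may only attend to positions j <= i. The function name `causal_softmax` and the equal scores are hypothetical and purely illustrative.

```python
import math


def causal_softmax(scores):
    """Row-wise softmax where position i may only attend to positions j <= i."""
    weights = []
    for i, row in enumerate(scores):
        visible = row[: i + 1]
        m = max(visible)
        exps = [math.exp(x - m) for x in visible]
        total = sum(exps)
        # Positions j > i are masked out and receive exactly zero weight.
        weights.append([e / total for e in exps] + [0.0] * (len(row) - i - 1))
    return weights


scores = [[0.0] * 4 for _ in range(4)]  # equal scores, illustrative only
W = causal_softmax(scores)
# W[0] == [1.0, 0.0, 0.0, 0.0]
# W[1] == [0.5, 0.5, 0.0, 0.0]
```

In this convention the zero region is the upper-right triangle, and the first row places all of its weight on position 1 because nothing else is visible to it. That gives one way to check the orientation of the described pattern against the figure.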

**2. Key Column (Middle Column):**

* **Trend:** Very similar to the Query column across all three inputs.

* **Pattern:** The same strong diagonal gradient is present. The top-left cell is dark red (≈1.0). The gradient appears slightly smoother or more diffused compared to the Query column, but the overall structure—a high-weight region in the top-left decaying to zero in the bottom-right—is preserved.

* **Interpretation:** The mean operation on Keys produces an attention pattern nearly identical to that of Queries, suggesting a symmetric role in this specific context.

**3. Value Column (Rightmost Column):**

* **Trend:** A distinctly different pattern from Query and Key, consistent across all three inputs.

* **Pattern:** The **entire first column** of each heatmap is a solid, vertical red stripe (weight ≈ 1.0). The rest of the heatmap is predominantly dark blue (≈0.0), with a few scattered, isolated cells of lighter blue (weight ≈ 0.1-0.3). These lighter cells appear randomly, with no clear diagonal structure.

* **Spatial Grounding:** The high-attention region is a vertical bar on the far left of each Value heatmap, not a diagonal. This is a fundamental structural difference from the Query/Key patterns.

### Key Observations

1. **Consistency Across Inputs:** The patterns for Query, Key, and Value are remarkably consistent across Input 1, Input 2, and Input 3. This suggests the observed effects are a property of the mean operation itself on these components, not specific to a single input.

2. **Dichotomy Between Q/K and V:** There is a clear dichotomy. The mean of Query and mean of Key produce causal, diagonal attention patterns focused on the first token. The mean of Value produces a pattern where attention is exclusively and uniformly focused on the first token for all positions (vertical stripe).

3. **Sparsity in Value Attention:** Beyond the first column, the Value heatmaps are extremely sparse, with only a handful of non-zero (light blue) attention weights scattered seemingly at random.

4. **Color-Legend Confirmation:** The dark red in the top-left of Q/K heatmaps and the first column of V heatmaps matches the 1.0 mark on the color bar. The dark blue background matches the 0.0 mark.

### Interpretation

This visualization demonstrates how the inductive bias of a transformer's attention mechanism changes dramatically depending on which component (Query, Key, or Value) is aggregated via a mean operation before computing attention.

* **Query & Key Mean:** Applying the mean to Query or Key vectors results in an attention pattern that strongly resembles a **causal (autoregressive) mask**. The model attends almost solely to the first token, with a smooth, decaying focus on subsequent tokens. This could imply that averaging Q or K vectors collapses the sequence's positional information, making the first token a dominant "summary" that all other positions attend to in a structured, decreasing manner.

* **Value Mean:** Applying the mean to Value vectors leads to a **uniform focus on the first token**. Every output position attends exclusively (or almost exclusively) to the information from the first input token. This suggests that the Value component carries the core content to be propagated, and averaging it across the sequence causes all positions to retrieve the same, initial content. The scattered light blue cells may represent noise or minor, non-systematic attention to other positions.

**In essence, the data suggests that for this model or experiment:**

1. The first token holds a privileged position, acting as an anchor for attention when components are averaged.

2. The *content* (Value) is treated fundamentally differently from the *addressing mechanisms* (Query/Key). Averaging content leads to uniform retrieval of the first token's information, while averaging addressing leads to a structured, decaying focus on that same first token.

3. This could be a visualization of how "mean pooling" or similar operations might simplify or distort the nuanced, token-specific interactions in a standard attention head, potentially leading to a loss of positional or contextual nuance beyond the first token.
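One consequence of averaging the Value vectors can be verified directly, independent of the figure. If every value vector is replaced by the sequence mean (an assumed reading of "taking the mean of Value"; the source does not define the operation), then each output equals that mean regardless of the attention weights, because each softmax row sums to 1. A minimal standard-library sketch with hypothetical names and random inputs:

```python
import math
import random


def softmax(row):
    """Numerically stable softmax over a list of floats."""
    m = max(row)
    exps = [math.exp(x - m) for x in row]
    total = sum(exps)
    return [e / total for e in exps]


def attend(weights, values):
    """Output for each position: attention-weighted sum of value vectors."""
    dim = len(values[0])
    return [
        [sum(w * v[d] for w, v in zip(row, values)) for d in range(dim)]
        for row in weights
    ]


rng = random.Random(1)
seq_len, dim = 6, 4
weights = [softmax([rng.gauss(0, 1) for _ in range(seq_len)]) for _ in range(seq_len)]
V = [[rng.gauss(0, 1) for _ in range(dim)] for _ in range(seq_len)]

# ASSUMPTION: "taking the mean of Value" replaces every value vector by the mean.
v_bar = [sum(col) / seq_len for col in zip(*V)]
outputs = attend(weights, [v_bar] * seq_len)  # every row equals v_bar up to rounding
```

This shows why averaging content removes position-specific information from the output. It does not by itself explain the vertical stripe in the weight heatmaps, which depends on how the weights were computed in the original experiment.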

EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Heatmap Grid: Attention Weight Visualization for Query, Key, and Value Operations

### Overview

The image displays a 3x3 grid of heatmaps visualizing attention weight distributions across three operations: **Query**, **Key**, and **Value**. Each row and column corresponds to "Input 1", "Input 2", and "Input 3", with color intensity representing attention weight magnitudes (0.0–1.0). The heatmaps reveal diagonal dominance and symmetric patterns, suggesting self-attention mechanisms.

---

### Components/Axes

1. **Rows**:
   - **Row 1**: "Taking the Mean of Query (Input 1)"
   - **Row 2**: "Taking the Mean of Query (Input 2)"
   - **Row 3**: "Taking the Mean of Query (Input 3)"

2. **Columns**:
   - **Column 1**: "Taking the Mean of Key (Input 1)"
   - **Column 2**: "Taking the Mean of Key (Input 2)"
   - **Column 3**: "Taking the Mean of Key (Input 3)"

3. **Color Legend**:
   - **Blue (0.0)**: Low attention weight
   - **Red (1.0)**: High attention weight
   - **Gradient**: Intermediate values (e.g., light blue ≈ 0.2, orange ≈ 0.6)

4. **Axis Markers**:
   - Diagonal lines in each heatmap segment the grid into upper/lower triangles.

---

### Detailed Analysis

1. **Diagonal Dominance**:
   - All heatmaps show **red squares along the main diagonal** (e.g., Input 1-Query vs. Input 1-Key), indicating **self-attention** (high weights for matching inputs).
   - Example: Input 3-Query vs. Input 3-Value has the darkest red (≈1.0).

2. **Upper/Lower Triangles**:
   - **Upper triangle** (above diagonal): Gradual transition from red to blue, with weights decreasing from ≈0.8 (near diagonal) to ≈0.0 (top-right corner).
   - **Lower triangle** (below diagonal): Mirror image of upper triangle, with weights decreasing from ≈0.8 (near diagonal) to ≈0.0 (bottom-left corner).

3. **Input-Specific Patterns**:
   - **Input 1**: Strongest diagonal dominance (≈1.0) and sharpest gradient.
   - **Input 2**: Slightly weaker diagonal (≈0.9) and more diffuse gradients.
   - **Input 3**: Moderate diagonal (≈0.7) and broader low-weight regions.

4. **Symmetry**:
   - Upper and lower triangles exhibit near-perfect symmetry, suggesting bidirectional attention patterns.

---

### Key Observations

1. **Self-Attention Focus**: Diagonal red squares confirm that each input primarily attends to itself.

2. **Consistency Across Inputs**: Similar patterns across Inputs 1–3, but Input 1 shows the highest self-attention.

3. **Gradient Smoothness**: Gradual color transitions suggest smooth attention weight distributions.

4. **No Outliers**: No anomalous regions deviate from the diagonal/gradient pattern.

---

### Interpretation

1. **Mechanism Insight**:
   - The diagonal dominance aligns with **self-attention** in transformer models, where tokens attend most strongly to themselves.
   - Symmetric upper/lower triangles imply **bidirectional** attention (e.g., Query-Key and Key-Query interactions).

2. **Input Dependency**:
   - Input 1’s sharper gradients suggest higher sensitivity to positional relationships, while Input 3’s broader low-weight regions indicate more diffuse attention.

3. **Technical Implications**:
   - The heatmaps validate that attention mechanisms prioritize self-comparison over cross-input interactions.
   - The grid structure highlights how mean operations aggregate attention weights across inputs.

4. **Limitations**:
   - No explicit labels for individual tokens or positional indices, limiting granular analysis.
   - Color scale lacks intermediate markers (e.g., 0.4, 0.6), requiring visual estimation.

---

### Spatial Grounding & Verification

- **Legend Position**: Right-aligned color bar with clear 0.0–1.0 scale.

- **Axis Labels**: Rows/columns explicitly labeled with operations and inputs.

- **Color Consistency**: Red squares on diagonals match the legend’s 1.0 value; blue corners match 0.0.

---

### Content Details

- **Heatmap Values** (approximate):
  - Diagonal: 0.7–1.0 (red)
  - Near-diagonal (1 step away): 0.5–0.8 (orange)
  - Far from diagonal: 0.0–0.2 (blue)

- **Grid Structure**: 3x3 matrix with uniform patterns across rows/columns.

---

### Final Notes

The heatmaps provide a clear visualization of attention weight distributions, emphasizing self-attention and input-dependent variations. The absence of textual annotations beyond axis labels and legends necessitates reliance on color gradients for quantitative interpretation.
