## Bar Chart: Indexical 'you' Performance Across Language Models

### Overview

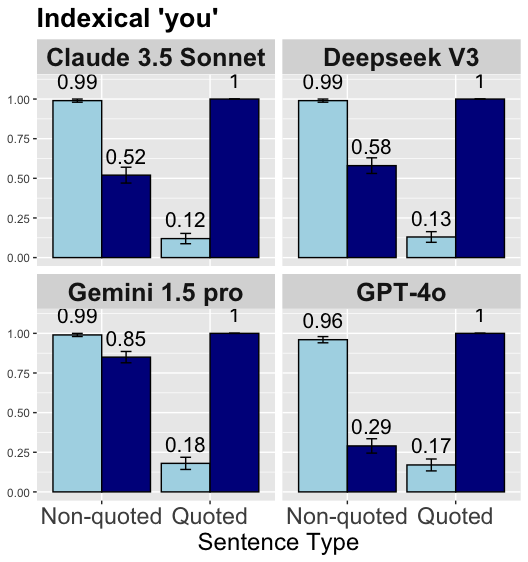

The image is a multi-panel bar chart titled "Indexical 'you'". It compares four large language models (LLMs) on a task involving the indexical pronoun "you". The chart has four subplots, one per model, and each subplot compares two sentence types: "Non-quoted" and "Quoted". For each sentence type, two metrics are shown as a light blue bar and a dark blue bar, with the numerical value annotated above each bar. Error bars appear on the light blue bars.

### Components/Axes

* **Main Title:** "Indexical 'you'"

* **Subplot Titles (Models):**
    * Top-Left: "Claude 3.5 Sonnet"
    * Top-Right: "Deepseek V3"
    * Bottom-Left: "Gemini 1.5 pro"
    * Bottom-Right: "GPT-4o"

* **X-Axis (Common to all subplots):**
    * **Label:** "Sentence Type"
    * **Categories:** "Non-quoted" (left group), "Quoted" (right group)

* **Y-Axis (Common to all subplots):**
    * **Scale:** Linear, from 0.00 to 1.00.
    * **Markers:** 0.00, 0.25, 0.50, 0.75, 1.00.

* **Data Series (Colors):**
    * **Light Blue Bars:** Present for both "Non-quoted" and "Quoted" categories. All have error bars.
    * **Dark Blue Bars:** Present for both "Non-quoted" and "Quoted" categories. No visible error bars.

* **Note:** There is no explicit legend within the image. The two colors represent two different, unlabeled metrics or conditions.

### Detailed Analysis

**Trend Verification:** For the **light blue bars**, the value is consistently high for "Non-quoted" sentences and drops sharply for "Quoted" sentences across all models. For the **dark blue bars**, the value is variable for "Non-quoted" sentences but is consistently at the maximum (1.00) for "Quoted" sentences.

**Data Points by Model:**

1. **Claude 3.5 Sonnet**
    * **Non-quoted:** Light Blue = 0.99, Dark Blue = 0.52
    * **Quoted:** Light Blue = 0.12, Dark Blue = 1.00

2. **Deepseek V3**
    * **Non-quoted:** Light Blue = 0.99, Dark Blue = 0.58
    * **Quoted:** Light Blue = 0.13, Dark Blue = 1.00

3. **Gemini 1.5 pro**
    * **Non-quoted:** Light Blue = 0.99, Dark Blue = 0.85
    * **Quoted:** Light Blue = 0.18, Dark Blue = 1.00

4. **GPT-4o**
    * **Non-quoted:** Light Blue = 0.96, Dark Blue = 0.29
    * **Quoted:** Light Blue = 0.17, Dark Blue = 1.00
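The per-model values listed above can be captured in a small data structure and used to quantify how each bar changes between sentence types. The following is a minimal Python sketch, not part of the original figure: the names `SCORES` and `quoted_shift` and the keys `light` and `dark` are illustrative assumptions, since the chart does not label its two metrics.

```python
# Bar values transcribed from the annotations on the chart.
# "light" and "dark" are placeholder names for the two unlabeled metrics.
SCORES = {
    "Claude 3.5 Sonnet": {
        "non_quoted": {"light": 0.99, "dark": 0.52},
        "quoted": {"light": 0.12, "dark": 1.00},
    },
    "Deepseek V3": {
        "non_quoted": {"light": 0.99, "dark": 0.58},
        "quoted": {"light": 0.13, "dark": 1.00},
    },
    "Gemini 1.5 pro": {
        "non_quoted": {"light": 0.99, "dark": 0.85},
        "quoted": {"light": 0.18, "dark": 1.00},
    },
    "GPT-4o": {
        "non_quoted": {"light": 0.96, "dark": 0.29},
        "quoted": {"light": 0.17, "dark": 1.00},
    },
}


def quoted_shift(scores):
    """Return, per model, the change (quoted minus non-quoted) for each metric."""
    shifts = {}
    for model, by_type in scores.items():
        shifts[model] = {
            metric: round(by_type["quoted"][metric] - by_type["non_quoted"][metric], 2)
            for metric in ("light", "dark")
        }
    return shifts


if __name__ == "__main__":
    for model, shift in quoted_shift(SCORES).items():
        print(f"{model}: light {shift['light']:+.2f}, dark {shift['dark']:+.2f}")
```

A negative shift means the bar is lower for "Quoted" than for "Non-quoted" sentences; a positive shift means it is higher.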

### Key Observations

* **Universal Pattern:** All four models exhibit the same directional trend: a high light-blue score for non-quoted text that plummets for quoted text, and a dark-blue score that rises to a perfect 1.00 for quoted text.

* **Model Variability:** The primary difference between models lies in the **dark blue bar for "Non-quoted" sentences**. Gemini 1.5 pro scores highest (0.85), followed by Deepseek V3 (0.58), Claude 3.5 Sonnet (0.52), and GPT-4o (0.29).

* **Consistency in Light Blue:** The light blue metric is remarkably consistent for "Non-quoted" text (0.96-0.99) and for "Quoted" text (0.12-0.18) across all models.

* **Perfect Scores:** The dark blue metric achieves a value of exactly 1.00 for "Quoted" sentences in every model, suggesting a ceiling effect or a binary success condition for that specific metric in that context.
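These observations can be checked mechanically against the transcribed bar values. The sketch below is illustrative only: the names `NON_QUOTED`, `QUOTED`, and `summarize` are hypothetical, and each tuple holds the (light blue, dark blue) values read from the chart for one model.

```python
# (light blue, dark blue) values transcribed from the chart annotations.
NON_QUOTED = {
    "Claude 3.5 Sonnet": (0.99, 0.52),
    "Deepseek V3": (0.99, 0.58),
    "Gemini 1.5 pro": (0.99, 0.85),
    "GPT-4o": (0.96, 0.29),
}
QUOTED = {
    "Claude 3.5 Sonnet": (0.12, 1.00),
    "Deepseek V3": (0.13, 1.00),
    "Gemini 1.5 pro": (0.18, 1.00),
    "GPT-4o": (0.17, 1.00),
}


def summarize(non_quoted, quoted):
    """Compute the ranges, ceiling check, and ranking described in the observations."""
    light_nq = [light for light, _ in non_quoted.values()]
    light_q = [light for light, _ in quoted.values()]
    return {
        # Spread of the light blue metric within each sentence type.
        "light_non_quoted_range": (min(light_nq), max(light_nq)),
        "light_quoted_range": (min(light_q), max(light_q)),
        # True when every model reaches 1.00 on the dark blue metric for quoted text.
        "dark_quoted_all_max": all(dark == 1.00 for _, dark in quoted.values()),
        # Models ordered from highest to lowest dark blue score on non-quoted text.
        "dark_non_quoted_ranking": sorted(
            non_quoted, key=lambda model: non_quoted[model][1], reverse=True
        ),
    }


if __name__ == "__main__":
    for key, value in summarize(NON_QUOTED, QUOTED).items():
        print(f"{key}: {value}")
```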

### Interpretation

The chart investigates how different LLMs process the indexical pronoun "you" in two distinct linguistic contexts: within direct speech (Quoted) and outside of it (Non-quoted). The two unlabeled metrics (light blue and dark blue) likely represent different aspects of model performance, such as **accuracy of reference resolution** versus **detection of the pronoun's presence**, or **correct interpretation** versus **literal transcription**.

The data suggests a fundamental dichotomy in model behavior:

1. The **light blue metric** is near its ceiling (0.96 to 0.99) when "you" appears in non-quoted text, which is likely indirect or reported speech, and collapses (0.12 to 0.18) when "you" appears within direct quotes. This could reflect difficulty in interpreting or contextualizing the pronoun when it is part of quoted dialogue.

2. The **dark blue metric** shows the inverse pattern. Its score of 1.00 for quoted text in every model suggests that it measures something trivially easy in that context, perhaps simply identifying that a quoted segment exists or that the word "you" is present within it. Its variation for non-quoted text (0.29 to 0.85) is the key differentiator between models: on this metric, Gemini 1.5 pro scores nearly three times as high as GPT-4o.

In essence, the chart shows that all four models follow the same pattern for quoted versus non-quoted "you", which may stem from shared architectural or training factors, while differing markedly on the dark blue metric for non-quoted sentences. This difference could matter for applications involving narrative understanding, dialogue systems, or the analysis of reported speech.