# Technical Document Extraction: RLHF vs. DPO Comparison

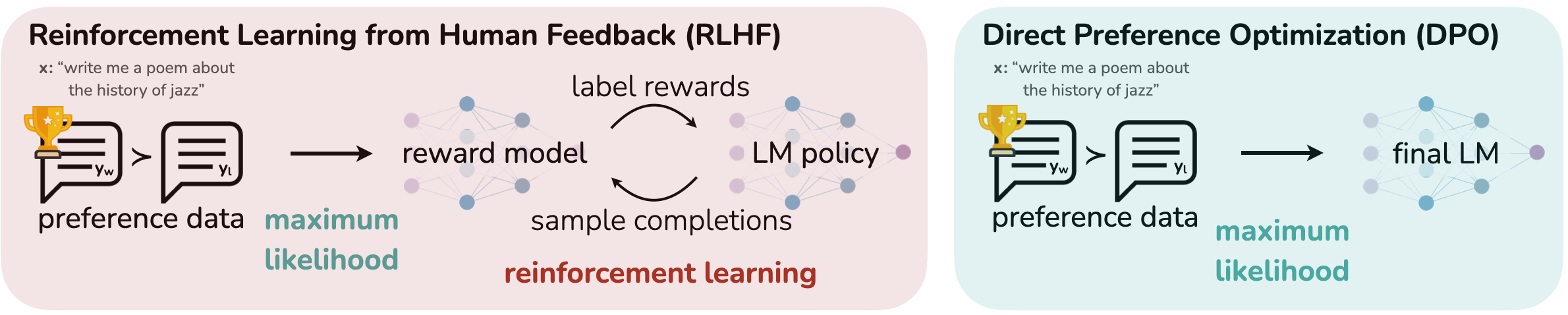

This image provides a comparative technical diagram illustrating two different methodologies for aligning Large Language Models (LLMs) with human preferences: **Reinforcement Learning from Human Feedback (RLHF)** and **Direct Preference Optimization (DPO)**.

---

## 1. Component Isolation

The image is divided into two side-by-side panels (RLHF on the left, DPO on the right), each enclosed in a rounded rectangular container.

### Region A: Reinforcement Learning from Human Feedback (RLHF)

* **Background Color:** Light Pink/Beige

* **Header:** "Reinforcement Learning from Human Feedback (RLHF)" (Bold, Black)

* **Input Example (x):** "write me a poem about the history of jazz"

* **Process Flow:**

1. **Preference Data:** Represented by two speech bubble icons.
   * The left bubble contains a gold trophy icon and the label **$y_w$** (winning/preferred response).
   * The right bubble contains the label **$y_l$** (losing/less preferred response).
   * A "greater than" symbol (**>**) sits between them, indicating preference.

2. **Transition:** A black arrow points from the preference data to the Reward Model. Below this arrow is the teal text **"maximum likelihood"**.

3. **Model Interaction (The Loop):**
   * **Reward Model:** Represented by a neural network diagram (purple/blue nodes).
   * **LM Policy:** Represented by a neural network diagram (purple/blue nodes).
   * **Feedback Loop:** Two curved arrows form a cycle between the Reward Model and the LM Policy.
     * Top arrow (Reward Model $\rightarrow$ LM Policy): labeled **"label rewards"**.
     * Bottom arrow (LM Policy $\rightarrow$ Reward Model): labeled **"sample completions"**.
   * **Footer Label:** Below the loop, the text **"reinforcement learning"** is written in bold, dark red.
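
The diagram does not specify what "maximum likelihood" means for the reward model. A common assumption is a Bradley-Terry preference model, under which the reward model is fit by minimizing $-\log \sigma\big(r(x, y_w) - r(x, y_l)\big)$ over the preference pairs. The minimal sketch below computes that per-pair loss from two scalar rewards; the function names are hypothetical, and a real reward model would produce $r$ from a neural network rather than receive it as an argument.

```python
import math


def log_sigmoid(z: float) -> float:
    """Numerically stable log(sigmoid(z))."""
    if z >= 0:
        return -math.log1p(math.exp(-z))
    # For negative z, use z - log(1 + e^z) to avoid overflow in exp(-z).
    return z - math.log1p(math.exp(z))


def reward_model_loss(r_w: float, r_l: float) -> float:
    """Bradley-Terry negative log-likelihood of the preference y_w > y_l.

    r_w and r_l are hypothetical reward-model scores r(x, y_w) and r(x, y_l)
    for the same prompt x.
    """
    return -log_sigmoid(r_w - r_l)


# Equal rewards give log(2), about 0.693; a larger margin for y_w lowers the loss.
print(reward_model_loss(0.0, 0.0))
print(reward_model_loss(2.0, 0.0))
```

Only the reward difference enters the loss, so the reward model is free to shift all scores by a constant; the RL loop then uses these scores as its reward signal.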

### Region B: Direct Preference Optimization (DPO)

* **Background Color:** Light Cyan/Blue

* **Header:** "Direct Preference Optimization (DPO)" (Bold, Black)

* **Input Example (x):** "write me a poem about the history of jazz"

* **Process Flow:**

1. **Preference Data:** Identical to the RLHF section.
   * Left bubble with trophy: **$y_w$**.
   * Right bubble: **$y_l$**.
   * Symbol: **>**.

2. **Transition:** A black arrow points directly from the preference data to the final model. Below this arrow is the teal text: **"maximum likelihood"**.

3. **Final Output:**
   * **Final LM:** Represented by a single neural network diagram (blue/purple nodes).
   * Unlike RLHF, there is no secondary model or iterative feedback loop shown.
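
In the standard DPO formulation, the single "maximum likelihood" arrow corresponds to a logistic loss on the difference of log-probability ratios between the policy being trained and a frozen reference model (a component this diagram does not draw). The sketch below assumes each argument is the summed log-probability $\log \pi(y \mid x)$ of a whole response; the function name and the default `beta=0.1` are illustrative choices, not values taken from the figure.

```python
import math


def log_sigmoid(z: float) -> float:
    """Numerically stable log(sigmoid(z))."""
    if z >= 0:
        return -math.log1p(math.exp(-z))
    return z - math.log1p(math.exp(z))


def dpo_loss(
    policy_logp_w: float,
    policy_logp_l: float,
    ref_logp_w: float,
    ref_logp_l: float,
    beta: float = 0.1,
) -> float:
    """DPO loss for one preference pair (y_w preferred over y_l).

    Each argument is log pi(y | x) summed over the response tokens, under
    either the trainable policy or the frozen reference model.
    """
    # Implicit rewards are beta * log(pi / pi_ref); only their difference matters.
    chosen = policy_logp_w - ref_logp_w
    rejected = policy_logp_l - ref_logp_l
    return -log_sigmoid(beta * (chosen - rejected))


# Policy identical to the reference: the loss equals log(2).
print(dpo_loss(-10.0, -12.0, -10.0, -12.0))
# Policy raised y_w by 5 nats and lowered y_l by 5 nats relative to the reference.
print(dpo_loss(-5.0, -17.0, -10.0, -12.0))
```

No completions are sampled and no reward model is queried: training reduces to minimizing this quantity over the fixed preference pairs, which is the simplification the DPO panel depicts.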

---

## 2. Comparative Analysis of Logic and Flow

| Feature | RLHF Pipeline | DPO Pipeline |
| :--- | :--- | :--- |
| **Initial Input** | Preference pairs ($y_w > y_l$) | Preference pairs ($y_w > y_l$) |
| **Intermediate Step** | Train a separate **Reward Model** using maximum likelihood. | None (direct optimization). |
| **Optimization Method** | **Reinforcement Learning** loop (sampling completions and labeling rewards). | **Maximum Likelihood** applied directly to the final LM. |
| **Complexity** | High (trains a separate reward model, then runs an RL loop that samples from the policy). | Low (a single training stage on the preference data; a frozen reference model is still used in the loss, but there is no reward model or sampling loop). |
| **End State** | An optimized **LM Policy**. | A **final LM**. |
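
The RLHF column's loop of "sampling completions" and "labeling rewards" can be illustrated with a deliberately tiny example. Everything here is hypothetical: the "policy" is a softmax over three fixed candidate poems, the "reward model" is a lookup table, and plain REINFORCE with a KL penalty toward a uniform reference policy stands in for the PPO-style optimizer commonly used in practical RLHF systems.

```python
import math
import random

# Hypothetical toy setup: three candidate completions for the jazz-poem prompt
# and a fixed table standing in for a trained reward model.
COMPLETIONS = ["poem_a", "poem_b", "poem_c"]
REWARD_MODEL = {"poem_a": 1.0, "poem_b": 0.2, "poem_c": -0.5}


def softmax(logits):
    m = max(logits)
    exps = [math.exp(v - m) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]


def rlhf_toy(steps=2000, lr=0.05, beta=0.1, seed=0):
    """KL-penalized REINFORCE on a softmax policy over COMPLETIONS."""
    rng = random.Random(seed)
    n = len(COMPLETIONS)
    ref_probs = [1.0 / n] * n  # frozen reference policy (uniform in this toy)
    logits = [0.0] * n         # trainable policy parameters
    for _ in range(steps):
        probs = softmax(logits)
        # "sample completions": draw one completion from the current policy.
        i = rng.choices(range(n), weights=probs)[0]
        # "label rewards": reward-model score minus a KL penalty toward the reference.
        reward = REWARD_MODEL[COMPLETIONS[i]] - beta * math.log(probs[i] / ref_probs[i])
        # REINFORCE step: d log softmax_i / d logit_j = 1[j == i] - probs[j].
        for j in range(n):
            logits[j] += lr * reward * ((1.0 if j == i else 0.0) - probs[j])
    return dict(zip(COMPLETIONS, softmax(logits)))


# Probability mass shifts toward the completion the reward model scores highest.
print(rlhf_toy())
```

The KL term keeps the policy from collapsing arbitrarily far from the reference; every update requires fresh samples from the current policy, which is the extra machinery the DPO column avoids.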

---

## 3. Textual Transcription (Precise)

**Header Left:** Reinforcement Learning from Human Feedback (RLHF)

**Header Right:** Direct Preference Optimization (DPO)

**Common Text (Both Sides):**

* x: "write me a poem about the history of jazz"

* $y_w$ (within speech bubble with trophy)

* $y_l$ (within speech bubble)

* preference data

* maximum likelihood

**RLHF Specific Text:**

* reward model

* label rewards

* LM policy

* sample completions

* reinforcement learning

**DPO Specific Text:**

* final LM

---

## 4. Summary of Visual Trends

The diagram visually argues for the simplicity of DPO over RLHF.

* **RLHF** is depicted as a multi-stage, cyclical process involving a separate reward model and an iterative reinforcement learning phase (indicated by the red text and circular arrows).

* **DPO** is depicted as a linear, streamlined process that bypasses the reward model and RL loop entirely, moving from preference data directly to the final language model using maximum likelihood.
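
The claim that DPO can bypass the reward model and RL loop rests on a short derivation the diagram does not show. The block below restates it as it is usually presented for DPO, assuming the KL-regularized RLHF objective with coefficient $\beta$, a reward $r(x, y)$, a frozen reference policy $\pi_{\mathrm{ref}}$, and a Bradley-Terry preference model; $Z(x)$ denotes the partition function of the optimal policy.

$$
\begin{aligned}
&\text{RLHF objective:} && \max_{\pi_\theta}\; \mathbb{E}_{x \sim \mathcal{D},\, y \sim \pi_\theta(\cdot \mid x)}\big[r(x, y)\big] - \beta\, \mathbb{D}_{\mathrm{KL}}\big[\pi_\theta(\cdot \mid x) \,\|\, \pi_{\mathrm{ref}}(\cdot \mid x)\big] \\
&\text{Optimal policy:} && \pi^*(y \mid x) = \frac{1}{Z(x)}\, \pi_{\mathrm{ref}}(y \mid x) \exp\!\Big(\tfrac{1}{\beta}\, r(x, y)\Big) \\
&\text{Implied reward:} && r(x, y) = \beta \log \frac{\pi^*(y \mid x)}{\pi_{\mathrm{ref}}(y \mid x)} + \beta \log Z(x) \\
&\text{Bradley-Terry:} && p(y_w \succ y_l \mid x) = \sigma\big(r(x, y_w) - r(x, y_l)\big) \\
&\text{DPO loss:} && \mathcal{L}_{\mathrm{DPO}}(\pi_\theta; \pi_{\mathrm{ref}}) = -\mathbb{E}_{(x, y_w, y_l) \sim \mathcal{D}}\left[\log \sigma\!\left(\beta \log \frac{\pi_\theta(y_w \mid x)}{\pi_{\mathrm{ref}}(y_w \mid x)} - \beta \log \frac{\pi_\theta(y_l \mid x)}{\pi_{\mathrm{ref}}(y_l \mid x)}\right)\right]
\end{aligned}
$$

Substituting the implied reward into the Bradley-Terry probability makes the $\beta \log Z(x)$ terms cancel, so the loss depends only on policy and reference log-probabilities. Minimizing it is maximum likelihood on the preference data, which is the single arrow in the DPO panel.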