TECHNICAL ASSET FINGERPRINT

af8348b2eb1a19d4d953142f

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

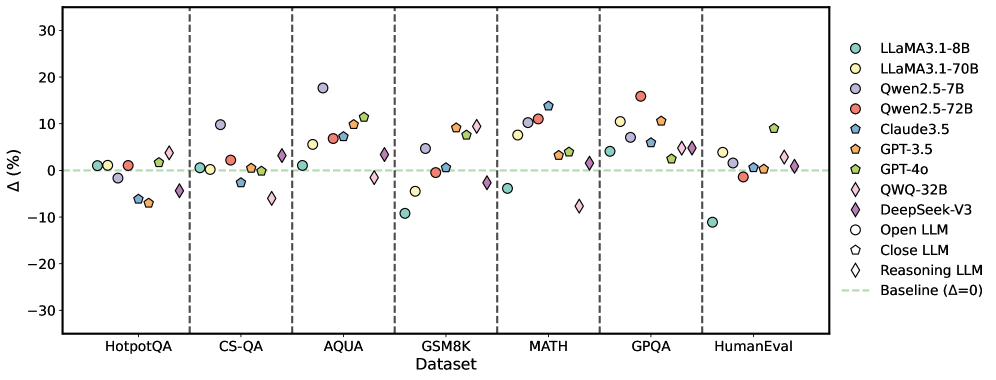

## Scatter Plot: LLM Performance Across Datasets

### Overview

The image is a scatter plot comparing the performance of various Large Language Models (LLMs) across different datasets. The y-axis represents the percentage difference (Δ (%)), and the x-axis represents the datasets. Each LLM is represented by a unique color and marker shape, as indicated in the legend on the right. A baseline at Δ = 0 is shown as a dashed green line.

### Components/Axes

* **X-axis:** "Dataset" with categories: HotpotQA, CS-QA, AQUA, GSM8K, MATH, GPQA, HumanEval.

* **Y-axis:** "Δ (%)" ranging from -30 to 30 with tick marks at -30, -20, -10, 0, 10, 20, and 30.

* **Legend (Right side):**

* Light Green Circle: LLAMA3.1-8B

* Yellow Circle: LLAMA3.1-70B

* Light Purple Circle: Qwen2.5-7B

* Red Circle: Qwen2.5-72B

* Teal Pentagon: Claude3.5

* Orange Pentagon: GPT-3.5

* Light Green Pentagon: GPT-4o

* Light Blue Diamond: QWQ-32B

* Dark Purple Diamond: DeepSeek-V3

* White Circle: Open LLM

* Gray Pentagon: Close LLM

* White Diamond: Reasoning LLM

* Dashed Light Green Line: Baseline (Δ=0)

### Detailed Analysis

Here's a breakdown of the approximate performance of each model on each dataset:

* **HotpotQA:**

* LLAMA3.1-8B (Light Green Circle): ~1%

* LLAMA3.1-70B (Yellow Circle): ~1%

* Qwen2.5-7B (Light Purple Circle): ~1%

* Qwen2.5-72B (Red Circle): ~1%

* Claude3.5 (Teal Pentagon): ~1%

* GPT-3.5 (Orange Pentagon): ~-7%

* GPT-4o (Light Green Pentagon): ~2%

* QWQ-32B (Light Blue Diamond): ~-7%

* DeepSeek-V3 (Dark Purple Diamond): ~-5%

* Open LLM (White Circle): ~1%

* Close LLM (Gray Pentagon): ~-6%

* Reasoning LLM (White Diamond): ~-2%

* **CS-QA:**

* LLAMA3.1-8B (Light Green Circle): ~-2%

* LLAMA3.1-70B (Yellow Circle): ~1%

* Qwen2.5-7B (Light Purple Circle): ~-1%

* Qwen2.5-72B (Red Circle): ~2%

* Claude3.5 (Teal Pentagon): ~-1%

* GPT-3.5 (Orange Pentagon): ~-6%

* GPT-4o (Light Green Pentagon): ~1%

* QWQ-32B (Light Blue Diamond): ~-10%

* DeepSeek-V3 (Dark Purple Diamond): ~-4%

* Open LLM (White Circle): ~-2%

* Close LLM (Gray Pentagon): ~-7%

* Reasoning LLM (White Diamond): ~2%

* **AQUA:**

* LLAMA3.1-8B (Light Green Circle): ~10%

* LLAMA3.1-70B (Yellow Circle): ~17%

* Qwen2.5-7B (Light Purple Circle): ~4%

* Qwen2.5-72B (Red Circle): ~11%

* Claude3.5 (Teal Pentagon): ~12%

* GPT-3.5 (Orange Pentagon): ~8%

* GPT-4o (Light Green Pentagon): ~10%

* QWQ-32B (Light Blue Diamond): ~-1%

* DeepSeek-V3 (Dark Purple Diamond): ~5%

* Open LLM (White Circle): ~18%

* Close LLM (Gray Pentagon): ~13%

* Reasoning LLM (White Diamond): ~2%

* **GSM8K:**

* LLAMA3.1-8B (Light Green Circle): ~-8%

* LLAMA3.1-70B (Yellow Circle): ~1%

* Qwen2.5-7B (Light Purple Circle): ~2%

* Qwen2.5-72B (Red Circle): ~9%

* Claude3.5 (Teal Pentagon): ~1%

* GPT-3.5 (Orange Pentagon): ~1%

* GPT-4o (Light Green Pentagon): ~10%

* QWQ-32B (Light Blue Diamond): ~-1%

* DeepSeek-V3 (Dark Purple Diamond): ~-4%

* Open LLM (White Circle): ~-8%

* Close LLM (Gray Pentagon): ~-2%

* Reasoning LLM (White Diamond): ~2%

* **MATH:**

* LLAMA3.1-8B (Light Green Circle): ~1%

* LLAMA3.1-70B (Yellow Circle): ~11%

* Qwen2.5-7B (Light Purple Circle): ~4%

* Qwen2.5-72B (Red Circle): ~11%

* Claude3.5 (Teal Pentagon): ~11%

* GPT-3.5 (Orange Pentagon): ~7%

* GPT-4o (Light Green Pentagon): ~9%

* QWQ-32B (Light Blue Diamond): ~-1%

* DeepSeek-V3 (Dark Purple Diamond): ~-5%

* Open LLM (White Circle): ~1%

* Close LLM (Gray Pentagon): ~-1%

* Reasoning LLM (White Diamond): ~2%

* **GPQA:**

* LLAMA3.1-8B (Light Green Circle): ~-11%

* LLAMA3.1-70B (Yellow Circle): ~6%

* Qwen2.5-7B (Light Purple Circle): ~4%

* Qwen2.5-72B (Red Circle): ~15%

* Claude3.5 (Teal Pentagon): ~4%

* GPT-3.5 (Orange Pentagon): ~11%

* GPT-4o (Light Green Pentagon): ~10%

* QWQ-32B (Light Blue Diamond): ~-1%

* DeepSeek-V3 (Dark Purple Diamond): ~-5%

* Open LLM (White Circle): ~-1%

* Close LLM (Gray Pentagon): ~-2%

* Reasoning LLM (White Diamond): ~2%

* **HumanEval:**

* LLAMA3.1-8B (Light Green Circle): ~1%

* LLAMA3.1-70B (Yellow Circle): ~2%

* Qwen2.5-7B (Light Purple Circle): ~1%

* Qwen2.5-72B (Red Circle): ~5%

* Claude3.5 (Teal Pentagon): ~-2%

* GPT-3.5 (Orange Pentagon): ~-3%

* GPT-4o (Light Green Pentagon): ~1%

* QWQ-32B (Light Blue Diamond): ~-11%

* DeepSeek-V3 (Dark Purple Diamond): ~2%

* Open LLM (White Circle): ~1%

* Close LLM (Gray Pentagon): ~-2%

* Reasoning LLM (White Diamond): ~2%

### Key Observations

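* The Δ values vary considerably across datasets: on HumanEval most points lie within a few points of the baseline, while on AQUA several models reach double-digit gains (up to ~17-18%).

* Some models are ahead of the others on specific datasets, for example Qwen2.5-72B on GPQA (~15%) and LLAMA3.1-70B on AQUA (~17%).

* There is a noticeable spread in Δ within most datasets, and no single model has the highest Δ on every dataset.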

* The "Reasoning LLM" (White Diamond) consistently hovers around the baseline (Δ=0) across all datasets.

* GPT-3.5 (Orange Pentagon) shows negative Δ values on three of the seven datasets: HotpotQA (~-7%), CS-QA (~-6%), and HumanEval (~-3%).

* QWQ-32B (Light Blue Diamond) shows significant underperformance on CS-QA and HumanEval.

* LLAMA3.1-70B (Yellow Circle) and Qwen2.5-72B (Red Circle) often achieve higher Δ values compared to other models, particularly on AQUA, MATH, and GPQA datasets.
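The GPT-3.5 observation can be checked directly against the approximate values in the Detailed Analysis listing. The following is a minimal sketch: the dictionary and function names are illustrative, and the numbers are the approximate readings copied from the listing, not exact measurements.

```python
# Approximate Δ (%) readings for GPT-3.5, copied from the per-dataset listing.
gpt35_delta = {
    "HotpotQA": -7, "CS-QA": -6, "AQUA": 8, "GSM8K": 1,
    "MATH": 7, "GPQA": 11, "HumanEval": -3,
}


def below_baseline(deltas):
    """Return the datasets whose delta is negative (below the Δ=0 baseline)."""
    return [name for name, value in deltas.items() if value < 0]


negative = below_baseline(gpt35_delta)
print(f"{len(negative)} of {len(gpt35_delta)} datasets below baseline: {negative}")
```

The same helper can be applied to any other model's row to compare how often each one falls below the baseline.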

### Interpretation

The scatter plot compares the Δ of various LLMs across a range of datasets, showing where each model gains or loses relative to the baseline. The data suggests that the choice of LLM should be tailored to the specific task or dataset, as Δ varies considerably. The "Reasoning LLM" marker staying near the baseline might indicate a more general-purpose model, while others are optimized for specific types of questions or data. The negative Δ of GPT-3.5 on HotpotQA, CS-QA, and HumanEval is notable, though the plot alone does not establish whether the cause lies in its architecture or training data. The higher Δ of LLAMA3.1-70B and Qwen2.5-72B on AQUA, MATH, and GPQA indicates their potential suitability for tasks involving those types of data or reasoning. The plot underscores the importance of benchmarking LLMs on diverse datasets to gain a comprehensive understanding of their capabilities.

EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED

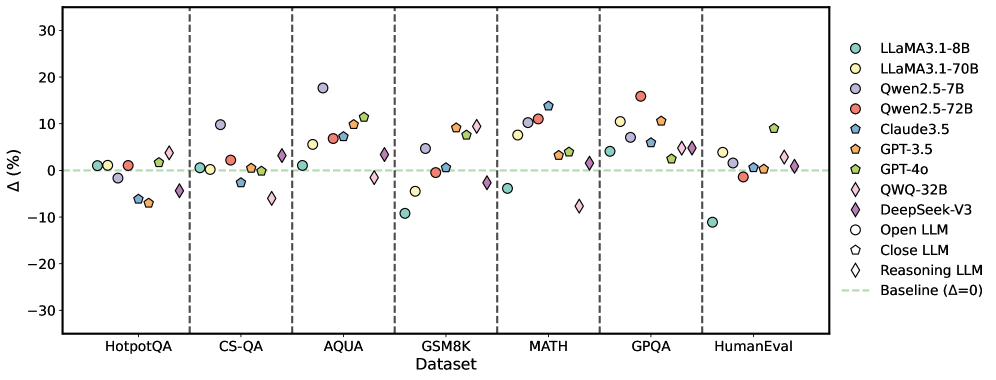

## Scatter Plot: Performance Comparison of Large Language Models

### Overview

This image presents a scatter plot comparing the performance of various Large Language Models (LLMs) across seven different datasets. The y-axis represents the performance difference (Δ) in percentage points relative to a baseline, while the x-axis lists the datasets used for evaluation. Each LLM is represented by a unique marker and color. Vertical dashed lines separate the datasets.

### Components/Axes

* **X-axis:** Dataset - with the following categories: HotpotQA, CS-QA, AQUA, GSM8K, MATH, GPQA, HumanEval.

* **Y-axis:** Δ (%) - Performance difference in percentage points. The scale ranges from approximately -30% to 30%.

* **Legend:** Located in the top-right corner, identifying each LLM with a corresponding color and marker shape. The legend includes:

* LLaMA3.1-8B (Light Blue Circle)

* LLaMA3.1-70B (Light Orange Circle)

* Qwen2.5-7B (Light Grey Circle)

* Qwen2.5-72B (Red Circle)

* Claude3.5 (Dark Blue Triangle)

* GPT-3.5 (Dark Orange Triangle)

* GPT-4o (Dark Purple Diamond)

* QWQ-32B (Pink Diamond)

* DeepSeek-V3 (Purple Hexagon)

* Open LLM (Grey Hexagon)

* Close LLM (Light Green Hexagon)

* Reasoning LLM (Black Diamond)

* Baseline (Δ=0) - Horizontal dashed line.

### Detailed Analysis

The plot shows the performance variation of each LLM across the datasets. The baseline is represented by a horizontal dashed line at Δ=0.

* **HotpotQA:** Most models cluster around the baseline (0%), with some variation. LLaMA3.1-8B shows a slight negative difference (approximately -2%), while Qwen2.5-72B shows a slight positive difference (approximately +2%).

* **CS-QA:** Performance differences are more pronounced. GPT-4o shows the highest positive difference (approximately +10%), while Qwen2.5-7B shows a negative difference (approximately -5%).

* **AQUA:** GPT-4o exhibits a significant positive difference (approximately +20%), while Qwen2.5-7B shows a negative difference (approximately -10%).

* **GSM8K:** GPT-4o continues to show a strong positive difference (approximately +15%), while Qwen2.5-7B remains negative (approximately -10%).

* **MATH:** GPT-4o shows the highest positive difference (approximately +25%), while Qwen2.5-7B shows a negative difference (approximately -15%).

* **GPQA:** Performance is more varied. GPT-4o shows a positive difference (approximately +10%), while DeepSeek-V3 shows a negative difference (approximately -10%).

* **HumanEval:** Models are relatively close to the baseline. GPT-4o shows a slight positive difference (approximately +5%), while Qwen2.5-7B shows a slight negative difference (approximately -2%).

**Specific Data Points (Approximate):**

* **GPT-4o:** HotpotQA (~0%), CS-QA (+10%), AQUA (+20%), GSM8K (+15%), MATH (+25%), GPQA (+10%), HumanEval (+5%).

* **Qwen2.5-7B:** HotpotQA (-2%), CS-QA (-5%), AQUA (-10%), GSM8K (-10%), MATH (-15%), GPQA (-5%), HumanEval (-2%).

* **LLaMA3.1-70B:** Generally shows positive differences, though smaller than those of GPT-4o.
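To compare the two models with fully listed data points, a short summary over the seven datasets may help. This is only a sketch: the names `approx_delta` and `summarize` are illustrative, and the inputs are the approximate readings listed under Specific Data Points.

```python
# Dataset order matches the x-axis of the plot.
DATASETS = ["HotpotQA", "CS-QA", "AQUA", "GSM8K", "MATH", "GPQA", "HumanEval"]

# Approximate Δ (%) readings from the "Specific Data Points" list.
approx_delta = {
    "GPT-4o": [0, 10, 20, 15, 25, 10, 5],
    "Qwen2.5-7B": [-2, -5, -10, -10, -15, -5, -2],
}


def summarize(values):
    """Mean (rounded to one decimal), minimum and maximum of a list of deltas."""
    return {
        "mean": round(sum(values) / len(values), 1),
        "min": min(values),
        "max": max(values),
    }


summary = {model: summarize(values) for model, values in approx_delta.items()}
for model, stats in summary.items():
    print(model, stats)
```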

### Key Observations

* GPT-4o consistently outperforms other models across most datasets, particularly on GSM8K, MATH, and AQUA.

* Qwen2.5-7B consistently underperforms compared to the baseline across most datasets.

* The performance differences between models are most significant on datasets like MATH and AQUA, suggesting these datasets are more sensitive to model capabilities.

* The spread of data points within each dataset indicates varying performance levels among the LLMs.

### Interpretation

The data suggests that GPT-4o is a significantly more capable LLM than the others tested, especially in tasks requiring reasoning and mathematical abilities (as evidenced by its performance on GSM8K and MATH). The consistently negative Δ of Qwen2.5-7B indicates it may be less effective for these types of tasks. The varying performance across datasets highlights the importance of evaluating LLMs on a diverse range of benchmarks to get a comprehensive understanding of their capabilities. The clustering of points around the baseline in some datasets (e.g., HotpotQA) suggests that these tasks are relatively easy for most of the tested models. The large spread in performance on datasets like MATH suggests a greater degree of differentiation in model capabilities. The baseline (Δ=0) serves as a crucial reference point, allowing for a clear assessment of whether a model is performing better or worse than a standard level.

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Scatter Plot: LLM Performance Delta Across Datasets

### Overview

The image is a scatter plot comparing the performance change (Δ, in percentage) of various Large Language Models (LLMs) across seven different benchmark datasets. Each data point represents a specific model's performance delta relative to a baseline (Δ=0). The models are categorized as "Open LLM," "Close LLM," or "Reasoning LLM" using distinct marker shapes.

### Components/Axes

* **Chart Type:** Scatter plot with categorical x-axis.

* **X-Axis (Horizontal):** Labeled "Dataset." It lists seven benchmark categories, separated by vertical dashed lines. From left to right:

1. HotpotQA

2. CS-QA

3. AQUA

4. GSM8K

5. MATH

6. GPQA

7. HumanEval

* **Y-Axis (Vertical):** Labeled "Δ (%)". It represents the percentage change in performance. The scale runs from -30 to 30, with major tick marks at intervals of 10 (-30, -20, -10, 0, 10, 20, 30).

* **Baseline:** A horizontal, light green dashed line at y=0, labeled "Baseline (Δ=0)" in the legend.

* **Legend (Right Side):** Positioned to the right of the plot area. It maps model names to specific marker colors and shapes, and defines the marker categories.

* **Models & Colors:**

* LLaMA3.1-8B: Teal circle

* LLaMA3.1-70B: Light yellow circle

* Qwen2.5-7B: Light purple circle

* Qwen2.5-72B: Salmon/red circle

* Claude3.5: Blue pentagon

* GPT-3.5: Orange pentagon

* GPT-4o: Green pentagon

* QWQ-32B: Purple diamond

* DeepSeek-V3: Pink diamond

* **Marker Categories:**

* Open LLM: Circle (○)

* Close LLM: Pentagon (⬠)

* Reasoning LLM: Diamond (◇)

### Detailed Analysis

Data points are grouped vertically above each dataset label. Values are approximate, estimated from the y-axis position.

**1. HotpotQA**

* **Above Baseline (Δ > 0):** LLaMA3.1-8B (~1%), LLaMA3.1-70B (~1%), Qwen2.5-72B (~1%), GPT-4o (~2%), QWQ-32B (~4%).

* **Below Baseline (Δ < 0):** Qwen2.5-7B (~-2%), Claude3.5 (~-7%), GPT-3.5 (~-7%), DeepSeek-V3 (~-4%).

**2. CS-QA**

* **Above Baseline:** Qwen2.5-7B (~10%), Qwen2.5-72B (~2%), QWQ-32B (~3%).

* **At Baseline (Δ ≈ 0):** GPT-4o (~0%), LLaMA3.1-8B (~0%).

* **Below Baseline:** Claude3.5 (~-3%), GPT-3.5 (~-1%), LLaMA3.1-70B (~-1%), DeepSeek-V3 (~-6%).

**3. AQUA**

* **Above Baseline:** LLaMA3.1-8B (~1%), LLaMA3.1-70B (~5%), Qwen2.5-7B (~18%), Qwen2.5-72B (~7%), Claude3.5 (~9%), GPT-3.5 (~10%), GPT-4o (~12%), DeepSeek-V3 (~3%).

* **Below Baseline:** QWQ-32B (~-1%).

**4. GSM8K**

* **Above Baseline:** Qwen2.5-7B (~5%), Claude3.5 (~9%), GPT-3.5 (~8%), GPT-4o (~10%).

* **At Baseline (Δ ≈ 0):** Qwen2.5-72B (~0%).

* **Below Baseline:** LLaMA3.1-8B (~-9%), LLaMA3.1-70B (~-4%), QWQ-32B (~-3%), DeepSeek-V3 (~-1%).

**5. MATH**

* **Above Baseline:** LLaMA3.1-70B (~8%), Qwen2.5-7B (~11%), Qwen2.5-72B (~14%), Claude3.5 (~4%), GPT-3.5 (~4%), GPT-4o (~4%).

* **Below Baseline:** LLaMA3.1-8B (~-3%), QWQ-32B (~-8%), DeepSeek-V3 (~-1%).

**6. GPQA**

* **Above Baseline:** LLaMA3.1-8B (~4%), LLaMA3.1-70B (~11%), Qwen2.5-7B (~16%), Qwen2.5-72B (~6%), Claude3.5 (~5%), GPT-3.5 (~11%), GPT-4o (~3%), QWQ-32B (~5%), DeepSeek-V3 (~5%).

* **Below Baseline:** None.

**7. HumanEval**

* **Above Baseline:** LLaMA3.1-70B (~4%), GPT-4o (~9%), QWQ-32B (~3%), DeepSeek-V3 (~2%).

* **At Baseline (Δ ≈ 0):** Claude3.5 (~0%).

* **Below Baseline:** LLaMA3.1-8B (~-11%), Qwen2.5-7B (~-1%), Qwen2.5-72B (~-1%), GPT-3.5 (~-2%).
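The above/below grouping used throughout this section amounts to comparing each Δ with zero. A minimal sketch follows, using the approximate CS-QA readings from this section as input; the function name `split_by_baseline` and the separate "at" bucket for readings of ~0% are assumptions made for illustration.

```python
# Approximate CS-QA readings (Δ in %) as estimated in this description.
cs_qa = {
    "Qwen2.5-7B": 10, "Qwen2.5-72B": 2, "Claude3.5": -3,
    "GPT-3.5": -1, "GPT-4o": 0, "QWQ-32B": 3,
    "LLaMA3.1-8B": 0, "LLaMA3.1-70B": -1, "DeepSeek-V3": -6,
}


def split_by_baseline(deltas):
    """Group model names by the sign of their delta relative to Δ=0."""
    groups = {"above": [], "at": [], "below": []}
    for model, value in deltas.items():
        if value > 0:
            groups["above"].append(model)
        elif value < 0:
            groups["below"].append(model)
        else:
            groups["at"].append(model)
    return groups


groups = split_by_baseline(cs_qa)
for label, models in groups.items():
    print(f"{label}: {', '.join(models)}")
```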

### Key Observations

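1. **Largest Gains on AQUA & GPQA:** The AQUA and GPQA datasets show the highest individual deltas (~18% and ~16%, both for Qwen2.5-7B), with several models achieving gains above +10%. By the values listed above, the widest gap between the best and worst model is on MATH (~14% vs. ~-8%) and HumanEval (~9% vs. ~-11%).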

2. **Consistently Strong on MATH:** Most models show a positive performance delta on the MATH dataset, with Qwen2.5-72B showing the highest gain (~+14%).

3. **LLaMA3.1-8B Struggles:** The LLaMA3.1-8B model (teal circle) frequently appears below the baseline, most notably on GSM8K (~-9%) and HumanEval (~-11%).

4. **Qwen2.5-7B's Peak:** The Qwen2.5-7B model (light purple circle) achieves the single highest observed delta on the chart, at approximately +18% on the AQUA dataset.

5. **Reasoning LLMs (Diamonds):** The "Reasoning LLM" category (QWQ-32B, DeepSeek-V3) shows mixed results. QWQ-32B has a notable positive spike on HotpotQA, while DeepSeek-V3 is often near or slightly below the baseline, except for a positive showing on GPQA.

6. **Close LLMs (Pentagons):** The "Close LLM" models (Claude3.5, GPT-3.5, GPT-4o) generally cluster together within each dataset, often showing positive deltas, particularly on AQUA and MATH.
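Statements about spread can be checked against the approximate readings in the Detailed Analysis by computing, per dataset, the gap between the highest and lowest Δ. The sketch below does that; the table is transcribed from this description's estimates, and the names `readings` and `spread` are illustrative.

```python
# Column order: LLaMA3.1-8B, LLaMA3.1-70B, Qwen2.5-7B, Qwen2.5-72B,
# Claude3.5, GPT-3.5, GPT-4o, QWQ-32B, DeepSeek-V3 (approximate Δ in %).
readings = {
    "HotpotQA":  [1, 1, -2, 1, -7, -7, 2, 4, -4],
    "CS-QA":     [0, -1, 10, 2, -3, -1, 0, 3, -6],
    "AQUA":      [1, 5, 18, 7, 9, 10, 12, -1, 3],
    "GSM8K":     [-9, -4, 5, 0, 9, 8, 10, -3, -1],
    "MATH":      [-3, 8, 11, 14, 4, 4, 4, -8, -1],
    "GPQA":      [4, 11, 16, 6, 5, 11, 3, 5, 5],
    "HumanEval": [-11, 4, -1, -1, 0, -2, 9, 3, 2],
}

# Spread = highest delta minus lowest delta within one dataset.
spread = {name: max(values) - min(values) for name, values in readings.items()}

# Rank datasets from widest to narrowest spread.
ranked = sorted(spread.items(), key=lambda item: item[1], reverse=True)
for name, gap in ranked:
    print(f"{name}: {gap} points")
```

Because the inputs are visual estimates, differences of a point or two between datasets should not be over-interpreted.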

### Interpretation

This chart visualizes a comparative benchmark study, likely measuring the improvement (or degradation) of various LLMs when using a specific technique, prompting method, or model variant compared to a standard baseline. The "Δ (%)" suggests a relative performance metric.

* **Dataset Sensitivity:** Model performance is highly dataset-dependent. A model excelling in one domain (e.g., Qwen2.5-7B on AQUA) may not lead in another (e.g., HumanEval). This underscores the importance of multi-faceted evaluation.

* **Model Size vs. Performance:** Larger models (e.g., LLaMA3.1-70B, Qwen2.5-72B) do not universally outperform smaller ones (e.g., Qwen2.5-7B) across all tasks, indicating that architecture, training data, or task alignment play critical roles.

* **Specialization:** The strong performance of several models on the MATH dataset suggests the evaluated technique or the models themselves are particularly effective for mathematical reasoning tasks. Conversely, the mixed results on code (HumanEval) and complex reasoning (HotpotQA, GPQA) indicate these remain challenging areas.

* **The "Reasoning LLM" Category:** The inclusion of a specific "Reasoning LLM" category implies these models (QWQ-32B, DeepSeek-V3) may have been designed or fine-tuned with a focus on logical inference. Their variable performance suggests that "reasoning" capability is not monolithic and manifests differently across benchmark types.
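The axis label "Δ (%)" admits two common readings, and the image description does not say which one the plot uses. The sketch below contrasts them under assumed, purely hypothetical scores; the function names and the example numbers are not taken from the source.

```python
def absolute_delta(baseline_score, new_score):
    """Difference in percentage points: new score minus baseline score."""
    return new_score - baseline_score


def relative_delta(baseline_score, new_score):
    """Change expressed as a percentage of the baseline score."""
    if baseline_score == 0:
        raise ValueError("relative change is undefined for a zero baseline")
    return (new_score - baseline_score) / baseline_score * 100.0


# Hypothetical accuracies (in %), chosen only to illustrate the difference.
baseline, with_technique = 40.0, 50.0
print(absolute_delta(baseline, with_technique))  # 10.0 percentage points
print(relative_delta(baseline, with_technique))  # 25.0 percent of the baseline
```

The same pair of scores yields different Δ values under the two definitions, so comparisons across papers need the definition stated.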

**Language Note:** All text in the image is in English.

EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Scatter Plot: Model Performance Comparison Across Datasets

### Overview

The image is a scatter plot comparing the performance change (Δ%) of various large language models (LLMs) across seven benchmark datasets. The plot uses color-coded markers to represent different models, with a baseline (Δ=0) indicated by a dashed green line.

### Components/Axes

- **X-axis (Dataset)**: Categorical axis with seven benchmark datasets:

- HotpotQA

- CS-QA

- AQUA

- GSM8K

- MATH

- GPQA

- HumanEval

Vertical dashed lines separate datasets.

- **Y-axis (Δ%)**: Numerical axis ranging from -30% to 30%, representing percentage change in performance.

- **Legend**: Located in the top-right corner, mapping colors/shapes to models:

- **Teal circles**: LLaMA3.1-8B

- **Yellow circles**: LLaMA3.1-70B

- **Purple circles**: Qwen2.5-7B

- **Red circles**: Qwen2.5-72B

- **Blue pentagons**: Claude3.5

- **Orange pentagons**: GPT-3.5

- **Green pentagons**: GPT-4o

- **Pink diamonds**: QWQ-32B

- **Purple diamonds**: DeepSeek-V3

- **Open circles**: Open LLM

- **Closed pentagons**: Close LLM

- **Diamond shapes**: Reasoning LLM

- **Dashed green line**: Baseline (Δ=0)

### Detailed Analysis

1. **Dataset Performance**:

- **HotpotQA**:

- LLaMA3.1-8B (teal) ≈ +2%

- GPT-3.5 (orange) ≈ -8%

- DeepSeek-V3 (purple diamond) ≈ -5%

- **CS-QA**:

- Qwen2.5-7B (purple) ≈ +12%

- GPT-4o (green pentagon) ≈ +3%

- **AQUA**:

- LLaMA3.1-70B (yellow) ≈ +18%

- Claude3.5 (blue pentagon) ≈ -2%

- **GSM8K**:

- Qwen2.5-72B (red) ≈ +15%

- GPT-4o (green pentagon) ≈ -10%

- **MATH**:

- QWQ-32B (pink diamond) ≈ +8%

- DeepSeek-V3 (purple diamond) ≈ -3%

- **GPQA**:

- LLaMA3.1-8B (teal) ≈ -5%

- GPT-3.5 (orange) ≈ +10%

- **HumanEval**:

- LLaMA3.1-8B (teal) ≈ -10%

- GPT-4o (green pentagon) ≈ +9%

2. **Trends**:

- **Positive Δ**: LLaMA3.1-70B (yellow) and GPT-4o (green pentagon) show consistent gains in multiple datasets (AQUA, GPQA, HumanEval).

- **Negative Δ**: LLaMA3.1-8B (teal) underperforms in HumanEval (-10%) and GPQA (-5%).

- **Mixed Results**: Reasoning LLMs (diamonds) show variability, with QWQ-32B (pink) performing well in MATH (+8%) but poorly in CS-QA (-12%).

### Key Observations

- **Outliers**:

- LLaMA3.1-70B (yellow) achieves the highest Δ (+18%) in AQUA.

- LLaMA3.1-8B (teal) has one of the most negative Δ values (-10%) in HumanEval, matched by GPT-4o on GSM8K (-10%) and exceeded only by QWQ-32B on CS-QA (-12%).

- **Baseline Proximity**: Many points lie within roughly ±10% of Δ=0, indicating modest performance changes on most datasets.

- **Model-Specific Patterns**:

- GPT-4o (green pentagon) shows strong gains in GPQA (+10%) and HumanEval (+9%).

- Qwen2.5-72B (red) performs well in GSM8K (+15%) but poorly in CS-QA (-7%).
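The outlier claims can be cross-checked by scanning every reading quoted in this description for its extremes. This is a minimal sketch: the list collects the approximate values mentioned under Detailed Analysis and Key Observations, and the variable names and the 20-point threshold are illustrative choices.

```python
# (model, dataset, approximate Δ in %) for every reading quoted in this description.
readings = [
    ("LLaMA3.1-8B", "HotpotQA", 2), ("GPT-3.5", "HotpotQA", -8),
    ("DeepSeek-V3", "HotpotQA", -5), ("Qwen2.5-7B", "CS-QA", 12),
    ("GPT-4o", "CS-QA", 3), ("QWQ-32B", "CS-QA", -12),
    ("Qwen2.5-72B", "CS-QA", -7), ("LLaMA3.1-70B", "AQUA", 18),
    ("Claude3.5", "AQUA", -2), ("Qwen2.5-72B", "GSM8K", 15),
    ("GPT-4o", "GSM8K", -10), ("QWQ-32B", "MATH", 8),
    ("DeepSeek-V3", "MATH", -3), ("LLaMA3.1-8B", "GPQA", -5),
    ("GPT-3.5", "GPQA", 10), ("GPT-4o", "GPQA", 10),
    ("LLaMA3.1-8B", "HumanEval", -10), ("GPT-4o", "HumanEval", 9),
]

highest = max(readings, key=lambda r: r[2])
lowest = min(readings, key=lambda r: r[2])
# Readings whose magnitude exceeds 20 points in either direction.
extreme = [r for r in readings if abs(r[2]) > 20]

print("highest:", highest)
print("lowest:", lowest)
print("readings with |Δ| > 20:", extreme)
```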

### Interpretation

The data suggests that model performance is highly dataset-dependent. Larger models like LLaMA3.1-70B and GPT-4o demonstrate superior gains in reasoning-heavy tasks (AQUA, GPQA), while smaller models (LLaMA3.1-8B) struggle in code evaluation (HumanEval). The variability in Reasoning LLMs (diamonds) implies that reasoning capabilities may not generalize uniformly across tasks. Notably, the absence of extreme outliers (e.g., |Δ| > 20%) suggests most models maintain relatively stable performance, with incremental improvements or declines. This highlights the importance of dataset-specific optimization for LLMs.
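Since the four descriptions on this page estimate values from the same image, their disagreement on a given point can be quantified. The sketch below does so for GPT-4o on MATH and HumanEval, using the approximate readings each description reports (the nemotron-free description gives no MATH value for GPT-4o); the names `estimates` and `disagreement` are illustrative.

```python
# Approximate GPT-4o Δ (%) readings, keyed by the EXPERT label of each description.
estimates = {
    "MATH": {
        "gemini-2.0-flash": 9,
        "gemma-3-27b-it-free": 25,
        "healer-alpha-free": 4,
    },
    "HumanEval": {
        "gemini-2.0-flash": 1,
        "gemma-3-27b-it-free": 5,
        "healer-alpha-free": 9,
        "nemotron-free": 9,
    },
}


def disagreement(by_describer):
    """Gap between the highest and lowest estimate for one data point."""
    values = list(by_describer.values())
    return max(values) - min(values)


gaps = {dataset: disagreement(values) for dataset, values in estimates.items()}
for dataset, gap in gaps.items():
    print(f"GPT-4o on {dataset}: estimates differ by {gap} points")
```

A large gap indicates that the individual readings for that point should be treated as rough estimates rather than measurements.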
